The following describes the semantics of operations defined in the XlaBuilder interface. Typically, these operations map one-to-one to operations defined in the RPC interface in xla_data.proto.

A note on nomenclature: the generalized data type XLA deals with is an N-dimensional array holding elements of some uniform type (such as 32-bit float). Throughout the documentation, array is used to denote an arbitrary-dimensional array. For convenience, special cases have more specific and familiar names; for example, a vector is a 1-dimensional array and a matrix is a 2-dimensional array.

AfterAll

See also XlaBuilder::AfterAll.

AfterAll takes a variadic number of tokens and produces a single token. Tokens are primitive types that can be threaded between side-effecting operations to enforce ordering. AfterAll can be used as a join of tokens, to order an operation after a set of operations.

AfterAll(operands)

| Arguments | Type | Semantics |
|---|---|---|
| operands | XlaOp | variadic number of tokens |

AllGather

See also XlaBuilder::AllGather.

Performs concatenation across replicas.

AllGather(operand, all_gather_dim, shard_count, replica_group_ids, channel_id)

| Arguments | Type | Semantics |
|---|---|---|
| operand | XlaOp | Array to concatenate across replicas |
| all_gather_dim | int64 | Concatenation dimension |
| replica_groups | vector of vectors of int64 | Groups between which the concatenation is performed |
| channel_id | optional int64 | Optional channel ID for cross-module communication |

- replica_groups is a list of replica groups between which the concatenation is performed (the replica ID of the current replica can be retrieved using ReplicaId). The order of replicas in each group determines the order in which their inputs appear in the result. replica_groups must either be empty (in which case all replicas belong to a single group, ordered from 0 to N - 1), or contain the same number of elements as the number of replicas. For example, replica_groups = {0, 2}, {1, 3} performs concatenation between replicas 0 and 2, and between replicas 1 and 3.
- shard_count is the size of each replica group. It is needed when replica_groups is empty.
- channel_id is used for cross-module communication: only all-gather operations with the same channel_id can communicate with each other.

The output shape is the input shape with all_gather_dim made shard_count times larger. For example, if there are two replicas and the operand has the values [1.0, 2.5] and [3.0, 5.25] on the two replicas respectively, the output of this op with all_gather_dim 0 will be [1.0, 2.5, 3.0, 5.25] on both replicas.
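To make the grouping and ordering rules concrete, here is a minimal host-side C++ sketch that simulates AllGather for 1-D operands with all_gather_dim = 0. It is not the XLA API: the function name SimulateAllGather and the representation of replicas as a vector of per-replica arrays are assumptions for illustration.

```cpp
#include <cassert>
#include <vector>

// Hypothetical host-side model of AllGather (not an XLA API). inputs[r] is the
// 1-D operand on replica r, and all_gather_dim is 0. An empty replica_groups
// means a single group {0, 1, ..., N-1}.
std::vector<std::vector<float>> SimulateAllGather(
    const std::vector<std::vector<float>>& inputs,
    std::vector<std::vector<int>> replica_groups) {
  if (replica_groups.empty()) {
    std::vector<int> all;
    for (int r = 0; r < static_cast<int>(inputs.size()); ++r) all.push_back(r);
    replica_groups.push_back(all);
  }
  std::vector<std::vector<float>> outputs(inputs.size());
  for (const auto& group : replica_groups) {
    // Concatenate the members' inputs along dimension 0, in group order.
    std::vector<float> gathered;
    for (int r : group) {
      assert(inputs[r].size() == inputs[group.front()].size());  // same shape
      gathered.insert(gathered.end(), inputs[r].begin(), inputs[r].end());
    }
    // Every member receives the same array; its dimension 0 is
    // group.size() (the shard_count) times larger than the input's.
    for (int r : group) outputs[r] = gathered;
  }
  return outputs;
}
```

With inputs {1.0, 2.5} and {3.0, 5.25} and empty groups, both replicas receive {1.0, 2.5, 3.0, 5.25}, matching the example above.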

AllReduce

See also XlaBuilder::AllReduce.

Performs a custom computation across replicas.

AllReduce(operand, computation, replica_group_ids, channel_id)

| Arguments | Type | Semantics |
|---|---|---|
| operand | XlaOp | Array or a non-empty tuple of arrays to reduce across replicas |
| computation | XlaComputation | Reduction computation |
| replica_groups | vector of vectors of int64 | Groups between which the reductions are performed |
| channel_id | optional int64 | Optional channel ID for cross-module communication |

- When operand is a tuple of arrays, the all-reduce is performed on each element of the tuple.
- replica_groups is a list of replica groups between which the reduction is performed (the replica ID of the current replica can be retrieved using ReplicaId). replica_groups must either be empty (in which case all replicas belong to a single group), or contain the same number of elements as the number of replicas. For example, replica_groups = {0, 2}, {1, 3} performs reduction between replicas 0 and 2, and between replicas 1 and 3.
- channel_id is used for cross-module communication: only all-reduce operations with the same channel_id can communicate with each other.

The output shape is the same as the input shape. For example, if there are two replicas and the operand has the values [1.0, 2.5] and [3.0, 5.25] on the two replicas respectively, then the output of this op with a summation computation will be [4.0, 7.75] on both replicas. If the input is a tuple, the output is a tuple as well.
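The summation example can be reproduced with a small host-side model. This sketch assumes 1-D float operands, hard-codes the reduction computation as addition, and uses the hypothetical name SimulateAllReduceSum; it only models the data flow, not XLA's implementation.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical model of AllReduce with a summation computation. inputs[r] is
// replica r's 1-D operand; all operands must share a shape. An empty
// replica_groups means all replicas form a single group.
std::vector<std::vector<float>> SimulateAllReduceSum(
    const std::vector<std::vector<float>>& inputs,
    std::vector<std::vector<int>> replica_groups) {
  if (replica_groups.empty()) {
    std::vector<int> all;
    for (int r = 0; r < static_cast<int>(inputs.size()); ++r) all.push_back(r);
    replica_groups.push_back(all);
  }
  std::vector<std::vector<float>> outputs(inputs.size());
  for (const auto& group : replica_groups) {
    // Element-wise sum over the group; the result has the input's shape.
    std::vector<float> acc(inputs[group.front()].size(), 0.0f);
    for (int r : group) {
      assert(inputs[r].size() == acc.size());
      for (std::size_t i = 0; i < acc.size(); ++i) acc[i] += inputs[r][i];
    }
    for (int r : group) outputs[r] = acc;  // every member gets the result
  }
  return outputs;
}
```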

Computing the result of AllReduce requires one input from each replica, so if one replica executes an AllReduce node more times than another, the former replica will wait forever. Since the replicas all run the same program, there are few ways for that to happen, but it is possible when a while loop's condition depends on data from infeed and the infed data causes the while loop to iterate more times on one replica than on another.

AllToAll

See also XlaBuilder::AllToAll.

AllToAll is a collective operation that sends data from all cores to all cores. It has two phases:

- The scatter phase. On each core, the operand is split into split_count blocks along split_dimension, and the blocks are scattered to all cores; for example, the i-th block is sent to the i-th core.
- The gather phase. Each core concatenates the received blocks along concat_dimension.

The participating cores can be configured by:

- replica_groups: each ReplicaGroup contains a list of replica IDs participating in the computation (the replica ID of the current replica can be retrieved using ReplicaId). AllToAll is applied within subgroups in the specified order. For example, replica_groups = { {1,2,3}, {4,5,0} } means that an AllToAll is applied within replicas {1, 2, 3}, and in the gather phase the received blocks are concatenated in the same order, 1, 2, 3. Then another AllToAll is applied within replicas 4, 5, 0, and the concatenation order is also 4, 5, 0. If replica_groups is empty, all replicas belong to one group, in the concatenation order of their appearance.

Prerequisites:

- The dimension size of the operand on split_dimension is divisible by split_count.
- The operand's shape is not a tuple.

AllToAll(operand, split_dimension, concat_dimension, split_count, replica_groups)

| Arguments | Type | Semantics |
|---|---|---|
| operand | XlaOp | n-dimensional input array |
| split_dimension | int64 | A value in the interval [0, n) that names the dimension along which the operand is split |
| concat_dimension | int64 | A value in the interval [0, n) that names the dimension along which the split blocks are concatenated |
| split_count | int64 | The number of cores that participate in this operation. If replica_groups is empty, this should be the number of replicas; otherwise, it should equal the number of replicas in each group. |
| replica_groups | ReplicaGroup vector | Each group contains a list of replica IDs. |

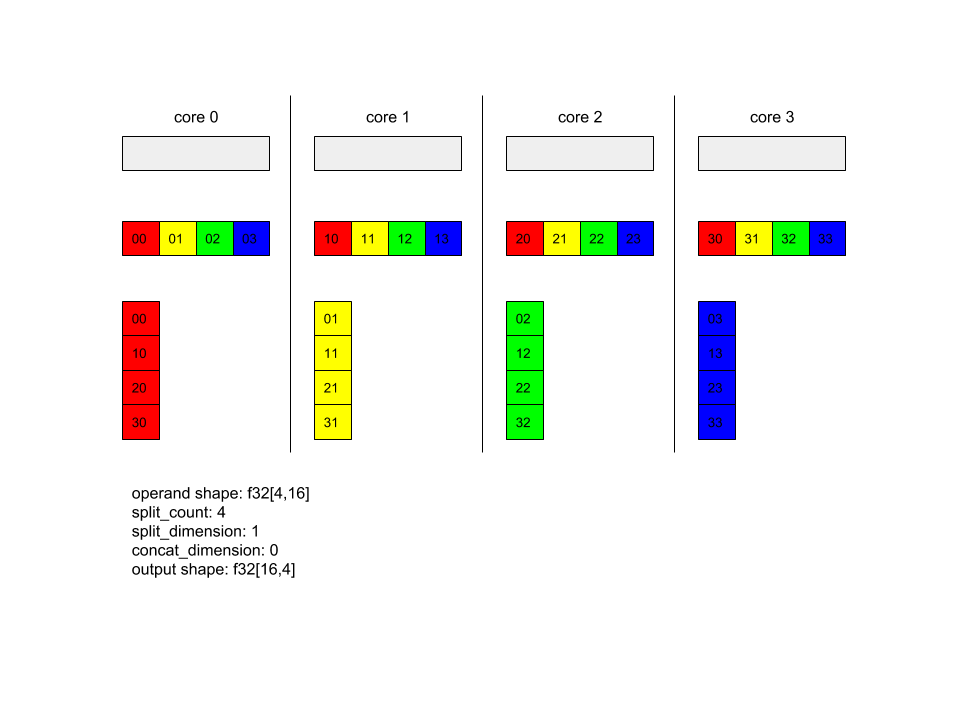

The following shows an example of AllToAll.

```cpp
XlaBuilder b("alltoall");
auto x = Parameter(&b, 0, ShapeUtil::MakeShape(F32, {4, 16}), "x");
AllToAll(x, /*split_dimension=*/1, /*concat_dimension=*/0, /*split_count=*/4);
```

In this example, 4 cores participate in the AllToAll. On each core, the operand is split into 4 parts along dimension 1, so each part has shape f32[4,4]. The 4 parts are scattered to all cores. Then each core concatenates the received parts along dimension 0, in the order of cores 0 to 3. So the output on each core has shape f32[16,4].
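The two phases can be modeled on the host under simplifying assumptions: 1-D integer operands, split and concat dimension both 0, and no replica_groups (all cores form one group in index order). SimulateAllToAll is a hypothetical name; this sketch models only the data movement.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical model of AllToAll for 1-D operands (split and concat dim 0).
// inputs[c] is the operand on core c; split_count equals inputs.size().
std::vector<std::vector<int>> SimulateAllToAll(
    const std::vector<std::vector<int>>& inputs) {
  const std::size_t split_count = inputs.size();
  std::vector<std::vector<int>> outputs(split_count);
  for (std::size_t src = 0; src < split_count; ++src) {
    // Prerequisite: the split dimension is divisible by split_count.
    assert(inputs[src].size() % split_count == 0);
    const std::size_t block = inputs[src].size() / split_count;
    for (std::size_t dst = 0; dst < split_count; ++dst) {
      // Scatter: block `dst` of core `src` goes to core `dst`. Because the
      // outer loop runs over `src`, each core gathers blocks in core order.
      auto first = inputs[src].begin() + static_cast<std::ptrdiff_t>(dst * block);
      outputs[dst].insert(outputs[dst].end(), first,
                          first + static_cast<std::ptrdiff_t>(block));
    }
  }
  return outputs;
}
```

For two cores holding {1, 2, 3, 4} and {5, 6, 7, 8}, core 0 ends up with {1, 2, 5, 6} and core 1 with {3, 4, 7, 8}.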

BatchNormGrad

See also XlaBuilder::BatchNormGrad and the original batch normalization paper for a detailed description of the algorithm.

Calculates gradients of batch norm.

BatchNormGrad(operand, scale, mean, variance, grad_output, epsilon, feature_index)

| Arguments | Type | Semantics |
|---|---|---|
| operand | XlaOp | n-dimensional array to be normalized (x) |
| scale | XlaOp | 1-dimensional array (\(\gamma\)) |
| mean | XlaOp | 1-dimensional array (\(\mu\)) |
| variance | XlaOp | 1-dimensional array (\(\sigma^2\)) |
| grad_output | XlaOp | Gradients passed to BatchNormTraining (\(\nabla y\)) |
| epsilon | float | Epsilon value (\(\epsilon\)) |
| feature_index | int64 | Index to the feature dimension in operand |

For each feature in the feature dimension (feature_index is the index for the feature dimension in operand), the operation calculates the gradients with respect to operand, offset, and scale across all the other dimensions. feature_index must be a valid index for the feature dimension in operand.

The three gradients are defined by the following formulas (assuming a 4-dimensional array as operand, with feature dimension index l, batch size m, and spatial sizes w and h):

\[ \begin{split} c_l&= \frac{1}{mwh}\sum_{i=1}^m\sum_{j=1}^w\sum_{k=1}^h \left( \nabla y_{ijkl} \frac{x_{ijkl} - \mu_l}{\sigma^2_l+\epsilon} \right) \\\\ d_l&= \frac{1}{mwh}\sum_{i=1}^m\sum_{j=1}^w\sum_{k=1}^h \nabla y_{ijkl} \\\\ \nabla x_{ijkl} &= \frac{\gamma_{l} }{\sqrt{\sigma^2_{l}+\epsilon} } \left( \nabla y_{ijkl} - d_l - c_l (x_{ijkl} - \mu_{l}) \right) \\\\ \nabla \gamma_l &= \sum_{i=1}^m\sum_{j=1}^w\sum_{k=1}^h \left( \nabla y_{ijkl} \frac{x_{ijkl} - \mu_l}{\sqrt{\sigma^2_{l}+\epsilon} } \right) \\\\ \nabla \beta_l &= \sum_{i=1}^m\sum_{j=1}^w\sum_{k=1}^h \nabla y_{ijkl} \end{split} \]

The inputs mean and variance represent moment values across the batch and spatial dimensions.

The output type is a tuple of three handles:

| Outputs | Type | Semantics |
|---|---|---|
| grad_operand | XlaOp | gradient with respect to input operand (\(\nabla x\)) |
| grad_scale | XlaOp | gradient with respect to input scale (\(\nabla \gamma\)) |
| grad_offset | XlaOp | gradient with respect to input offset (\(\nabla \beta\)) |
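The gradient formulas can be checked with a small reference computation. This C++ sketch is an illustration under assumptions, not the XLA implementation: it flattens the batch and spatial dimensions into one index n (so N = mwh), stores the operand as x[n][l] with l the feature index, and returns the three gradients in the order of the output tuple. BatchNormGradReference and BatchNormGrads are hypothetical names.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Mirrors the output tuple (grad_operand, grad_scale, grad_offset).
struct BatchNormGrads {
  std::vector<std::vector<double>> grad_operand;  // gradient w.r.t. x
  std::vector<double> grad_scale;                 // gradient w.r.t. gamma
  std::vector<double> grad_offset;                // gradient w.r.t. beta
};

// Hypothetical reference for the formulas above; x[n][l] with n over all
// batch and spatial positions flattened together, l over features.
BatchNormGrads BatchNormGradReference(
    const std::vector<std::vector<double>>& x, const std::vector<double>& scale,
    const std::vector<double>& mean, const std::vector<double>& variance,
    const std::vector<std::vector<double>>& grad_output, double epsilon) {
  assert(!x.empty() && grad_output.size() == x.size());
  const std::size_t n_total = x.size(), features = scale.size();
  BatchNormGrads g{x, std::vector<double>(features, 0.0),
                   std::vector<double>(features, 0.0)};
  for (std::size_t l = 0; l < features; ++l) {
    const double inv_std = 1.0 / std::sqrt(variance[l] + epsilon);
    double sum_dy = 0.0;     // sum of grad_output over n
    double sum_dy_xc = 0.0;  // sum of grad_output * (x - mean) over n
    for (std::size_t n = 0; n < n_total; ++n) {
      sum_dy += grad_output[n][l];
      sum_dy_xc += grad_output[n][l] * (x[n][l] - mean[l]);
    }
    const double c = sum_dy_xc / (variance[l] + epsilon) / n_total;  // c_l
    const double d = sum_dy / n_total;                                // d_l
    for (std::size_t n = 0; n < n_total; ++n) {
      g.grad_operand[n][l] = scale[l] * inv_std *
                             (grad_output[n][l] - d - c * (x[n][l] - mean[l]));
    }
    g.grad_scale[l] = sum_dy_xc * inv_std;  // nabla gamma_l
    g.grad_offset[l] = sum_dy;              // nabla beta_l
  }
  return g;
}
```

A useful sanity check: with only two samples per feature, the normalized output is always -1 and +1 regardless of x, so the gradient with respect to x should come out as zero.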

BatchNormInference

See also XlaBuilder::BatchNormInference and the original batch normalization paper for a detailed description of the algorithm.

Normalizes an array across batch and spatial dimensions.

BatchNormInference(operand, scale, offset, mean, variance, epsilon, feature_index)

| Arguments | Type | Semantics |
|---|---|---|
| operand | XlaOp | n-dimensional array to be normalized |
| scale | XlaOp | 1-dimensional array |
| offset | XlaOp | 1-dimensional array |
| mean | XlaOp | 1-dimensional array |
| variance | XlaOp | 1-dimensional array |
| epsilon | float | Epsilon value |
| feature_index | int64 | Index to the feature dimension in operand |

For each feature in the feature dimension (feature_index is the index for the feature dimension in operand), the operation uses the given mean and variance to normalize each element of operand across all the other dimensions. feature_index must be a valid index for the feature dimension in operand.

BatchNormInference is equivalent to calling BatchNormTraining without computing mean and variance for each batch. Instead, it uses the input mean and variance as estimated values. The purpose of this op is to reduce latency in inference, hence the name BatchNormInference.

The output is an n-dimensional, normalized array with the same shape as the input operand.
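A minimal sketch of the inference-time computation, assuming a 2-D operand x[n][l] whose second index is the feature dimension. The mean and variance are supplied by the caller (for example, running estimates) rather than computed from the batch. BatchNormInferenceReference is a hypothetical name.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical sketch: y = scale * (x - mean) / sqrt(variance + epsilon) + offset,
// applied per feature l with caller-provided mean and variance.
std::vector<std::vector<float>> BatchNormInferenceReference(
    std::vector<std::vector<float>> x, const std::vector<float>& scale,
    const std::vector<float>& offset, const std::vector<float>& mean,
    const std::vector<float>& variance, float epsilon) {
  for (auto& row : x) {
    assert(row.size() == scale.size());
    for (std::size_t l = 0; l < row.size(); ++l) {
      row[l] = scale[l] * (row[l] - mean[l]) /
                   std::sqrt(variance[l] + epsilon) + offset[l];
    }
  }
  return x;  // same shape as the input operand
}
```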

BatchNormTraining

See also XlaBuilder::BatchNormTraining and the original batch normalization paper for a detailed description of the algorithm.

Normalizes an array across batch and spatial dimensions.

BatchNormTraining(operand, scale, offset, epsilon, feature_index)

| Arguments | Type | Semantics |
|---|---|---|
| operand | XlaOp | n-dimensional array to be normalized (x) |
| scale | XlaOp | 1-dimensional array (\(\gamma\)) |
| offset | XlaOp | 1-dimensional array (\(\beta\)) |
| epsilon | float | Epsilon value (\(\epsilon\)) |
| feature_index | int64 | Index to the feature dimension in operand |

For each feature in the feature dimension (feature_index is the index for the feature dimension in operand), the operation calculates the mean and variance across all the other dimensions and uses the mean and variance to normalize each element in operand. feature_index must be a valid index for the feature dimension in operand.

The algorithm goes as follows for each batch in operand \(x\) that contains m elements, with w and h as the sizes of the spatial dimensions (assuming operand is a 4-dimensional array):

- Calculates the batch mean \(\mu_l\) for each feature l in the feature dimension: \(\mu_l=\frac{1}{mwh}\sum_{i=1}^m\sum_{j=1}^w\sum_{k=1}^h x_{ijkl}\)
- Calculates the batch variance \(\sigma^2_l\): \(\sigma^2_l=\frac{1}{mwh}\sum_{i=1}^m\sum_{j=1}^w\sum_{k=1}^h (x_{ijkl} - \mu_l)^2\)
- Normalizes, scales, and shifts: \(y_{ijkl}=\frac{\gamma_l(x_{ijkl}-\mu_l)}{\sqrt{\sigma^2_l+\epsilon} }+\beta_l\)

The epsilon value, usually a small number, is added to avoid divide-by-zero errors.

The output type is a tuple of three XlaOps:

| Outputs | Type | Semantics |
|---|---|---|
| output | XlaOp | n-dimensional array with the same shape as input operand (y) |
| batch_mean | XlaOp | 1-dimensional array (\(\mu\)) |
| batch_var | XlaOp | 1-dimensional array (\(\sigma^2\)) |

batch_mean and batch_var are moments calculated across the batch and spatial dimensions using the formulas above.
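The three steps can be sketched on the host as follows, assuming a 2-D operand x[n][l] in which n runs over all batch and spatial positions (mwh of them) and l over features. The result mirrors the (output, batch_mean, batch_var) tuple; BatchNormTrainingReference is a hypothetical name.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <tuple>
#include <vector>

// Hypothetical sketch of BatchNormTraining on x[n][l].
std::tuple<std::vector<std::vector<double>>, std::vector<double>,
           std::vector<double>>
BatchNormTrainingReference(std::vector<std::vector<double>> x,
                           const std::vector<double>& scale,
                           const std::vector<double>& offset, double epsilon) {
  assert(!x.empty());
  const std::size_t n_total = x.size(), features = scale.size();
  std::vector<double> mean(features, 0.0), var(features, 0.0);
  for (std::size_t l = 0; l < features; ++l) {
    for (const auto& row : x) mean[l] += row[l];
    mean[l] /= n_total;  // batch mean mu_l
    // Biased (population) variance, as in the formula above.
    for (const auto& row : x) var[l] += (row[l] - mean[l]) * (row[l] - mean[l]);
    var[l] /= n_total;
    // Normalize, scale, and shift this feature's column in place.
    for (auto& row : x) {
      row[l] = scale[l] * (row[l] - mean[l]) / std::sqrt(var[l] + epsilon) +
               offset[l];
    }
  }
  return std::make_tuple(x, mean, var);
}
```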

BitcastConvertType

See also XlaBuilder::BitcastConvertType.

Similar to a tf.bitcast in TensorFlow, performs an element-wise bitcast operation from a data shape to a target shape. The input and output sizes must match: e.g., s32 elements become f32 elements via a bitcast routine, and one s32 element will become four s8 elements. Bitcast is implemented as a low-level cast, so machines with different floating-point representations will give different results.

BitcastConvertType(operand, new_element_type)

| Arguments | Type | Semantics |
|---|---|---|
| operand | XlaOp | array of type T with dims D |
| new_element_type | PrimitiveType | type U |

The dimensions of the operand and the target shape must match, apart from the last dimension, which changes by the ratio of the primitive sizes before and after the conversion.

The source and destination element types must not be tuples.

Bitcast-converting to a primitive type of different width

The BitcastConvert HLO instruction supports the case where the size of the output element type T' is not equal to the size of the input element type T. As the whole operation is conceptually a bitcast and does not change the underlying bytes, the shape of the output element has to change. For B = sizeof(T) and B' = sizeof(T'), there are two possible cases.

First, when B > B', the output shape gets a new minor-most dimension of size B/B'. For example:

```
f16[10,2]{1,0} %output = f16[10,2]{1,0} bitcast-convert(f32[10]{0} %input)
```

The rule remains the same for effective scalars:

```
f16[2]{0} %output = f16[2]{0} bitcast-convert(f32[] %input)
```

Alternatively, for B' > B, the instruction requires the last logical dimension of the input shape to be equal to B'/B, and this dimension is dropped during the conversion:

```
f32[10]{0} %output = f32[10]{0} bitcast-convert(f16[10,2]{1,0} %input)
```

Note that conversions between different bitwidths are not elementwise.
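On the host, the same byte reinterpretation can be sketched in C++ with std::memcpy, which copies bytes without converting values. This assumes an IEEE-754 binary32 float, and the helper names are hypothetical. The second function mirrors the B > B' case, where one 4-byte element becomes a new minor dimension of four 1-byte elements; the byte order inside that dimension depends on the host's endianness, one illustration of why results are machine-dependent.

```cpp
#include <array>
#include <cstdint>
#include <cstring>

// Same width: reinterpret the 4 bytes of a float as a 32-bit unsigned integer.
std::uint32_t BitcastF32ToU32(float value) {
  static_assert(sizeof(std::uint32_t) == sizeof(float), "sizes must match");
  std::uint32_t bits;
  std::memcpy(&bits, &value, sizeof(bits));
  return bits;
}

// Narrower output (B = 4 > B' = 1): one f32 becomes a minor dimension of
// B/B' = 4 bytes, like f32[] -> u8[4]. Byte order follows host endianness.
std::array<std::uint8_t, 4> BitcastF32ToU8x4(float value) {
  std::array<std::uint8_t, 4> bytes;
  std::memcpy(bytes.data(), &value, bytes.size());
  return bytes;
}
```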

Broadcast

See also XlaBuilder::Broadcast.

Adds dimensions to an array by duplicating the data in the array.

Broadcast(operand, broadcast_sizes)

| Arguments | Type | Semantics |
|---|---|---|
| operand | XlaOp | The array to duplicate |
| broadcast_sizes | ArraySlice<int64> | The sizes of the new dimensions |

The new dimensions are inserted on the left, i.e. if broadcast_sizes has values {a0, ..., aN} and the operand shape has dimensions {b0, ..., bM}, then the shape of the output has dimensions {a0, ..., aN, b0, ..., bM}.

The new dimensions index into copies of the operand, i.e.

```
output[i0, ..., iN, j0, ..., jM] = operand[j0, ..., jM]
```

For example, if operand is a scalar f32 with value 2.0f, and broadcast_sizes is {2, 3}, then the result will be an array with shape f32[2, 3] and all the values in the result will be 2.0f.

BroadcastInDim

See also XlaBuilder::BroadcastInDim.

Expands the size and rank of an array by duplicating the data in the array.

BroadcastInDim(operand, out_dim_size, broadcast_dimensions)

| Arguments | Type | Semantics |
|---|---|---|
| operand | XlaOp | The array to duplicate |
| out_dim_size | ArraySlice<int64> | The sizes of the dimensions of the target shape |
| broadcast_dimensions | ArraySlice<int64> | Which dimension in the target shape each dimension of the operand shape corresponds to |

Similar to Broadcast, but allows adding dimensions anywhere and expanding existing dimensions with size 1.

The operand is broadcast to the shape described by out_dim_size. broadcast_dimensions maps the dimensions of operand to the dimensions of the target shape, i.e. the i-th dimension of the operand is mapped to the broadcast_dimensions[i]-th dimension of the output shape. The dimensions of operand must have size 1 or be the same size as the dimension in the output shape they are mapped to. The remaining dimensions are filled with dimensions of size 1. Degenerate-dimension broadcasting then broadcasts along these degenerate dimensions to reach the output shape. The semantics are described in detail on the broadcasting page.
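The index mapping described above can be sketched as a helper that, given an output index, returns the operand index it reads. The helper name and the use of std::int64_t vectors for shapes and indices are assumptions for illustration, and the helper does not validate its inputs. Plain Broadcast is the special case where the operand's dimensions map to the trailing output dimensions.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: operand dimension i reads output dimension
// broadcast_dimensions[i]; a size-1 (degenerate) operand dimension always
// reads index 0, which is what broadcasts it along that output dimension.
std::vector<std::int64_t> OperandIndexFor(
    const std::vector<std::int64_t>& output_index,
    const std::vector<std::int64_t>& operand_dims,
    const std::vector<std::int64_t>& broadcast_dimensions) {
  std::vector<std::int64_t> operand_index(operand_dims.size());
  for (std::size_t i = 0; i < operand_dims.size(); ++i) {
    operand_index[i] =
        operand_dims[i] == 1 ? 0 : output_index[broadcast_dimensions[i]];
  }
  return operand_index;
}
```

For example, broadcasting f32[3] into f32[2,3] with broadcast_dimensions = {1} makes output[1, 2] read operand[2].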

Call

See also XlaBuilder::Call.

Invokes a computation with the given arguments.

Call(computation, args...)

| Arguments | Type | Semantics |
|---|---|---|
| computation | XlaComputation | computation of type T_0, T_1, ..., T_{N-1} -> S with N parameters of arbitrary type |
| args | sequence of N XlaOps | N arguments of arbitrary type |

The arity and types of the args must match the parameters of the computation. It is allowed to have no args.

Cholesky

See also XlaBuilder::Cholesky.

Computes the Cholesky decomposition of a batch of symmetric (Hermitian) positive definite matrices.

Cholesky(a, lower)

| Arguments | Type | Semantics |
|---|---|---|
| a | XlaOp | a rank >= 2 array of a complex or floating-point type. |
| lower | bool | whether to use the upper or lower triangle of a. |

If lower is true, computes lower-triangular matrices l such that \(a = l \cdot l^T\). If lower is false, computes upper-triangular matrices u such that \(a = u^T \cdot u\).

Input data is read only from the lower/upper triangle of a, depending on the value of lower. Values from the other triangle are ignored. Output data is returned in the same triangle; the values in the other triangle are implementation-defined and may be anything.

If the rank of a is greater than 2, a is treated as a batch of matrices, where all except the minor 2 dimensions are batch dimensions.

If a is not symmetric (Hermitian) positive definite, the result is implementation-defined.
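For a single real matrix, the lower = true case can be sketched with the textbook Cholesky-Banachiewicz recurrence. This is a reference sketch under stated assumptions (real double entries, no batching, no complex support) with a hypothetical function name. It reads only the lower triangle of a, and it writes zeros into the upper triangle, where XLA leaves the values implementation-defined. For a matrix that is not positive definite, std::sqrt of a negative number yields NaN here, which is one possible implementation-defined outcome.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical sketch: returns lower-triangular l with a = l * l^T.
std::vector<std::vector<double>> CholeskyLower(
    const std::vector<std::vector<double>>& a) {
  const std::size_t n = a.size();
  std::vector<std::vector<double>> l(n, std::vector<double>(n, 0.0));
  for (std::size_t i = 0; i < n; ++i) {
    for (std::size_t j = 0; j <= i; ++j) {  // j <= i: lower triangle only
      double sum = a[i][j];
      for (std::size_t k = 0; k < j; ++k) sum -= l[i][k] * l[j][k];
      l[i][j] = (i == j) ? std::sqrt(sum) : sum / l[j][j];
    }
  }
  return l;
}
```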

Clamp

See also XlaBuilder::Clamp.

Clamps an operand to within the range between a minimum and maximum value.

Clamp(min, operand, max)

| Arguments | Type | Semantics |
|---|---|---|
| min | XlaOp | array of type T |
| operand | XlaOp | array of type T |
| max | XlaOp | array of type T |

Given an operand and minimum and maximum values, returns the operand if it is in the range between the minimum and maximum; otherwise returns the minimum value if the operand is below this range, or the maximum value if the operand is above this range. That is, clamp(a, x, b) = min(max(a, x), b).

All three arrays must be the same shape. Alternatively, as a restricted form of broadcasting, min and/or max can be a scalar of type T.

Example with scalar min and max:

```
let operand: s32[3] = {-1, 5, 9};
let min: s32 = 0;
let max: s32 = 6;
==>
Clamp(min, operand, max) = s32[3]{0, 5, 6};
```
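An element-wise sketch of the scalar-bounds form, applying the formula min(max(a, x), b) directly. std::clamp is deliberately not used: it has undefined behavior when the lower bound exceeds the upper bound, whereas the formula above is always defined (it then returns the upper bound). The function name is hypothetical.

```cpp
#include <algorithm>
#include <vector>

// Hypothetical sketch of Clamp with scalar min and max.
std::vector<int> ClampScalarBounds(int min_value, std::vector<int> operand,
                                   int max_value) {
  for (int& x : operand) x = std::min(std::max(min_value, x), max_value);
  return operand;
}
```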

Collapse

See also XlaBuilder::Collapse and the tf.reshape operation.

Collapses dimensions of an array into one dimension.

Collapse(operand, dimensions)

| Arguments | Type | Semantics |
|---|---|---|
| operand | XlaOp | array of type T |
| dimensions | int64 vector | in-order, consecutive subset of T's dimensions. |

Collapse replaces the given subset of the operand's dimensions by a single dimension. The input arguments are an arbitrary array of type T and a compile-time-constant vector of dimension indices. The dimension indices must be an in-order (low to high dimension numbers), consecutive subset of T's dimensions. Thus, {0, 1, 2}, {0, 1}, or {1, 2} are all valid dimension sets, but {1, 0} or {0, 2} are not. They are replaced by a single new dimension, in the same position in the dimension sequence as those they replace, with the new dimension size equal to the product of the original dimension sizes. The lowest dimension number in dimensions is the slowest varying dimension (most major) in the loop nest which collapses these dimensions, and the highest dimension number is the fastest varying (most minor). See the tf.reshape operator if a more general collapse ordering is needed.

For example, let v be an array of 24 elements:

```
let v = f32[4x2x3] { { {10, 11, 12}, {15, 16, 17} },
                     { {20, 21, 22}, {25, 26, 27} },
                     { {30, 31, 32}, {35, 36, 37} },
                     { {40, 41, 42}, {45, 46, 47} } };

// Collapse to a single dimension, leaving one dimension.
let v012 = Collapse(v, {0,1,2});
then v012 == f32[24] {10, 11, 12, 15, 16, 17,
                      20, 21, 22, 25, 26, 27,
                      30, 31, 32, 35, 36, 37,
                      40, 41, 42, 45, 46, 47};

// Collapse the two lower dimensions, leaving two dimensions.
let v01 = Collapse(v, {0,1});
then v01 == f32[8x3] { {10, 11, 12},
                       {15, 16, 17},
                       {20, 21, 22},
                       {25, 26, 27},
                       {30, 31, 32},
                       {35, 36, 37},
                       {40, 41, 42},
                       {45, 46, 47} };

// Collapse the two higher dimensions, leaving two dimensions.
let v12 = Collapse(v, {1,2});
then v12 == f32[4x6] { {10, 11, 12, 15, 16, 17},
                       {20, 21, 22, 25, 26, 27},
                       {30, 31, 32, 35, 36, 37},
                       {40, 41, 42, 45, 46, 47} };
```
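Because the collapsed dimensions are consecutive and the lowest one varies slowest, Collapse does not reorder elements in row-major order; only the shape changes. A sketch of the shape computation, with a hypothetical helper name and assert-based checks for the in-order, consecutive requirement:

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: result shape of Collapse(operand, dimensions).
std::vector<std::int64_t> CollapsedShape(
    const std::vector<std::int64_t>& shape,
    const std::vector<std::int64_t>& dimensions) {
  assert(!dimensions.empty());
  for (std::size_t i = 1; i < dimensions.size(); ++i) {
    assert(dimensions[i] == dimensions[i - 1] + 1);  // rejects {1, 0}, {0, 2}
  }
  const std::int64_t first = dimensions.front(), last = dimensions.back();
  // Keep the dimensions before the collapsed run unchanged.
  std::vector<std::int64_t> result(shape.begin(), shape.begin() + first);
  // The new dimension's size is the product of the collapsed sizes.
  std::int64_t product = 1;
  for (std::int64_t d = first; d <= last; ++d) product *= shape[d];
  result.push_back(product);
  // Keep the dimensions after the collapsed run unchanged.
  result.insert(result.end(), shape.begin() + last + 1, shape.end());
  return result;
}
```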

CollectivePermute

See also XlaBuilder::CollectivePermute.

CollectivePermute is a collective operation that sends and receives data across replicas.

CollectivePermute(operand, source_target_pairs)

| Arguments | Type | Semantics |
|---|---|---|
| operand | XlaOp | n-dimensional input array |
| source_target_pairs | <int64, int64> vector | A list of (source_replica_id, target_replica_id) pairs. For each pair, the operand is sent from the source replica to the target replica. |

Note that there are the following restrictions on source_target_pairs:

- Any two pairs must not have the same target replica ID, and they must not have the same source replica ID.
- If a replica ID is not a target in any pair, then the output on that replica is a tensor of zeros with the same shape as the input.

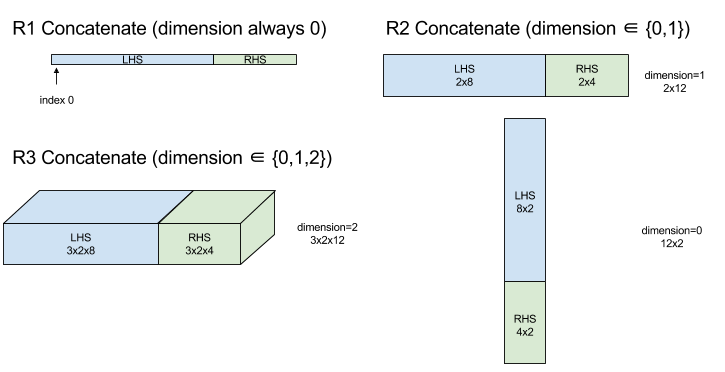
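A host-side model of the data movement, assuming 1-D float operands indexed by replica ID; SimulateCollectivePermute is a hypothetical name, and the sketch does not check the uniqueness restrictions listed above.

```cpp
#include <utility>
#include <vector>

// Hypothetical model of CollectivePermute. Replicas that are not a target of
// any pair receive zeros with the same shape as their input.
std::vector<std::vector<float>> SimulateCollectivePermute(
    const std::vector<std::vector<float>>& inputs,
    const std::vector<std::pair<int, int>>& source_target_pairs) {
  std::vector<std::vector<float>> outputs;
  for (const auto& in : inputs) outputs.emplace_back(in.size(), 0.0f);
  // Each (source, target) pair copies the source operand to the target.
  for (const auto& p : source_target_pairs) outputs[p.second] = inputs[p.first];
  return outputs;
}
```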

Concatenate

See also XlaBuilder::ConcatInDim.

Concatenate composes an array from multiple array operands. The array has the same rank as each of the input array operands (which must have the same rank as each other) and contains the arguments in the order that they were specified.

Concatenate(operands..., dimension)

| Arguments | Type | Semantics |
|---|---|---|
| operands | sequence of N XlaOp | N arrays of type T with dimensions [L0, L1, ...]. Requires N >= 1. |
| dimension | int64 | A value in the interval [0, R), where R is the rank of the operands, that names the dimension to be concatenated between the operands. |

With the exception of dimension, all dimensions must be the same. This is because XLA does not support "ragged" arrays. Also note that rank-0 values cannot be concatenated (as it is impossible to name the dimension along which the concatenation occurs).

1-dimensional example:

```
Concat({ {2, 3}, {4, 5}, {6, 7} }, 0)
>>> {2, 3, 4, 5, 6, 7}
```

2-dimensional example:

```
let a = {
  {1, 2},
  {3, 4},
  {5, 6},
};
let b = {
  {7, 8},
};
Concat({a, b}, 0)
>>> {
  {1, 2},
  {3, 4},
  {5, 6},
  {7, 8},
}
```


Conditional

See also XlaBuilder::Conditional.

Conditional(pred, true_operand, true_computation, false_operand, false_computation)

| Arguments | Type | Semantics |
|---|---|---|
| pred | XlaOp | Scalar of type PRED |
| true_operand | XlaOp | Argument of type \(T_0\) |
| true_computation | XlaComputation | XlaComputation of type \(T_0 \to S\) |
| false_operand | XlaOp | Argument of type \(T_1\) |
| false_computation | XlaComputation | XlaComputation of type \(T_1 \to S\) |

Executes true_computation if pred is true, false_computation if pred is false, and returns the result.

The true_computation must take in a single argument of type \(T_0\) and will be invoked with true_operand, which must be of the same type. The false_computation must take in a single argument of type \(T_1\) and will be invoked with false_operand, which must be of the same type. The type of the returned value of true_computation and false_computation must be the same.

Note that only one of true_computation and false_computation will be executed, depending on the value of pred.

Conditional(branch_index, branch_computations, branch_operands)

| Arguments | Type | Semantics |
|---|---|---|
| branch_index | XlaOp | Scalar of type S32 |
| branch_computations | sequence of N XlaComputation | XlaComputations of type \(T_0 \to S , T_1 \to S , ..., T_{N-1} \to S\) |
| branch_operands | sequence of N XlaOp | Arguments of type \(T_0 , T_1 , ..., T_{N-1}\) |

Executes branch_computations[branch_index], and returns the result. If branch_index is an S32 which is < 0 or >= N, then branch_computations[N-1] is executed as the default branch.

Each branch_computations[b] must take in a single argument of type \(T_b\) and will be invoked with branch_operands[b], which must be of the same type. The type of the returned value of each branch_computations[b] must be the same.

Note that only one of the branch_computations will be executed, depending on the value of branch_index.
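The branch-selection rule, including the out-of-range default, can be sketched on the host with std::function. For simplicity this assumes every branch takes and returns an int, whereas XLA allows a different operand type \(T_b\) per branch; RunConditional is a hypothetical name.

```cpp
#include <cstdint>
#include <functional>
#include <vector>

// Hypothetical sketch of the N-way Conditional (requires N >= 1). Exactly one
// branch runs; an index < 0 or >= N selects branch N-1 as the default.
int RunConditional(
    std::int32_t branch_index,
    const std::vector<std::function<int(int)>>& branch_computations,
    const std::vector<int>& branch_operands) {
  const std::int32_t n =
      static_cast<std::int32_t>(branch_computations.size());
  const std::int32_t b =
      (branch_index < 0 || branch_index >= n) ? n - 1 : branch_index;
  return branch_computations[b](branch_operands[b]);
}
```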

Conv (convolution)

See also XlaBuilder::Conv.

As ConvWithGeneralPadding, but the padding is specified in a short-hand way as either SAME or VALID. SAME padding pads the input (lhs) with zeroes so that the output has the same shape as the input when not taking striding into account. VALID padding simply means no padding.
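A minimal sketch of how SAME could be turned into explicit (low, high) padding for one spatial dimension. This assumes the TensorFlow-style convention, in which the output size is ceil(input / stride) and any odd leftover padding goes to the high side; treat that convention, and the hypothetical name SamePadding, as assumptions rather than a description of XLA's internals.

```cpp
#include <algorithm>
#include <cstdint>
#include <utility>

// Hypothetical helper: (low, high) SAME padding for one spatial dimension.
std::pair<std::int64_t, std::int64_t> SamePadding(std::int64_t input_size,
                                                  std::int64_t window_size,
                                                  std::int64_t stride) {
  // Output size is ceil(input_size / stride).
  const std::int64_t output_size = (input_size + stride - 1) / stride;
  // Total padding needed so that output_size windows fit.
  const std::int64_t total = std::max<std::int64_t>(
      (output_size - 1) * stride + window_size - input_size, 0);
  return {total / 2, total - total / 2};  // extra element goes high
}
```

With stride 1 this yields an output of the same size as the input, which matches the description of SAME above.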

ConvWithGeneralPadding (convolution)

See also XlaBuilder::ConvWithGeneralPadding.

Computes a convolution of the kind used in neural networks. Here, a convolution can be thought of as an n-dimensional window moving across an n-dimensional base area, with a computation performed for each possible position of the window.

| Arguments | Type | Semantics |
|---|---|---|
| lhs | XlaOp | rank n+2 array of inputs |
| rhs | XlaOp | rank n+2 array of kernel weights |
| window_strides | ArraySlice<int64> | n-d array of kernel strides |
| padding | ArraySlice< pair<int64,int64>> | n-d array of (low, high) padding |
| lhs_dilation | ArraySlice<int64> | n-d lhs dilation factor array |
| rhs_dilation | ArraySlice<int64> | n-d rhs dilation factor array |
| feature_group_count | int64 | the number of feature groups |
| batch_group_count | int64 | the number of batch groups |

Let n be the number of spatial dimensions. The lhs argument is a rank n+2 array describing the base area. This is called the input, even though of course the rhs is also an input. In a neural network, these are the input activations. The n+2 dimensions are, in this order:

- batch: Each coordinate in this dimension represents an independent input for which convolution is carried out.
- z/depth/features: Each (y,x) position in the base area has a vector associated with it, which goes into this dimension.
- spatial_dims: Describes the n spatial dimensions that define the base area that the window moves across.

The rhs argument is a rank n+2 array describing the convolutional filter/kernel/window. The dimensions are, in this order:

- output-z: The z dimension of the output.
- input-z: The size of this dimension times feature_group_count should equal the size of the z dimension in lhs.
- spatial_dims: Describes the n spatial dimensions that define the n-d window that moves across the base area.

The window_strides argument specifies the stride of the convolutional window in the spatial dimensions. For example, if the stride in the first spatial dimension is 3, then the window can only be placed at coordinates where the first spatial index is divisible by 3.

The padding argument specifies the amount of zero padding to be applied to the base area. The amount of padding can be negative: the absolute value of negative padding indicates the number of elements to remove from the specified dimension before doing the convolution. padding[0] specifies the padding for dimension y and padding[1] specifies the padding for dimension x. Each pair has the low padding as the first element and the high padding as the second element. The low padding is applied in the direction of lower indices while the high padding is applied in the direction of higher indices. For example, if padding[1] is (2,3), then there will be a padding by 2 zeroes on the left and by 3 zeroes on the right in the second spatial dimension. Using padding is equivalent to inserting those same zero values into the input (lhs) before doing the convolution.

The lhs_dilation and rhs_dilation arguments specify the dilation factor to be applied to the lhs and rhs, respectively, in each spatial dimension. If the dilation factor in a spatial dimension is d, then d-1 holes are implicitly placed between each of the entries in that dimension, increasing the size of the array. The holes are filled with a no-op value, which for convolution means zeroes.

Dilation of the rhs is also called atrous convolution. For more details, see tf.nn.atrous_conv2d. Dilation of the lhs is also called transposed convolution. For more details, see tf.nn.conv2d_transpose.

The feature_group_count argument (default value 1) can be used for grouped convolutions. feature_group_count needs to be a divisor of both the input and the output feature dimension. If feature_group_count is greater than 1, it means that conceptually the input and output feature dimension and the rhs output feature dimension are split evenly into feature_group_count groups, each group consisting of a consecutive subsequence of features. The input feature dimension of rhs needs to be equal to the lhs input feature dimension divided by feature_group_count (so it already has the size of a group of input features). The i-th groups are used together to compute feature_group_count separate convolutions. The results of these convolutions are concatenated together in the output feature dimension.

For depthwise convolution, the feature_group_count argument would be set to the input feature dimension, and the filter would be reshaped from [filter_height, filter_width, in_channels, channel_multiplier] to [filter_height, filter_width, 1, in_channels * channel_multiplier]. For more details, see tf.nn.depthwise_conv2d.

The batch_group_count argument (default value 1) can be used for grouped filters during backpropagation. batch_group_count needs to be a divisor of the size of the lhs (input) batch dimension. If batch_group_count is greater than 1, it means that the output batch dimension should be of size input batch / batch_group_count. batch_group_count must be a divisor of the output feature size.

The output shape has these dimensions, in this order:

- batch: The size of this dimension times batch_group_count should equal the size of the batch dimension in lhs.
- z: Same size as output-z on the kernel (rhs).
- spatial_dims: One value for each valid placement of the convolutional window.

The figure above shows how the batch_group_count field works. Effectively, we slice each lhs batch into batch_group_count groups, and do the same for the output features. Then, for each of these groups, we do pairwise convolutions and concatenate the output along the output feature dimension. The operational semantics of all the other dimensions (feature and spatial) remain the same.

The valid placements of the convolutional window are determined by the strides and the size of the base area after padding.

To describe what a convolution does, consider a 2d convolution, and pick some fixed batch, z, y, x coordinates in the output. Then (y,x) is a position of a corner of the window within the base area (e.g. the upper left corner, depending on how you interpret the spatial dimensions). We now have a 2d window, taken from the base area, where each 2d point is associated with a 1d vector, so we get a 3d box. From the convolutional kernel, since we fixed the output coordinate z, we also have a 3d box. The two boxes have the same dimensions, so we can take the sum of the element-wise products between the two boxes (similar to a dot product). That is the output value.

Note that if output-z is, e.g., 5, then each position of the window produces 5 values in the output, along the z dimension of the output. These values differ in which part of the convolutional kernel is used: there is a separate 3d box of values used for each output-z coordinate. So you could think of it as 5 separate convolutions with a different filter for each of them.

Berikut adalah kode semu untuk konvolusi 2d dengan padding dan striding:

```
for (b, oz, oy, ox) {  // output coordinates
  value = 0;
  for (iz, ky, kx) {  // kernel coordinates and input z
    iy = oy*stride_y + ky - pad_low_y;
    ix = ox*stride_x + kx - pad_low_x;
    if ((iy, ix) inside the base area considered without padding) {
      value += input(b, iz, iy, ix) * kernel(oz, iz, ky, kx);
    }
  }
  output(b, oz, oy, ox) = value;
}
```
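The pseudo-code above can be turned into a small runnable C++ reference. This sketch is for illustration only and is not XLA's implementation. Array4D and Conv2D are hypothetical helpers. The input is laid out as [batch, z, y, x] and the kernel as [output-z, input-z, y, x], both row-major. The sketch assumes the padded base area is at least as large as the kernel. Positions that fall in the padding contribute zero, which mirrors the "inside the base area considered without padding" check.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical dense 4-D array stored in row-major order.
struct Array4D {
  std::size_t d0, d1, d2, d3;
  std::vector<float> data;
  Array4D(std::size_t a, std::size_t b, std::size_t c, std::size_t d)
      : d0(a), d1(b), d2(c), d3(d), data(a * b * c * d, 0.0f) {}
  std::size_t offset(std::size_t i, std::size_t j, std::size_t k,
                     std::size_t l) const {
    return ((i * d1 + j) * d2 + k) * d3 + l;
  }
};

// input: [batch, in_z, in_y, in_x]; kernel: [out_z, in_z, k_y, k_x].
// Returns [batch, out_z, out_y, out_x]. Assumes padded input >= kernel size.
Array4D Conv2D(const Array4D& input, const Array4D& kernel, long stride_y,
               long stride_x, long pad_low_y, long pad_high_y, long pad_low_x,
               long pad_high_x) {
  const long in_y = static_cast<long>(input.d2);
  const long in_x = static_cast<long>(input.d3);
  const long k_y = static_cast<long>(kernel.d2);
  const long k_x = static_cast<long>(kernel.d3);
  // Number of valid window placements in the padded base area.
  const long out_y = (in_y + pad_low_y + pad_high_y - k_y) / stride_y + 1;
  const long out_x = (in_x + pad_low_x + pad_high_x - k_x) / stride_x + 1;
  Array4D output(input.d0, kernel.d0, static_cast<std::size_t>(out_y),
                 static_cast<std::size_t>(out_x));
  for (std::size_t b = 0; b < input.d0; ++b)
    for (std::size_t oz = 0; oz < kernel.d0; ++oz)
      for (long oy = 0; oy < out_y; ++oy)
        for (long ox = 0; ox < out_x; ++ox) {
          float value = 0.0f;
          for (std::size_t iz = 0; iz < input.d1; ++iz)
            for (long ky = 0; ky < k_y; ++ky)
              for (long kx = 0; kx < k_x; ++kx) {
                const long iy = oy * stride_y + ky - pad_low_y;
                const long ix = ox * stride_x + kx - pad_low_x;
                // Positions in the padding contribute zero, so skip them.
                if (iy < 0 || iy >= in_y || ix < 0 || ix >= in_x) continue;
                value += input.data[input.offset(b, iz, std::size_t(iy), std::size_t(ix))] *
                         kernel.data[kernel.offset(oz, iz, std::size_t(ky), std::size_t(kx))];
              }
          output.data[output.offset(b, oz, std::size_t(oy), std::size_t(ox))] = value;
        }
  return output;
}
```

For example, a 3x3 input holding 1..9 convolved with a 2x2 kernel of ones, using stride 1 and no padding, yields the four window sums 12, 16, 24 and 28.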

ConvertElementType

See also XlaBuilder::ConvertElementType.

Similar to an element-wise static_cast in C++, performs an element-wise conversion operation from a data shape to a target shape. The dimensions must match, and the conversion is an element-wise one; for example, s32 elements become f32 elements via an s32-to-f32 conversion routine.

ConvertElementType(operand, new_element_type)

| Argument | Type | Semantics |
|---|---|---|
| operand | XlaOp | array of type T with dims D |
| new_element_type | PrimitiveType | type U |

The dimensions of the operand and the target shape must match. The source and destination element types must not be tuples.

A conversion such as T=s32 to U=f32 performs a normalizing int-to-float conversion routine such as round-to-nearest-even.

```
let a: s32[3] = {0, 1, 2};
let b: f32[3] = convert(a, f32);
then b == f32[3]{0.0, 1.0, 2.0}
```
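On the host, a plain static_cast shows the round-to-nearest-even behavior. ConvertS32ToF32 is a hypothetical helper. The expected values assume an IEEE 754 platform in the default rounding mode. The integers used are above 2^24, where f32 can no longer represent every integer exactly, so the conversion has to round.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Element-wise s32 -> f32 conversion, analogous to ConvertElementType(a, F32).
// On IEEE 754 hardware in the default rounding mode, static_cast<float>
// rounds inexact values to the nearest float, breaking ties toward even.
std::vector<float> ConvertS32ToF32(const std::vector<std::int32_t>& a) {
  std::vector<float> b;
  b.reserve(a.size());
  for (std::int32_t x : a) b.push_back(static_cast<float>(x));
  return b;
}
```

16777217 (2^24 + 1) lies exactly halfway between 16777216 and 16777218, and the tie goes to the even candidate, 16777216. Likewise 16777219 becomes 16777220.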

CrossReplicaSum

Performs AllReduce with a summation computation.

CustomCall

See also XlaBuilder::CustomCall.

Calls a user-provided function within a computation.

CustomCall(target_name, args..., shape)

| Argument | Type | Semantics |
|---|---|---|
| target_name | string | Name of the function. A call instruction will be emitted which targets this symbol name. |
| args | sequence of N XlaOps | N arguments of arbitrary type, which will be passed to the function. |
| shape | Shape | Output shape of the function |

The function signature is the same, regardless of the arity or type of args:

```cpp
extern "C" void target_name(void* out, void** in);
```

For example, if CustomCall is used as follows:

```
let x = f32[2] {1,2};
let y = f32[2x3] { {10, 20, 30}, {40, 50, 60} };

CustomCall("myfunc", {x, y}, f32[3x3])
```

Here is an example of an implementation of myfunc:

```cpp
extern "C" void myfunc(void* out, void** in) {
  float (&x)[2] = *static_cast<float(*)[2]>(in[0]);
  float (&y)[2][3] = *static_cast<float(*)[2][3]>(in[1]);
  EXPECT_EQ(1, x[0]);
  EXPECT_EQ(2, x[1]);
  EXPECT_EQ(10, y[0][0]);
  EXPECT_EQ(20, y[0][1]);
  EXPECT_EQ(30, y[0][2]);
  EXPECT_EQ(40, y[1][0]);
  EXPECT_EQ(50, y[1][1]);
  EXPECT_EQ(60, y[1][2]);
  float (&z)[3][3] = *static_cast<float(*)[3][3]>(out);
  z[0][0] = x[1] + y[1][0];
  // ...
}
```

The user-provided function must not have side effects, and its execution must be idempotent.
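To make the calling convention concrete, the sketch below calls a CustomCall-style target directly from host C++ with plain arrays. myfunc_sketch and its body are hypothetical, since the original myfunc body is elided. The sketch shows only two things. Each input arrives as an untyped pointer in in, in argument order. The result is written through out in the declared output shape.

```cpp
#include <cassert>

// Same signature as a CustomCall target: one output buffer plus an array of
// input buffers, all passed as untyped pointers.
extern "C" void myfunc_sketch(void* out, void** in) {
  const float (&x)[2] = *static_cast<const float(*)[2]>(in[0]);     // f32[2]
  const float (&y)[2][3] = *static_cast<const float(*)[2][3]>(in[1]);  // f32[2x3]
  float (&z)[3][3] = *static_cast<float(*)[3][3]>(out);             // f32[3x3]
  // Hypothetical body: fill every output element from the inputs. It reads
  // only its inputs and writes only its output, so it is side-effect free.
  for (int i = 0; i < 3; ++i)
    for (int j = 0; j < 3; ++j)
      z[i][j] = x[i % 2] + y[i % 2][j];
}
```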

Dot

See also XlaBuilder::Dot.

Dot(lhs, rhs)

| Argument | Type | Semantics |
|---|---|---|
| lhs | XlaOp | array of type T |
| rhs | XlaOp | array of type T |

The exact semantics of this operation depend on the ranks of the operands:

| Input | Output | Semantics |
|---|---|---|
| vector [n] dot vector [n] | scalar | vector dot product |
| matrix [m x k] dot vector [k] | vector [m] | matrix-vector multiplication |
| matrix [m x k] dot matrix [k x n] | matrix [m x n] | matrix-matrix multiplication |

The operation performs a sum of products over the second dimension of lhs (or the first if it has rank 1) and the first dimension of rhs. These are the "contracted" dimensions. The contracted dimensions of lhs and rhs must be of the same size. In practice, it can be used to perform dot products between vectors, vector/matrix multiplications, or matrix/matrix multiplications.
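The following C++ computes the rank-2 case of Dot on the host with plain loops. Matrix and DotReference are hypothetical names used only for illustration and are not part of XlaBuilder. A matrix-vector product is covered by treating the vector as a [k x 1] matrix.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical row-major matrix type; a vector [k] can be modeled as [k x 1].
struct Matrix {
  std::size_t rows, cols;
  std::vector<float> data;
};

// Reference Dot for rank-2 operands: sums products over lhs dimension 1 and
// rhs dimension 0 (the contracted dimensions).
Matrix DotReference(const Matrix& lhs, const Matrix& rhs) {
  assert(lhs.cols == rhs.rows);  // contracted dimensions must have equal size
  Matrix out{lhs.rows, rhs.cols, std::vector<float>(lhs.rows * rhs.cols, 0.0f)};
  for (std::size_t i = 0; i < lhs.rows; ++i)
    for (std::size_t j = 0; j < rhs.cols; ++j) {
      float sum = 0.0f;
      for (std::size_t p = 0; p < lhs.cols; ++p)
        sum += lhs.data[i * lhs.cols + p] * rhs.data[p * rhs.cols + j];
      out.data[i * out.cols + j] = sum;
    }
  return out;
}
```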

DotGeneral

See also XlaBuilder::DotGeneral.

DotGeneral(lhs, rhs, dimension_numbers)

| Argument | Type | Semantics |
|---|---|---|
| lhs | XlaOp | array of type T |
| rhs | XlaOp | array of type T |
| dimension_numbers | DotDimensionNumbers | contracting and batch dimension numbers |

As with Dot, but allows contracting and batch dimension numbers to be specified for both the lhs and rhs.

| DotDimensionNumbers Fields | Type | Semantics |
|---|---|---|
| lhs_contracting_dimensions | repeated int64 | lhs contracting dimension numbers |
| rhs_contracting_dimensions | repeated int64 | rhs contracting dimension numbers |
| lhs_batch_dimensions | repeated int64 | lhs batch dimension numbers |
| rhs_batch_dimensions | repeated int64 | rhs batch dimension numbers |

DotGeneral performs the sum of products over the contracting dimensions specified in dimension_numbers.

Associated contracting dimension numbers from the lhs and rhs do not need to be the same, but they must have the same dimension sizes.

Example with contracting dimension numbers:

```
lhs = { {1.0, 2.0, 3.0},
        {4.0, 5.0, 6.0} }

rhs = { {1.0, 1.0, 1.0},
        {2.0, 2.0, 2.0} }

DotDimensionNumbers dnums;
dnums.add_lhs_contracting_dimensions(1);
dnums.add_rhs_contracting_dimensions(1);

DotGeneral(lhs, rhs, dnums) -> { {6.0, 12.0},
                                 {15.0, 30.0} }
```

Associated batch dimension numbers from the lhs and rhs must have the same dimension sizes.

Example with batch dimension numbers (batch size 2, 2x2 matrices):

```
lhs = { { {1.0, 2.0},
          {3.0, 4.0} },
        { {5.0, 6.0},
          {7.0, 8.0} } }

rhs = { { {1.0, 0.0},
          {0.0, 1.0} },
        { {1.0, 0.0},
          {0.0, 1.0} } }

DotDimensionNumbers dnums;
dnums.add_lhs_contracting_dimensions(2);
dnums.add_rhs_contracting_dimensions(1);
dnums.add_lhs_batch_dimensions(0);
dnums.add_rhs_batch_dimensions(0);

DotGeneral(lhs, rhs, dnums) -> { { {1.0, 2.0},
                                   {3.0, 4.0} },
                                 { {5.0, 6.0},
                                   {7.0, 8.0} } }
```

| Input | Output | Semantics |
|---|---|---|
| [b0, m, k] dot [b0, k, n] | [b0, m, n] | batch matmul |
| [b0, b1, m, k] dot [b0, b1, k, n] | [b0, b1, m, n] | batch matmul |

It follows that the resulting dimension numbers start with the batch dimensions, then the lhs non-contracting/non-batch dimensions, and finally the rhs non-contracting/non-batch dimensions.
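The batch example above uses lhs_batch_dimensions = rhs_batch_dimensions = {0}, lhs_contracting_dimensions = {2} and rhs_contracting_dimensions = {1}. With those settings, DotGeneral reduces to a batched matrix multiplication. The sketch below uses a hypothetical BatchMatmul helper on flat row-major buffers and covers only that one configuration of dimension numbers. It also shows the output dimension order: the batch dimension comes first, then the lhs non-contracting dimension, then the rhs non-contracting dimension.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical reference for DotGeneral with batch dims {0}/{0} and
// contracting dims {2}/{1}: lhs [B, M, K] dot rhs [B, K, N] -> [B, M, N].
std::vector<float> BatchMatmul(const std::vector<float>& lhs,
                               const std::vector<float>& rhs, std::size_t B,
                               std::size_t M, std::size_t K, std::size_t N) {
  std::vector<float> out(B * M * N, 0.0f);
  for (std::size_t b = 0; b < B; ++b)        // batch dimension comes first
    for (std::size_t m = 0; m < M; ++m)      // then lhs non-contracting
      for (std::size_t n = 0; n < N; ++n) {  // then rhs non-contracting
        float sum = 0.0f;
        for (std::size_t k = 0; k < K; ++k)  // contracted dimension
          sum += lhs[(b * M + m) * K + k] * rhs[(b * K + k) * N + n];
        out[(b * M + m) * N + n] = sum;
      }
  return out;
}
```

With the identity matrices from the example as rhs, the result equals lhs, matching the output shown above.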

DynamicSlice

See also XlaBuilder::DynamicSlice.

DynamicSlice extracts a sub-array from the input array at dynamic start_indices. The size of the slice in each dimension is passed in size_indices, which specify the end point of exclusive slice intervals in each dimension: [start, start + size). The shape of start_indices must be rank == 1, with dimension size equal to the rank of operand.

DynamicSlice(operand, start_indices, size_indices)

| Argument | Type | Semantics |
|---|---|---|
| operand | XlaOp | N dimensional array of type T |
| start_indices | sequence of N XlaOp | List of N scalar integers containing the starting indices of the slice for each dimension. Values must be greater than or equal to zero. |
| size_indices | ArraySlice<int64> | List of N integers containing the slice size for each dimension. Each value must be strictly greater than zero, and start + size must be less than or equal to the size of the dimension to avoid wrapping modulo dimension size. |

The effective slice indices are computed by applying the following transformation for each index i in [0, N) before performing the slice:

```
start_indices[i] = clamp(start_indices[i], 0, operand.dimension_size[i] - size_indices[i])
```

This ensures that the extracted slice is always in-bounds with respect to the operand array. If the slice is in-bounds before the transformation is applied, the transformation has no effect.

1-dimensional example:

```
let a = {0.0, 1.0, 2.0, 3.0, 4.0}
let s = {2}

DynamicSlice(a, s, {2}) produces:
  {2.0, 3.0}
```

2-dimensional example:

```
let b =
 { {0.0,  1.0,  2.0},
   {3.0,  4.0,  5.0},
   {6.0,  7.0,  8.0},
   {9.0, 10.0, 11.0} }
let s = {2, 1}

DynamicSlice(b, s, {2, 2}) produces:
  { { 7.0,  8.0},
    {10.0, 11.0} }
```
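Here is a host-side sketch of the clamping rule for the rank-2 case. Array2D, Clamp and DynamicSlice2D are hypothetical helpers. Start indices are passed as plain integers rather than XlaOps.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Row-major 2-D array used by this sketch (hypothetical helper type).
struct Array2D {
  std::int64_t rows, cols;
  std::vector<float> data;
};

// Clamps v into [lo, hi], as in the clamp(...) transformation above.
std::int64_t Clamp(std::int64_t v, std::int64_t lo, std::int64_t hi) {
  return std::max(lo, std::min(v, hi));
}

// Reference DynamicSlice for a rank-2 operand: clamp the start indices so the
// slice stays in bounds, then copy the [size_rows x size_cols] window.
Array2D DynamicSlice2D(const Array2D& operand, std::int64_t start_row,
                       std::int64_t start_col, std::int64_t size_rows,
                       std::int64_t size_cols) {
  start_row = Clamp(start_row, 0, operand.rows - size_rows);
  start_col = Clamp(start_col, 0, operand.cols - size_cols);
  Array2D out{size_rows, size_cols, {}};
  for (std::int64_t r = 0; r < size_rows; ++r)
    for (std::int64_t c = 0; c < size_cols; ++c)
      out.data.push_back(operand.data[static_cast<std::size_t>(
          (start_row + r) * operand.cols + start_col + c)]);
  return out;
}
```

A start row of 3 with a slice height of 2 on a 4-row array would read past the end. The clamp moves it to 4 - 2 = 2, so the slice matches the 2-dimensional example above.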

DynamicUpdateSlice

See also XlaBuilder::DynamicUpdateSlice.

DynamicUpdateSlice generates a result which is the value of the input array operand, with a slice update overwritten at start_indices. The shape of update determines the shape of the sub-array of the result which is updated. The shape of start_indices must be rank == 1, with dimension size equal to the rank of operand.

DynamicUpdateSlice(operand, update, start_indices)

| Argument | Type | Semantics |
|---|---|---|
| operand | XlaOp | N dimensional array of type T |
| update | XlaOp | N dimensional array of type T containing the slice update. Each dimension of the update shape must be strictly greater than zero, and start + update must be less than or equal to the operand size for each dimension to avoid generating out-of-bounds update indices. |
| start_indices | sequence of N XlaOp | List of N scalar integers containing the starting indices of the slice for each dimension. Values must be greater than or equal to zero. |

The effective slice indices are computed by applying the following transformation for each index i in [0, N) before performing the slice:

```
start_indices[i] = clamp(start_indices[i], 0, operand.dimension_size[i] - update.dimension_size[i])
```

This ensures that the updated slice is always in-bounds with respect to the operand array. If the slice is in-bounds before the transformation is applied, the transformation has no effect.

1-dimensional example:

```
let a = {0.0, 1.0, 2.0, 3.0, 4.0}
let u = {5.0, 6.0}
let s = {2}

DynamicUpdateSlice(a, u, s) produces:
  {0.0, 1.0, 5.0, 6.0, 4.0}
```

2-dimensional example:

```
let b =
 { {0.0,  1.0,  2.0},
   {3.0,  4.0,  5.0},
   {6.0,  7.0,  8.0},
   {9.0, 10.0, 11.0} }
let u =
 { {12.0, 13.0},
   {14.0, 15.0},
   {16.0, 17.0} }

let s = {1, 1}

DynamicUpdateSlice(b, u, s) produces:
 { {0.0,  1.0,  2.0},
   {3.0, 12.0, 13.0},
   {6.0, 14.0, 15.0},
   {9.0, 16.0, 17.0} }
```
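The sketch below applies the same clamping rule to DynamicUpdateSlice in the rank-2 case. Array2D, Clamp and DynamicUpdateSlice2D are hypothetical helpers. The operand is taken by value, so the caller's array is left untouched and the updated copy is returned as the result.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Row-major 2-D array used by this sketch (hypothetical helper type).
struct Array2D {
  std::int64_t rows, cols;
  std::vector<float> data;
};

// Clamps v into [lo, hi], as in the clamp(...) transformation above.
std::int64_t Clamp(std::int64_t v, std::int64_t lo, std::int64_t hi) {
  return std::max(lo, std::min(v, hi));
}

// Reference DynamicUpdateSlice for rank-2 arrays: returns a copy of operand
// with update written at the (clamped) start indices.
Array2D DynamicUpdateSlice2D(Array2D operand, const Array2D& update,
                             std::int64_t start_row, std::int64_t start_col) {
  start_row = Clamp(start_row, 0, operand.rows - update.rows);
  start_col = Clamp(start_col, 0, operand.cols - update.cols);
  for (std::int64_t r = 0; r < update.rows; ++r)
    for (std::int64_t c = 0; c < update.cols; ++c)
      operand.data[static_cast<std::size_t>((start_row + r) * operand.cols + start_col + c)] =
          update.data[static_cast<std::size_t>(r * update.cols + c)];
  return operand;
}
```

With the 2-dimensional example's b and u, a start of (2, 2) would write out of bounds. Both indices are clamped to 1, which gives the same result as s = {1, 1}.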

Element-wise binary arithmetic operations

See also XlaBuilder::Add.

A set of element-wise binary arithmetic operations is supported.

Op(lhs, rhs)

Where Op is one of Add (addition), Sub (subtraction), Mul (multiplication), Div (division), Rem (remainder), Max (maximum), Min (minimum), LogicalAnd (logical AND), or LogicalOr (logical OR).

| Argument | Type | Semantics |
|---|---|---|
| lhs | XlaOp | left-hand-side operand: array of type T |
| rhs | XlaOp | right-hand-side operand: array of type T |

The arguments' shapes have to be either similar or compatible. See the broadcasting documentation about what it means for shapes to be compatible. The result of an operation has a shape which is the result of broadcasting the two input arrays. In this variant, operations between arrays of different ranks are not supported, unless one of the operands is a scalar.

When Op is Rem, the sign of the result is taken from the dividend, and the absolute value of the result is always less than the divisor's absolute value.

Integer division overflow (signed/unsigned division/remainder by zero, or signed division/remainder of INT_SMIN by -1) produces an implementation-defined value.
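The Rem sign rule matches C++'s built-in integer % (truncating division, guaranteed since C++11) and std::fmod, so a short host-side check can illustrate it. RemS32 and RemF32 are hypothetical helpers. Unlike XLA, C++ treats division by zero and INT_MIN % -1 as undefined behavior rather than implementation-defined, so this sketch must not be called with those inputs.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Rem on s32: integer % truncates toward zero, so the result takes the sign
// of the dividend (lhs). Undefined in C++ for rhs == 0 or INT_MIN % -1.
std::int32_t RemS32(std::int32_t lhs, std::int32_t rhs) { return lhs % rhs; }

// Rem on f32: std::fmod also returns a value with the sign of the dividend
// and a magnitude smaller than |rhs|.
float RemF32(float lhs, float rhs) { return std::fmod(lhs, rhs); }
```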

An alternative variant with different-rank broadcasting support exists for these operations:

Op(lhs, rhs, broadcast_dimensions)

Where Op is the same as above. This variant of the operation should be used for arithmetic operations between arrays of different ranks (such as adding a matrix to a vector).

The additional broadcast_dimensions operand is a slice of integers used to expand the rank of the lower-rank operand up to the rank of the higher-rank operand. broadcast_dimensions maps the dimensions of the lower-rank shape to the dimensions of the higher-rank shape. The unmapped dimensions of the expanded shape are filled with dimensions of size one. Degenerate-dimension broadcasting then broadcasts the shapes along these degenerate dimensions to equalize the shapes of both operands. The semantics are described in detail on the broadcasting page.
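The sketch below shows Op(lhs, rhs, broadcast_dimensions) for Add with a rank-2 lhs and a rank-1 rhs. AddWithBroadcast is a hypothetical helper. With broadcast_dimensions = {1}, the vector is mapped to dimension 1 and expanded to [1 x cols]. With {0}, it is mapped to dimension 0 and expanded to [rows x 1]. The size-one dimension is then broadcast across the matrix.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical sketch of Add(matrix, vec, broadcast_dimensions = {dim}) for a
// row-major [rows x cols] matrix and a rank-1 vector.
//   dim == 1: vec has size cols, is viewed as [1 x cols], repeated per row.
//   dim == 0: vec has size rows, is viewed as [rows x 1], repeated per column.
std::vector<float> AddWithBroadcast(const std::vector<float>& matrix,
                                    std::size_t rows, std::size_t cols,
                                    const std::vector<float>& vec, int dim) {
  assert(dim == 0 || dim == 1);
  assert(vec.size() == (dim == 0 ? rows : cols));
  std::vector<float> out(matrix.size());
  for (std::size_t r = 0; r < rows; ++r)
    for (std::size_t c = 0; c < cols; ++c)
      out[r * cols + c] = matrix[r * cols + c] + vec[dim == 0 ? r : c];
  return out;
}
```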

Element-wise comparison operations

See also XlaBuilder::Eq.

A set of standard element-wise binary comparison operations is supported. Note that standard IEEE 754 floating-point comparison semantics apply when comparing floating-point types.

Op(lhs, rhs)

Where Op is one of Eq (equal-to), Ne (not equal-to), Ge (greater-or-equal-than), Gt (greater-than), Le (less-or-equal-than), or Lt (less-than). Another set of operators, EqTotalOrder, NeTotalOrder, GeTotalOrder, GtTotalOrder, LeTotalOrder, and LtTotalOrder, provides the same functionality, except that these operators additionally support a total order over floating-point numbers, enforcing -NaN < -Inf < -Finite < -0 < +0 < +Finite < +Inf < +NaN.

| Argument | Type | Semantics |
|---|---|---|
| lhs | XlaOp | left-hand-side operand: array of type T |
| rhs | XlaOp | right-hand-side operand: array of type T |

The arguments' shapes have to be either similar or compatible. See the broadcasting documentation about what it means for shapes to be compatible. The result of an operation has a shape which is the result of broadcasting the two input arrays, with the element type PRED. In this variant, operations between arrays of different ranks are not supported, unless one of the operands is a scalar.

An alternative variant with different-rank broadcasting support exists for these operations:

Op(lhs, rhs, broadcast_dimensions)

Where Op is the same as above. This variant of the operation should be used for comparison operations between arrays of different ranks (such as comparing a matrix with a vector).

The additional broadcast_dimensions operand is a slice of integers specifying the dimensions to use for broadcasting the operands. The semantics are described in detail on the broadcasting page.
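A common way to realize the total order used by the TotalOrder comparisons (EqTotalOrder through LtTotalOrder) takes two steps. First, reinterpret each f32 as its 32-bit pattern. Second, flip the magnitude bits of negative values. Ordinary signed integer comparison of the resulting keys then yields -NaN < -Inf < -Finite < -0 < +0 < +Finite < +Inf < +NaN. This is a sketch of that technique, not necessarily how XLA implements it. TotalOrderKey and LtTotalOrderSketch are hypothetical names, and an IEEE 754 binary32 float is assumed.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <cstring>
#include <limits>

// Maps a float to a signed integer key whose ordinary ordering is the total
// order -NaN < -Inf < -Finite < -0 < +0 < +Finite < +Inf < +NaN.
std::int32_t TotalOrderKey(float x) {
  std::int32_t bits;
  std::memcpy(&bits, &x, sizeof bits);  // reinterpret the IEEE 754 bit pattern
  // Negative values have the sign bit set; flipping the other 31 bits makes
  // larger magnitudes sort lower, and -0 sort just below +0.
  return bits < 0 ? (bits ^ 0x7FFFFFFF) : bits;
}

bool LtTotalOrderSketch(float a, float b) {
  return TotalOrderKey(a) < TotalOrderKey(b);
}
```

Unlike the IEEE 754 comparison, which treats -0 and +0 as equal and NaN as unordered, these keys place every value in one chain.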

Element-wise unary functions

XlaBuilder supports these element-wise unary functions:

Abs(operand) Element-wise abs x -> |x|.

Ceil(operand) Element-wise ceil x -> ⌈x⌉.

Cos(operand) Element-wise cosine x -> cos(x).

Exp(operand) Element-wise natural exponential x -> e^x.

Floor(operand) Element-wise floor x -> ⌊x⌋.

Imag(operand) Element-wise imaginary part of a complex (or real) shape. x -> imag(x). If the operand is a floating-point type, returns 0.

IsFinite(operand) Tests whether each element of operand is finite, i.e., is not positive or negative infinity, and is not NaN. Returns an array of PRED values with the same shape as the input, where each element is true if and only if the corresponding input element is finite.

Log(operand) Element-wise natural logarithm x -> ln(x).

LogicalNot(operand) Element-wise logical not x -> !(x).

Logistic(operand) Element-wise logistic function computation x -> logistic(x).

PopulationCount(operand) Computes the number of bits set in each element of operand.

Neg(operand) Element-wise negation x -> -x.

Real(operand) Element-wise real part of a complex (or real) shape. x -> real(x). If the operand is a floating-point type, returns the same value.

Rsqrt(operand) Element-wise reciprocal of the square root x -> 1.0 / sqrt(x).

Sign(operand) Element-wise sign operation x -> sgn(x) where

\[\text{sgn}(x) = \begin{cases} -1 & x < 0\\ -0 & x = -0\\ \text{NaN} & x = \text{NaN}\\ +0 & x = +0\\ 1 & x > 0 \end{cases}\]

using the comparison operator of the element type of operand.

Sqrt(operand) Element-wise square root operation x -> sqrt(x).

Cbrt(operand) Element-wise cubic root operation x -> cbrt(x).

Tanh(operand) Element-wise hyperbolic tangent x -> tanh(x).

Round(operand) Element-wise rounding, ties away from zero.

RoundNearestEven(operand) Element-wise rounding, ties to nearest even.

| Argument | Type | Semantics |
|---|---|---|
| operand | XlaOp | The operand to the function |

The function is applied to each element in the operand array, resulting in an array with the same shape. The operand may be a scalar (rank 0).
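The C++ standard library can be used to check the difference between Round and RoundNearestEven, as well as the signed-zero and NaN cases of Sign. RoundSketch, RoundNearestEvenSketch and SignSketch are hypothetical host-side stand-ins. Note that std::nearbyint rounds ties to even only while the floating-point environment is in its default round-to-nearest mode.

```cpp
#include <cassert>
#include <cmath>
#include <limits>

// Round: ties away from zero, which is what std::round does.
float RoundSketch(float x) { return std::round(x); }

// RoundNearestEven: ties to even. std::nearbyint follows the current rounding
// mode, which is round-to-nearest-even unless the program changes it.
float RoundNearestEvenSketch(float x) { return std::nearbyint(x); }

// Sign as defined by the cases above: NaN, -0 and +0 are returned unchanged.
float SignSketch(float x) {
  if (std::isnan(x) || x == 0.0f) return x;
  return x < 0.0f ? -1.0f : 1.0f;
}
```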

Fft

The XLA FFT operation implements the forward and inverse Fourier transforms for real and complex inputs/outputs. Multidimensional FFTs on up to 3 axes are supported.

See also XlaBuilder::Fft.

| Argument | Type | Semantics |
|---|---|---|
| operand | XlaOp | The array we are Fourier transforming. |
| fft_type | FftType | See the table below. |
| fft_length | ArraySlice<int64> | The time-domain lengths of the axes being transformed. This is needed in particular for IRFFT to right-size the innermost axis, since RFFT(fft_length=[16]) has the same output shape as RFFT(fft_length=[17]). |

| FftType | Semantics |
|---|---|
| FFT | Forward complex-to-complex FFT. Shape is unchanged. |
| IFFT | Inverse complex-to-complex FFT. Shape is unchanged. |
| RFFT | Forward real-to-complex FFT. The shape of the innermost axis is reduced to fft_length[-1] // 2 + 1 if fft_length[-1] is a non-zero value, omitting the reversed conjugate part of the transformed signal beyond the Nyquist frequency. |
| IRFFT | Inverse real-to-complex FFT (i.e. takes complex, returns real). The shape of the innermost axis is expanded to fft_length[-1] if fft_length[-1] is a non-zero value, inferring the part of the transformed signal beyond the Nyquist frequency from the reverse conjugate of the 1 to fft_length[-1] // 2 + 1 entries. |
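The naive real-to-complex DFT below shows why RFFT(fft_length=[16]) and RFFT(fft_length=[17]) share an output shape: it returns only the n / 2 + 1 non-redundant coefficients. NaiveRfft is a hypothetical O(n^2) helper for illustration. It is not how the CPU (Eigen) or GPU (cuFFT) backends compute the transform.

```cpp
#include <cassert>
#include <cmath>
#include <complex>
#include <cstddef>
#include <vector>

// Naive forward real-to-complex DFT: X[k] = sum_t x[t] * e^(-2*pi*i*k*t/n).
// Only k in [0, n / 2] is returned; the remaining coefficients are complex
// conjugates of these and are omitted, as RFFT does.
std::vector<std::complex<double>> NaiveRfft(const std::vector<double>& x) {
  const std::size_t n = x.size();
  const double kPi = std::acos(-1.0);
  std::vector<std::complex<double>> out(n / 2 + 1);
  for (std::size_t k = 0; k < out.size(); ++k)
    for (std::size_t t = 0; t < n; ++t)
      out[k] += x[t] * std::polar(1.0, -2.0 * kPi * double(k * t) / double(n));
  return out;
}
```

Both 16 // 2 + 1 and 17 // 2 + 1 equal 9. That is why IRFFT needs fft_length to recover the original innermost size.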

Multidimensional FFT

When more than 1 fft_length is provided, this is equivalent to applying a cascade of FFT operations to each of the innermost axes. Note that for the real->complex and complex->real cases, the innermost axis transform is (effectively) performed first (RFFT; last for IRFFT), which is why the innermost axis is the one which changes size. The other axis transforms are then complex->complex.

Implementation details

CPU FFT is backed by Eigen's TensorFFT. GPU FFT uses cuFFT.

Gather

The XLA gather operation stitches together several slices (each slice at a potentially different runtime offset) of an input array.

General Semantics

See also XlaBuilder::Gather.

For a more intuitive description, see the "Informal Description" section below.

gather(operand, start_indices, offset_dims, collapsed_slice_dims, slice_sizes, start_index_map)

| Argument | Type | Semantics |
|---|---|---|
| operand | XlaOp | The array we're gathering from. |
| start_indices | XlaOp | Array containing the starting indices of the slices we gather. |
| index_vector_dim | int64 | The dimension in start_indices that "contains" the starting indices. See below for a detailed description. |
| offset_dims | ArraySlice<int64> | The set of dimensions in the output shape that offset into an array sliced from operand. |
| slice_sizes | ArraySlice<int64> | slice_sizes[i] is the bounds for the slice on dimension i. |
| collapsed_slice_dims | ArraySlice<int64> | The set of dimensions in each slice that are collapsed away. These dimensions must have size 1. |
| start_index_map | ArraySlice<int64> | A map that describes how to map indices in start_indices to legal indices into operand. |
| indices_are_sorted | bool | Whether the indices are guaranteed to be sorted by the caller. |
| unique_indices | bool | Whether the indices are guaranteed to be unique by the caller. |

For convenience, we label the dimensions in the output array that are not in offset_dims as batch_dims.

The output is an array of rank batch_dims.size + offset_dims.size.

operand.rank must equal the sum of offset_dims.size and collapsed_slice_dims.size. Also, slice_sizes.size has to be equal to operand.rank.

If index_vector_dim is equal to start_indices.rank, we implicitly consider start_indices to have a trailing 1 dimension (i.e. if start_indices was of shape [6,7] and index_vector_dim is 2, then we implicitly consider the shape of start_indices to be [6,7,1]).

The bounds for the output array along dimension i are computed as follows:

1. If i is present in batch_dims (i.e. is equal to batch_dims[k] for some k), then we pick the corresponding dimension bounds out of start_indices.shape, skipping index_vector_dim (i.e. pick start_indices.shape.dims[k] if k < index_vector_dim and start_indices.shape.dims[k+1] otherwise).
2. If i is present in offset_dims (i.e. equal to offset_dims[k] for some k), then we pick the corresponding bound out of slice_sizes after accounting for collapsed_slice_dims (i.e. we pick adjusted_slice_sizes[k] where adjusted_slice_sizes is slice_sizes with the bounds at indices collapsed_slice_dims removed).

Formally, the operand index In corresponding to a given output index Out is calculated as follows:

1. Let G = { Out[k] for k in batch_dims }. Use G to slice out a vector S such that S[i] = start_indices[Combine(G, i)], where Combine(A, b) inserts b at position index_vector_dim into A. Note that this is well defined even if G is empty: if G is empty then S = start_indices.
2. Create a starting index, S_in, into operand using S by scattering S using start_index_map. More precisely:
   - S_in[start_index_map[k]] = S[k] if k < start_index_map.size.
   - S_in[_] = 0 otherwise.
3. Create an index O_in into operand by scattering the indices at the offset dimensions in Out according to the collapsed_slice_dims set. More precisely:
   - O_in[remapped_offset_dims(k)] = Out[offset_dims[k]] if k < offset_dims.size (remapped_offset_dims is defined below).
   - O_in[_] = 0 otherwise.
4. In is O_in + S_in, where + is element-wise addition.

remapped_offset_dims is a monotonic function with domain [0, offset_dims.size) and range [0, operand.rank) \ collapsed_slice_dims. So if, for example, offset_dims.size is 4, operand.rank is 6 and collapsed_slice_dims is {0, 2}, then remapped_offset_dims is {0→1, 1→3, 2→4, 3→5}.
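remapped_offset_dims can be computed by listing, in increasing order, the operand dimensions that are not in collapsed_slice_dims. The sketch below returns that list, so entry k is the image of k. RemappedOffsetDims is a hypothetical helper.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <vector>

// Returns remapped_offset_dims as a vector: result[k] is the k-th operand
// dimension (in increasing order) that is not listed in collapsed_slice_dims.
std::vector<std::int64_t> RemappedOffsetDims(
    std::int64_t operand_rank,
    const std::vector<std::int64_t>& collapsed_slice_dims) {
  std::vector<std::int64_t> result;
  for (std::int64_t d = 0; d < operand_rank; ++d)
    if (std::find(collapsed_slice_dims.begin(), collapsed_slice_dims.end(), d) ==
        collapsed_slice_dims.end())
      result.push_back(d);
  return result;
}
```

For operand.rank 6 and collapsed_slice_dims {0, 2}, this yields {1, 3, 4, 5}, i.e. {0→1, 1→3, 2→4, 3→5}.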

If indices_are_sorted is set to true, then XLA can assume that start_indices are sorted (in ascending start_index_map order) by the user. If they are not, then the semantics are implementation defined.

If unique_indices is set to true, then XLA can assume that all elements scattered to are unique, so XLA could use non-atomic operations. If unique_indices is set to true and the indices being scattered to are not unique, then the semantics are implementation defined.

Informal Description and Examples

Informally, every index Out in the output array corresponds to an element E in the operand array, computed as follows:

1. We use the batch dimensions in Out to look up a starting index from start_indices.
2. We use start_index_map to map the starting index (whose size may be less than operand.rank) to a "full" starting index into the operand.
3. We dynamic-slice out a slice with size slice_sizes using the full starting index.
4. We reshape the slice by collapsing the collapsed_slice_dims dimensions. Since all collapsed slice dimensions must have a bound of 1, this reshape is always legal.
5. We use the offset dimensions in Out to index into this slice to get the input element, E, corresponding to output index Out.

index_vector_dim is set to start_indices.rank - 1 in all of the examples that follow. More interesting values for index_vector_dim do not change the operation fundamentally, but make the visual representation more cumbersome.

To get an intuition for how all of the above fits together, let's look at an example that gathers 5 slices of shape [8,6] from a [16,11] array. The position of a slice in the [16,11] array can be represented as an index vector of shape S64[2], so the set of 5 positions can be represented as an S64[5,2] array.

The behavior of the gather operation can then be depicted as an index transformation that takes [G,O0,O1], an index in the output shape, and maps it to an element in the input array in the following way:

We first select an (X,Y) vector from the gather indices array using G. The element in the output array at index [G,O0,O1] is then the element in the input array at index [X+O0,Y+O1].

slice_sizes is [8,6], which decides the range of O0 and O1, and this in turn decides the bounds of the slice.

This gather operation acts as a batch dynamic slice with G as the batch dimension.
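The [G,O0,O1] -> [X+O0,Y+O1] mapping can be sketched on the host as a batched dynamic slice. GatherSlices2D is a hypothetical helper and only covers a narrow case:

- the operand is rank 2;
- start_indices is a flattened [num_slices x 2] array of (X, Y) pairs;
- the offset dimensions are O0 and O1;
- there are no collapsed dimensions.

It does no clamping, so it assumes every slice is already in bounds.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical reference for the gather described above.
// operand: row-major, with `cols` columns. start_indices: (X, Y) pairs.
// output[G][O0][O1] = operand[X + O0][Y + O1], with (X, Y) = start_indices[G].
std::vector<float> GatherSlices2D(const std::vector<float>& operand,
                                  std::size_t cols,
                                  const std::vector<std::size_t>& start_indices,
                                  std::size_t slice_rows, std::size_t slice_cols) {
  const std::size_t num_slices = start_indices.size() / 2;
  std::vector<float> out;
  out.reserve(num_slices * slice_rows * slice_cols);
  for (std::size_t g = 0; g < num_slices; ++g) {       // batch dimension G
    const std::size_t x = start_indices[2 * g];
    const std::size_t y = start_indices[2 * g + 1];
    for (std::size_t o0 = 0; o0 < slice_rows; ++o0)    // offset dimension O0
      for (std::size_t o1 = 0; o1 < slice_cols; ++o1)  // offset dimension O1
        out.push_back(operand[(x + o0) * cols + (y + o1)]);
  }
  return out;
}
```

With slice_rows = 1, each slice covers a single row. In that case the flat output is identical to the one obtained by collapsing that size-1 dimension.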

The gather indices may be multidimensional. For instance, a more general version of the example above using a "gather indices" array of shape [4,5,2] would translate indices like this:

Again, this acts as a batch dynamic slice with G0 and G1 as the batch dimensions. The slice size is still [8,6].

The gather operation in XLA generalizes the informal semantics outlined above in the following ways:

1. We can configure which dimensions in the output shape are the offset dimensions (dimensions containing O0, O1 in the last example). The output batch dimensions (dimensions containing G0, G1 in the last example) are defined to be the output dimensions that are not offset dimensions.
2. The number of output offset dimensions explicitly present in the output shape may be smaller than the input rank. These "missing" dimensions, which are listed explicitly as collapsed_slice_dims, must have a slice size of 1. Since they have a slice size of 1, the only valid index for them is 0, and eliding them does not introduce ambiguity.
3. The slice extracted from the "Gather Indices" array ((X, Y) in the last example) may have fewer elements than the input array rank, and an explicit mapping dictates how the index should be expanded to have the same rank as the input.

As a final example, we use (2) and (3) to implement tf.gather_nd:

G0 and G1 are used to slice out a starting index from the gather indices array as usual, except that the starting index has only one element, X. Similarly, there is only one output offset index, with the value O0. However, before being used as indices into the input array, these are expanded in accordance with "Gather Index Mapping" (start_index_map in the formal description) and "Offset Mapping" (remapped_offset_dims in the formal description) into [X,0] and [0,O0] respectively, adding up to [X,O0]. In other words, the output index [G0,G1,O0] maps to the input index [GatherIndices[G0,G1,0],O0], which gives us the semantics for tf.gather_nd.

slice_sizes for this case is [1,11]. Intuitively, this means that every index X in the gather indices array picks an entire row, and the result is the concatenation of all these rows.

GetDimensionSize

See also XlaBuilder::GetDimensionSize.

Returns the size of the given dimension of the operand. The operand must be array shaped.

GetDimensionSize(operand, dimension)

| Argument | Type | Semantics |
|---|---|---|
| operand | XlaOp | n dimensional input array |
| dimension | int64 | A value in the interval [0, n) that specifies the dimension |

SetDimensionSize

See also XlaBuilder::SetDimensionSize.

Sets the dynamic size of the given dimension of an XlaOp. The operand must be array shaped.

SetDimensionSize(operand, size, dimension)

| Argument | Type | Semantics |
|---|---|---|
| operand | XlaOp | n dimensional input array. |
| size | XlaOp | int32 representing the runtime dynamic size. |
| dimension | int64 | A value in the interval [0, n) that specifies the dimension. |

Passes the operand through as the result, with the dynamic dimension tracked by the compiler.

Padded values will be ignored by downstream reduction ops.

```
let v: f32[10] = f32[10]{1, 2, 3, 4, 5, 6, 7, 8, 9, 10};
let five: s32 = 5;
let six: s32 = 6;

// Setting dynamic dimension size doesn't change the upper bound of the static
// shape.
let padded_v_five: f32[10] = set_dimension_size(v, five, /*dimension=*/0);
let padded_v_six: f32[10] = set_dimension_size(v, six, /*dimension=*/0);

// sum == 1 + 2 + 3 + 4 + 5
let sum:f32[] = reduce_sum(padded_v_five);
// product == 1 * 2 * 3 * 4 * 5
let product:f32[] = reduce_product(padded_v_five);

// Changing padding size will yield different result.
// sum == 1 + 2 + 3 + 4 + 5 + 6
let sum:f32[] = reduce_sum(padded_v_six);
```
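The padded-reduction behavior can be modeled on the host by keeping the full static buffer alongside a dynamic size. The sketch is hypothetical and models only a single rank-1 dimension. DynamicVector, SetDimensionSizeSketch, ReduceSum and ReduceProduct are illustrative names, not the compiler's actual mechanism.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical model of a dynamically sized rank-1 array: the static buffer
// keeps its full upper-bound size, and dynamic_size counts the valid prefix.
struct DynamicVector {
  std::vector<float> buffer;
  std::size_t dynamic_size;
};

// Passes the data through unchanged and only records the dynamic size.
DynamicVector SetDimensionSizeSketch(const std::vector<float>& v, std::size_t size) {
  assert(size <= v.size());
  return {v, size};
}

// Reductions only visit the valid prefix; the padded tail is ignored.
float ReduceSum(const DynamicVector& v) {
  float sum = 0.0f;
  for (std::size_t i = 0; i < v.dynamic_size; ++i) sum += v.buffer[i];
  return sum;
}

float ReduceProduct(const DynamicVector& v) {
  float product = 1.0f;
  for (std::size_t i = 0; i < v.dynamic_size; ++i) product *= v.buffer[i];
  return product;
}
```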

GetTupleElement

See also XlaBuilder::GetTupleElement.

Indexes into a tuple with a compile-time-constant value.

The value must be a compile-time constant so that shape inference can determine the type of the resulting value.

This is analogous to std::get<int N>(t) in C++. Conceptually:

```
let v: f32[10] = f32[10]{0, 1, 2, 3, 4, 5, 6, 7, 8, 9};
let s: s32 = 5;
let t: (f32[10], s32) = tuple(v, s);

let element_1: s32 = gettupleelement(t, 1);  // Inferred shape matches s32.
```

See also tf.tuple.

Infeed

See also XlaBuilder::Infeed.

Infeed(shape)

| Argument | Type | Semantics |
|---|---|---|
| shape | Shape | Shape of the data read from the Infeed interface. The layout field of the shape must be set to match the layout of the data sent to the device; otherwise its behavior is undefined. |

Reads a single data item from the device's implicit Infeed streaming interface, interprets the data as the given shape and its layout, and returns an XlaOp of the data. Multiple Infeed operations are allowed in a computation, but there must be a total order among the Infeed operations. For example, the two Infeeds in the code below have a total order since there is a dependency between the while loops.

```
result1 = while (condition, init = init_value) {
  Infeed(shape)
}

result2 = while (condition, init = result1) {
  Infeed(shape)
}
```

Nested tuple shapes are not supported. For an empty tuple shape, the Infeed operation is effectively a no-op and proceeds without reading any data from the device's Infeed.

Iota

See also XlaBuilder::Iota.

Iota(shape, iota_dimension)

Builds a constant literal on device rather than a potentially large host transfer. Creates an array that has the specified shape and holds values starting at zero and incrementing by one along the specified dimension. For floating-point types, the produced array is equivalent to ConvertElementType(Iota(...)) where the Iota is of integral type and the conversion is to the floating-point type.

| Argument | Type | Semantics |
|---|---|---|
| shape | Shape | Shape of the array created by Iota() |
| iota_dimension | int64 | The dimension to increment along. |

For example, Iota(s32[4, 8], 0) returns

```
[[0, 0, 0, 0, 0, 0, 0, 0 ],
 [1, 1, 1, 1, 1, 1, 1, 1 ],
 [2, 2, 2, 2, 2, 2, 2, 2 ],
 [3, 3, 3, 3, 3, 3, 3, 3 ]]
```

Iota(s32[4, 8], 1) returns

```
[[0, 1, 2, 3, 4, 5, 6, 7 ],
 [0, 1, 2, 3, 4, 5, 6, 7 ],
 [0, 1, 2, 3, 4, 5, 6, 7 ],
 [0, 1, 2, 3, 4, 5, 6, 7 ]]
```
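Here is a reference for the rank-2 case, assuming row-major storage. Iota2D is a hypothetical helper. iota_dimension selects whether each element stores its row index (0) or its column index (1).

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Reference Iota for an s32 [rows x cols] array stored row-major: each element
// holds its own index along iota_dimension (0 = rows, 1 = columns).
std::vector<std::int32_t> Iota2D(std::size_t rows, std::size_t cols,
                                 int iota_dimension) {
  assert(iota_dimension == 0 || iota_dimension == 1);
  std::vector<std::int32_t> out(rows * cols);
  for (std::size_t r = 0; r < rows; ++r)
    for (std::size_t c = 0; c < cols; ++c)
      out[r * cols + c] = static_cast<std::int32_t>(iota_dimension == 0 ? r : c);
  return out;
}
```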

Map

See also XlaBuilder::Map.

Map(operands..., computation)

| Argument | Type | Semantics |
|---|---|---|
| operands | sequence of N XlaOps | N arrays of types T0..T{N-1} |
| computation | XlaComputation | computation of type T_0, T_1, .., T_{N + M -1} -> S with N parameters of type T and M of arbitrary type |
| dimensions | int64 array | array of map dimensions |

Applies a scalar function over the given operands arrays, producing an array of the same dimensions where each element is the result of the mapped function applied to the corresponding elements in the input arrays.

The mapped function is an arbitrary computation with the restriction that it has N inputs of scalar type T and a single output of type S. The output has the same dimensions as the operands, except that the element type T is replaced with S.

For example: Map(op1, op2, op3, computation, par1) maps elem_out <- computation(elem1, elem2, elem3, par1) at each (multi-dimensional) index in the input arrays to produce the output array.
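A two-operand version of Map can be sketched with a C++ callable standing in for the XlaComputation. Map2 is a hypothetical helper over flattened arrays. Extra parameters such as par1 are modeled by capturing them in the lambda. The result element type S is whatever the callable returns.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical two-operand Map: applies a scalar function element-wise over
// two arrays with the same dimensions (flattened here). The output element
// type S is deduced from the function's return type and may differ from T.
template <typename T, typename F>
auto Map2(const std::vector<T>& a, const std::vector<T>& b, F computation)
    -> std::vector<decltype(computation(a[0], b[0]))> {
  assert(a.size() == b.size());
  std::vector<decltype(computation(a[0], b[0]))> out;
  out.reserve(a.size());
  for (std::size_t i = 0; i < a.size(); ++i) out.push_back(computation(a[i], b[i]));
  return out;
}
```

Mapping a comparison such as x > y over f32 arrays produces a bool (PRED-like) array, which shows T being replaced by S.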

OptimizationBarrier

Blocks any optimization pass from moving computations across the barrier.

Ensures that all inputs are evaluated before any operators that depend on the barrier's outputs.

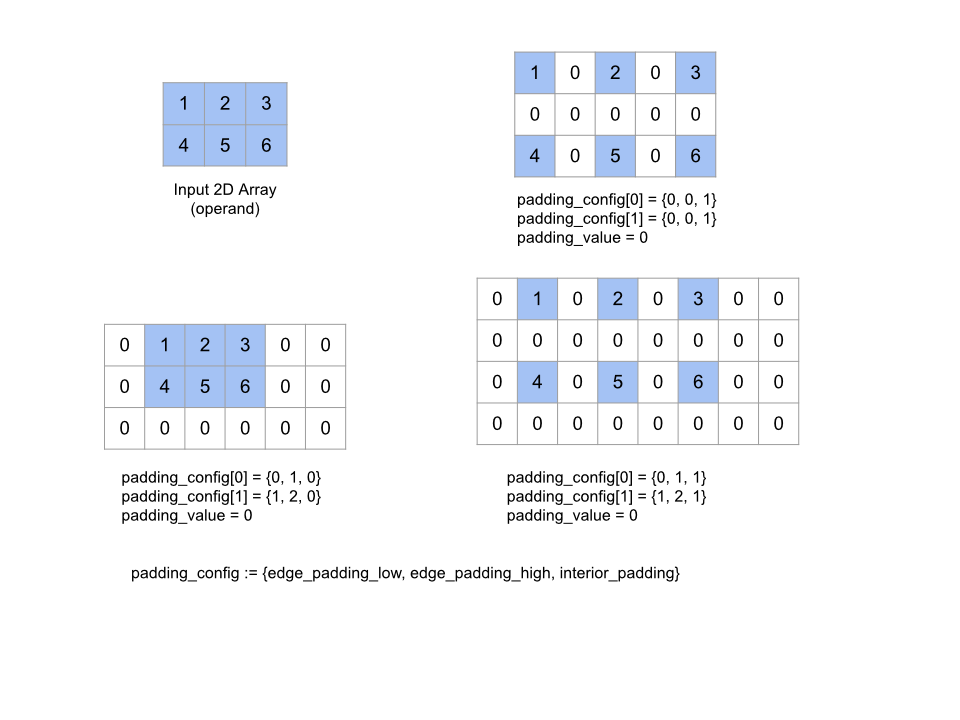

Bantalan

Lihat juga

XlaBuilder::Pad.

Pad(operand, padding_value, padding_config)

| Argumen | Jenis | Semantik |

|---|---|---|

operand |

XlaOp |

array jenis T |

padding_value |

XlaOp |

skalar jenis T untuk mengisi padding yang ditambahkan |

padding_config |

PaddingConfig |

jumlah padding di kedua tepi (rendah, tinggi) dan di antara elemen pada setiap dimensi |

Expands the given operand array with the given padding_value, adding padding around the array as well as between its elements. padding_config specifies the amount of edge padding and interior padding for each dimension.

PaddingConfig is a repeated field of PaddingConfigDimension. Each entry holds three fields for one dimension: edge_padding_low, edge_padding_high, and interior_padding.

edge_padding_low and edge_padding_high specify the amount of padding added at the low end (next to index 0) and the high end (next to the highest index) of each dimension, respectively. The amount of edge padding can be negative. In that case, the absolute value of the negative padding is the number of elements to remove from the specified dimension.

interior_padding specifies the amount of padding added between any two elements in each dimension; it may not be negative. Interior padding occurs logically before edge padding. As a result, negative edge padding removes elements from the interior-padded operand.

This operation is a no-op if the edge padding pairs are all (0, 0) and the interior padding values are all 0. The figure below shows examples of different edge_padding and interior_padding values for a two-dimensional array.

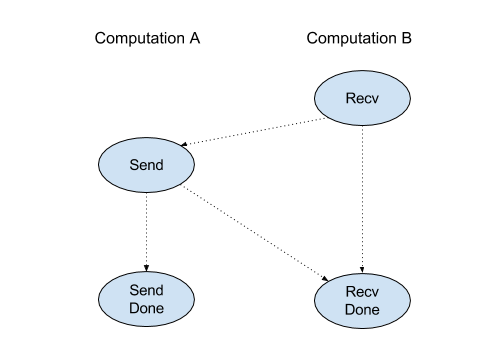

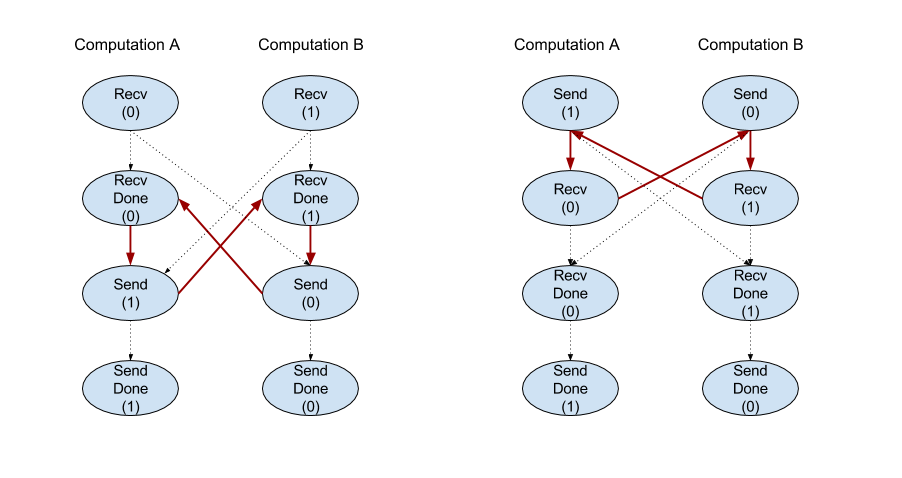
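Interior padding and possibly negative edge padding interact in a way that is easiest to see in one dimension. The sketch below is hypothetical: `pad_1d` handles a single dimension of a plain Python list. Its parameter names mirror the three PaddingConfigDimension fields but are illustrative only.

```python
def pad_1d(operand, padding_value, edge_padding_low, edge_padding_high, interior_padding):
    """One-dimensional model of Pad on a Python list."""
    if interior_padding < 0:
        raise ValueError("interior_padding must be non-negative")
    # 1. Interior padding is applied first, between every pair of neighbors.
    padded = []
    for i, x in enumerate(operand):
        if i > 0:
            padded.extend([padding_value] * interior_padding)
        padded.append(x)
    # 2. Edge padding: positive values add elements, negative values remove
    #    elements from the already interior-padded list.
    if edge_padding_low >= 0:
        padded = [padding_value] * edge_padding_low + padded
    else:
        padded = padded[-edge_padding_low:]
    if edge_padding_high >= 0:
        padded = padded + [padding_value] * edge_padding_high
    else:
        padded = padded[:len(padded) + edge_padding_high]
    return padded


print(pad_1d([1, 2, 3], 0, 1, 2, 1))   # [0, 1, 0, 2, 0, 3, 0, 0]
print(pad_1d([1, 2, 3], 0, -1, 0, 1))  # [0, 2, 0, 3]
print(pad_1d([1, 2, 3], 0, 0, 0, 0))   # [1, 2, 3] (no-op)
```

In the second call, the interior-padded list is [1, 0, 2, 0, 3], and the negative low padding drops its first element. The resulting length agrees with low + high + n + (n - 1) * interior = -1 + 0 + 3 + 2 = 4.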

Recv

See also XlaBuilder::Recv.

Recv(shape, channel_handle)

| Argument | Type | Semantics |
|---|---|---|
| shape | Shape | shape of the data to receive |
| channel_handle | ChannelHandle | unique identifier for each send/recv pair |

Receives data of the given shape from a Send instruction in another computation that shares the same channel handle. Returns an XlaOp for the received data.

The client API of the Recv operation represents synchronous communication. Internally, however, the instruction is decomposed into 2 HLO instructions (Recv and RecvDone) to enable asynchronous data transfers. See also HloInstruction::CreateRecv and HloInstruction::CreateRecvDone.

Recv(const Shape& shape, int64 channel_id)

Allocates the resources required to receive data from a Send instruction with the same channel_id. Returns a context for the allocated resources. A following RecvDone instruction uses this context to wait for the data transfer to complete. The context is a tuple of {receive buffer (shape), request identifier (U32)}, and only a RecvDone instruction can use it.

RecvDone(HloInstruction context)

Given a context created by a Recv instruction, waits for the data transfer to complete and returns the received data.
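The split into a non-blocking start (Recv) and a blocking completion (RecvDone) can be modeled with a thread-safe queue. This is only a conceptual sketch, and several parts are assumptions:

- `send`, `recv`, `recv_done`, and `RecvContext` are hypothetical names.
- A single-slot `queue.Queue` per channel id stands in for the real transfer.
- Only 1-D shapes are checked.

```python
import itertools
import queue
import threading
from typing import NamedTuple

# Hypothetical model: one single-slot queue per channel id stands in for the
# transfer between a Send and a Recv that share that channel.
_channels: dict = {}
_channels_lock = threading.Lock()
_request_ids = itertools.count()


class RecvContext(NamedTuple):
    buffer: queue.Queue  # where the transferred data will arrive
    shape: tuple         # expected shape (only 1-D is modeled)
    request_id: int      # stands in for the U32 request identifier


def _channel(channel_id: int) -> queue.Queue:
    with _channels_lock:
        return _channels.setdefault(channel_id, queue.Queue(maxsize=1))


def send(data: list, channel_id: int) -> None:
    _channel(channel_id).put(data)


def recv(shape: tuple, channel_id: int) -> RecvContext:
    """Like the Recv HLO: allocate resources and return a context without waiting."""
    return RecvContext(_channel(channel_id), shape, next(_request_ids))


def recv_done(context: RecvContext) -> list:
    """Like RecvDone: block until the transfer completes, then return the data."""
    data = context.buffer.get()
    if len(data) != context.shape[0]:
        raise ValueError("received data does not match the expected shape")
    return data


ctx = recv((3,), channel_id=7)  # returns immediately
threading.Thread(target=send, args=([1, 2, 3], 7)).start()
print(recv_done(ctx))  # waits for the sender, then prints [1, 2, 3]
```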

Reduce

See also XlaBuilder::Reduce.

Applies a reduction function to one or more arrays in parallel.

Reduce(operands..., init_values..., computation, dimensions)

| Argument | Type | Semantics |
|---|---|---|
| operands | sequence of N XlaOps | N arrays of types T_0, ..., T_{N-1}. |
| init_values | sequence of N XlaOps | N scalars of types T_0, ..., T_{N-1}. |
| computation | XlaComputation | computation of type T_0, ..., T_{N-1}, T_0, ..., T_{N-1} -> Collate(T_0, ..., T_{N-1}). |
| dimensions | int64 array | unordered array of dimensions to reduce. |

Where:

- N is required to be greater than or equal to 1.
- The computation has to be "roughly" associative (see below).
- All input arrays must have the same dimensions.
- All initial values have to form an identity under computation.
- If N = 1, Collate(T) is T.
- If N > 1, Collate(T_0, ..., T_{N-1}) is a tuple of N elements of type T.

This operation reduces one or more dimensions of each input array into scalars. The rank of each returned array is rank(operand) - len(dimensions). The output of the op is Collate(Q_0, ..., Q_{N-1}), where Q_i is an array of type T_i whose dimensions are described below.

Different backends are allowed to reassociate the reduction computation. This can lead to numerical differences, because some reduction functions, such as addition, are not associative for floats. However, if the range of the data is limited, floating-point addition is close enough to associative for most practical uses.

Examples

Consider reducing across one dimension of a single 1D array with values [10, 11, 12, 13], using the reduction function f (this is computation). The result can be computed as

f(10, f(11, f(12, f(init_value, 13))))

but there are also many other possibilities, e.g.

f(init_value, f(f(10, f(init_value, 11)), f(f(init_value, 12), f(init_value, 13))))
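A backend may pick either kind of order, and the choice can change floating-point results. The sketch below uses the hypothetical helpers `left_fold` and `tree_fold` to evaluate the same addition in a sequential order and in a pairwise order. With integers the two orders agree, while with floats they can differ in the last bit.

```python
from functools import reduce
from operator import add


def left_fold(f, init_value, xs):
    """One strictly sequential order: f(...f(f(init_value, x0), x1)..., xn)."""
    return reduce(f, xs, init_value)


def tree_fold(f, init_value, xs):
    """A pairwise order that, like the second expansion above, applies
    init_value more than once; correct only because init_value is an
    identity of f."""
    if not xs:
        return init_value
    if len(xs) == 1:
        return f(init_value, xs[0])
    mid = len(xs) // 2
    return f(tree_fold(f, init_value, xs[:mid]), tree_fold(f, init_value, xs[mid:]))


print(left_fold(add, 0, [10, 11, 12, 13]), tree_fold(add, 0, [10, 11, 12, 13]))  # 46 46
print(left_fold(add, 0.0, [0.1, 0.2, 0.3]))  # 0.6000000000000001
print(tree_fold(add, 0.0, [0.1, 0.2, 0.3]))  # 0.6
```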

Here is a rough pseudo-code example of how reduction could be implemented, using summation as the reduction computation with an initial value of 0.

result_shape <- remove all dims in dimensions from operand_shape

# Iterate over all elements in result_shape. The number of r's here is equal
# to the rank of the result
for r0 in range(result_shape[0]), r1 in range(result_shape[1]), ...:
  # Initialize this result element
  result[r0, r1...] <- 0

  # Iterate over all the reduction dimensions
  for d0 in range(operand_shape[dimensions[0]]), d1 in range(operand_shape[dimensions[1]]), ...:
    # Increment the result element with the value of the operand's element.
    # The index of the operand's element is constructed from all ri's and di's
    # in the right order (by construction ri's and di's together index over the
    # whole operand shape).
    result[r0, r1...] += operand[ri... di]

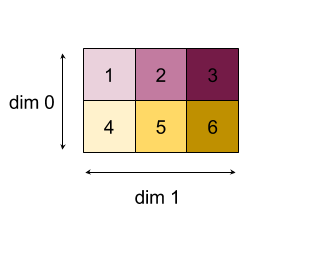

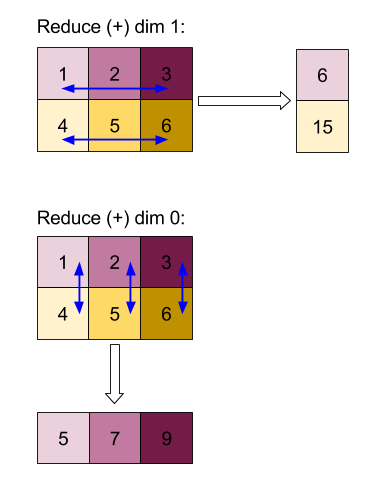

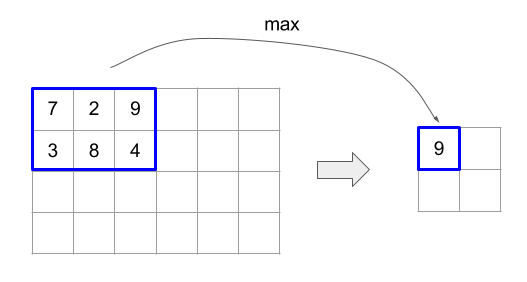

Here is an example of reducing a 2D array (matrix). The shape has rank 2, with dimension 0 of size 2 and dimension 1 of size 3:

Results of reducing dimension 0 or 1 with an "add" function:

Note that both reduction results are 1D arrays. The diagram shows one as a column and the other as a row only for visual convenience.

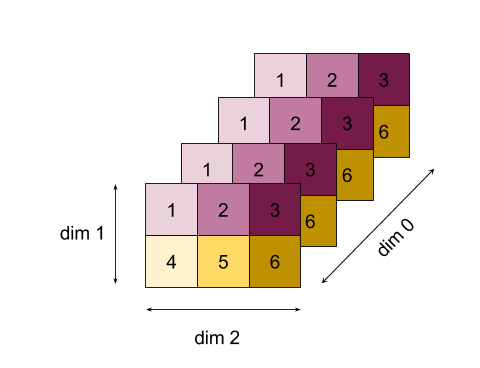

For a more complex example, here is a 3D array. Its rank is 3, with dimension 0 of size 4, dimension 1 of size 2, and dimension 2 of size 3. For simplicity, the values 1 to 6 are replicated across dimension 0.

As in the 2D example, we can reduce just one dimension. For example, reducing dimension 0 gives a rank-2 array in which all values across dimension 0 are folded into a scalar:

| 4 8 12 |
| 16 20 24 |

If we reduce dimension 2, we also get a rank-2 array in which all values across dimension 2 are folded into a scalar:

| 6 15 |
| 6 15 |
| 6 15 |
| 6 15 |

Note that the relative order of the remaining input dimensions is preserved in the output, but some dimensions may be renumbered (since the rank changes).

We can also reduce multiple dimensions. Add-reducing dimensions 0 and 1 produces the 1D array [20, 28, 36].

Reducing the 3D array over all its dimensions produces the scalar 84.
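The pseudo-code above, generalized to any computation, can be made runnable in plain Python to check the 3D results in this section. This sketch makes several assumptions:

- Arrays are rectangular nested lists, and there is a single operand.
- `reduce_dims`, `shape_of`, and `element_at` are hypothetical helpers.
- The reduction order is sequential, which is only one of the orders a backend may choose.

```python
import itertools
from operator import add


def shape_of(array):
    """Shape of a rectangular nested list, e.g. [[1, 2, 3], [4, 5, 6]] -> (2, 3)."""
    shape = []
    while isinstance(array, list):
        shape.append(len(array))
        array = array[0] if array else None
    return tuple(shape)


def element_at(array, index):
    for i in index:
        array = array[i]
    return array


def reduce_dims(operand, init_value, computation, dimensions):
    """Sketch of single-operand Reduce over nested lists (sequential order)."""
    shape = shape_of(operand)
    reduced = sorted(set(dimensions))
    kept = [d for d in range(len(shape)) if d not in reduced]

    def build(result_index):
        if len(result_index) < len(kept):
            # Still walking the result shape: recurse one kept dimension deeper.
            size = shape[kept[len(result_index)]]
            return [build(result_index + [i]) for i in range(size)]
        acc = init_value
        for reduced_index in itertools.product(*(range(shape[d]) for d in reduced)):
            # Merge result indices (kept dims) and reduction indices into one index.
            full_index = [0] * len(shape)
            for d, i in zip(kept, result_index):
                full_index[d] = i
            for d, i in zip(reduced, reduced_index):
                full_index[d] = i
            acc = computation(acc, element_at(operand, full_index))
        return acc

    return build([])


# The 4x2x3 array from the text: values 1..6 replicated across dimension 0.
array_3d = [[[1, 2, 3], [4, 5, 6]] for _ in range(4)]
print(reduce_dims(array_3d, 0, add, [0]))        # [[4, 8, 12], [16, 20, 24]]
print(reduce_dims(array_3d, 0, add, [2]))        # [[6, 15], [6, 15], [6, 15], [6, 15]]
print(reduce_dims(array_3d, 0, add, [0, 1]))     # [20, 28, 36]
print(reduce_dims(array_3d, 0, add, [0, 1, 2]))  # 84
```

The kept dimensions retain their relative order in the result, which is why reducing dimension 2 yields a 4x2 array.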

Variadic Reduce

When N > 1, applying the reduce function is slightly more complex, because it is applied to all inputs simultaneously. The operands are supplied to the computation in the following order:

- Running reduced value for the first operand
- ...
- Running reduced value for the N'th operand
- Input value for the first operand
- ...
- Input value for the N'th operand

For example, consider the following reduction function, which can be used to compute the max and the argmax of a 1-D array in parallel:

f: (Float, Int, Float, Int) -> Float, Int
f(max, argmax, value, index):
  if value >= max:
    return (value, index)
  else:
    return (max, argmax)

For 1-D input arrays V = Float[N] and K = Int[N], and init values I_V = Float and I_K = Int, the result f_(N-1) of reducing across the only input dimension is equivalent to the following recursive application:

f_0 = f(I_V, I_K, V_0, K_0)
f_1 = f(f_0.first, f_0.second, V_1, K_1)
...
f_(N-1) = f(f_(N-2).first, f_(N-2).second, V_(N-1), K_(N-1))

Applying this reduction to an array of values and an array of sequential indices (i.e. iota) co-iterates over the arrays and returns a tuple containing the maximal value and the matching index.
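A small Python sketch can check the argument ordering for N = 2. `variadic_reduce_1d` is a hypothetical helper that folds sequentially, though a backend may use another order. The init values `-inf` and `-1` are assumptions, chosen so that any real value replaces them.

```python
import math


def max_argmax(running_max, running_argmax, value, index):
    """The reduction function f from the text: (Float, Int, Float, Int) -> (Float, Int)."""
    if value >= running_max:
        return (value, index)
    return (running_max, running_argmax)


def variadic_reduce_1d(computation, operands, init_values):
    """Co-iterate N 1-D operands. The computation receives the N running
    values first, then the N input values, as described above."""
    running = tuple(init_values)
    for inputs in zip(*operands):
        running = computation(*running, *inputs)
    return running


values = [3.0, 7.0, 1.0, 5.0]
indices = list(range(len(values)))  # plays the role of iota
print(variadic_reduce_1d(max_argmax, [values, indices], [-math.inf, -1]))  # (7.0, 1)
```

Because the comparison is `>=`, ties resolve to the later index under this left-to-right order.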

ReducePrecision

See also XlaBuilder::ReducePrecision.

Models the effect of converting floating-point values to a lower-precision format (such as IEEE-FP16) and back to the original format. The number of exponent and mantissa bits in the lower-precision format can be specified arbitrarily, although not all bit sizes may be supported on all hardware implementations.
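The sketch below roughly models the mantissa part of this operation. It rounds a Python float to a given number of mantissa bits using round-half-to-even. It then compares the result with a genuine IEEE binary16 round trip through Python's `struct` format character `'e'`. This is a simplification under stated assumptions:

- `round_mantissa` and `fp16_round_trip` are hypothetical helpers.
- Exponent range (overflow, underflow, subnormals) is not modeled, so the two agree only for values that are normal in binary16.

```python
import math
import struct


def round_mantissa(x: float, mantissa_bits: int) -> float:
    """Round x to `mantissa_bits` explicit mantissa bits (round half to even).

    Simplification: exponent_bits are ignored, so overflow to infinity,
    underflow, and subnormals are not modeled."""
    if x == 0.0 or math.isinf(x) or math.isnan(x):
        return x
    m, e = math.frexp(x)                # x == m * 2**e with 0.5 <= |m| < 1
    scale = 2.0 ** (mantissa_bits + 1)  # +1 accounts for the implicit leading bit
    return math.ldexp(round(m * scale) / scale, e)  # round() is half-to-even


def fp16_round_trip(x: float) -> float:
    """Reference: a real IEEE binary16 round trip via the struct 'e' format."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


for x in (0.1, 1.0 / 3.0, 2049.0):
    print(x, round_mantissa(x, 10), fp16_round_trip(x))
```

With 10 mantissa bits (as in FP16), 2049.0 lies exactly halfway between the representable neighbors 2048.0 and 2050.0. It rounds to 2048.0, the neighbor with an even mantissa.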

ReducePrecision(operand, mantissa_bits, exponent_bits)

| Argument | Type | Semantics |

|---|---|---|

operand |

XlaOp |

array of floating-point type T. |

exponent_bits |

int32 |