View on TensorFlow.org View on TensorFlow.org

|

Run in Google Colab Run in Google Colab

|

View source on GitHub View source on GitHub

|

Download notebook Download notebook

|

This tutorial will demonstrate the recommended best practice for training models with user-level Differential Privacy using Tensorflow Federated. We will use the DP-SGD algorithm of Abadi et al., "Deep Learning with Differential Privacy" modified for user-level DP in a federated context in McMahan et al., "Learning Differentially Private Recurrent Language Models".

Differential Privacy (DP) is a widely used method for bounding and quantifying the privacy leakage of sensitive data when performing learning tasks. Training a model with user-level DP guarantees that the model is unlikely to learn anything significant about the data of any individual, but can still (hopefully!) learn patterns that exist in the data of many clients.

We will train a model on the federated EMNIST dataset. There is an inherent trade-off between utility and privacy, and it may be difficult to train a model with high privacy that performs as well as a state-of-the-art non-private model. For expediency in this tutorial, we will train for just 100 rounds, sacrificing some quality in order to demonstrate how to train with high privacy. If we used more training rounds, we could certainly have a somewhat higher-accuracy private model, but not as high as a model trained without DP.

Before we begin

First, let us make sure the notebook is connected to a backend that has the relevant components compiled.

# @test {"skip": true}pip install --quiet --upgrade dp-accountingpip install --quiet --upgrade tensorflow-federated

Some imports we will need for the tutorial. We will use tensorflow_federated, the open-source framework for machine learning and other computations on decentralized data, as well as dp_accounting, an open-source library for analyzing differentially private algorithms.

import collections

import dp_accounting

import numpy as np

import pandas as pd

import tensorflow as tf

import tensorflow_federated as tff

Run the following "Hello World" example to make sure the TFF environment is correctly setup. If it doesn't work, please refer to the Installation guide for instructions.

@tff.tensorflow_computation

def hello_world():

return 'Hello, World!'

hello_world()

b'Hello, World!'

Download and preprocess the federated EMNIST dataset.

def get_emnist_dataset():

emnist_train, emnist_test = tff.simulation.datasets.emnist.load_data(

only_digits=True

)

def element_fn(element):

return collections.OrderedDict(

x=tf.expand_dims(element['pixels'], -1), y=element['label']

)

def preprocess_train_dataset(dataset):

# Use buffer_size same as the maximum client dataset size,

# 418 for Federated EMNIST

return (

dataset.map(element_fn)

.shuffle(buffer_size=418)

.repeat(1)

.batch(32, drop_remainder=False)

)

def preprocess_test_dataset(dataset):

return dataset.map(element_fn).batch(128, drop_remainder=False)

emnist_train = emnist_train.preprocess(preprocess_train_dataset)

emnist_test = preprocess_test_dataset(

emnist_test.create_tf_dataset_from_all_clients()

)

return emnist_train, emnist_test

train_data, test_data = get_emnist_dataset()

Define our model.

def my_model_fn():

model = tf.keras.models.Sequential([

tf.keras.layers.Reshape(input_shape=(28, 28, 1), target_shape=(28 * 28,)),

tf.keras.layers.Dense(200, activation=tf.nn.relu),

tf.keras.layers.Dense(200, activation=tf.nn.relu),

tf.keras.layers.Dense(10),

])

return tff.learning.models.from_keras_model(

keras_model=model,

loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),

input_spec=test_data.element_spec,

metrics=[tf.keras.metrics.SparseCategoricalAccuracy()],

)

Determine the noise sensitivity of the model.

To get user-level DP guarantees, we must change the basic Federated Averaging algorithm in two ways. First, the clients' model updates must be clipped before transmission to the server, bounding the maximum influence of any one client. Second, the server must add enough noise to the sum of user updates before averaging to obscure the worst-case client influence.

For clipping, we use the adaptive clipping method of Andrew et al. 2021, Differentially Private Learning with Adaptive Clipping, so no clipping norm needs to be explicitly set.

Adding noise will in general degrade the utility of the model, but we can control the amount of noise in the average update at each round with two knobs: the standard deviation of the Gaussian noise added to the sum, and the number of clients in the average. Our strategy will be to first determine how much noise the model can tolerate with a relatively small number of clients per round with acceptable loss to model utility. Then to train the final model, we can increase the amount of noise in the sum, while proportionally scaling up the number of clients per round (assuming the dataset is large enough to support that many clients per round). This is unlikely to significantly affect model quality, since the only effect is to decrease variance due to client sampling (indeed we will verify that it does not in our case).

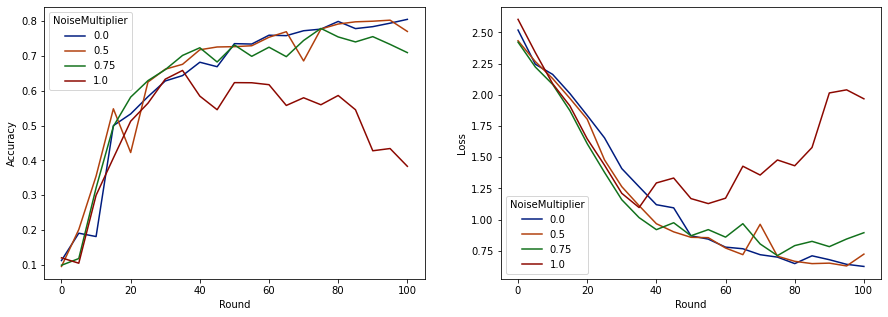

To that end, we first train a series of models with 50 clients per round, with increasing amounts of noise. Specifically, we increase the "noise_multiplier" which is the ratio of the noise standard deviation to the clipping norm. Since we are using adaptive clipping, this means that the actual magnitude of the noise changes from round to round.

total_clients = len(train_data.client_ids)

def train(rounds, noise_multiplier, clients_per_round, data_frame):

# Using the `dp_aggregator` here turns on differential privacy with adaptive

# clipping.

aggregation_factory = tff.learning.model_update_aggregator.dp_aggregator(

noise_multiplier, clients_per_round

)

# We use Poisson subsampling which gives slightly tighter privacy guarantees

# compared to having a fixed number of clients per round. The actual number of

# clients per round is stochastic with mean clients_per_round.

sampling_prob = clients_per_round / total_clients

# Build a federated averaging process.

# Typically a non-adaptive server optimizer is used because the noise in the

# updates can cause the second moment accumulators to become very large

# prematurely.

learning_process = tff.learning.algorithms.build_unweighted_fed_avg(

my_model_fn,

client_optimizer_fn=tff.learning.optimizers.build_sgdm(0.01),

server_optimizer_fn=tff.learning.optimizers.build_sgdm(1.0, momentum=0.9),

model_aggregator=aggregation_factory,

)

eval_process = tff.learning.build_federated_evaluation(my_model_fn)

# Training loop.

state = learning_process.initialize()

for round in range(rounds):

if round % 5 == 0:

model_weights = learning_process.get_model_weights(state)

metrics = eval_process(model_weights, [test_data])['eval']

if round < 25 or round % 25 == 0:

print(f'Round {round:3d}: {metrics}')

data_frame = data_frame.append(

{'Round': round, 'NoiseMultiplier': noise_multiplier, **metrics},

ignore_index=True,

)

# Sample clients for a round. Note that if your dataset is large and

# sampling_prob is small, it would be faster to use gap sampling.

x = np.random.uniform(size=total_clients)

sampled_clients = [

train_data.client_ids[i]

for i in range(total_clients)

if x[i] < sampling_prob

]

sampled_train_data = [

train_data.create_tf_dataset_for_client(client)

for client in sampled_clients

]

# Use selected clients for update.

result = learning_process.next(state, sampled_train_data)

state = result.state

metrics = result.metrics

model_weights = learning_process.get_model_weights(state)

metrics = eval_process(model_weights, [test_data])['eval']

print(f'Round {rounds:3d}: {metrics}')

data_frame = data_frame.append(

{'Round': rounds, 'NoiseMultiplier': noise_multiplier, **metrics},

ignore_index=True,

)

return data_frame

data_frame = pd.DataFrame()

rounds = 100

clients_per_round = 50

for noise_multiplier in [0.0, 0.5, 0.75, 1.0]:

print(f'Starting training with noise multiplier: {noise_multiplier}')

data_frame = train(rounds, noise_multiplier, clients_per_round, data_frame)

print()

Starting training with noise multiplier: 0.0

Round 0: OrderedDict([('sparse_categorical_accuracy', 0.112289384), ('loss', 2.5190482)])

Round 5: OrderedDict([('sparse_categorical_accuracy', 0.19075724), ('loss', 2.2449977)])

Round 10: OrderedDict([('sparse_categorical_accuracy', 0.18115693), ('loss', 2.163907)])

Round 15: OrderedDict([('sparse_categorical_accuracy', 0.49970612), ('loss', 2.01017)])

Round 20: OrderedDict([('sparse_categorical_accuracy', 0.5333317), ('loss', 1.8350543)])

Round 25: OrderedDict([('sparse_categorical_accuracy', 0.5828517), ('loss', 1.6551636)])

Round 50: OrderedDict([('sparse_categorical_accuracy', 0.7352077), ('loss', 0.8700141)])

Round 75: OrderedDict([('sparse_categorical_accuracy', 0.7769152), ('loss', 0.6992781)])

Round 100: OrderedDict([('sparse_categorical_accuracy', 0.8049814), ('loss', 0.62453026)])

Starting training with noise multiplier: 0.5

Round 0: OrderedDict([('sparse_categorical_accuracy', 0.09526841), ('loss', 2.4332638)])

Round 5: OrderedDict([('sparse_categorical_accuracy', 0.20128821), ('loss', 2.2664592)])

Round 10: OrderedDict([('sparse_categorical_accuracy', 0.35472178), ('loss', 2.130336)])

Round 15: OrderedDict([('sparse_categorical_accuracy', 0.5480995), ('loss', 1.9713942)])

Round 20: OrderedDict([('sparse_categorical_accuracy', 0.42246276), ('loss', 1.8045483)])

Round 25: OrderedDict([('sparse_categorical_accuracy', 0.624902), ('loss', 1.4785467)])

Round 50: OrderedDict([('sparse_categorical_accuracy', 0.7265625), ('loss', 0.85801566)])

Round 75: OrderedDict([('sparse_categorical_accuracy', 0.77720904), ('loss', 0.70615387)])

Round 100: OrderedDict([('sparse_categorical_accuracy', 0.7702537), ('loss', 0.72331005)])

Starting training with noise multiplier: 0.75

Round 0: OrderedDict([('sparse_categorical_accuracy', 0.098672606), ('loss', 2.422002)])

Round 5: OrderedDict([('sparse_categorical_accuracy', 0.11794671), ('loss', 2.2227976)])

Round 10: OrderedDict([('sparse_categorical_accuracy', 0.3208513), ('loss', 2.083766)])

Round 15: OrderedDict([('sparse_categorical_accuracy', 0.49752644), ('loss', 1.8728142)])

Round 20: OrderedDict([('sparse_categorical_accuracy', 0.5816761), ('loss', 1.6084186)])

Round 25: OrderedDict([('sparse_categorical_accuracy', 0.62896746), ('loss', 1.378527)])

Round 50: OrderedDict([('sparse_categorical_accuracy', 0.73153406), ('loss', 0.8705139)])

Round 75: OrderedDict([('sparse_categorical_accuracy', 0.7789724), ('loss', 0.7113147)])

Round 100: OrderedDict([('sparse_categorical_accuracy', 0.70944357), ('loss', 0.89495045)])

Starting training with noise multiplier: 1.0

Round 0: OrderedDict([('sparse_categorical_accuracy', 0.12002841), ('loss', 2.60482)])

Round 5: OrderedDict([('sparse_categorical_accuracy', 0.104574844), ('loss', 2.3388205)])

Round 10: OrderedDict([('sparse_categorical_accuracy', 0.29966694), ('loss', 2.089262)])

Round 15: OrderedDict([('sparse_categorical_accuracy', 0.4067398), ('loss', 1.9109797)])

Round 20: OrderedDict([('sparse_categorical_accuracy', 0.5123677), ('loss', 1.6472703)])

Round 25: OrderedDict([('sparse_categorical_accuracy', 0.56416535), ('loss', 1.4362282)])

Round 50: OrderedDict([('sparse_categorical_accuracy', 0.62323666), ('loss', 1.1682972)])

Round 75: OrderedDict([('sparse_categorical_accuracy', 0.55968356), ('loss', 1.4779186)])

Round 100: OrderedDict([('sparse_categorical_accuracy', 0.382837), ('loss', 1.9680436)])

Now we can visualize the evaluation set accuracy and loss of those runs.

import matplotlib.pyplot as plt

import seaborn as sns

def make_plot(data_frame):

plt.figure(figsize=(15, 5))

dff = data_frame.rename(

columns={'sparse_categorical_accuracy': 'Accuracy', 'loss': 'Loss'}

)

plt.subplot(121)

sns.lineplot(

data=dff, x='Round', y='Accuracy', hue='NoiseMultiplier', palette='dark'

)

plt.subplot(122)

sns.lineplot(

data=dff, x='Round', y='Loss', hue='NoiseMultiplier', palette='dark'

)

make_plot(data_frame)

It appears that with 50 expected clients per round, this model can tolerate a noise multiplier of up to 0.5 without degrading model quality. A noise multiplier of 0.75 seems to cause a bit of model degradation, and 1.0 makes the model diverge.

There is typically a tradeoff between model quality and privacy. The higher noise we use, the more privacy we can get for the same amount of training time and number of clients. Conversely, with less noise, we may have a more accurate model, but we'll have to train with more clients per round to reach our target privacy level.

With the experiment above, we might decide that the small amount of model deterioration at 0.75 is acceptable in order to train the final model faster, but let's assume we want to match the performance of the 0.5 noise-multiplier model.

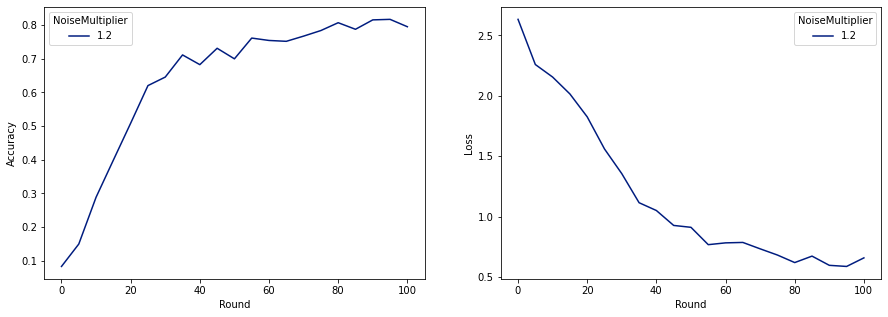

Now we can use dp_accounting functions to determine how many expected clients per round we would need to get acceptable privacy. Standard practice is to choose delta somewhat smaller than one over the number of records in the dataset. This dataset has 3383 total training users, so let's aim for (2, 1e-5)-DP.

We use dp_accounting.calibrate_dp_mechanism to search over the number of clients per round. The privacy accountant (RdpAccountant) we use to estimate privacy given a dp_accounting.DpEvent is based on Wang et al. (2018) and Mironov et al. (2019).

total_clients = 3383

noise_to_clients_ratio = 0.01

target_delta = 1e-5

target_eps = 2

# Initialize arguments to dp_accounting.calibrate_dp_mechanism.

# No-arg callable that returns a fresh accountant.

make_fresh_accountant = dp_accounting.rdp.RdpAccountant

# Create function that takes expected clients per round and returns a

# dp_accounting.DpEvent representing the full training process.

def make_event_from_param(clients_per_round):

q = clients_per_round / total_clients

noise_multiplier = clients_per_round * noise_to_clients_ratio

gaussian_event = dp_accounting.GaussianDpEvent(noise_multiplier)

sampled_event = dp_accounting.PoissonSampledDpEvent(q, gaussian_event)

composed_event = dp_accounting.SelfComposedDpEvent(sampled_event, rounds)

return composed_event

# Create object representing the search range [1, 3383].

bracket_interval = dp_accounting.ExplicitBracketInterval(1, total_clients)

# Perform search for smallest clients_per_round achieving the target privacy.

clients_per_round = dp_accounting.calibrate_dp_mechanism(

make_fresh_accountant,

make_event_from_param,

target_eps,

target_delta,

bracket_interval,

discrete=True,

)

noise_multiplier = clients_per_round * noise_to_clients_ratio

print(

f'To get ({target_eps}, {target_delta})-DP, use {clients_per_round} '

f'clients with noise multiplier {noise_multiplier}.'

)

To get (2, 1e-05)-DP, use 120 clients with noise multiplier 1.2.

Now we can train our final private model for release.

rounds = 100

noise_multiplier = 1.2

clients_per_round = 120

data_frame = pd.DataFrame()

data_frame = train(rounds, noise_multiplier, clients_per_round, data_frame)

make_plot(data_frame)

Round 0: OrderedDict([('sparse_categorical_accuracy', 0.08260678), ('loss', 2.6334999)])

Round 5: OrderedDict([('sparse_categorical_accuracy', 0.1492212), ('loss', 2.259542)])

Round 10: OrderedDict([('sparse_categorical_accuracy', 0.28847474), ('loss', 2.155699)])

Round 15: OrderedDict([('sparse_categorical_accuracy', 0.3989518), ('loss', 2.0156953)])

Round 20: OrderedDict([('sparse_categorical_accuracy', 0.5086697), ('loss', 1.8261365)])

Round 25: OrderedDict([('sparse_categorical_accuracy', 0.6204692), ('loss', 1.5602393)])

Round 50: OrderedDict([('sparse_categorical_accuracy', 0.70008814), ('loss', 0.91155165)])

Round 75: OrderedDict([('sparse_categorical_accuracy', 0.78421336), ('loss', 0.6820159)])

Round 100: OrderedDict([('sparse_categorical_accuracy', 0.7955525), ('loss', 0.6585961)])

As we can see, the final model has similar loss and accuracy to the model trained without noise, but this one satisfies (2, 1e-5)-DP.