View source on GitHub


Lattice layer.

```python
tfl.layers.Lattice(
    lattice_sizes,
    units=1,
    monotonicities=None,
    unimodalities=None,
    edgeworth_trusts=None,
    trapezoid_trusts=None,
    monotonic_dominances=None,
    range_dominances=None,
    joint_monotonicities=None,
    joint_unimodalities=None,
    output_min=None,
    output_max=None,
    num_projection_iterations=10,
    monotonic_at_every_step=True,
    clip_inputs=True,
    interpolation='hypercube',
    kernel_initializer='random_uniform_or_linear_initializer',
    kernel_regularizer=None,
    **kwargs
)
```


The layer performs interpolation using `units` d-dimensional lattices, each with an arbitrary number of keypoints per dimension. Trainable weights are associated with the lattice vertices. Each input to this layer is treated as a d-dimensional point within the lattice. If the point coincides with one of the lattice vertices, the interpolation result for that point equals the weight associated with that vertex. Otherwise, all surrounding vertices contribute to the interpolation result, with nearer vertices contributing more.
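To make the interpolation rule concrete, here is a minimal plain-Python sketch of multilinear ("hypercube") interpolation on a single lattice. The dict-of-vertices weight layout and the name `hypercube_interpolate` are illustrative assumptions; the layer itself keeps its weights in `kernel` and evaluates them with its own implementation.

```python
import itertools
import math


def hypercube_interpolate(weights, lattice_sizes, point):
    """Multilinear ("hypercube") interpolation on one lattice (illustrative sketch).

    weights: dict mapping each vertex tuple, e.g. (1, 2), to its weight.
    lattice_sizes: vertices per dimension, e.g. [2, 3].
    point: coordinates, with point[i] assumed to lie in [0, lattice_sizes[i] - 1].
    """
    # Lower corner of the cell containing the point; capped at size - 2 so a
    # point on the upper boundary still falls in a valid cell.
    lower = [min(int(math.floor(x)), size - 2) for x, size in zip(point, lattice_sizes)]
    frac = [x - lo for x, lo in zip(point, lower)]
    result = 0.0
    # Each of the 2^d cell corners gets a product of per-dimension coefficients;
    # these coefficients are non-negative and sum to 1.
    for offsets in itertools.product((0, 1), repeat=len(lattice_sizes)):
        vertex = tuple(lo + o for lo, o in zip(lower, offsets))
        coeff = 1.0
        for f, o in zip(frac, offsets):
            coeff *= f if o == 1 else 1.0 - f
        result += coeff * weights[vertex]
    return result


# A 2 x 3 lattice whose weights happen to follow the linear function 10*i + j.
weights = {(i, j): 10.0 * i + j for i in range(2) for j in range(3)}
print(hypercube_interpolate(weights, [2, 3], (1.0, 1.0)))  # on a vertex: 11.0
print(hypercube_interpolate(weights, [2, 3], (0.5, 1.5)))  # between vertices: 6.5
```

Because the corner coefficients are non-negative and sum to 1, the output is always a weighted average of the surrounding vertex weights, so it cannot leave the range spanned by those weights.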

For example, `lattice_sizes=[2, 3]` produces the following lattice:

```
o---o---o
|   |   |
o---o---o
```

The first coordinate of the input must be within [0, 1] and the second within [0, 2]. With `clip_inputs=True` (the default), coordinates outside this range are clipped into it.
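The valid input range follows directly from `lattice_sizes`: coordinate i lives in [0, lattice_sizes[i] - 1]. The sketch below computes those ranges and clips a point into them. The helper names are hypothetical, and the code only mirrors the documented behavior; it is not the layer's implementation.

```python
def lattice_input_ranges(lattice_sizes):
    """Valid (low, high) interval for each input coordinate: [0, size - 1]."""
    return [(0.0, float(size - 1)) for size in lattice_sizes]


def clip_to_lattice(point, lattice_sizes):
    """Clip each coordinate into its valid interval (mirrors clip_inputs=True)."""
    ranges = lattice_input_ranges(lattice_sizes)
    return [min(max(x, low), high) for x, (low, high) in zip(point, ranges)]


print(lattice_input_ranges([2, 3]))          # [(0.0, 1.0), (0.0, 2.0)]
print(clip_to_lattice([1.7, -0.4], [2, 3]))  # [1.0, 0.0]
```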

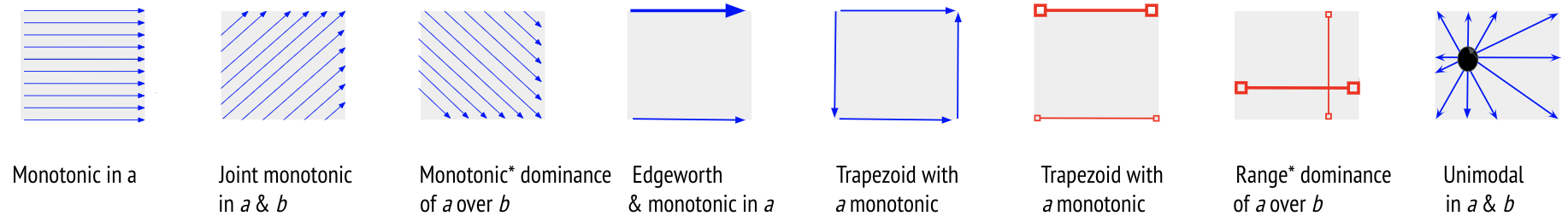

There are several types of constraints on the shape of the learned function; each is either one- or two-dimensional:

- Monotonicity: constrains the function to be increasing in the corresponding dimension. To achieve decreasing monotonicity, either pass the inputs through a `tfl.layers.PWLCalibration` with decreasing monotonicity, or manually reverse the inputs as `lattice_size - 1 - inputs`.

- Unimodality: constrains the function to be unimodal in that dimension, with the minimum at the center lattice vertex of that dimension. A single dimension cannot be constrained to be both monotonic and unimodal. Unimodal dimensions must have at least 3 lattice vertices.

- Edgeworth Trust: constrains the function to be more responsive to a main feature as a secondary conditional feature increases or decreases. For example, we may want the model to rely more on average rating (main feature) when the number of reviews (conditional feature) is high. In particular, the constraint guarantees that a given change in the main feature's value will change the model output by more when a secondary feature indicates higher trust in the main feature. Note that the constraint only works when the model is monotonic in the main feature.

- Trapezoid Trust: conceptually similar to edgeworth trust, but this constraint guarantees that the range of possible outputs along the main feature dimension, when a conditional feature indicates low trust, is a subset of the range of outputs when a conditional feature indicates high trust. When lattices have 2 vertices in each constrained dimension, this implies edgeworth trust (which only constrains the size of the relevant ranges). With more than 2 lattice vertices per dimension, the two constraints diverge and are not necessarily 'weaker' or 'stronger' than each other - edgeworth trust acts throughout the lattice interior on delta shifts in the main feature, while trapezoid trust only acts on the min and max extremes of the main feature, constraining the overall range of outputs across the domain of the main feature. The two types of trust constraints can be applied jointly.

- Monotonic Dominance: constrains the function to require the effect (slope) in the direction of the dominant dimension to be greater than that of the weak dimension for any point in the lattice. Both dominant and weak dimensions must be monotonic. Note that this constraint might not be strictly satisfied at the end of training. In such cases, increase the number of projection iterations.

- Range Dominance: constrains the function to require the range of possible outputs to be greater when one varies the dominant dimension than when one varies the weak dimension, for any point. Both dominant and weak dimensions must be monotonic. Note that this constraint might not be strictly satisfied at the end of training. In such cases, increase the number of projection iterations.

- Joint Monotonicity: constrains the function to be monotonic along a diagonal direction of a two-dimensional subspace when all other dimensions are fixed. For example, if our function scores the profit given A hotel guests and B hotel beds, it may be wrong to constrain the profit to be increasing in either hotel guests or hotel beds independently, but along the diagonal (+1 guest and +1 bed) the profit should be monotonic. Note that this constraint might not be strictly satisfied at the end of training. In such cases, increase the number of projection iterations.
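Several of these shape constraints reduce to linear inequalities between neighbouring vertex weights; for multilinear interpolation, checking adjacent vertices is enough to make the whole interpolated function monotonic in that dimension. The sketch below checks increasing monotonicity and one cell-by-cell formulation of Edgeworth trust. The helper names, the dict-of-vertices layout, and this exact trust formulation are assumptions for illustration; the layer's own check is `assert_constraints`.

```python
import itertools


def _step(vertex, dim):
    """Vertex one step further along `dim`."""
    return vertex[:dim] + (vertex[dim] + 1,) + vertex[dim + 1:]


def is_increasing(weights, lattice_sizes, dim, eps=1e-6):
    """True if every pair of adjacent vertices along `dim` is non-decreasing."""
    for v in itertools.product(*(range(s) for s in lattice_sizes)):
        if v[dim] + 1 < lattice_sizes[dim] and weights[_step(v, dim)] < weights[v] - eps:
            return False
    return True


def has_edgeworth_trust(weights, lattice_sizes, main, cond, direction="positive", eps=1e-6):
    """True if the slope along `main` grows (or shrinks) as `cond` increases.

    Assumed formulation: in every cell, the main-feature difference at the
    higher conditional vertex is compared with the one at the lower vertex.
    """
    for v in itertools.product(*(range(s) for s in lattice_sizes)):
        if v[main] + 1 >= lattice_sizes[main] or v[cond] + 1 >= lattice_sizes[cond]:
            continue
        up = _step(v, cond)
        slope_low = weights[_step(v, main)] - weights[v]
        slope_high = weights[_step(up, main)] - weights[up]
        if direction == "positive" and slope_high < slope_low - eps:
            return False
        if direction == "negative" and slope_high > slope_low + eps:
            return False
    return True


# 2 x 2 lattice: dimension 0 is the main feature, dimension 1 the conditional one.
w = {(0, 0): 0.0, (1, 0): 1.0, (0, 1): 0.0, (1, 1): 3.0}
print(is_increasing(w, [2, 2], dim=0))                 # True
print(has_edgeworth_trust(w, [2, 2], main=0, cond=1))  # True: slope 3 >= slope 1
```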

Upper and lower bounds on the output can be imposed with `output_max` and `output_min`.

All units share the same layer configuration, but each has its own separate set of trainable parameters.
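Since each unit owns a full set of vertex weights, a layer with `units` outputs carries units × prod(lattice_sizes) lattice weights, assuming the vertex weights are its only trainable parameters; for the `lattice_sizes` in this page's example that is 576 per unit. The sketch below uses a hypothetical `init_unit_tables` helper (not the layer's `kernel_initializer`) to build per-unit tables, and also shows why keeping vertex weights inside [output_min, output_max] bounds the output: interpolation takes a convex combination of them.

```python
import math
import random


def init_unit_tables(lattice_sizes, units, output_min, output_max, seed=0):
    """Hypothetical initializer: one independent weight table per unit.

    All tables share lattice_sizes, but each unit draws its own values,
    kept inside [output_min, output_max].
    """
    rng = random.Random(seed)
    num_vertices = math.prod(lattice_sizes)
    return [[rng.uniform(output_min, output_max) for _ in range(num_vertices)]
            for _ in range(units)]


tables = init_unit_tables([2, 2, 3, 4, 2, 2, 3], units=3, output_min=0.0, output_max=1.0)
print(len(tables), len(tables[0]))  # 3 576
print(sum(len(t) for t in tables))  # 1728

# Interpolation forms a convex combination of vertex weights, so any such
# combination of in-range weights stays in range.
rng = random.Random(1)
coeffs = [rng.random() for _ in tables[0]]
total = sum(coeffs)
output = sum(c / total * w for c, w in zip(coeffs, tables[0]))
print(0.0 <= output <= 1.0)  # True
```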

| Input shape | |
|---|---|
| A typical shape is: | |

| Output shape | |
|---|---|
| Tensor of shape: `(batch_size, ..., units)` | |

Example:

```python
import tensorflow_lattice as tfl

lattice = tfl.layers.Lattice(
    # Number of vertices along each dimension.
    lattice_sizes=[2, 2, 3, 4, 2, 2, 3],
    # You can specify monotonicity constraints.
    monotonicities=['increasing', 'none', 'increasing', 'increasing',
                    'increasing', 'increasing', 'increasing'],
    # You can specify trust constraints between pairs of features. Here we
    # constrain the function to be more responsive to a main feature (index 4)
    # as a secondary conditional feature (index 3) increases (positive
    # direction).
    edgeworth_trusts=(4, 3, 'positive'),
    # Output can be bounded.
    output_min=0.0,
    output_max=1.0)
```
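Several arguments interact: per-dimension lists must match `lattice_sizes` in length, a dimension cannot be both monotonic and unimodal, unimodal dimensions need at least 3 vertices, and trust constraints assume a monotonic main feature. The hypothetical helper below checks those documented rules before the layer is constructed. It is not part of TF Lattice, and it makes no claim about exactly which of these cases the layer itself reports with `ValueError`.

```python
def check_lattice_config(lattice_sizes, monotonicities=None, unimodalities=None,
                         edgeworth_trusts=None):
    """Hypothetical pre-flight check of rules stated on this page.

    Returns a list of problem descriptions; an empty list means none were found.
    """
    d = len(lattice_sizes)
    problems = []
    if any(size < 2 for size in lattice_sizes):
        problems.append("every lattice size must be at least 2")
    monotonicities = monotonicities or ["none"] * d
    unimodalities = unimodalities or ["none"] * d
    if len(monotonicities) != d or len(unimodalities) != d:
        return problems + ["monotonicities/unimodalities must match len(lattice_sizes)"]
    is_mono = [m in ("increasing", 1) for m in monotonicities]
    is_uni = [u in ("valley", "peak", 1, -1) for u in unimodalities]
    for i in range(d):
        if is_mono[i] and is_uni[i]:
            problems.append(f"dimension {i} cannot be both monotonic and unimodal")
        if is_uni[i] and lattice_sizes[i] < 3:
            problems.append(f"unimodal dimension {i} needs at least 3 vertices")
    trusts = edgeworth_trusts or []
    if trusts and not isinstance(trusts[0], (tuple, list)):
        trusts = [trusts]  # a single (main, conditional, direction) triple
    for main, _conditional, _direction in trusts:
        if not is_mono[main]:
            problems.append(f"trust main feature {main} should be monotonic")
    return problems


print(check_lattice_config(
    [2, 2, 3, 4, 2, 2, 3],
    monotonicities=['increasing', 'none', 'increasing', 'increasing',
                    'increasing', 'increasing', 'increasing'],
    edgeworth_trusts=(4, 3, 'positive')))  # []
```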

| Args | |
|---|---|
| `lattice_sizes` | List or tuple of length d of integers representing the number of lattice vertices per dimension (minimum is 2). The second dimension of the input shape must match the number of elements in `lattice_sizes`. |
| `units` | Output dimension of the layer. See class comments for details. |
| `monotonicities` | None or list or tuple of the same length as `lattice_sizes` of {'none', 'increasing', 0, 1}, specifying whether the model output should be monotonic in the corresponding feature: 'increasing' or 1 indicates increasing monotonicity, and 'none' or 0 indicates no monotonicity constraint. |
| `unimodalities` | None or list or tuple of the same length as `lattice_sizes` of {'none', 'valley', 'peak', 0, 1, -1}, specifying whether the model output should be unimodal in the corresponding feature: 'valley' or 1 indicates that the function first decreases then increases, 'peak' or -1 indicates that the function first increases then decreases, and 'none' or 0 indicates no unimodality constraint. |
| `edgeworth_trusts` | None or three-element tuple or iterable of three-element tuples. The first element is the index of the main (monotonic) feature. The second element is the index of the conditional feature. The third element is the direction of trust: 'positive' or 1 if higher values of the conditional feature should increase trust in the main feature, and 'negative' or -1 otherwise. |
| `trapezoid_trusts` | None or three-element tuple or iterable of three-element tuples. The first element is the index of the main (monotonic) feature. The second element is the index of the conditional feature. The third element is the direction of trust: 'positive' or 1 if higher values of the conditional feature should increase trust in the main feature, and 'negative' or -1 otherwise. |
| `monotonic_dominances` | None or two-element tuple or iterable of two-element tuples. The first element is the index of the dominant feature. The second element is the index of the weak feature. |
| `range_dominances` | None or two-element tuple or iterable of two-element tuples. The first element is the index of the dominant feature. The second element is the index of the weak feature. |
| `joint_monotonicities` | None or two-element tuple or iterable of two-element tuples, each representing the indices of two features requiring joint monotonicity. |
| `joint_unimodalities` | None or tuple or iterable of tuples. Each tuple contains 2 elements: an iterable of indices of a single group of jointly unimodal features, followed by the string 'valley' or 'peak', using 'valley' to indicate that the function first decreases then increases and 'peak' to indicate that the function first increases then decreases. For example: `([0, 3, 4], 'valley')`. |
| `output_min` | None or lower bound of the output. |
| `output_max` | None or upper bound of the output. |
| `num_projection_iterations` | Number of iterations of Dykstra's projection algorithm. Projection updates will be closer to a true projection (with respect to the L2 norm) with a higher number of iterations, and an infinite number of iterations would yield a perfect projection, but increasing this number has diminishing returns on projection precision. Increasing it might slightly improve convergence at the cost of slightly longer running time. Most likely you want this number to be proportional to the number of lattice vertices in the largest constrained dimension. |
| `monotonic_at_every_step` | Whether to strictly enforce monotonicity and trust constraints after every gradient update by applying a final imprecise projection. Setting this parameter to True together with a small `num_projection_iterations` is likely to hurt convergence. |
| `clip_inputs` | Whether inputs should be clipped to the input range of the lattice. |
| `interpolation` | One of 'hypercube' or 'simplex' interpolation. For a d-dimensional lattice, 'hypercube' interpolates 2^d parameters, whereas 'simplex' uses d+1 parameters and thus scales better. For details see `tfl.lattice_lib.evaluate_with_simplex_interpolation` and `tfl.lattice_lib.evaluate_with_hypercube_interpolation`. |
| `kernel_initializer` | None or one of: |
| `kernel_regularizer` | None or a single element or a list of the following: `('torsion', l1, l2)`, where l1 and l2 represent the corresponding regularization amounts for the graph Torsion regularizer; `('laplacian', l1, l2)`, where l1 and l2 represent the corresponding regularization amounts for the graph Laplacian regularizer. In both cases l1 and l2 can be either single floats or lists of floats to specify a different regularization amount for every dimension. |
| `**kwargs` | Other args passed to the `keras.layers.Layer` initializer. |
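`num_projection_iterations` controls Dykstra's projection algorithm. As a simplified, hypothetical stand-in for the lattice case, the sketch below projects a 1-D weight vector onto the set of non-decreasing vectors (a single monotonic dimension), treating each adjacent-pair inequality as its own half-space. It is an illustration of the technique, not the library's projection code.

```python
def project_pair(x, i):
    """Exact L2 projection onto the half-space {w : w[i] <= w[i + 1]}."""
    x = list(x)
    if x[i] > x[i + 1]:
        # Move both entries to their mean: the closest point on the boundary.
        x[i] = x[i + 1] = (x[i] + x[i + 1]) / 2.0
    return x


def dykstra_increasing(values, num_iterations):
    """Dykstra's algorithm over the constraints w[0] <= w[1] <= ... <= w[-1]."""
    x = [float(v) for v in values]
    # One correction vector per constraint; these distinguish Dykstra's
    # method from plain alternating projections.
    corrections = [[0.0] * len(x) for _ in range(len(x) - 1)]
    for _ in range(num_iterations):
        for i in range(len(corrections)):
            y = [a + b for a, b in zip(x, corrections[i])]
            x = project_pair(y, i)
            corrections[i] = [a - b for a, b in zip(y, x)]
    return x


print(dykstra_increasing([3, 2, 1], 1))                           # [2.5, 1.75, 1.75]
print([round(v, 6) for v in dykstra_increasing([3, 2, 1], 100)])  # [2.0, 2.0, 2.0]
```

After a single pass the first constraint (2.5 <= 1.75) is still violated; with more passes the result settles on the exact projection, here the constant vector [2, 2, 2]. This mirrors the precision trade-off described for `num_projection_iterations` and `monotonic_at_every_step`, although the layer projects full multi-dimensional lattices with many more constraints.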
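To illustrate the 2^d versus d+1 scaling of the `interpolation` options, the sketch below computes the corner weights each scheme uses inside a single unit cell. The simplex variant assumes the common sorted-coordinate (Kuhn) triangulation; consult `tfl.lattice_lib.evaluate_with_simplex_interpolation` for what the library actually does. The function names are hypothetical.

```python
import itertools


def hypercube_corner_weights(frac):
    """Multilinear weights for all 2^d corners of a unit cell."""
    weights = {}
    for corner in itertools.product((0, 1), repeat=len(frac)):
        w = 1.0
        for f, c in zip(frac, corner):
            w *= f if c else 1.0 - f
        weights[corner] = w
    return weights


def simplex_corner_weights(frac):
    """Weights for the d + 1 corners of the simplex containing the point.

    Coordinates are sorted in decreasing order and dimensions are switched on
    one at a time (Kuhn triangulation); consecutive gaps become the weights.
    """
    d = len(frac)
    order = sorted(range(d), key=lambda i: frac[i], reverse=True)
    corner = [0] * d
    weights = {tuple(corner): 1.0 - frac[order[0]]}
    for k, dim in enumerate(order):
        corner[dim] = 1
        next_frac = frac[order[k + 1]] if k + 1 < d else 0.0
        weights[tuple(corner)] = frac[dim] - next_frac
    return weights


def evaluate(corner_weights, f):
    """Interpolated value of a function f given on the cell corners."""
    return sum(w * f(c) for c, w in corner_weights.items())


def linear(c):
    """A linear function of the corner coordinates."""
    return 1.0 + 2.0 * c[0] + 3.0 * c[1]


print(len(hypercube_corner_weights([0.3] * 7)), len(simplex_corner_weights([0.3] * 7)))  # 128 8
# Both schemes reproduce a linear function exactly.
print(evaluate(hypercube_corner_weights([0.25, 0.75]), linear))  # 3.75
print(evaluate(simplex_corner_weights([0.25, 0.75]), linear))    # 3.75
```

The two schemes agree on linear functions but generally differ elsewhere; the simplex scheme touches far fewer vertices per evaluation, which is why it scales better with d.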
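The two `kernel_regularizer` options penalize different kinds of roughness. The sketch below implements plain-Python versions under the usual definitions assumed here: the graph Laplacian penalizes differences between neighbouring vertex weights, and Torsion penalizes the "twist" of each 2x2 face, which is zero when the function is additive in that pair of dimensions. The exact normalization in TF Lattice may differ, and this sketch accepts only single floats for l1 and l2 (not per-dimension lists).

```python
import itertools


def _vertices(lattice_sizes):
    return itertools.product(*(range(s) for s in lattice_sizes))


def _step(v, dim):
    return v[:dim] + (v[dim] + 1,) + v[dim + 1:]


def laplacian_penalty(weights, lattice_sizes, l1=0.0, l2=0.0):
    """Sum of l1*|diff| + l2*diff^2 over all pairs of adjacent vertices."""
    total = 0.0
    for dim in range(len(lattice_sizes)):
        for v in _vertices(lattice_sizes):
            if v[dim] + 1 < lattice_sizes[dim]:
                diff = weights[_step(v, dim)] - weights[v]
                total += l1 * abs(diff) + l2 * diff ** 2
    return total


def torsion_penalty(weights, lattice_sizes, l1=0.0, l2=0.0):
    """Sum of l1*|t| + l2*t^2 over every 2x2 face, t = w00 + w11 - w10 - w01."""
    total = 0.0
    for i, j in itertools.combinations(range(len(lattice_sizes)), 2):
        for v in _vertices(lattice_sizes):
            if v[i] + 1 < lattice_sizes[i] and v[j] + 1 < lattice_sizes[j]:
                vi, vj = _step(v, i), _step(v, j)
                t = weights[v] + weights[_step(vi, j)] - weights[vi] - weights[vj]
                total += l1 * abs(t) + l2 * t ** 2
    return total


additive = {(i, j): float(i + 2 * j) for i in range(2) for j in range(3)}
print(laplacian_penalty(additive, [2, 3], l2=1.0))  # 3 * 1^2 + 4 * 2^2 = 19.0
print(torsion_penalty(additive, [2, 3], l2=1.0))    # 0.0: no interaction between dims
```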

| Raises | |
|---|---|
| `ValueError` | If layer hyperparameters are invalid. |

| Attributes | |
|---|---|
| `kernel` | Weights of the lattice. |
| `activity_regularizer` | Optional regularizer function for the output of this layer. |
| `compute_dtype` | The dtype of the layer's computations. This is equivalent to Layers automatically cast their inputs to the compute dtype, which causes computations and the output to be in the compute dtype as well. This is done by the base Layer class in Layers often perform certain internal computations in higher precision when |
| `dtype` | The dtype of the layer weights. This is equivalent to |
| `dtype_policy` | The dtype policy associated with this layer. This is an instance of a |
| `dynamic` | Whether the layer is dynamic (eager-only); set in the constructor. |
| `input` | Retrieves the input tensor(s) of a layer. Only applicable if the layer has exactly one input, i.e. if it is connected to one incoming layer. |
| `input_spec` | `InputSpec` instance(s) describing the input format for this layer. When you create a layer subclass, you can set Now, if you try to call the layer on an input that isn't rank 4 (for instance, an input of shape Input checks that can be specified via For more information, see |
| `losses` | List of losses added using the `add_loss()` API. Variable regularization tensors are created when this property is accessed, so it is eager safe: accessing |
| `metrics` | List of metrics attached to the layer. |
| `name` | Name of the layer (string), set in the constructor. |
| `name_scope` | Returns a `tf.name_scope` instance for this class. |
| `non_trainable_weights` | List of all non-trainable weights tracked by this layer. Non-trainable weights are not updated during training. They are expected to be updated manually in |
| `output` | Retrieves the output tensor(s) of a layer. Only applicable if the layer has exactly one output, i.e. if it is connected to one incoming layer. |
| `submodules` | Sequence of all sub-modules. Submodules are modules which are properties of this module, or found as properties of modules which are properties of this module (and so on). |
| `supports_masking` | Whether this layer supports computing a mask using `compute_mask`. |
| `trainable` | |
| `trainable_weights` | List of all trainable weights tracked by this layer. Trainable weights are updated via gradient descent during training. |
| `variable_dtype` | Alias of `Layer.dtype`, the dtype of the weights. |
| `weights` | Returns the list of all layer variables/weights. |

Methods

add_loss

add_loss(losses, **kwargs)

Add loss tensor(s), potentially dependent on layer inputs.

Some losses (for instance, activity regularization losses) may be dependent on the inputs passed when calling a layer. Hence, when reusing the same layer on different inputs `a` and `b`, some entries in `layer.losses` may be dependent on `a` and some on `b`. This method automatically keeps track of dependencies.

This method can be used inside a subclassed layer or model's `call` function, in which case `losses` should be a Tensor or list of Tensors.

Example:

```python
import tensorflow as tf

class MyLayer(tf.keras.layers.Layer):
  def call(self, inputs):
    self.add_loss(tf.abs(tf.reduce_mean(inputs)))
    return inputs
```

The same code works in distributed training: the input to `add_loss()` is treated like a regularization loss and averaged across replicas by the training loop (both built-in `Model.fit()` and compliant custom training loops).

The `add_loss` method can also be called directly on a Functional Model during construction. In this case, any loss Tensors passed to this Model must be symbolic and be able to be traced back to the model's `Input`s. These losses become part of the model's topology and are tracked in `get_config`.

Example:

```python
import tensorflow as tf

inputs = tf.keras.Input(shape=(10,))
x = tf.keras.layers.Dense(10)(inputs)
outputs = tf.keras.layers.Dense(1)(x)
model = tf.keras.Model(inputs, outputs)
# Activity regularization.
model.add_loss(tf.abs(tf.reduce_mean(x)))
```

If this is not the case for your loss (if, for example, your loss references a `Variable` of one of the model's layers), you can wrap your loss in a zero-argument lambda. These losses are not tracked as part of the model's topology since they can't be serialized.

Example:

```python
import tensorflow as tf

inputs = tf.keras.Input(shape=(10,))
d = tf.keras.layers.Dense(10)
x = d(inputs)
outputs = tf.keras.layers.Dense(1)(x)
model = tf.keras.Model(inputs, outputs)
# Weight regularization.
model.add_loss(lambda: tf.reduce_mean(d.kernel))
```

| Args | |
|---|---|
| `losses` | Loss tensor, or list/tuple of tensors. Rather than tensors, losses may also be zero-argument callables which create a loss tensor. |
| `**kwargs` | Used for backwards compatibility only. |

assert_constraints

assert_constraints(eps=1e-06)

Asserts that weights satisfy all constraints.

In graph mode, builds and returns a list of assertion ops. In eager mode, directly executes the assertions.

| Args | |
|---|---|
| `eps` | Allowed constraint violation. |

| Returns | |
|---|---|
| List of assertion ops in graph mode, or immediately asserts in eager mode. |

build

build(input_shape)

Standard Keras build() method.

build_from_config

build_from_config(config)

Builds the layer's states with the supplied config dict.

By default, this method calls the `build(config["input_shape"])` method, which creates weights based on the layer's input shape in the supplied config. If your config contains other information needed to load the layer's state, you should override this method.

| Args | |
|---|---|
| `config` | Dict containing the input shape associated with this layer. |

compute_mask

compute_mask(inputs, mask=None)

Computes an output mask tensor.

| Args | |
|---|---|
| `inputs` | Tensor or list of tensors. |
| `mask` | Tensor or list of tensors. |

| Returns | |
|---|---|
| None or a tensor (or list of tensors, one per output tensor of the layer). |

compute_output_shape

compute_output_shape(input_shape)

Standard Keras compute_output_shape() method.

count_params

count_params()

Count the total number of scalars composing the weights.

| Returns | |
|---|---|
| An integer count. |

| Raises | |
|---|---|
| `ValueError` | If the layer isn't yet built (in which case its weights aren't yet defined). |

finalize_constraints

finalize_constraints()

Ensures the layer's weights strictly satisfy constraints.

Applies an approximate projection to strictly satisfy the specified constraints.

If `monotonic_at_every_step == True`, there is no need to call this function.

| Returns | |
|---|---|
| In eager mode, directly updates the weights and returns the variable which stores them. In graph mode, returns an `assign_add` op which has to be executed to update the weights. |

from_config

@classmethod from_config(config)

Creates a layer from its config.

This method is the reverse of `get_config`, capable of instantiating the same layer from the config dictionary. It does not handle layer connectivity (handled by Network), nor weights (handled by `set_weights`).

| Args | |
|---|---|
| `config` | A Python dictionary, typically the output of `get_config`. |

| Returns | |
|---|---|
| A layer instance. |

get_build_config

get_build_config()

Returns a dictionary with the layer's input shape.

This method returns a config dict that can be used by `build_from_config(config)` to create all states (e.g. Variables and Lookup tables) needed by the layer.

By default, the config only contains the input shape that the layer was built with. If you're writing a custom layer that creates state in an unusual way, you should override this method to make sure this state is already created when TF-Keras attempts to load its value upon model loading.

| Returns | |
|---|---|
| A dict containing the input shape associated with the layer. |

get_config

get_config()

Standard Keras config for serialization.

get_weights

get_weights()

Returns the current weights of the layer, as NumPy arrays.

The weights of a layer represent the state of the layer. This function returns both trainable and non-trainable weight values associated with this layer as a list of NumPy arrays, which can in turn be used to load state into similarly parameterized layers.

For example, a `Dense` layer returns a list of two values: the kernel matrix and the bias vector. These can be used to set the weights of another `Dense` layer:

```python
>>> import tensorflow as tf
>>> layer_a = tf.keras.layers.Dense(1,
...   kernel_initializer=tf.constant_initializer(1.))
>>> a_out = layer_a(tf.convert_to_tensor([[1., 2., 3.]]))
>>> layer_a.get_weights()
[array([[1.],
       [1.],
       [1.]], dtype=float32), array([0.], dtype=float32)]
>>> layer_b = tf.keras.layers.Dense(1,
...   kernel_initializer=tf.constant_initializer(2.))
>>> b_out = layer_b(tf.convert_to_tensor([[10., 20., 30.]]))
>>> layer_b.get_weights()
[array([[2.],
       [2.],
       [2.]], dtype=float32), array([0.], dtype=float32)]
>>> layer_b.set_weights(layer_a.get_weights())
>>> layer_b.get_weights()
[array([[1.],
       [1.],
       [1.]], dtype=float32), array([0.], dtype=float32)]
```

| Returns | |
|---|---|
| Weights values as a list of NumPy arrays. |

load_own_variables

load_own_variables(store)

Loads the state of the layer.

You can override this method to take full control of how the state of the layer is loaded upon calling `keras.models.load_model()`.

| Args | |
|---|---|
| `store` | Dict from which the state of the model will be loaded. |

save_own_variables

save_own_variables(store)

Saves the state of the layer.

You can override this method to take full control of how the state of the layer is saved upon calling `model.save()`.

| Args | |
|---|---|
| `store` | Dict where the state of the model will be saved. |

set_weights

set_weights(weights)

Sets the weights of the layer, from NumPy arrays.

The weights of a layer represent the state of the layer. This function sets the weight values from numpy arrays. The weight values should be passed in the order they are created by the layer. Note that the layer's weights must be instantiated before calling this function, by calling the layer.

For example, a `Dense` layer returns a list of two values: the kernel matrix and the bias vector. These can be used to set the weights of another `Dense` layer:

```python
>>> import tensorflow as tf
>>> layer_a = tf.keras.layers.Dense(1,
...   kernel_initializer=tf.constant_initializer(1.))
>>> a_out = layer_a(tf.convert_to_tensor([[1., 2., 3.]]))
>>> layer_a.get_weights()
[array([[1.],
       [1.],
       [1.]], dtype=float32), array([0.], dtype=float32)]
>>> layer_b = tf.keras.layers.Dense(1,
...   kernel_initializer=tf.constant_initializer(2.))
>>> b_out = layer_b(tf.convert_to_tensor([[10., 20., 30.]]))
>>> layer_b.get_weights()
[array([[2.],
       [2.],
       [2.]], dtype=float32), array([0.], dtype=float32)]
>>> layer_b.set_weights(layer_a.get_weights())
>>> layer_b.get_weights()
[array([[1.],
       [1.],
       [1.]], dtype=float32), array([0.], dtype=float32)]
```

| Args | |
|---|---|
| `weights` | A list of NumPy arrays. The number of arrays and their shape must match the number of the dimensions of the weights of the layer (i.e. it should match the output of `get_weights`). |

| Raises | |
|---|---|
| `ValueError` | If the provided weights list does not match the layer's specifications. |

with_name_scope

@classmethod with_name_scope(method)

Decorator to automatically enter the module name scope.

```python
import tensorflow as tf

class MyModule(tf.Module):
  @tf.Module.with_name_scope
  def __call__(self, x):
    if not hasattr(self, 'w'):
      self.w = tf.Variable(tf.random.normal([x.shape[1], 3]))
    return tf.matmul(x, self.w)
```

Using the above module would produce `tf.Variable`s and `tf.Tensor`s whose names included the module name:

```python
>>> mod = MyModule()
>>> mod(tf.ones([1, 2]))
<tf.Tensor: shape=(1, 3), dtype=float32, numpy=..., dtype=float32)>
>>> mod.w
<tf.Variable 'my_module/Variable:0' shape=(2, 3) dtype=float32,
numpy=..., dtype=float32)>
```

| Args | |
|---|---|
| `method` | The method to wrap. |

| Returns | |
|---|---|
| The original method wrapped such that it enters the module's name scope. |

__call__

__call__(*args, **kwargs)

Wraps call, applying pre- and post-processing steps.

| Args | |
|---|---|
| `*args` | Positional arguments to be passed to `self.call`. |
| `**kwargs` | Keyword arguments to be passed to `self.call`. |

| Returns | |
|---|---|
| Output tensor(s). |


| Raises | |
|---|---|
| `ValueError` | If the layer's `call` method returns None (an invalid value). |
| `RuntimeError` | If `super().__init__()` was not called in the constructor. |