Getting started

TensorFlow Hub is a comprehensive repository of pre-trained models that are ready for fine-tuning and can be deployed anywhere. You can download the latest trained models with a minimal amount of code using the tensorflow_hub library.

The following tutorials should help you get started using and applying models from TF Hub for your needs. Interactive tutorials let you modify the code and run it with your changes. Click the Run in Google Colab button at the top of an interactive tutorial to tinker with it.

For beginners

If you are unfamiliar with machine learning and TensorFlow, you can start by getting an overview of how to classify images and text, detect objects in images, or stylize your own pictures like famous artwork:

Image Classification

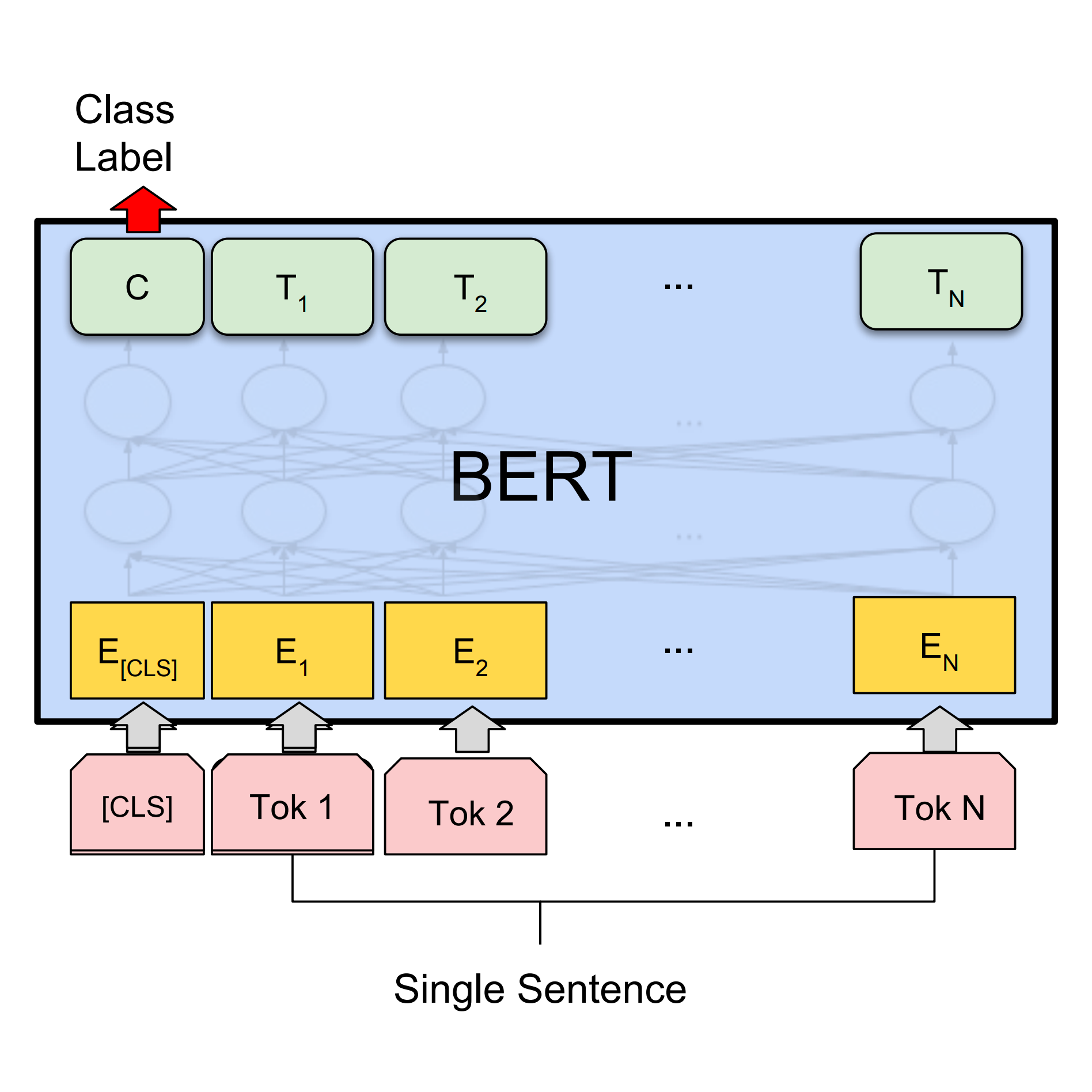

Build a Keras model on top of a pre-trained image classifier to distinguish flowers.

Classify Text with BERT

Use BERT to build a Keras model that solves a sentiment analysis text classification task.

Style Transfer

Let a neural network redraw an image in the style of Picasso, van Gogh, or your own style image.

Object Detection

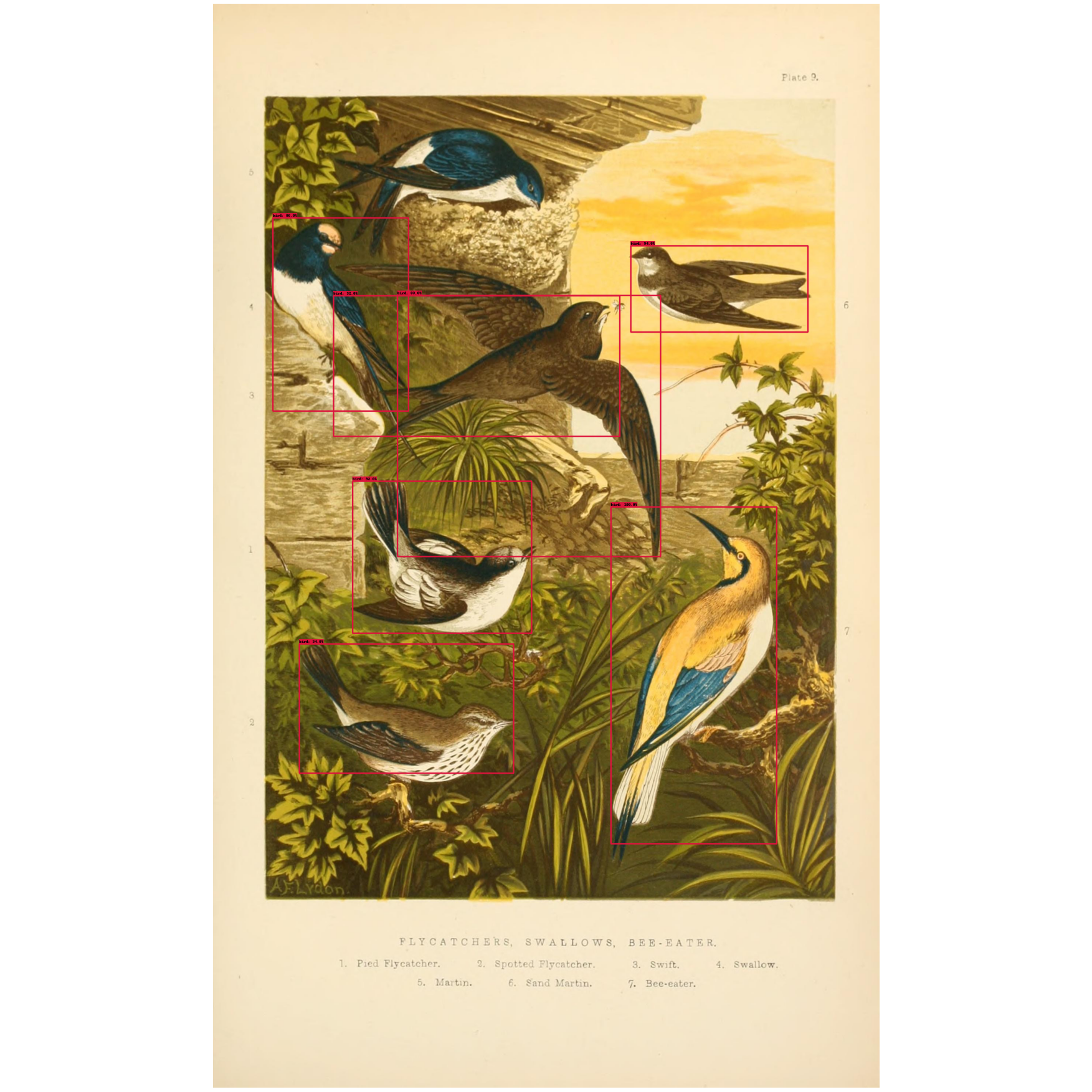

Detect objects in images using models like FasterRCNN or SSD.

For experienced developers

Check out more advanced tutorials on how to use NLP, image, audio, and video models from TensorFlow Hub.

NLP tutorials

Solve common NLP tasks with models from TensorFlow Hub. View all available NLP tutorials in the left nav.
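Semantic similarity, one of the NLP tasks covered here, comes down to comparing sentence embeddings. Below is a minimal sketch that assumes each sentence has already been encoded into a fixed-length vector. The three-dimensional vectors are made-up placeholders, not real Universal Sentence Encoder output, which is much longer. The sketch computes cosine similarity in plain Python:

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the cosine of the angle between vectors a and b (range -1 to 1)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cannot compare a zero vector")
    return dot / (norm_a * norm_b)


# Hypothetical toy embeddings standing in for real sentence-encoder output.
embeddings = {
    "How old are you?": [0.9, 0.1, 0.0],
    "What is your age?": [0.8, 0.2, 0.1],
    "The weather is nice today.": [0.0, 0.3, 0.9],
}

query = embeddings["How old are you?"]
for sentence, vector in embeddings.items():
    # Higher scores mean the sentences point in more similar directions.
    print(f"{cosine_similarity(query, vector):.3f}  {sentence}")
```

Cosine similarity ignores vector length. If the embeddings are already unit-normalized, a plain dot product gives the same ranking.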

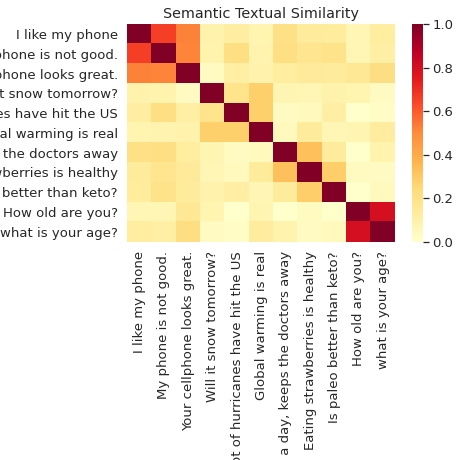

Semantic Similarity

Classify and semantically compare sentences with the Universal Sentence Encoder.

BERT on TPU

Use BERT running on a TPU to solve GLUE benchmark tasks.

Multilingual Universal Sentence Encoder Q&A

Answer cross-lingual questions from the SQuAD dataset using the Multilingual Universal Sentence Encoder Q&A model.

Image tutorials

Explore how to use GANs, super resolution models and more. View all available image tutorials in the left nav.
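GANs generate images from latent vectors, and moving between two latent vectors produces a smooth transition between the generated images. The sketch below shows the simplest form of that idea: plain linear interpolation between two latent vectors. The 4-dimensional vectors are hypothetical stand-ins for a real generator's latent space, and the GAN tutorial itself may use a different interpolation scheme. Each intermediate vector would then be passed to the generator to produce one image.

```python
from typing import List, Sequence


def interpolate(start: Sequence[float], end: Sequence[float], num_steps: int) -> List[List[float]]:
    """Return num_steps vectors evenly spaced from start to end (both endpoints included)."""
    if len(start) != len(end):
        raise ValueError("latent vectors must have the same length")
    if num_steps < 2:
        raise ValueError("num_steps must be at least 2")
    path = []
    for i in range(num_steps):
        t = i / (num_steps - 1)  # t goes from 0.0 to 1.0
        path.append([(1.0 - t) * s + t * e for s, e in zip(start, end)])
    return path


# Hypothetical 4-dimensional latent vectors; real GAN latents are much larger.
z_a = [1.0, 0.0, -1.0, 0.5]
z_b = [0.0, 2.0, 1.0, -0.5]

for step, z in enumerate(interpolate(z_a, z_b, num_steps=5)):
    print(step, [round(v, 2) for v in z])
```

Linear interpolation is the simplest choice. With Gaussian latents, points midway along a straight line tend to have a smaller norm than typical samples, which is why spherical interpolation is sometimes preferred.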

GANs for Image Generation

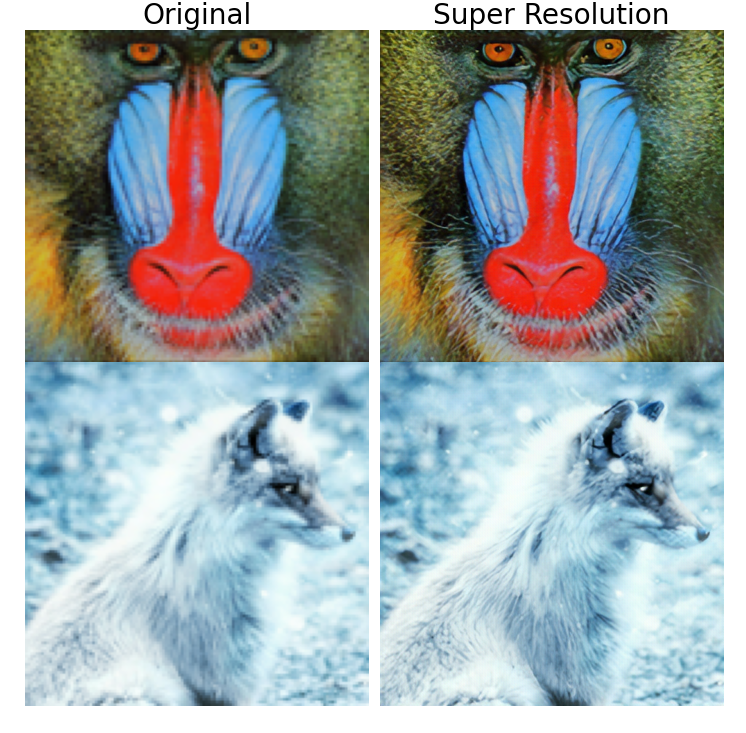

Generate artificial faces and interpolate between them using GANs.

Super Resolution

Enhance the resolution of downsampled images.

Image Extension

Fill in the masked part of given images.

Audio tutorials

Explore tutorials using trained models for audio data including pitch recognition and sound classification.
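Sound classifiers such as YAMNet score an audio clip frame by frame, producing one score per class for each short frame. One common way to get a single label for the whole clip is to average the scores over all frames and pick the highest-scoring class. The sketch below assumes the per-frame scores are already available as a list of rows. The three class names and the numbers are made up for illustration; YAMNet itself has 521 classes.

```python
from typing import List, Sequence, Tuple


def classify_clip(
    frame_scores: Sequence[Sequence[float]], class_names: Sequence[str]
) -> Tuple[str, List[float]]:
    """Average per-frame class scores and return (top class name, mean scores)."""
    if not frame_scores:
        raise ValueError("need at least one frame")
    num_classes = len(class_names)
    if any(len(row) != num_classes for row in frame_scores):
        raise ValueError("every frame must have one score per class")
    # Mean score of each class across all frames.
    mean_scores = [
        sum(row[c] for row in frame_scores) / len(frame_scores)
        for c in range(num_classes)
    ]
    best = max(range(num_classes), key=lambda c: mean_scores[c])
    return class_names[best], mean_scores


# Hypothetical class list and per-frame scores (rows = frames, columns = classes).
classes = ["Speech", "Music", "Dog"]
scores = [
    [0.7, 0.2, 0.1],
    [0.6, 0.3, 0.1],
    [0.2, 0.1, 0.9],
]

label, means = classify_clip(scores, classes)
print(label, [round(m, 3) for m in means])
```

In this toy example the last frame is dominated by "Dog", but the clip-level average still favors "Speech". Averaging trades sensitivity to short events for a more stable overall label.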

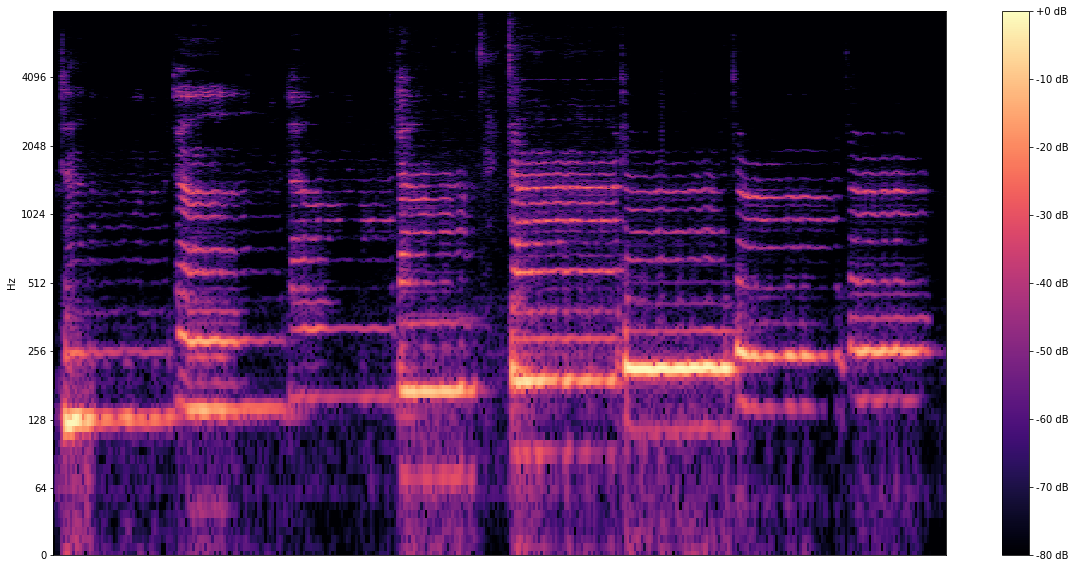

Pitch recognition

Record yourself singing and detect the pitch of your voice using the SPICE model.

Sound classification

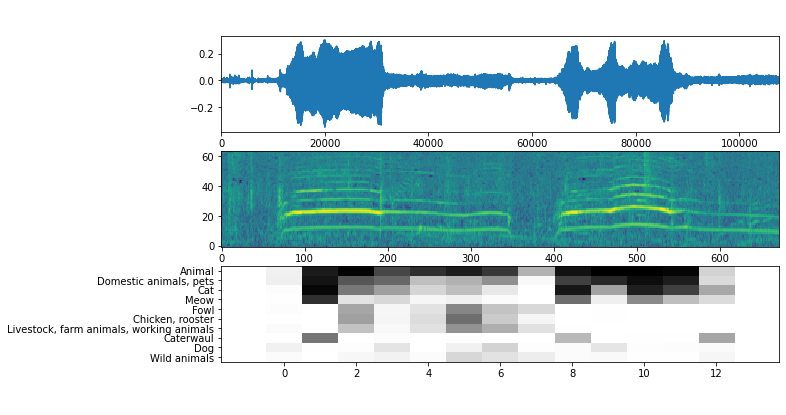

Use the YAMNet model to classify sounds into 521 audio event classes from the AudioSet-YouTube corpus.

Video tutorials

Try out trained ML models on video data for action recognition, video interpolation, and more.
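For action recognition, a classifier typically returns one raw score (logit) per action class, and results are usually reported as the few most likely actions with their probabilities. The sketch below assumes the logits are already available and applies a numerically stable softmax followed by a top-k selection. The action labels and logit values are hypothetical, not output from any TF Hub model.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Convert raw scores to probabilities that sum to 1."""
    shift = max(logits)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(labels: Sequence[str], logits: Sequence[float], k: int) -> List[Tuple[str, float]]:
    """Return the k most probable (label, probability) pairs, best first."""
    probs = softmax(logits)
    ranked = sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


# Hypothetical action labels and logits for a single video clip.
actions = ["juggling balls", "playing guitar", "riding a bike", "swimming"]
logits = [2.5, 0.3, 4.1, -1.0]

for label, prob in top_k(actions, logits, k=2):
    print(f"{label}: {prob:.1%}")
```

Subtracting the maximum logit before exponentiating leaves the probabilities unchanged. It keeps `math.exp` from overflowing when logits are large.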