
Overview

In this codelab you will train a simple image classification model on the CIFAR10 dataset, and then use a "membership inference attack" against this model to assess whether an attacker is able to "guess" whether a particular sample was present in the training set. You will use the TF Privacy Report to visualize results from multiple models and model checkpoints.
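To make the idea of a membership inference attack concrete, here is a minimal sketch of a loss-threshold attack in plain Python. It is not the TF Privacy implementation: the function name `threshold_attack` and the loss values are made up for illustration. The assumption is that training examples tend to have lower loss than unseen examples, so the attacker guesses "member" whenever the loss falls below a threshold.

```python
from typing import Sequence


def threshold_attack(member_losses: Sequence[float],
                     non_member_losses: Sequence[float],
                     threshold: float) -> float:
    """Returns the accuracy of guessing 'member' when loss < threshold."""
    # Members correctly flagged as members.
    true_positives = sum(loss < threshold for loss in member_losses)
    # Non-members correctly flagged as non-members.
    true_negatives = sum(loss >= threshold for loss in non_member_losses)
    total = len(member_losses) + len(non_member_losses)
    return (true_positives + true_negatives) / total


# Hypothetical per-example losses, for illustration only.
train_losses = [0.05, 0.10, 0.20, 0.90]   # examples seen during training
test_losses = [0.15, 0.80, 1.20, 1.50]    # examples never seen in training

attack_accuracy = threshold_attack(train_losses, test_losses, threshold=0.5)
print(attack_accuracy)  # 0.75
```

An accuracy of 0.5 would mean the attacker does no better than a coin flip on this balanced toy set; the further above 0.5, the more the model leaks about its training data.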

Setup

import numpy as np

from typing import Tuple

from scipy import special

from sklearn import metrics

import tensorflow as tf

import tensorflow_datasets as tfds

# Set verbosity.

tf.compat.v1.logging.set_verbosity(tf.compat.v1.logging.ERROR)

from sklearn.exceptions import ConvergenceWarning

import warnings

warnings.simplefilter(action="ignore", category=ConvergenceWarning)

warnings.simplefilter(action="ignore", category=FutureWarning)

Install TensorFlow Privacy.

pip install tensorflow_privacy

from tensorflow_privacy.privacy.privacy_tests.membership_inference_attack import membership_inference_attack as mia

from tensorflow_privacy.privacy.privacy_tests.membership_inference_attack.data_structures import AttackInputData

from tensorflow_privacy.privacy.privacy_tests.membership_inference_attack.data_structures import AttackResultsCollection

from tensorflow_privacy.privacy.privacy_tests.membership_inference_attack.data_structures import AttackType

from tensorflow_privacy.privacy.privacy_tests.membership_inference_attack.data_structures import PrivacyMetric

from tensorflow_privacy.privacy.privacy_tests.membership_inference_attack.data_structures import PrivacyReportMetadata

from tensorflow_privacy.privacy.privacy_tests.membership_inference_attack.data_structures import SlicingSpec

from tensorflow_privacy.privacy.privacy_tests.membership_inference_attack import privacy_report

import tensorflow_privacy

Train two models, with privacy metrics

This section trains a pair of keras.Model classifiers on the CIFAR-10 dataset. During training it collects privacy metrics, which are used to generate reports in the next section.

The first step is to define some hyperparameters:

dataset = 'cifar10'

num_classes = 10

activation = 'relu'

num_conv = 3

batch_size=50

epochs_per_report = 2

total_epochs = 50

lr = 0.001

Next, load the dataset. There is nothing privacy-specific in this code.

print('Loading the dataset.')

train_ds = tfds.as_numpy(
    tfds.load(dataset, split=tfds.Split.TRAIN, batch_size=-1))

test_ds = tfds.as_numpy(
    tfds.load(dataset, split=tfds.Split.TEST, batch_size=-1))

x_train = train_ds['image'].astype('float32') / 255.

y_train_indices = train_ds['label'][:, np.newaxis]

x_test = test_ds['image'].astype('float32') / 255.

y_test_indices = test_ds['label'][:, np.newaxis]

# Convert class vectors to binary class matrices.

y_train = tf.keras.utils.to_categorical(y_train_indices, num_classes)

y_test = tf.keras.utils.to_categorical(y_test_indices, num_classes)

input_shape = x_train.shape[1:]

assert x_train.shape[0] % batch_size == 0, "The tensorflow_privacy optimizer doesn't handle partial batches"

Loading the dataset.
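The loading code does two things that are easy to check by hand: it one-hot encodes the labels, and it asserts that the training set splits into whole batches. The sketch below reproduces both in plain Python. The helper names `steps_per_epoch` and `one_hot` are hypothetical, and the 50,000 figure is the size of the standard CIFAR-10 training split.

```python
from typing import List


def steps_per_epoch(num_examples: int, batch_size: int) -> int:
    """Number of full batches per epoch; rejects a trailing partial batch."""
    if num_examples % batch_size != 0:
        raise ValueError("batch_size must divide the number of examples")
    return num_examples // batch_size


def one_hot(label: int, num_classes: int) -> List[float]:
    """Plain-Python equivalent of a single to_categorical row."""
    return [1.0 if index == label else 0.0 for index in range(num_classes)]


steps = steps_per_epoch(num_examples=50_000, batch_size=50)
print(steps)           # 1000
print(one_hot(3, 10))  # 1.0 at index 3, 0.0 elsewhere
```

With batch_size=50 this gives 1000 steps per epoch, which matches the `1000/1000` progress counter in the training output.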

Next, define a function to build the models.

def small_cnn(input_shape: Tuple[int, ...],
              num_classes: int,
              num_conv: int,
              activation: str = 'relu') -> tf.keras.models.Sequential:
    """Set up a small CNN for image classification.

    Args:
      input_shape: Integer tuple for the shape of the images.
      num_classes: Number of prediction classes.
      num_conv: Number of convolutional layers.
      activation: The activation function to use for conv and dense layers.

    Returns:
      The Keras model.
    """
    model = tf.keras.models.Sequential()
    model.add(tf.keras.layers.Input(shape=input_shape))

    # Conv layers
    for _ in range(num_conv):
        model.add(tf.keras.layers.Conv2D(32, (3, 3), activation=activation))
        model.add(tf.keras.layers.MaxPooling2D())

    model.add(tf.keras.layers.Flatten())
    model.add(tf.keras.layers.Dense(64, activation=activation))
    # The final layer has no activation, so the model outputs logits.
    model.add(tf.keras.layers.Dense(num_classes))

    # Uses the module-level `lr` hyperparameter defined above.
    model.compile(
        loss=tf.keras.losses.CategoricalCrossentropy(from_logits=True),
        optimizer=tf.keras.optimizers.Adam(learning_rate=lr),
        metrics=['accuracy'])

    return model

Use that function to build two CNN models: one with two convolutional layers and one with three.

Note that small_cnn compiles both models with the standard Adam optimizer. To compare against a differentially private optimizer such as tf_privacy.DPKerasAdamOptimizer, you would need to compile one of the models with it instead.

model_2layers = small_cnn(
    input_shape, num_classes, num_conv=2, activation=activation)

model_3layers = small_cnn(
    input_shape, num_classes, num_conv=3, activation=activation)
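It can help to know how large each model actually is. The following sketch works through the layer arithmetic of small_cnn by hand, assuming the Keras defaults the function relies on: Conv2D with 'valid' padding and stride 1, and MaxPooling2D with a 2x2 window. The helper `small_cnn_summary` is hypothetical and does not build a Keras model.

```python
from typing import Tuple


def small_cnn_summary(input_shape: Tuple[int, int, int],
                      num_classes: int,
                      num_conv: int,
                      filters: int = 32,
                      kernel: int = 3,
                      dense_units: int = 64) -> Tuple[int, int]:
    """Returns (flattened feature count, total trainable parameters)."""
    height, width, channels = input_shape
    params = 0
    for _ in range(num_conv):
        # Conv2D weights plus one bias per filter.
        params += kernel * kernel * channels * filters + filters
        # 'valid' convolution shrinks each side by kernel - 1.
        height, width = height - kernel + 1, width - kernel + 1
        # 2x2 max pooling halves each side, rounding down.
        height, width = height // 2, width // 2
        channels = filters
    flat = height * width * channels
    params += flat * dense_units + dense_units         # hidden Dense layer
    params += dense_units * num_classes + num_classes  # output Dense layer
    return flat, params


print(small_cnn_summary((32, 32, 3), num_classes=10, num_conv=2))  # (1152, 84586)
print(small_cnn_summary((32, 32, 3), num_classes=10, num_conv=3))  # (128, 28298)
```

Under these assumptions the three-layer model ends up with fewer parameters than the two-layer one, because the extra pooling stage shrinks the flattened features from 1152 to 128 before the first Dense layer.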

Define a callback to collect privacy metrics

Next, define a keras.callbacks.Callback that periodically runs some privacy attacks against the model and logs the results.

The Keras fit method calls the on_epoch_end method after each training epoch. The epoch argument is the (0-based) epoch number.

You could implement this procedure by writing a loop that repeatedly calls Model.fit(..., epochs=epochs_per_report) and then runs the attack code. The callback is used here only because it gives a clear separation between the training logic and the privacy evaluation logic.
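For comparison, the loop-based alternative could look roughly like the sketch below. To keep it runnable without TensorFlow, training and attacking are passed in as plain callables: `fit_fn` stands in for something like `lambda n: model.fit(x_train, y_train, epochs=n)` and `attack_fn` for the attack code. Both names, and `train_with_reports` itself, are hypothetical.

```python
from typing import Callable, Dict, List


def train_with_reports(fit_fn: Callable[[int], None],
                       attack_fn: Callable[[int], Dict],
                       total_epochs: int,
                       epochs_per_report: int) -> List[Dict]:
    """Alternates training chunks with privacy attacks.

    Assumes total_epochs is a multiple of epochs_per_report.
    """
    reports = []
    for chunk_index in range(total_epochs // epochs_per_report):
        fit_fn(epochs_per_report)  # train for one chunk of epochs
        epochs_done = (chunk_index + 1) * epochs_per_report
        reports.append(attack_fn(epochs_done))  # evaluate privacy risk
    return reports


# Stub callables that only record what they were asked to do.
trained_chunks: List[int] = []
reports = train_with_reports(
    fit_fn=trained_chunks.append,
    attack_fn=lambda epoch: {"epoch": epoch},
    total_epochs=6,
    epochs_per_report=2)

print([report["epoch"] for report in reports])  # [2, 4, 6]
```

Repeated calls to fit continue from the model's current weights, so the loop trains the same model throughout. The callback version used in this tutorial follows.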

class PrivacyMetrics(tf.keras.callbacks.Callback):

    def __init__(self, epochs_per_report, model_name):
        super().__init__()
        self.epochs_per_report = epochs_per_report
        self.model_name = model_name
        self.attack_results = []

    def on_epoch_end(self, epoch, logs=None):
        # Keras passes a 0-based epoch index; convert it to a 1-based count.
        epoch = epoch + 1

        if epoch % self.epochs_per_report != 0:
            return

        print(f'\nRunning privacy report for epoch: {epoch}\n')

        # The model outputs logits, so convert them to probabilities.
        logits_train = self.model.predict(x_train, batch_size=batch_size)
        logits_test = self.model.predict(x_test, batch_size=batch_size)

        prob_train = special.softmax(logits_train, axis=1)
        prob_test = special.softmax(logits_test, axis=1)

        # Add metadata to generate a privacy report.
        privacy_report_metadata = PrivacyReportMetadata(
            # Show the validation accuracy on the plot.
            # The value passed as accuracy_train is what gets plotted,
            # so the validation accuracy is passed for both fields.
            accuracy_train=logs['val_accuracy'],
            accuracy_test=logs['val_accuracy'],
            epoch_num=epoch,
            model_variant_label=self.model_name)

        attack_results = mia.run_attacks(
            AttackInputData(
                labels_train=y_train_indices[:, 0],
                labels_test=y_test_indices[:, 0],
                probs_train=prob_train,
                probs_test=prob_test),
            SlicingSpec(entire_dataset=True, by_class=True),
            attack_types=(AttackType.THRESHOLD_ATTACK,
                          AttackType.LOGISTIC_REGRESSION),
            privacy_report_metadata=privacy_report_metadata)

        self.attack_results.append(attack_results)
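The callback applies a softmax before running the attacks because the model's last Dense layer has no activation and therefore returns logits. As a small illustration of that conversion, here is a plain-Python softmax for a single row of logits. The callback itself uses scipy.special.softmax; this standalone `softmax` function is only a sketch.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Converts one row of logits into probabilities that sum to 1."""
    # Subtracting the maximum keeps exp() from overflowing on large logits.
    shift = max(logits)
    exps = [math.exp(value - shift) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


probs = softmax([2.0, 1.0, 0.1])
print(probs)                      # largest logit gets the largest probability
print(softmax([1000.0, 1000.0]))  # [0.5, 0.5]
```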

Train the models

The next code block trains the two models. The all_reports list collects the results from all of the models' training runs. Each report is tagged with its model_name, so there is no confusion about which model generated which report.

all_reports = []

callback = PrivacyMetrics(epochs_per_report, "2 Layers")

history = model_2layers.fit(
    x_train,
    y_train,
    batch_size=batch_size,
    epochs=total_epochs,
    validation_data=(x_test, y_test),
    callbacks=[callback],
    shuffle=True)

all_reports.extend(callback.attack_results)

Epoch 1/50 1000/1000 [==============================] - 13s 4ms/step - loss: 1.5146 - accuracy: 0.4573 - val_loss: 1.2374 - val_accuracy: 0.5660
Epoch 2/50 1000/1000 [==============================] - 3s 3ms/step - loss: 1.1933 - accuracy: 0.5811 - val_loss: 1.1873 - val_accuracy: 0.5851
Running privacy report for epoch: 2
Epoch 3/50 1000/1000 [==============================] - 3s 3ms/step - loss: 1.0694 - accuracy: 0.6246 - val_loss: 1.0526 - val_accuracy: 0.6310
Epoch 4/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.9911 - accuracy: 0.6548 - val_loss: 0.9906 - val_accuracy: 0.6549
Running privacy report for epoch: 4
Epoch 5/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.9348 - accuracy: 0.6743 - val_loss: 0.9712 - val_accuracy: 0.6617
Epoch 6/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.8881 - accuracy: 0.6912 - val_loss: 0.9595 - val_accuracy: 0.6671
Running privacy report for epoch: 6
Epoch 7/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.8495 - accuracy: 0.7024 - val_loss: 0.9574 - val_accuracy: 0.6684
Epoch 8/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.8147 - accuracy: 0.7161 - val_loss: 0.9397 - val_accuracy: 0.6740
Running privacy report for epoch: 8
Epoch 9/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7820 - accuracy: 0.7263 - val_loss: 0.9325 - val_accuracy: 0.6837
Epoch 10/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7533 - accuracy: 0.7362 - val_loss: 0.9431 - val_accuracy: 0.6843
Running privacy report for epoch: 10
Epoch 11/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7169 - accuracy: 0.7477 - val_loss: 0.9310 - val_accuracy: 0.6795
Epoch 12/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6892 - accuracy: 0.7569 - val_loss: 0.9043 - val_accuracy: 0.6975
Running privacy report for epoch: 12
Epoch 13/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6677 - accuracy: 0.7663 - val_loss: 0.9401 - val_accuracy: 0.6796
Epoch 14/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6401 - accuracy: 0.7741 - val_loss: 0.9464 - val_accuracy: 0.6880
Running privacy report for epoch: 14
Epoch 15/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6177 - accuracy: 0.7821 - val_loss: 0.9359 - val_accuracy: 0.6930
Epoch 16/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5978 - accuracy: 0.7913 - val_loss: 0.9634 - val_accuracy: 0.6896
Running privacy report for epoch: 16
Epoch 17/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5745 - accuracy: 0.7973 - val_loss: 0.9941 - val_accuracy: 0.6932
Epoch 18/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5553 - accuracy: 0.8036 - val_loss: 0.9790 - val_accuracy: 0.6974
Running privacy report for epoch: 18
Epoch 19/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5376 - accuracy: 0.8103 - val_loss: 0.9989 - val_accuracy: 0.6907
Epoch 20/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5152 - accuracy: 0.8192 - val_loss: 1.0245 - val_accuracy: 0.6878
Running privacy report for epoch: 20
Epoch 21/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5048 - accuracy: 0.8208 - val_loss: 1.0223 - val_accuracy: 0.6852
Epoch 22/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.4847 - accuracy: 0.8284 - val_loss: 1.0498 - val_accuracy: 0.6866
Running privacy report for epoch: 22
Epoch 23/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.4722 - accuracy: 0.8325 - val_loss: 1.0610 - val_accuracy: 0.6899
Epoch 24/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.4562 - accuracy: 0.8387 - val_loss: 1.0973 - val_accuracy: 0.6771
Running privacy report for epoch: 24
Epoch 25/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.4392 - accuracy: 0.8447 - val_loss: 1.1141 - val_accuracy: 0.6865
Epoch 26/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.4269 - accuracy: 0.8485 - val_loss: 1.1928 - val_accuracy: 0.6771
Running privacy report for epoch: 26
Epoch 27/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.4135 - accuracy: 0.8533 - val_loss: 1.1945 - val_accuracy: 0.6758
Epoch 28/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.4053 - accuracy: 0.8569 - val_loss: 1.2244 - val_accuracy: 0.6716
Running privacy report for epoch: 28
Epoch 29/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.3880 - accuracy: 0.8611 - val_loss: 1.2362 - val_accuracy: 0.6789
Epoch 30/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.3805 - accuracy: 0.8630 - val_loss: 1.2815 - val_accuracy: 0.6805
Running privacy report for epoch: 30
Epoch 31/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.3756 - accuracy: 0.8656 - val_loss: 1.2973 - val_accuracy: 0.6762
Epoch 32/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.3565 - accuracy: 0.8719 - val_loss: 1.3022 - val_accuracy: 0.6810
Running privacy report for epoch: 32
Epoch 33/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.3494 - accuracy: 0.8749 - val_loss: 1.3248 - val_accuracy: 0.6756
Epoch 34/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.3371 - accuracy: 0.8790 - val_loss: 1.3941 - val_accuracy: 0.6806
Running privacy report for epoch: 34
Epoch 35/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.3248 - accuracy: 0.8839 - val_loss: 1.4391 - val_accuracy: 0.6666
Epoch 36/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.3233 - accuracy: 0.8833 - val_loss: 1.5060 - val_accuracy: 0.6692
Running privacy report for epoch: 36
Epoch 37/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.3109 - accuracy: 0.8882 - val_loss: 1.4624 - val_accuracy: 0.6724
Epoch 38/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.3057 - accuracy: 0.8900 - val_loss: 1.5133 - val_accuracy: 0.6644
Running privacy report for epoch: 38
Epoch 39/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2929 - accuracy: 0.8949 - val_loss: 1.5465 - val_accuracy: 0.6618
Epoch 40/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2868 - accuracy: 0.8970 - val_loss: 1.5882 - val_accuracy: 0.6696
Running privacy report for epoch: 40
Epoch 41/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2778 - accuracy: 0.8982 - val_loss: 1.6317 - val_accuracy: 0.6713
Epoch 42/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2782 - accuracy: 0.8999 - val_loss: 1.6993 - val_accuracy: 0.6630
Running privacy report for epoch: 42
Epoch 43/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2675 - accuracy: 0.9039 - val_loss: 1.7294 - val_accuracy: 0.6645
Epoch 44/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2587 - accuracy: 0.9068 - val_loss: 1.7614 - val_accuracy: 0.6561
Running privacy report for epoch: 44
Epoch 45/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2528 - accuracy: 0.9076 - val_loss: 1.7835 - val_accuracy: 0.6564
Epoch 46/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2410 - accuracy: 0.9129 - val_loss: 1.8550 - val_accuracy: 0.6648
Running privacy report for epoch: 46
Epoch 47/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2409 - accuracy: 0.9106 - val_loss: 1.8705 - val_accuracy: 0.6572
Epoch 48/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2328 - accuracy: 0.9146 - val_loss: 1.9110 - val_accuracy: 0.6593
Running privacy report for epoch: 48
Epoch 49/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2299 - accuracy: 0.9164 - val_loss: 1.9468 - val_accuracy: 0.6634
Epoch 50/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.2250 - accuracy: 0.9178 - val_loss: 2.0154 - val_accuracy: 0.6610
Running privacy report for epoch: 50

callback = PrivacyMetrics(epochs_per_report, "3 Layers")

history = model_3layers.fit(
    x_train,
    y_train,
    batch_size=batch_size,
    epochs=total_epochs,
    validation_data=(x_test, y_test),
    callbacks=[callback],
    shuffle=True)

all_reports.extend(callback.attack_results)

Epoch 1/50 1000/1000 [==============================] - 4s 4ms/step - loss: 1.6838 - accuracy: 0.3772 - val_loss: 1.4805 - val_accuracy: 0.4552
Epoch 2/50 1000/1000 [==============================] - 3s 3ms/step - loss: 1.3938 - accuracy: 0.4969 - val_loss: 1.3291 - val_accuracy: 0.5276
Running privacy report for epoch: 2
Epoch 3/50 1000/1000 [==============================] - 3s 3ms/step - loss: 1.2564 - accuracy: 0.5510 - val_loss: 1.2313 - val_accuracy: 0.5627
Epoch 4/50 1000/1000 [==============================] - 3s 3ms/step - loss: 1.1610 - accuracy: 0.5884 - val_loss: 1.1251 - val_accuracy: 0.6039
Running privacy report for epoch: 4
Epoch 5/50 1000/1000 [==============================] - 3s 3ms/step - loss: 1.1034 - accuracy: 0.6105 - val_loss: 1.1168 - val_accuracy: 0.6063
Epoch 6/50 1000/1000 [==============================] - 3s 3ms/step - loss: 1.0476 - accuracy: 0.6319 - val_loss: 1.0716 - val_accuracy: 0.6248
Running privacy report for epoch: 6
Epoch 7/50 1000/1000 [==============================] - 3s 3ms/step - loss: 1.0107 - accuracy: 0.6461 - val_loss: 1.0264 - val_accuracy: 0.6407
Epoch 8/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.9731 - accuracy: 0.6597 - val_loss: 1.0216 - val_accuracy: 0.6447
Running privacy report for epoch: 8
Epoch 9/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.9437 - accuracy: 0.6712 - val_loss: 1.0016 - val_accuracy: 0.6467
Epoch 10/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.9191 - accuracy: 0.6790 - val_loss: 0.9845 - val_accuracy: 0.6553
Running privacy report for epoch: 10
Epoch 11/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.8923 - accuracy: 0.6877 - val_loss: 0.9560 - val_accuracy: 0.6670
Epoch 12/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.8722 - accuracy: 0.6959 - val_loss: 0.9518 - val_accuracy: 0.6686
Running privacy report for epoch: 12
Epoch 13/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.8495 - accuracy: 0.7029 - val_loss: 0.9427 - val_accuracy: 0.6787
Epoch 14/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.8305 - accuracy: 0.7116 - val_loss: 0.9247 - val_accuracy: 0.6814
Running privacy report for epoch: 14
Epoch 15/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.8164 - accuracy: 0.7157 - val_loss: 0.9263 - val_accuracy: 0.6797
Epoch 16/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7973 - accuracy: 0.7220 - val_loss: 0.9151 - val_accuracy: 0.6850
Running privacy report for epoch: 16
Epoch 17/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7830 - accuracy: 0.7277 - val_loss: 0.9139 - val_accuracy: 0.6842
Epoch 18/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7704 - accuracy: 0.7294 - val_loss: 0.9384 - val_accuracy: 0.6774
Running privacy report for epoch: 18
Epoch 19/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7539 - accuracy: 0.7366 - val_loss: 0.9508 - val_accuracy: 0.6761
Epoch 20/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7445 - accuracy: 0.7412 - val_loss: 0.9108 - val_accuracy: 0.6908
Running privacy report for epoch: 20
Epoch 21/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7343 - accuracy: 0.7418 - val_loss: 0.9161 - val_accuracy: 0.6855
Epoch 22/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7213 - accuracy: 0.7458 - val_loss: 0.9754 - val_accuracy: 0.6724
Running privacy report for epoch: 22
Epoch 23/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7133 - accuracy: 0.7487 - val_loss: 0.8936 - val_accuracy: 0.6984
Epoch 24/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.7072 - accuracy: 0.7504 - val_loss: 0.8872 - val_accuracy: 0.7002
Running privacy report for epoch: 24
Epoch 25/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6932 - accuracy: 0.7570 - val_loss: 0.9732 - val_accuracy: 0.6769
Epoch 26/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6883 - accuracy: 0.7578 - val_loss: 0.9332 - val_accuracy: 0.6798
Running privacy report for epoch: 26
Epoch 27/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6766 - accuracy: 0.7614 - val_loss: 0.9069 - val_accuracy: 0.6998
Epoch 28/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6656 - accuracy: 0.7662 - val_loss: 0.8879 - val_accuracy: 0.7011
Running privacy report for epoch: 28
Epoch 29/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6594 - accuracy: 0.7674 - val_loss: 0.8988 - val_accuracy: 0.7037
Epoch 30/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6499 - accuracy: 0.7700 - val_loss: 0.9086 - val_accuracy: 0.7001
Running privacy report for epoch: 30
Epoch 31/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6420 - accuracy: 0.7746 - val_loss: 0.8985 - val_accuracy: 0.7034
Epoch 32/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6354 - accuracy: 0.7742 - val_loss: 0.9089 - val_accuracy: 0.7018
Running privacy report for epoch: 32
Epoch 33/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6293 - accuracy: 0.7759 - val_loss: 0.9258 - val_accuracy: 0.6947
Epoch 34/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6192 - accuracy: 0.7851 - val_loss: 0.9326 - val_accuracy: 0.6976
Running privacy report for epoch: 34
Epoch 35/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6157 - accuracy: 0.7831 - val_loss: 0.9240 - val_accuracy: 0.6973
Epoch 36/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6063 - accuracy: 0.7853 - val_loss: 0.9504 - val_accuracy: 0.6971
Running privacy report for epoch: 36
Epoch 37/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.6036 - accuracy: 0.7867 - val_loss: 0.9025 - val_accuracy: 0.7094
Epoch 38/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5958 - accuracy: 0.7877 - val_loss: 0.9290 - val_accuracy: 0.6976
Running privacy report for epoch: 38
Epoch 39/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5900 - accuracy: 0.7919 - val_loss: 0.9379 - val_accuracy: 0.6963
Epoch 40/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5856 - accuracy: 0.7928 - val_loss: 0.9911 - val_accuracy: 0.6896
Running privacy report for epoch: 40
Epoch 41/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5772 - accuracy: 0.7944 - val_loss: 0.9093 - val_accuracy: 0.7059
Epoch 42/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5752 - accuracy: 0.7940 - val_loss: 0.9275 - val_accuracy: 0.7061
Running privacy report for epoch: 42
Epoch 43/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5645 - accuracy: 0.7998 - val_loss: 0.9208 - val_accuracy: 0.7025
Epoch 44/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5632 - accuracy: 0.8000 - val_loss: 0.9746 - val_accuracy: 0.6976
Running privacy report for epoch: 44
Epoch 45/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5557 - accuracy: 0.8045 - val_loss: 0.9211 - val_accuracy: 0.7098
Epoch 46/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5469 - accuracy: 0.8073 - val_loss: 0.9357 - val_accuracy: 0.7055
Running privacy report for epoch: 46
Epoch 47/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5438 - accuracy: 0.8062 - val_loss: 0.9495 - val_accuracy: 0.7025
Epoch 48/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5437 - accuracy: 0.8069 - val_loss: 0.9509 - val_accuracy: 0.6994
Running privacy report for epoch: 48
Epoch 49/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5414 - accuracy: 0.8066 - val_loss: 0.9780 - val_accuracy: 0.6939
Epoch 50/50 1000/1000 [==============================] - 3s 3ms/step - loss: 0.5321 - accuracy: 0.8108 - val_loss: 1.0109 - val_accuracy: 0.6846
Running privacy report for epoch: 50

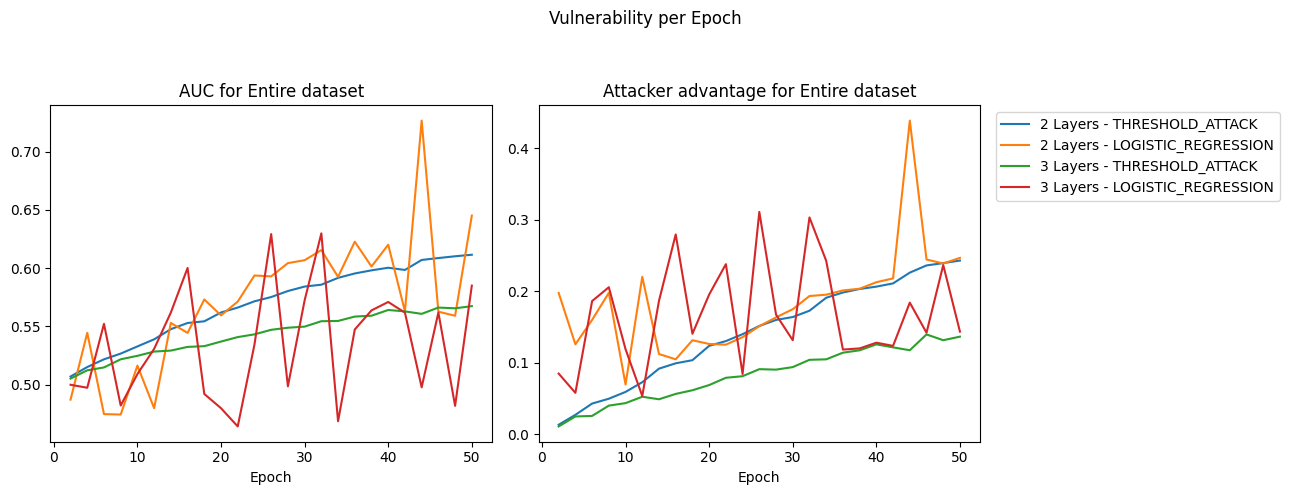

Epoch Plots

You can visualize how privacy risk evolves as you train a model by probing the model periodically (for example, every 5 epochs). You can then pick the point in time with the best performance/privacy trade-off.

Use the TF Privacy Membership Inference Attack module to generate AttackResults. These AttackResults are combined into an AttackResultsCollection. The TF Privacy Report is designed to analyze the provided AttackResultsCollection.

results = AttackResultsCollection(all_reports)

privacy_metrics = (PrivacyMetric.AUC, PrivacyMetric.ATTACKER_ADVANTAGE)

epoch_plot = privacy_report.plot_by_epochs(
    results, privacy_metrics=privacy_metrics)

Notice that, as a rule, privacy vulnerability tends to increase as the number of epochs goes up. This holds across model variants as well as across attacker types.

Two-layer models (with fewer convolutional layers) are generally more vulnerable than their three-layer counterparts.
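The two metrics on these plots, AUC and attacker advantage, can both be read off the attacker's ROC curve. The sketch below computes them in plain Python for made-up attack scores, where a higher score means "more likely a training member". It assumes attacker advantage is defined as the largest gap between true positive rate and false positive rate over all thresholds; the function names are hypothetical and this is not the TF Privacy code.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]  # (false positive rate, true positive rate)


def roc_points(member_scores: Sequence[float],
               non_member_scores: Sequence[float]) -> List[Point]:
    """ROC curve obtained by sweeping the decision threshold downwards."""
    thresholds = sorted(set(member_scores) | set(non_member_scores),
                        reverse=True)
    points = [(0.0, 0.0)]
    for threshold in thresholds:
        tpr = sum(s >= threshold for s in member_scores) / len(member_scores)
        fpr = (sum(s >= threshold for s in non_member_scores)
               / len(non_member_scores))
        points.append((fpr, tpr))
    return points


def auc(points: Sequence[Point]) -> float:
    """Area under the ROC curve using the trapezoidal rule."""
    return sum((x1 - x0) * (y0 + y1) / 2
               for (x0, y0), (x1, y1) in zip(points, points[1:]))


def attacker_advantage(points: Sequence[Point]) -> float:
    """Largest |TPR - FPR| over all thresholds."""
    return max(abs(tpr - fpr) for fpr, tpr in points)


# Hypothetical attack scores, for illustration only.
members = [0.9, 0.8, 0.7, 0.4]
non_members = [0.6, 0.5, 0.3, 0.2]

curve = roc_points(members, non_members)
print(auc(curve))                 # 0.875
print(attacker_advantage(curve))  # 0.75
```

An attacker who cannot tell members from non-members would score an AUC near 0.5 and an advantage near 0, so higher values on either plot indicate more leakage.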

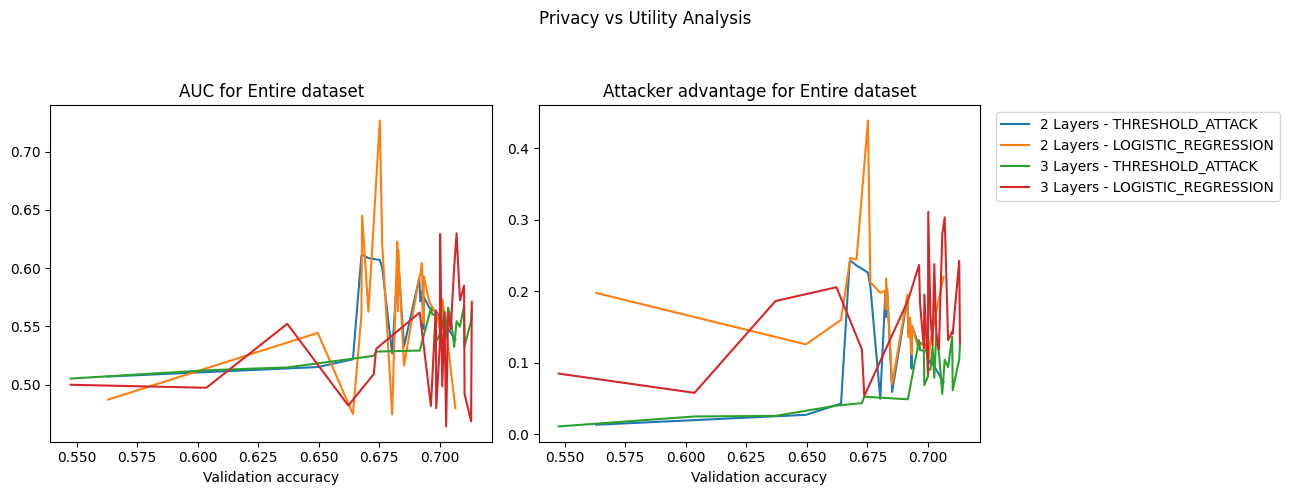

Now let's see how model performance changes with respect to privacy risk.

Privacy vs Utility

privacy_metrics = (PrivacyMetric.AUC, PrivacyMetric.ATTACKER_ADVANTAGE)

utility_privacy_plot = privacy_report.plot_privacy_vs_accuracy(
    results, privacy_metrics=privacy_metrics)

for axis in utility_privacy_plot.axes:
    axis.set_xlabel('Validation accuracy')

Three-layer models (perhaps because the third convolution and pooling stage shrinks the flattened features, leaving fewer parameters overall) only reach a train accuracy of about 0.81 in the run logged above. The two-layer models achieve roughly equal performance for that level of privacy risk, but they continue to gain accuracy.

You can also see how the line for the two-layer models gets steeper. This means that additional marginal gains in train accuracy come at the expense of large privacy vulnerabilities.

This is the end of the tutorial. Feel free to analyze your own results.