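This tutorial demonstrates how to classify a highly imbalanced dataset, in which the number of examples in one class greatly outnumbers the examples in another. You will work with the Credit Card Fraud Detection dataset hosted on Kaggle. The aim is to detect a mere 492 fraudulent transactions out of 284,807 transactions in total. You will use Keras to define the model, and class weights to help the model learn from the imbalanced data.

This tutorial contains complete code to:

- Load a CSV file using Pandas.

- Create train, validation, and test sets.

- Define and train a model using Keras (including setting class weights).

- Evaluate the model using various metrics (including precision and recall).

- Try common techniques for dealing with imbalanced data, such as:

  - Class weighting

  - Oversampling

Setup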

import tensorflow as tf

from tensorflow import keras

import os

import tempfile

import matplotlib as mpl

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

import seaborn as sns

import sklearn

from sklearn.metrics import confusion_matrix

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

mpl.rcParams['figure.figsize'] = (12, 10)

colors = plt.rcParams['axes.prop_cycle'].by_key()['color']

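Data processing and exploration

The Kaggle Credit Card Fraud dataset

Pandas is a Python library with many helpful utilities for loading and working with structured data. It can be used to download CSVs into a Pandas DataFrame.

Note: This dataset was collected and analysed during a research collaboration between Worldline and the Machine Learning Group of ULB (Université Libre de Bruxelles) on big data mining and fraud detection. More details on current and past projects on related topics are available on the DefeatFraud project page.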

# Read the CSV straight from its hosted URL into a DataFrame.

raw_df = pd.read_csv('https://storage.googleapis.com/download.tensorflow.org/data/creditcard.csv')

raw_df.head()

raw_df[['Time', 'V1', 'V2', 'V3', 'V4', 'V5', 'V26', 'V27', 'V28', 'Amount', 'Class']].describe()

Examine the class label imbalance

Let's look at the dataset imbalance:

neg, pos = np.bincount(raw_df['Class'])

total = neg + pos

print('Examples:\n Total: {}\n Positive: {} ({:.2f}% of total)\n'.format(

total, pos, 100 * pos / total))

Examples:

Total: 284807

Positive: 492 (0.17% of total)

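This shows how small the fraction of positive samples is.

Clean, split and normalize the data

The raw data has a few issues. First, the Time and Amount columns are too variable to use directly. Drop the Time column (since it's not clear what it means) and take the log of the Amount column to reduce its range.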

cleaned_df = raw_df.copy()

# You don't want the `Time` column.

cleaned_df.pop('Time')

# The `Amount` column covers a huge range. Convert to log-space.

eps = 0.001 # 0 => 0.1¢

cleaned_df['Log Amount'] = np.log(cleaned_df.pop('Amount')+eps)
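To get a feel for what the log transform does to the range, here is a minimal sketch on a few made-up amounts. The sample values are hypothetical (not taken from the dataset), and the sketch uses only the standard library, whereas the tutorial applies `np.log` to the whole column.

```python
import math

EPS = 0.001  # same offset as above: keeps log() defined for a zero amount

# Hypothetical transaction amounts, chosen only to span a wide range.
amounts = [0.0, 1.0, 100.0, 25000.0]

log_amounts = [math.log(a + EPS) for a in amounts]

for amount, log_amount in zip(amounts, log_amounts):
    print(f"{amount:>10.2f} -> {log_amount:7.3f}")

# The raw values span 25,000 units; the transformed values span far fewer.
log_range = max(log_amounts) - min(log_amounts)
print(f"log-space range: {log_range:.2f}")
```

The offset matters only near zero: for larger amounts, adding 0.001 barely changes the logarithm.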

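Split the dataset into train, validation, and test sets. The validation set is used during model fitting to evaluate the loss and any metrics; however, the model is not fit on this data. The test set is completely unused during the training phase and is only used at the end to evaluate how well the model generalizes to new data. This is especially important with imbalanced datasets, where overfitting is a significant concern because of the lack of training data.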

# Use a utility from sklearn to split and shuffle your dataset.

train_df, test_df = train_test_split(cleaned_df, test_size=0.2)

train_df, val_df = train_test_split(train_df, test_size=0.2)

# Form np arrays of labels and features.

train_labels = np.array(train_df.pop('Class'))

bool_train_labels = train_labels != 0

val_labels = np.array(val_df.pop('Class'))

test_labels = np.array(test_df.pop('Class'))

train_features = np.array(train_df)

val_features = np.array(val_df)

test_features = np.array(test_df)
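The two chained 20% splits leave roughly 64% of the data for training, 16% for validation, and 20% for testing. The arithmetic can be sketched as follows, assuming the held-out share is rounded up to a whole number of rows; `split_sizes` is a hypothetical helper written for this illustration, not part of sklearn, and the rounding rule is an assumption that happens to reproduce the shapes the tutorial prints after scaling.

```python
import math

def split_sizes(n_samples, test_size=0.2):
    """Return (n_kept, n_held_out), rounding the held-out share up (assumed)."""
    n_held_out = math.ceil(test_size * n_samples)
    return n_samples - n_held_out, n_held_out

total = 284807
n_rest, n_test = split_sizes(total)    # first split: hold out the test set
n_train, n_val = split_sizes(n_rest)   # second split: hold out validation

print(n_train, n_val, n_test)
print(f"train {n_train / total:.1%}, "
      f"val {n_val / total:.1%}, "
      f"test {n_test / total:.1%}")
```

Note that the second split takes 20% of the remaining 80%, which is why the validation set is 16% of the full dataset rather than 20%.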

Normalize the input features using the sklearn StandardScaler. This sets the mean to 0 and the standard deviation to 1.

Note: The StandardScaler is fit using only train_features, to be sure the model is not peeking at the validation or test sets.

scaler = StandardScaler()

train_features = scaler.fit_transform(train_features)

val_features = scaler.transform(val_features)

test_features = scaler.transform(test_features)

train_features = np.clip(train_features, -5, 5)

val_features = np.clip(val_features, -5, 5)

test_features = np.clip(test_features, -5, 5)

print('Training labels shape:', train_labels.shape)

print('Validation labels shape:', val_labels.shape)

print('Test labels shape:', test_labels.shape)

print('Training features shape:', train_features.shape)

print('Validation features shape:', val_features.shape)

print('Test features shape:', test_features.shape)

Training labels shape: (182276,)

Validation labels shape: (45569,)

Test labels shape: (56962,)

Training features shape: (182276, 29)

Validation features shape: (45569, 29)

Test features shape: (56962, 29)
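The fit-on-train-only rule and the clipping step can be illustrated on a single made-up feature. This is a minimal standard-library sketch, not the sklearn implementation: `fit_scaler` and `transform` are hypothetical helpers, the data is invented, and the population standard deviation is used on the assumption that it mirrors StandardScaler's default.

```python
import statistics

def fit_scaler(values):
    """Learn the mean and population standard deviation from training data."""
    return statistics.fmean(values), statistics.pstdev(values)

def transform(values, mean, std, clip=5.0):
    """Standardize with the training statistics, then clip to [-clip, clip]."""
    return [max(-clip, min(clip, (v - mean) / std)) for v in values]

# Hypothetical single-feature columns; the last validation value is an outlier.
train = [1.0, 2.0, 3.0, 4.0, 5.0]
val = [3.0, 6.0, 40.0]

mean, std = fit_scaler(train)            # statistics come from train only
train_scaled = transform(train, mean, std)
val_scaled = transform(val, mean, std)   # reuse them; never refit on val/test

print(train_scaled)
print(val_scaled)
```

The training column ends up with mean 0 and standard deviation 1, while the validation column does not have to: it is simply expressed in the training set's units, and its outlier is capped at 5.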

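Caution: If you want to deploy a model, it's critical that you preserve the preprocessing calculations. The easiest way is to implement them as layers and attach them to your model before export.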

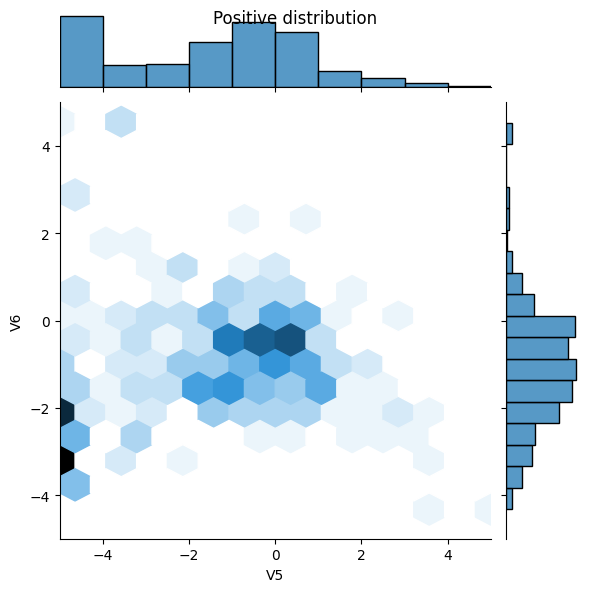

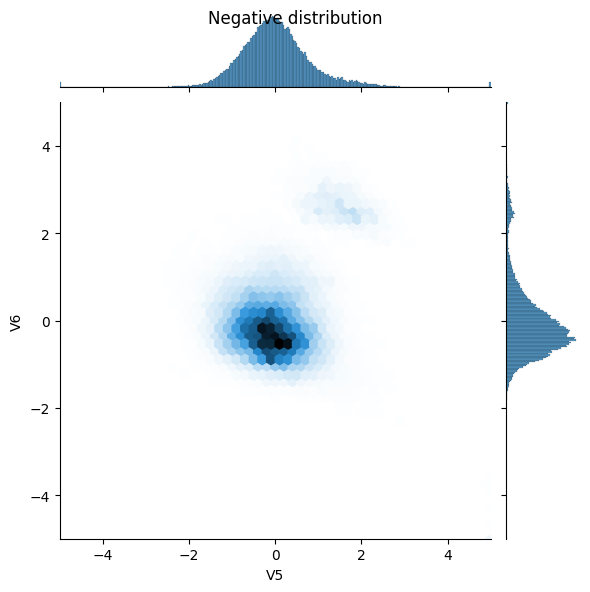

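Look at the data distribution

Next, compare the distributions of the positive and negative examples over a few features. Good questions to ask yourself at this point are:

- Do these distributions make sense?

  - Yes. You've normalized the input, and the values are mostly concentrated in the +/- 2 range.

- Can you see the difference between the distributions?

  - Yes, the positive examples contain a much higher rate of extreme values.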

pos_df = pd.DataFrame(train_features[ bool_train_labels], columns=train_df.columns)

neg_df = pd.DataFrame(train_features[~bool_train_labels], columns=train_df.columns)

sns.jointplot(x=pos_df['V5'], y=pos_df['V6'],

kind='hex', xlim=(-5,5), ylim=(-5,5))

plt.suptitle("Positive distribution")

sns.jointplot(x=neg_df['V5'], y=neg_df['V6'],

kind='hex', xlim=(-5,5), ylim=(-5,5))

_ = plt.suptitle("Negative distribution")

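Define the model and metrics

Define a function that creates a simple neural network with a densely connected hidden layer, a dropout layer to reduce overfitting, and a sigmoid output layer that returns the probability of a transaction being fraudulent: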

METRICS = [

keras.metrics.TruePositives(name='tp'),

keras.metrics.FalsePositives(name='fp'),

keras.metrics.TrueNegatives(name='tn'),

keras.metrics.FalseNegatives(name='fn'),

keras.metrics.BinaryAccuracy(name='accuracy'),

keras.metrics.Precision(name='precision'),

keras.metrics.Recall(name='recall'),

keras.metrics.AUC(name='auc'),

keras.metrics.AUC(name='prc', curve='PR'), # precision-recall curve

]

def make_model(metrics=METRICS, output_bias=None):

    if output_bias is not None:

        output_bias = tf.keras.initializers.Constant(output_bias)

    model = keras.Sequential([

        keras.layers.Dense(

            16, activation='relu',

            input_shape=(train_features.shape[-1],)),

        keras.layers.Dropout(0.5),

        keras.layers.Dense(1, activation='sigmoid',

                           bias_initializer=output_bias),

    ])

    model.compile(

        optimizer=keras.optimizers.Adam(learning_rate=1e-3),

        loss=keras.losses.BinaryCrossentropy(),

        metrics=metrics)

    return model

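Understanding useful metrics

Notice that a few of the metrics defined above can be computed by the model and will be helpful when evaluating its performance.

- False negatives and false positives are samples that were incorrectly classified.

- True negatives and true positives are samples that were correctly classified.

- Accuracy is the percentage of examples correctly classified.

\(\frac{\text{true positives + true negatives} }{\text{total samples} }\)

- Precision is the percentage of predicted positives that were correctly classified.

\(\frac{\text{true positives} }{\text{true positives + false positives} }\)

- Recall is the percentage of actual positives that were correctly classified.

\(\frac{\text{true positives} }{\text{true positives + false negatives} }\)

- AUC refers to the area under the Receiver Operating Characteristic curve (ROC-AUC). This metric is equal to the probability that a classifier will rank a random positive sample higher than a random negative sample.

- AUPRC refers to the area under the precision-recall curve. This metric is computed from precision-recall pairs at different probability thresholds.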
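The ranking interpretation of ROC-AUC can be checked directly on a toy example by counting correctly ordered (positive, negative) pairs. This is a sketch only: `pairwise_auc` is a hypothetical helper and the labels and scores are made up. Keras approximates the curve over a fixed set of thresholds, so its reported value can differ slightly from this exact pairwise count.

```python
from itertools import product

def pairwise_auc(labels, scores):
    """ROC-AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties count as half a correct ranking.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p, n in product(pos, neg))
    return wins / (len(pos) * len(neg))

# Hypothetical labels and model scores, for illustration only.
labels = [0, 0, 1, 0, 1, 0]
scores = [0.10, 0.40, 0.35, 0.20, 0.80, 0.05]

print(pairwise_auc(labels, scores))
```

Here the positive scored 0.35 is outranked by one negative (0.40), so 7 of the 8 pairs are ordered correctly.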

Note: Accuracy is not a helpful metric for this task. You can reach 99.8%+ accuracy on this task simply by predicting False all the time.
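A short sketch of why accuracy misleads here, using the dataset counts quoted in this tutorial. The three helper functions are written for this illustration, returning 0.0 for an empty denominator is a convention chosen here, and the second set of confusion counts is hypothetical.

```python
def accuracy(tp, fp, tn, fn):
    return (tp + tn) / (tp + fp + tn + fn)

def precision(tp, fp):
    # Convention for this sketch: 0.0 when nothing was predicted positive.
    return tp / (tp + fp) if (tp + fp) else 0.0

def recall(tp, fn):
    # Convention for this sketch: 0.0 when there are no actual positives.
    return tp / (tp + fn) if (tp + fn) else 0.0

# Dataset counts quoted earlier in the tutorial.
pos, total = 492, 284807
neg = total - pos

# A "model" that always predicts the negative class.
baseline_accuracy = accuracy(tp=0, fp=0, tn=neg, fn=pos)
baseline_recall = recall(tp=0, fn=pos)
print(f"always-negative accuracy: {baseline_accuracy:.4f}")
print(f"always-negative recall:   {baseline_recall:.4f}")

# Hypothetical confusion counts for a classifier that does find fraud.
tp, fp, tn, fn = 62, 9, 45486, 12
print(f"precision: {precision(tp, fp):.4f}, recall: {recall(tp, fn):.4f}")
```

The always-negative baseline scores above 99.8% accuracy while catching no fraud at all, which is why precision, recall, and the area-under-curve metrics are tracked instead.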

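Baseline model

Build the model

Now create and train your model using the function that was defined earlier. Notice that the model is fit using a batch size of 2048, which is larger than the Keras default of 32. This is important to ensure that each batch has a decent chance of containing a few positive samples. If the batch size were too small, many batches would likely contain no fraudulent transactions to learn from.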
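The effect of batch size can be estimated with a back-of-the-envelope calculation. This sketch assumes each example in a batch is independently fraudulent with probability pos/total, which only approximates drawing from a shuffled finite dataset; `positives_in_batch` is a hypothetical helper.

```python
pos, total = 492, 284807
p = pos / total  # fraction of fraudulent transactions in the full dataset

def positives_in_batch(batch_size, p):
    """Return (expected positives, P(at least one positive)) for one batch."""
    expected = batch_size * p
    p_at_least_one = 1.0 - (1.0 - p) ** batch_size
    return expected, p_at_least_one

for batch_size in (32, 2048):
    expected, p_any = positives_in_batch(batch_size, p)
    print(f"batch_size={batch_size:5d}: expected positives={expected:.2f}, "
          f"P(at least one)={p_any:.3f}")
```

Under this assumption, only about one batch in twenty of size 32 would contain any fraud, while a batch of 2048 almost always contains a few positive examples.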

Note: This model will not handle the class imbalance well. You will improve it later in this tutorial.

EPOCHS = 100

BATCH_SIZE = 2048

early_stopping = tf.keras.callbacks.EarlyStopping(

monitor='val_prc',

verbose=1,

patience=10,

mode='max',

restore_best_weights=True)

model = make_model()

model.summary()

Model: "sequential"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

dense (Dense) (None, 16) 480

dropout (Dropout) (None, 16) 0

dense_1 (Dense) (None, 1) 17

=================================================================

Total params: 497

Trainable params: 497

Non-trainable params: 0

_________________________________________________________________
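The parameter counts in the summary follow from the layer sizes: a dense layer has one weight per input-unit pair plus one bias per unit. A minimal check, where `dense_params` is a hypothetical helper written for this illustration:

```python
def dense_params(n_inputs, n_units):
    """Weights plus one bias per unit for a fully connected layer."""
    return n_inputs * n_units + n_units

n_features = 29  # width of train_features in this tutorial

hidden = dense_params(n_features, 16)  # Dense(16) on the 29 input features
output = dense_params(16, 1)           # Dense(1) on the 16 hidden units
# Dropout has no trainable parameters, so it adds nothing to the total.

print(hidden, output, hidden + output)
```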

Test run the model:

model.predict(train_features[:10])

1/1 [==============================] - 0s 409ms/step

array([[0.31587434],

[0.22539072],

[0.68509597],

[0.19135255],

[0.46167344],

[0.70078653],

[0.40190262],

[0.2730636 ],

[0.7196849 ],

[0.30625975]], dtype=float32)

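Optional: Set the correct initial bias

These initial guesses are not great. You know the dataset is imbalanced. Set the output layer's bias to reflect that (see: A Recipe for Training Neural Networks: "init well"). This can help with initial convergence.

With the default bias initialization the loss should be about math.log(2) = 0.69314: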

results = model.evaluate(train_features, train_labels, batch_size=BATCH_SIZE, verbose=0)

print("Loss: {:0.4f}".format(results[0]))

Loss: 0.7212

The correct bias to set can be derived from:

\[ p_0 = pos/(pos + neg) = 1/(1+e^{-b_0}) \]

\[ b_0 = -\log_e(1/p_0 - 1) \]

\[ b_0 = \log_e(pos/neg) \]

initial_bias = np.log([pos/neg])

initial_bias

array([-6.35935934])
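As a sanity check on the derivation, passing this bias through a sigmoid should return the base rate of the positive class. A minimal standard-library sketch (the `sigmoid` helper is defined here for illustration):

```python
import math

pos, total = 492, 284807
neg = total - pos

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

b0 = math.log(pos / neg)   # the bias derived above
p0 = pos / total           # base rate of the positive class

print(f"b0 = {b0:.4f}")
print(f"sigmoid(b0) = {sigmoid(b0):.6f}, pos/total = {p0:.6f}")
```

The two printed probabilities agree, which is the point of the derivation: with all hidden activations contributing nothing, the output layer would predict the observed fraud rate.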

Set that as the initial bias, and the model will give much more reasonable initial guesses.

They should be near pos/total = 0.0017.

model = make_model(output_bias=initial_bias)

model.predict(train_features[:10])

1/1 [==============================] - 0s 48ms/step

array([[0.00121839],

[0.00060421],

[0.0047377 ],

[0.00021598],

[0.00130969],

[0.00171389],

[0.00158324],

[0.00084963],

[0.0011057 ],

[0.00022113]], dtype=float32)

With this initialization the initial loss should be approximately:

\[-p_0\log(p_0)-(1-p_0)\log(1-p_0) \approx 0.0127\]

results = model.evaluate(train_features, train_labels, batch_size=BATCH_SIZE, verbose=0)

print("Loss: {:0.4f}".format(results[0]))

Loss: 0.0141
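Both reference losses can be reproduced by evaluating the binary cross-entropy of a constant prediction. This is a sketch under the simplifying assumption that the network outputs the same probability for every example; `constant_prediction_loss` is a hypothetical helper. With the exact base rate 492/284807 the careful-bias loss comes to about 0.0127, and rounding the base rate to 0.0018 first gives about 0.0132.

```python
import math

pos, total = 492, 284807
p0 = pos / total

def constant_prediction_loss(p_pred, p_true):
    """Mean binary cross-entropy when every example gets the same p_pred."""
    return -(p_true * math.log(p_pred) + (1 - p_true) * math.log(1 - p_pred))

naive = constant_prediction_loss(0.5, p0)    # zero bias: sigmoid(0) = 0.5
careful = constant_prediction_loss(p0, p0)   # bias set so the output is p0

print(f"naive: {naive:.4f}, careful: {careful:.4f}")
print(f"ratio: {naive / careful:.1f}")
```

The measured losses (0.7212 and 0.0141) sit a little above these idealized values because the untrained hidden layer still perturbs each prediction.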

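This initial loss is about 50 times less than it would have been with naive initialization.

This way the model doesn't need to spend the first few epochs just learning that positive examples are unlikely. It also makes it easier to read plots of the loss during training.

Checkpoint the initial weights

To make the various training runs more comparable, keep this initial model's weights in a checkpoint file, and load them into each model before training: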

initial_weights = os.path.join(tempfile.mkdtemp(), 'initial_weights')

model.save_weights(initial_weights)

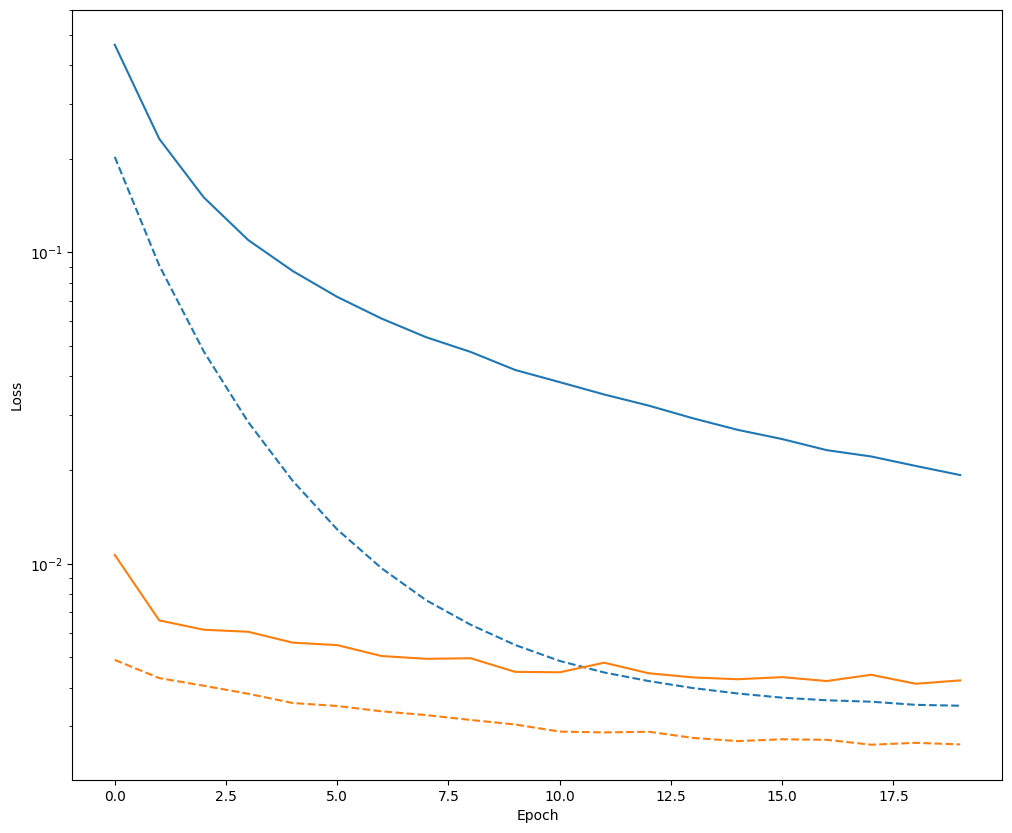

Confirm that the bias fix helps

Before moving on, quickly confirm that the careful bias initialization actually helped.

Train the model for 20 epochs, with and without this careful initialization, and compare the losses:

model = make_model()

model.load_weights(initial_weights)

model.layers[-1].bias.assign([0.0])

zero_bias_history = model.fit(

train_features,

train_labels,

batch_size=BATCH_SIZE,

epochs=20,

validation_data=(val_features, val_labels),

verbose=0)

model = make_model()

model.load_weights(initial_weights)

careful_bias_history = model.fit(

train_features,

train_labels,

batch_size=BATCH_SIZE,

epochs=20,

validation_data=(val_features, val_labels),

verbose=0)

def plot_loss(history, label, n):

    # Use a log scale on y-axis to show the wide range of values.

    plt.semilogy(history.epoch, history.history['loss'],

                 color=colors[n], label='Train ' + label)

    plt.semilogy(history.epoch, history.history['val_loss'],

                 color=colors[n], label='Val ' + label,

                 linestyle="--")

    plt.xlabel('Epoch')

    plt.ylabel('Loss')

plot_loss(zero_bias_history, "Zero Bias", 0)

plot_loss(careful_bias_history, "Careful Bias", 1)

The above figure makes it clear: in terms of validation loss, on this problem, this careful initialization gives a clear advantage.

Train the model

model = make_model()

model.load_weights(initial_weights)

baseline_history = model.fit(

train_features,

train_labels,

batch_size=BATCH_SIZE,

epochs=EPOCHS,

callbacks=[early_stopping],

validation_data=(val_features, val_labels))

Epoch 1/100 90/90 [==============================] - 2s 11ms/step - loss: 0.0109 - tp: 127.0000 - fp: 63.0000 - tn: 227394.0000 - fn: 261.0000 - accuracy: 0.9986 - precision: 0.6684 - recall: 0.3273 - auc: 0.8005 - prc: 0.3543 - val_loss: 0.0048 - val_tp: 36.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 38.0000 - val_accuracy: 0.9990 - val_precision: 0.8000 - val_recall: 0.4865 - val_auc: 0.9185 - val_prc: 0.7113 Epoch 2/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0068 - tp: 146.0000 - fp: 27.0000 - tn: 181935.0000 - fn: 168.0000 - accuracy: 0.9989 - precision: 0.8439 - recall: 0.4650 - auc: 0.8609 - prc: 0.5527 - val_loss: 0.0042 - val_tp: 50.0000 - val_fp: 10.0000 - val_tn: 45485.0000 - val_fn: 24.0000 - val_accuracy: 0.9993 - val_precision: 0.8333 - val_recall: 0.6757 - val_auc: 0.9187 - val_prc: 0.7042 Epoch 3/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0056 - tp: 173.0000 - fp: 30.0000 - tn: 181932.0000 - fn: 141.0000 - accuracy: 0.9991 - precision: 0.8522 - recall: 0.5510 - auc: 0.8975 - prc: 0.6468 - val_loss: 0.0040 - val_tp: 53.0000 - val_fp: 10.0000 - val_tn: 45485.0000 - val_fn: 21.0000 - val_accuracy: 0.9993 - val_precision: 0.8413 - val_recall: 0.7162 - val_auc: 0.9187 - val_prc: 0.6656 Epoch 4/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0059 - tp: 159.0000 - fp: 26.0000 - tn: 181936.0000 - fn: 155.0000 - accuracy: 0.9990 - precision: 0.8595 - recall: 0.5064 - auc: 0.8886 - prc: 0.6378 - val_loss: 0.0038 - val_tp: 53.0000 - val_fp: 10.0000 - val_tn: 45485.0000 - val_fn: 21.0000 - val_accuracy: 0.9993 - val_precision: 0.8413 - val_recall: 0.7162 - val_auc: 0.9255 - val_prc: 0.7243 Epoch 5/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0057 - tp: 155.0000 - fp: 26.0000 - tn: 181936.0000 - fn: 159.0000 - accuracy: 0.9990 - precision: 0.8564 - recall: 0.4936 - auc: 0.8904 - prc: 0.6365 - val_loss: 0.0037 - val_tp: 56.0000 - val_fp: 11.0000 - val_tn: 45484.0000 - 
val_fn: 18.0000 - val_accuracy: 0.9994 - val_precision: 0.8358 - val_recall: 0.7568 - val_auc: 0.9255 - val_prc: 0.7067 Epoch 6/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0053 - tp: 165.0000 - fp: 26.0000 - tn: 181936.0000 - fn: 149.0000 - accuracy: 0.9990 - precision: 0.8639 - recall: 0.5255 - auc: 0.8793 - prc: 0.6565 - val_loss: 0.0035 - val_tp: 58.0000 - val_fp: 11.0000 - val_tn: 45484.0000 - val_fn: 16.0000 - val_accuracy: 0.9994 - val_precision: 0.8406 - val_recall: 0.7838 - val_auc: 0.9322 - val_prc: 0.7496 Epoch 7/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0050 - tp: 173.0000 - fp: 33.0000 - tn: 181929.0000 - fn: 141.0000 - accuracy: 0.9990 - precision: 0.8398 - recall: 0.5510 - auc: 0.9049 - prc: 0.6720 - val_loss: 0.0034 - val_tp: 58.0000 - val_fp: 11.0000 - val_tn: 45484.0000 - val_fn: 16.0000 - val_accuracy: 0.9994 - val_precision: 0.8406 - val_recall: 0.7838 - val_auc: 0.9322 - val_prc: 0.7574 Epoch 8/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0052 - tp: 173.0000 - fp: 25.0000 - tn: 181937.0000 - fn: 141.0000 - accuracy: 0.9991 - precision: 0.8737 - recall: 0.5510 - auc: 0.8938 - prc: 0.6419 - val_loss: 0.0033 - val_tp: 59.0000 - val_fp: 11.0000 - val_tn: 45484.0000 - val_fn: 15.0000 - val_accuracy: 0.9994 - val_precision: 0.8429 - val_recall: 0.7973 - val_auc: 0.9389 - val_prc: 0.7669 Epoch 9/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0044 - tp: 190.0000 - fp: 30.0000 - tn: 181932.0000 - fn: 124.0000 - accuracy: 0.9992 - precision: 0.8636 - recall: 0.6051 - auc: 0.9178 - prc: 0.7096 - val_loss: 0.0032 - val_tp: 59.0000 - val_fp: 11.0000 - val_tn: 45484.0000 - val_fn: 15.0000 - val_accuracy: 0.9994 - val_precision: 0.8429 - val_recall: 0.7973 - val_auc: 0.9389 - val_prc: 0.7802 Epoch 10/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0047 - tp: 170.0000 - fp: 34.0000 - tn: 181928.0000 - fn: 144.0000 - accuracy: 0.9990 - precision: 0.8333 - 
recall: 0.5414 - auc: 0.9130 - prc: 0.6804 - val_loss: 0.0032 - val_tp: 60.0000 - val_fp: 11.0000 - val_tn: 45484.0000 - val_fn: 14.0000 - val_accuracy: 0.9995 - val_precision: 0.8451 - val_recall: 0.8108 - val_auc: 0.9457 - val_prc: 0.7949 Epoch 11/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0048 - tp: 187.0000 - fp: 37.0000 - tn: 181925.0000 - fn: 127.0000 - accuracy: 0.9991 - precision: 0.8348 - recall: 0.5955 - auc: 0.9130 - prc: 0.6679 - val_loss: 0.0030 - val_tp: 59.0000 - val_fp: 11.0000 - val_tn: 45484.0000 - val_fn: 15.0000 - val_accuracy: 0.9994 - val_precision: 0.8429 - val_recall: 0.7973 - val_auc: 0.9457 - val_prc: 0.8003 Epoch 12/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0046 - tp: 185.0000 - fp: 37.0000 - tn: 181925.0000 - fn: 129.0000 - accuracy: 0.9991 - precision: 0.8333 - recall: 0.5892 - auc: 0.9130 - prc: 0.6696 - val_loss: 0.0029 - val_tp: 59.0000 - val_fp: 11.0000 - val_tn: 45484.0000 - val_fn: 15.0000 - val_accuracy: 0.9994 - val_precision: 0.8429 - val_recall: 0.7973 - val_auc: 0.9457 - val_prc: 0.8113 Epoch 13/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0042 - tp: 189.0000 - fp: 29.0000 - tn: 181933.0000 - fn: 125.0000 - accuracy: 0.9992 - precision: 0.8670 - recall: 0.6019 - auc: 0.9244 - prc: 0.7167 - val_loss: 0.0029 - val_tp: 60.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 14.0000 - val_accuracy: 0.9994 - val_precision: 0.8333 - val_recall: 0.8108 - val_auc: 0.9457 - val_prc: 0.8130 Epoch 14/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0042 - tp: 189.0000 - fp: 30.0000 - tn: 181932.0000 - fn: 125.0000 - accuracy: 0.9991 - precision: 0.8630 - recall: 0.6019 - auc: 0.9227 - prc: 0.7123 - val_loss: 0.0029 - val_tp: 61.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 13.0000 - val_accuracy: 0.9995 - val_precision: 0.8356 - val_recall: 0.8243 - val_auc: 0.9457 - val_prc: 0.8147 Epoch 15/100 90/90 [==============================] - 1s 
6ms/step - loss: 0.0041 - tp: 197.0000 - fp: 33.0000 - tn: 181929.0000 - fn: 117.0000 - accuracy: 0.9992 - precision: 0.8565 - recall: 0.6274 - auc: 0.9212 - prc: 0.7157 - val_loss: 0.0028 - val_tp: 60.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 14.0000 - val_accuracy: 0.9994 - val_precision: 0.8333 - val_recall: 0.8108 - val_auc: 0.9457 - val_prc: 0.8243 Epoch 16/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0042 - tp: 180.0000 - fp: 26.0000 - tn: 181936.0000 - fn: 134.0000 - accuracy: 0.9991 - precision: 0.8738 - recall: 0.5732 - auc: 0.9291 - prc: 0.7122 - val_loss: 0.0028 - val_tp: 61.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 13.0000 - val_accuracy: 0.9995 - val_precision: 0.8356 - val_recall: 0.8243 - val_auc: 0.9457 - val_prc: 0.8259 Epoch 17/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0041 - tp: 188.0000 - fp: 28.0000 - tn: 181934.0000 - fn: 126.0000 - accuracy: 0.9992 - precision: 0.8704 - recall: 0.5987 - auc: 0.9243 - prc: 0.7179 - val_loss: 0.0027 - val_tp: 61.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 13.0000 - val_accuracy: 0.9995 - val_precision: 0.8356 - val_recall: 0.8243 - val_auc: 0.9457 - val_prc: 0.8291 Epoch 18/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0043 - tp: 192.0000 - fp: 26.0000 - tn: 181936.0000 - fn: 122.0000 - accuracy: 0.9992 - precision: 0.8807 - recall: 0.6115 - auc: 0.9226 - prc: 0.6985 - val_loss: 0.0027 - val_tp: 61.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 13.0000 - val_accuracy: 0.9995 - val_precision: 0.8356 - val_recall: 0.8243 - val_auc: 0.9457 - val_prc: 0.8330 Epoch 19/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0039 - tp: 201.0000 - fp: 30.0000 - tn: 181932.0000 - fn: 113.0000 - accuracy: 0.9992 - precision: 0.8701 - recall: 0.6401 - auc: 0.9211 - prc: 0.7305 - val_loss: 0.0027 - val_tp: 62.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - 
val_precision: 0.8378 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8351 Epoch 20/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0041 - tp: 194.0000 - fp: 31.0000 - tn: 181931.0000 - fn: 120.0000 - accuracy: 0.9992 - precision: 0.8622 - recall: 0.6178 - auc: 0.9243 - prc: 0.7319 - val_loss: 0.0027 - val_tp: 62.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8378 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8345 Epoch 21/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0037 - tp: 206.0000 - fp: 28.0000 - tn: 181934.0000 - fn: 108.0000 - accuracy: 0.9993 - precision: 0.8803 - recall: 0.6561 - auc: 0.9244 - prc: 0.7550 - val_loss: 0.0027 - val_tp: 62.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8378 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8368 Epoch 22/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0040 - tp: 191.0000 - fp: 30.0000 - tn: 181932.0000 - fn: 123.0000 - accuracy: 0.9992 - precision: 0.8643 - recall: 0.6083 - auc: 0.9275 - prc: 0.7185 - val_loss: 0.0027 - val_tp: 62.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8378 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8337 Epoch 23/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0040 - tp: 199.0000 - fp: 32.0000 - tn: 181930.0000 - fn: 115.0000 - accuracy: 0.9992 - precision: 0.8615 - recall: 0.6338 - auc: 0.9243 - prc: 0.7164 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8378 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8394 Epoch 24/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0039 - tp: 199.0000 - fp: 29.0000 - tn: 181933.0000 - fn: 115.0000 - accuracy: 0.9992 - precision: 0.8728 - recall: 0.6338 - auc: 0.9259 - prc: 
0.7225 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8378 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8409 Epoch 25/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0037 - tp: 197.0000 - fp: 28.0000 - tn: 181934.0000 - fn: 117.0000 - accuracy: 0.9992 - precision: 0.8756 - recall: 0.6274 - auc: 0.9260 - prc: 0.7478 - val_loss: 0.0028 - val_tp: 62.0000 - val_fp: 13.0000 - val_tn: 45482.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8267 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8391 Epoch 26/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0036 - tp: 200.0000 - fp: 24.0000 - tn: 181938.0000 - fn: 114.0000 - accuracy: 0.9992 - precision: 0.8929 - recall: 0.6369 - auc: 0.9308 - prc: 0.7609 - val_loss: 0.0027 - val_tp: 62.0000 - val_fp: 13.0000 - val_tn: 45482.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8267 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8384 Epoch 27/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0038 - tp: 203.0000 - fp: 28.0000 - tn: 181934.0000 - fn: 111.0000 - accuracy: 0.9992 - precision: 0.8788 - recall: 0.6465 - auc: 0.9308 - prc: 0.7457 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 13.0000 - val_tn: 45482.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8267 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8446 Epoch 28/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0036 - tp: 199.0000 - fp: 29.0000 - tn: 181933.0000 - fn: 115.0000 - accuracy: 0.9992 - precision: 0.8728 - recall: 0.6338 - auc: 0.9308 - prc: 0.7587 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 13.0000 - val_tn: 45482.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8267 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8427 Epoch 29/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0038 - tp: 
197.0000 - fp: 33.0000 - tn: 181929.0000 - fn: 117.0000 - accuracy: 0.9992 - precision: 0.8565 - recall: 0.6274 - auc: 0.9340 - prc: 0.7425 - val_loss: 0.0027 - val_tp: 62.0000 - val_fp: 13.0000 - val_tn: 45482.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8267 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8402 Epoch 30/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0037 - tp: 198.0000 - fp: 27.0000 - tn: 181935.0000 - fn: 116.0000 - accuracy: 0.9992 - precision: 0.8800 - recall: 0.6306 - auc: 0.9308 - prc: 0.7488 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8378 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8474 Epoch 31/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0037 - tp: 205.0000 - fp: 34.0000 - tn: 181928.0000 - fn: 109.0000 - accuracy: 0.9992 - precision: 0.8577 - recall: 0.6529 - auc: 0.9308 - prc: 0.7448 - val_loss: 0.0025 - val_tp: 62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9458 - val_prc: 0.8473 Epoch 32/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0037 - tp: 193.0000 - fp: 26.0000 - tn: 181936.0000 - fn: 121.0000 - accuracy: 0.9992 - precision: 0.8813 - recall: 0.6146 - auc: 0.9197 - prc: 0.7402 - val_loss: 0.0027 - val_tp: 62.0000 - val_fp: 13.0000 - val_tn: 45482.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8267 - val_recall: 0.8378 - val_auc: 0.9458 - val_prc: 0.8456 Epoch 33/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0036 - tp: 213.0000 - fp: 31.0000 - tn: 181931.0000 - fn: 101.0000 - accuracy: 0.9993 - precision: 0.8730 - recall: 0.6783 - auc: 0.9340 - prc: 0.7625 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8378 - val_recall: 
0.8378 - val_auc: 0.9457 - val_prc: 0.8464 Epoch 34/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0037 - tp: 202.0000 - fp: 33.0000 - tn: 181929.0000 - fn: 112.0000 - accuracy: 0.9992 - precision: 0.8596 - recall: 0.6433 - auc: 0.9356 - prc: 0.7433 - val_loss: 0.0025 - val_tp: 61.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 13.0000 - val_accuracy: 0.9995 - val_precision: 0.8714 - val_recall: 0.8243 - val_auc: 0.9457 - val_prc: 0.8510 Epoch 35/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0037 - tp: 205.0000 - fp: 38.0000 - tn: 181924.0000 - fn: 109.0000 - accuracy: 0.9992 - precision: 0.8436 - recall: 0.6529 - auc: 0.9420 - prc: 0.7504 - val_loss: 0.0025 - val_tp: 62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8505 Epoch 36/100 90/90 [==============================] - 0s 6ms/step - loss: 0.0039 - tp: 193.0000 - fp: 35.0000 - tn: 181927.0000 - fn: 121.0000 - accuracy: 0.9991 - precision: 0.8465 - recall: 0.6146 - auc: 0.9260 - prc: 0.7359 - val_loss: 0.0025 - val_tp: 62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8499 Epoch 37/100 90/90 [==============================] - 0s 6ms/step - loss: 0.0034 - tp: 204.0000 - fp: 25.0000 - tn: 181937.0000 - fn: 110.0000 - accuracy: 0.9993 - precision: 0.8908 - recall: 0.6497 - auc: 0.9436 - prc: 0.7690 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 13.0000 - val_tn: 45482.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8267 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8453 Epoch 38/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0034 - tp: 214.0000 - fp: 36.0000 - tn: 181926.0000 - fn: 100.0000 - accuracy: 0.9993 - precision: 0.8560 - recall: 0.6815 - auc: 0.9436 - prc: 0.7767 - val_loss: 0.0025 - val_tp: 
62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9458 - val_prc: 0.8513 Epoch 39/100 90/90 [==============================] - 0s 6ms/step - loss: 0.0035 - tp: 213.0000 - fp: 23.0000 - tn: 181939.0000 - fn: 101.0000 - accuracy: 0.9993 - precision: 0.9025 - recall: 0.6783 - auc: 0.9388 - prc: 0.7605 - val_loss: 0.0025 - val_tp: 62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9458 - val_prc: 0.8500 Epoch 40/100 90/90 [==============================] - 0s 6ms/step - loss: 0.0036 - tp: 199.0000 - fp: 30.0000 - tn: 181932.0000 - fn: 115.0000 - accuracy: 0.9992 - precision: 0.8690 - recall: 0.6338 - auc: 0.9484 - prc: 0.7462 - val_loss: 0.0025 - val_tp: 62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8510 Epoch 41/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0037 - tp: 191.0000 - fp: 35.0000 - tn: 181927.0000 - fn: 123.0000 - accuracy: 0.9991 - precision: 0.8451 - recall: 0.6083 - auc: 0.9324 - prc: 0.7398 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9458 - val_prc: 0.8511 Epoch 42/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0035 - tp: 197.0000 - fp: 28.0000 - tn: 181934.0000 - fn: 117.0000 - accuracy: 0.9992 - precision: 0.8756 - recall: 0.6274 - auc: 0.9357 - prc: 0.7594 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 10.0000 - val_tn: 45485.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8611 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8475 Epoch 43/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0035 - tp: 206.0000 - fp: 27.0000 - tn: 181935.0000 - fn: 
108.0000 - accuracy: 0.9993 - precision: 0.8841 - recall: 0.6561 - auc: 0.9468 - prc: 0.7608 - val_loss: 0.0025 - val_tp: 62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8515 Epoch 44/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0033 - tp: 213.0000 - fp: 27.0000 - tn: 181935.0000 - fn: 101.0000 - accuracy: 0.9993 - precision: 0.8875 - recall: 0.6783 - auc: 0.9373 - prc: 0.7850 - val_loss: 0.0025 - val_tp: 62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8481 Epoch 45/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0034 - tp: 207.0000 - fp: 27.0000 - tn: 181935.0000 - fn: 107.0000 - accuracy: 0.9993 - precision: 0.8846 - recall: 0.6592 - auc: 0.9372 - prc: 0.7685 - val_loss: 0.0025 - val_tp: 62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9458 - val_prc: 0.8515 Epoch 46/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0035 - tp: 197.0000 - fp: 23.0000 - tn: 181939.0000 - fn: 117.0000 - accuracy: 0.9992 - precision: 0.8955 - recall: 0.6274 - auc: 0.9340 - prc: 0.7717 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 13.0000 - val_tn: 45482.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8267 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8503 Epoch 47/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0034 - tp: 214.0000 - fp: 31.0000 - tn: 181931.0000 - fn: 100.0000 - accuracy: 0.9993 - precision: 0.8735 - recall: 0.6815 - auc: 0.9468 - prc: 0.7765 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8378 - val_recall: 0.8378 - val_auc: 0.9458 - val_prc: 0.8506 Epoch 
48/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0036 - tp: 200.0000 - fp: 29.0000 - tn: 181933.0000 - fn: 114.0000 - accuracy: 0.9992 - precision: 0.8734 - recall: 0.6369 - auc: 0.9324 - prc: 0.7516 - val_loss: 0.0025 - val_tp: 62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9458 - val_prc: 0.8530 Epoch 49/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0033 - tp: 209.0000 - fp: 23.0000 - tn: 181939.0000 - fn: 105.0000 - accuracy: 0.9993 - precision: 0.9009 - recall: 0.6656 - auc: 0.9421 - prc: 0.7801 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8378 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8479 Epoch 50/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0034 - tp: 206.0000 - fp: 32.0000 - tn: 181930.0000 - fn: 108.0000 - accuracy: 0.9992 - precision: 0.8655 - recall: 0.6561 - auc: 0.9436 - prc: 0.7699 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 8.0000 - val_tn: 45487.0000 - val_fn: 12.0000 - val_accuracy: 0.9996 - val_precision: 0.8857 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8484 Epoch 51/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0034 - tp: 207.0000 - fp: 29.0000 - tn: 181933.0000 - fn: 107.0000 - accuracy: 0.9993 - precision: 0.8771 - recall: 0.6592 - auc: 0.9372 - prc: 0.7687 - val_loss: 0.0025 - val_tp: 62.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8732 - val_recall: 0.8378 - val_auc: 0.9458 - val_prc: 0.8520 Epoch 52/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0033 - tp: 203.0000 - fp: 23.0000 - tn: 181939.0000 - fn: 111.0000 - accuracy: 0.9993 - precision: 0.8982 - recall: 0.6465 - auc: 0.9389 - prc: 0.7772 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 12.0000 - val_tn: 45483.0000 - 
val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8378 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8505 Epoch 53/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0034 - tp: 206.0000 - fp: 32.0000 - tn: 181930.0000 - fn: 108.0000 - accuracy: 0.9992 - precision: 0.8655 - recall: 0.6561 - auc: 0.9420 - prc: 0.7719 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 10.0000 - val_tn: 45485.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8611 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8494 Epoch 54/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0035 - tp: 206.0000 - fp: 30.0000 - tn: 181932.0000 - fn: 108.0000 - accuracy: 0.9992 - precision: 0.8729 - recall: 0.6561 - auc: 0.9356 - prc: 0.7599 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 11.0000 - val_tn: 45484.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8493 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8502 Epoch 55/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0034 - tp: 206.0000 - fp: 27.0000 - tn: 181935.0000 - fn: 108.0000 - accuracy: 0.9993 - precision: 0.8841 - recall: 0.6561 - auc: 0.9341 - prc: 0.7673 - val_loss: 0.0025 - val_tp: 61.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 13.0000 - val_accuracy: 0.9995 - val_precision: 0.8714 - val_recall: 0.8243 - val_auc: 0.9457 - val_prc: 0.8521 Epoch 56/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0032 - tp: 210.0000 - fp: 29.0000 - tn: 181933.0000 - fn: 104.0000 - accuracy: 0.9993 - precision: 0.8787 - recall: 0.6688 - auc: 0.9389 - prc: 0.7840 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 13.0000 - val_tn: 45482.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8267 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8482 Epoch 57/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0033 - tp: 206.0000 - fp: 28.0000 - tn: 181934.0000 - fn: 108.0000 - accuracy: 0.9993 - precision: 
0.8803 - recall: 0.6561 - auc: 0.9468 - prc: 0.7722 - val_loss: 0.0025 - val_tp: 61.0000 - val_fp: 8.0000 - val_tn: 45487.0000 - val_fn: 13.0000 - val_accuracy: 0.9995 - val_precision: 0.8841 - val_recall: 0.8243 - val_auc: 0.9457 - val_prc: 0.8533 Epoch 58/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0034 - tp: 200.0000 - fp: 30.0000 - tn: 181932.0000 - fn: 114.0000 - accuracy: 0.9992 - precision: 0.8696 - recall: 0.6369 - auc: 0.9403 - prc: 0.7666 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 10.0000 - val_tn: 45485.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8611 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8517 Epoch 59/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0034 - tp: 211.0000 - fp: 25.0000 - tn: 181937.0000 - fn: 103.0000 - accuracy: 0.9993 - precision: 0.8941 - recall: 0.6720 - auc: 0.9372 - prc: 0.7704 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 10.0000 - val_tn: 45485.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8611 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8503 Epoch 60/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0032 - tp: 210.0000 - fp: 22.0000 - tn: 181940.0000 - fn: 104.0000 - accuracy: 0.9993 - precision: 0.9052 - recall: 0.6688 - auc: 0.9372 - prc: 0.7833 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 10.0000 - val_tn: 45485.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8611 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8525 Epoch 61/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0033 - tp: 205.0000 - fp: 26.0000 - tn: 181936.0000 - fn: 109.0000 - accuracy: 0.9993 - precision: 0.8874 - recall: 0.6529 - auc: 0.9451 - prc: 0.7705 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 10.0000 - val_tn: 45485.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8611 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8507 Epoch 62/100 90/90 
[==============================] - 1s 6ms/step - loss: 0.0033 - tp: 200.0000 - fp: 32.0000 - tn: 181930.0000 - fn: 114.0000 - accuracy: 0.9992 - precision: 0.8621 - recall: 0.6369 - auc: 0.9420 - prc: 0.7737 - val_loss: 0.0026 - val_tp: 61.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 13.0000 - val_accuracy: 0.9995 - val_precision: 0.8714 - val_recall: 0.8243 - val_auc: 0.9458 - val_prc: 0.8488 Epoch 63/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0034 - tp: 205.0000 - fp: 30.0000 - tn: 181932.0000 - fn: 109.0000 - accuracy: 0.9992 - precision: 0.8723 - recall: 0.6529 - auc: 0.9452 - prc: 0.7794 - val_loss: 0.0025 - val_tp: 61.0000 - val_fp: 8.0000 - val_tn: 45487.0000 - val_fn: 13.0000 - val_accuracy: 0.9995 - val_precision: 0.8841 - val_recall: 0.8243 - val_auc: 0.9457 - val_prc: 0.8510 Epoch 64/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0035 - tp: 202.0000 - fp: 29.0000 - tn: 181933.0000 - fn: 112.0000 - accuracy: 0.9992 - precision: 0.8745 - recall: 0.6433 - auc: 0.9340 - prc: 0.7590 - val_loss: 0.0026 - val_tp: 62.0000 - val_fp: 10.0000 - val_tn: 45485.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8611 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8469 Epoch 65/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0033 - tp: 206.0000 - fp: 27.0000 - tn: 181935.0000 - fn: 108.0000 - accuracy: 0.9993 - precision: 0.8841 - recall: 0.6561 - auc: 0.9436 - prc: 0.7802 - val_loss: 0.0027 - val_tp: 62.0000 - val_fp: 10.0000 - val_tn: 45485.0000 - val_fn: 12.0000 - val_accuracy: 0.9995 - val_precision: 0.8611 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8467 Epoch 66/100 90/90 [==============================] - 1s 6ms/step - loss: 0.0034 - tp: 196.0000 - fp: 31.0000 - tn: 181931.0000 - fn: 118.0000 - accuracy: 0.9992 - precision: 0.8634 - recall: 0.6242 - auc: 0.9403 - prc: 0.7677 - val_loss: 0.0028 - val_tp: 62.0000 - val_fp: 13.0000 - val_tn: 45482.0000 - val_fn: 12.0000 
- val_accuracy: 0.9995 - val_precision: 0.8267 - val_recall: 0.8378 - val_auc: 0.9457 - val_prc: 0.8340 Epoch 67/100 86/90 [===========================>..] - ETA: 0s - loss: 0.0032 - tp: 207.0000 - fp: 30.0000 - tn: 175793.0000 - fn: 98.0000 - accuracy: 0.9993 - precision: 0.8734 - recall: 0.6787 - auc: 0.9452 - prc: 0.7839Restoring model weights from the end of the best epoch: 57. 90/90 [==============================] - 1s 6ms/step - loss: 0.0032 - tp: 212.0000 - fp: 30.0000 - tn: 181932.0000 - fn: 102.0000 - accuracy: 0.9993 - precision: 0.8760 - recall: 0.6752 - auc: 0.9452 - prc: 0.7846 - val_loss: 0.0026 - val_tp: 61.0000 - val_fp: 9.0000 - val_tn: 45486.0000 - val_fn: 13.0000 - val_accuracy: 0.9995 - val_precision: 0.8714 - val_recall: 0.8243 - val_auc: 0.9457 - val_prc: 0.8517 Epoch 67: early stopping

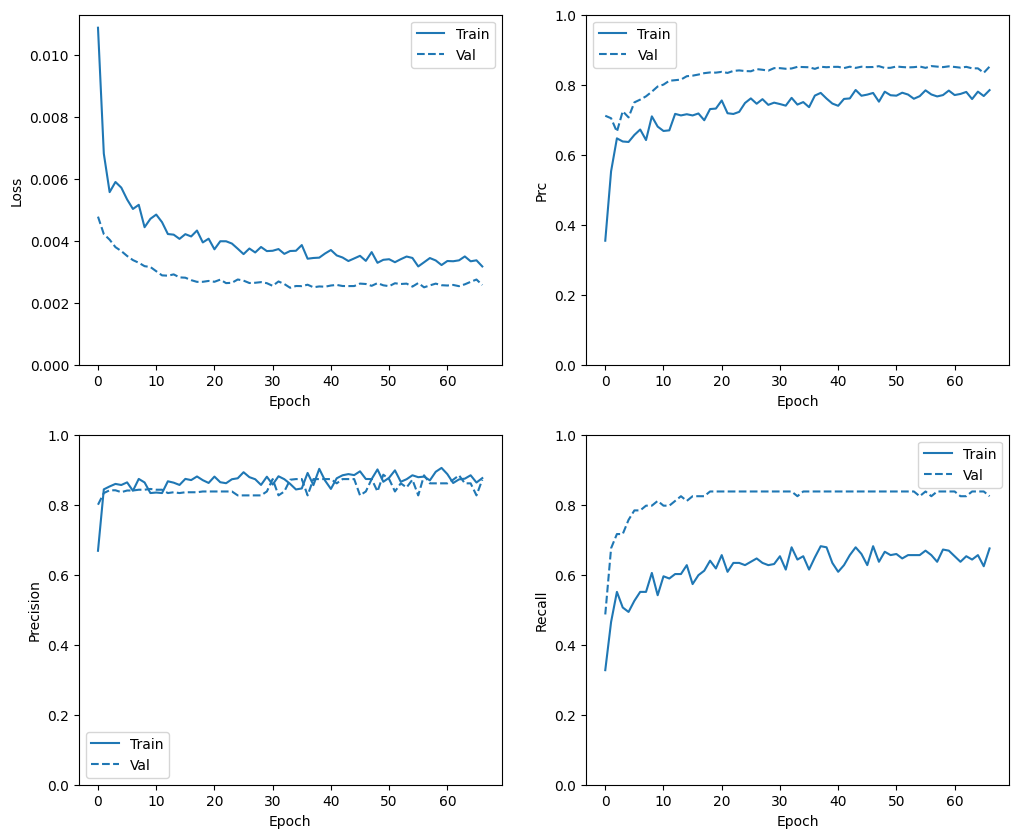

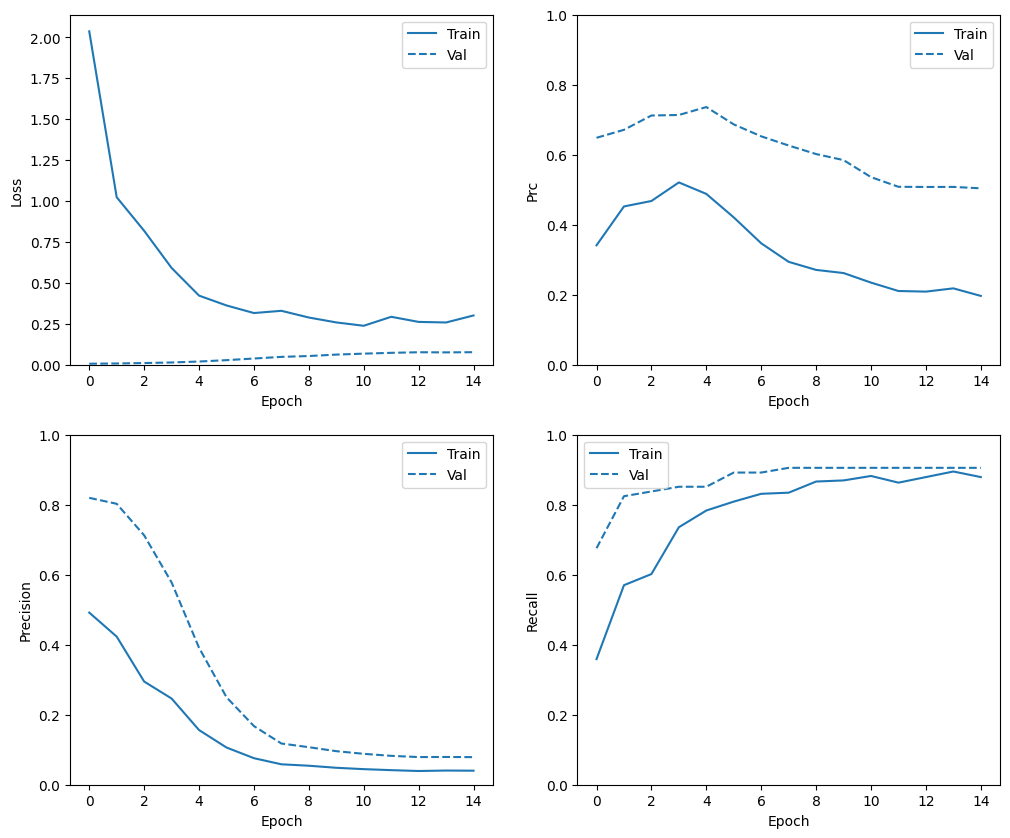

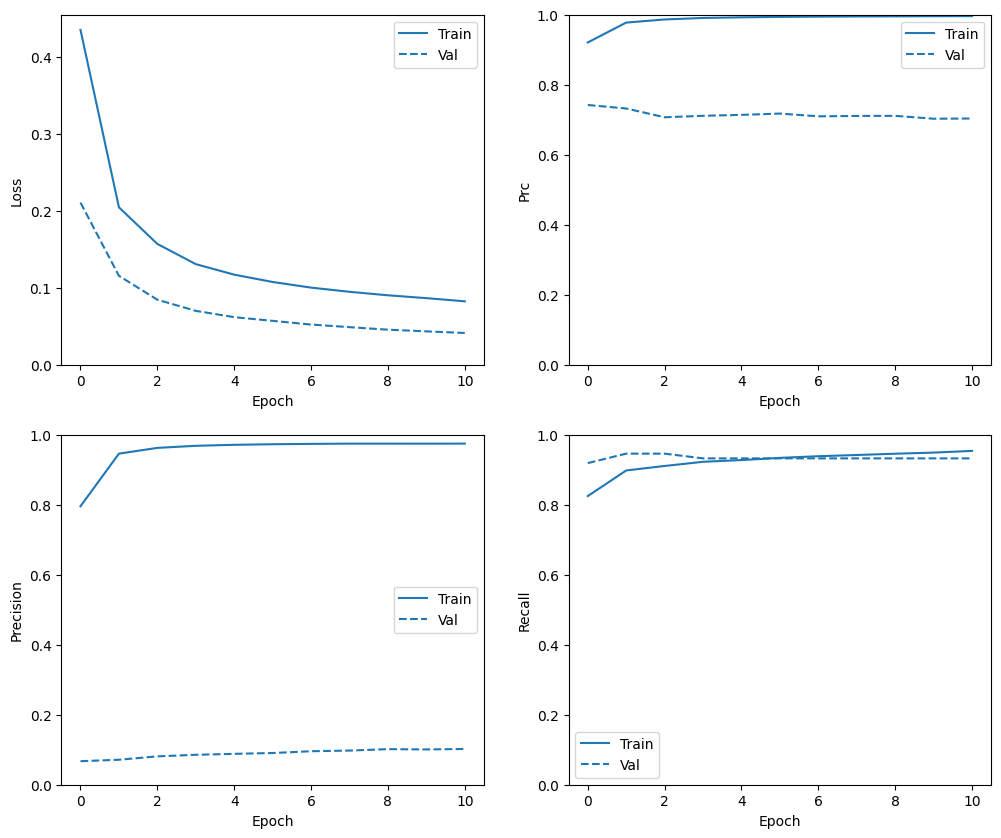

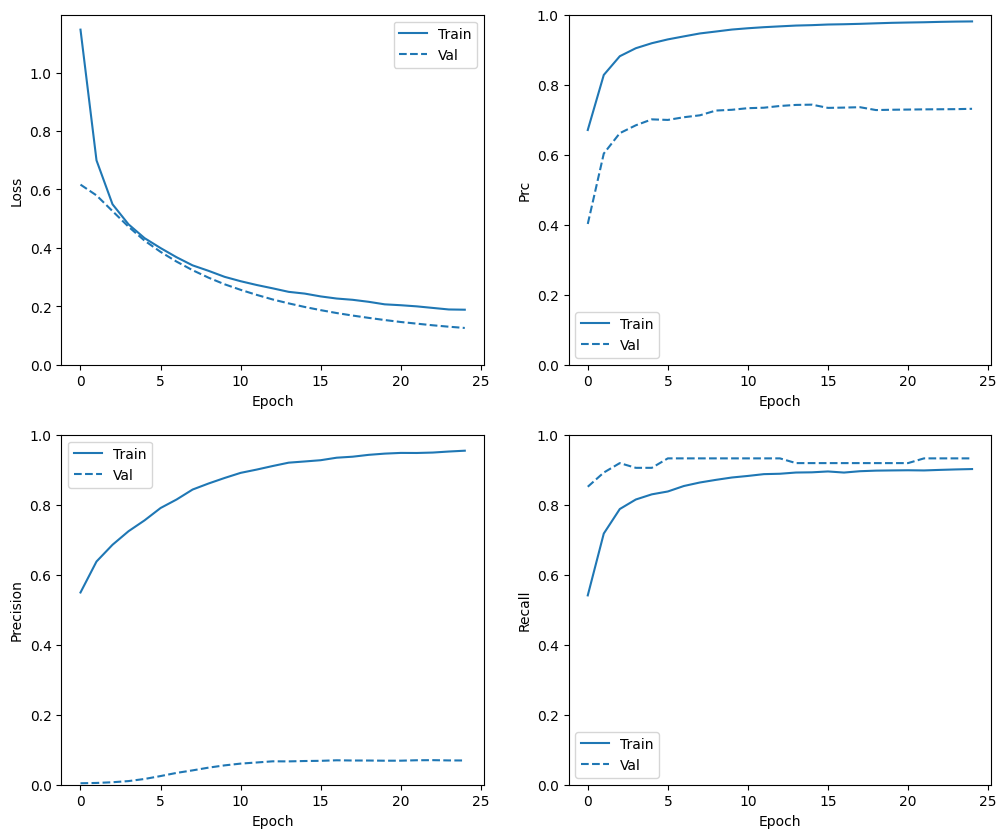

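Check training history

In this section, you will produce plots of the model's accuracy and loss on the training and validation sets. These are useful for checking for overfitting, which you can learn more about in the Overfit and underfit tutorial.

Additionally, you can produce these plots for any of the metrics you created above. False negatives are included as an example.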

def plot_metrics(history):
    metrics = ['loss', 'prc', 'precision', 'recall']
    for n, metric in enumerate(metrics):
        name = metric.replace("_"," ").capitalize()
        plt.subplot(2,2,n+1)
        plt.plot(history.epoch, history.history[metric], color=colors[0], label='Train')
        plt.plot(history.epoch, history.history['val_'+metric],
                 color=colors[0], linestyle="--", label='Val')
        plt.xlabel('Epoch')
        plt.ylabel(name)
        if metric == 'loss':
            plt.ylim([0, plt.ylim()[1]])
        elif metric == 'auc':
            plt.ylim([0.8,1])
        else:
            plt.ylim([0,1])

        plt.legend()

plot_metrics(baseline_history)

Note: The validation curve generally performs better than the training curve. This is mainly because the dropout layer is not active when the model is evaluated.

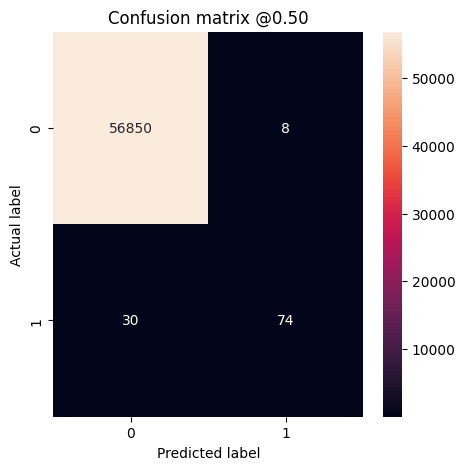

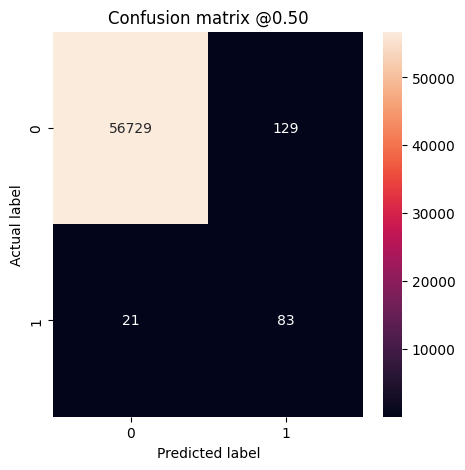

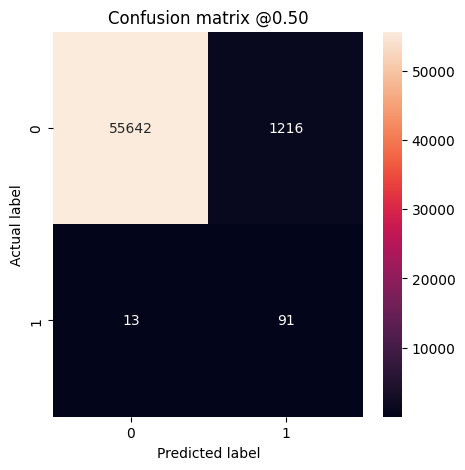

Evaluate metrics

You can use a confusion matrix to summarize the actual and predicted labels. Here the X axis is the predicted label and the Y axis is the actual label.

train_predictions_baseline = model.predict(train_features, batch_size=BATCH_SIZE)

test_predictions_baseline = model.predict(test_features, batch_size=BATCH_SIZE)

90/90 [==============================] - 0s 1ms/step 28/28 [==============================] - 0s 1ms/step

def plot_cm(labels, predictions, p=0.5):
    cm = confusion_matrix(labels, predictions > p)
    plt.figure(figsize=(5,5))
    sns.heatmap(cm, annot=True, fmt="d")
    plt.title('Confusion matrix @{:.2f}'.format(p))
    plt.ylabel('Actual label')
    plt.xlabel('Predicted label')

    print('Legitimate Transactions Detected (True Negatives): ', cm[0][0])
    print('Legitimate Transactions Incorrectly Detected (False Positives): ', cm[0][1])
    print('Fraudulent Transactions Missed (False Negatives): ', cm[1][0])
    print('Fraudulent Transactions Detected (True Positives): ', cm[1][1])
    print('Total Fraudulent Transactions: ', np.sum(cm[1]))

Evaluate the model on the test dataset and display the results for the metrics you created above.

baseline_results = model.evaluate(test_features, test_labels,
                                  batch_size=BATCH_SIZE, verbose=0)
for name, value in zip(model.metrics_names, baseline_results):
    print(name, ': ', value)
print()

plot_cm(test_labels, test_predictions_baseline)

loss : 0.003468161914497614
tp : 74.0
fp : 8.0
tn : 56850.0
fn : 30.0
accuracy : 0.9993329048156738
precision : 0.9024389982223511
recall : 0.7115384340286255
auc : 0.9323335289955139
prc : 0.8059106469154358

Legitimate Transactions Detected (True Negatives): 56850
Legitimate Transactions Incorrectly Detected (False Positives): 8
Fraudulent Transactions Missed (False Negatives): 30
Fraudulent Transactions Detected (True Positives): 74
Total Fraudulent Transactions: 104

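If the model had predicted everything perfectly, this would be a diagonal matrix: the off-diagonal values, which indicate incorrect predictions, would all be zero. In this case, the matrix shows relatively few false positives, meaning that relatively few legitimate transactions were incorrectly flagged. However, you would likely want even fewer false negatives, even at the cost of more false positives. This trade-off may be preferable because a false negative lets a fraudulent transaction go through, whereas a false positive may only cause an email to be sent to the customer asking them to verify their card activity.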
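As a quick check on how the reported metrics relate to the confusion matrix, the following sketch recomputes precision, recall, and accuracy from the four test-set counts printed above. The variable names are illustrative and not part of the tutorial code.

```python
# Counts copied from the test-set evaluation output of the baseline model.
tp, fp, tn, fn = 74, 8, 56850, 30

precision = tp / (tp + fp)                   # share of flagged transactions that were fraud
recall = tp / (tp + fn)                      # share of fraudulent transactions that were flagged
accuracy = (tp + tn) / (tp + fp + tn + fn)   # share of all transactions classified correctly

print(f"precision: {precision:.4f}")
print(f"recall:    {recall:.4f}")
print(f"accuracy:  {accuracy:.4f}")
```

Accuracy stays above 0.999 even though 30 of the 104 fraudulent transactions were missed, which is why accuracy alone is a poor guide on data this imbalanced.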

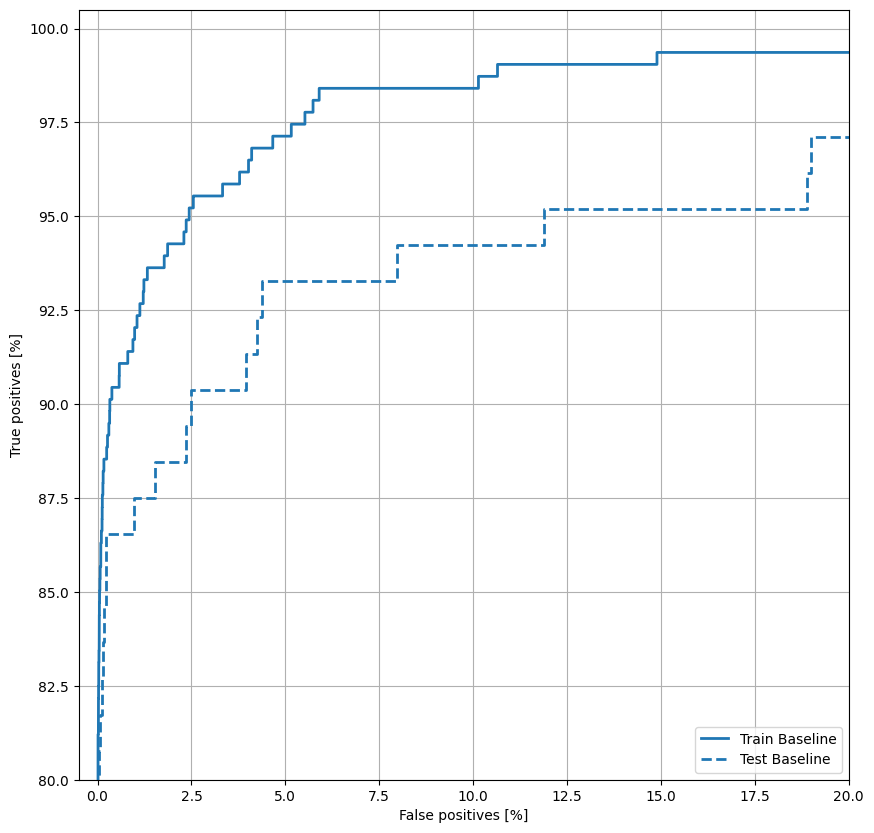

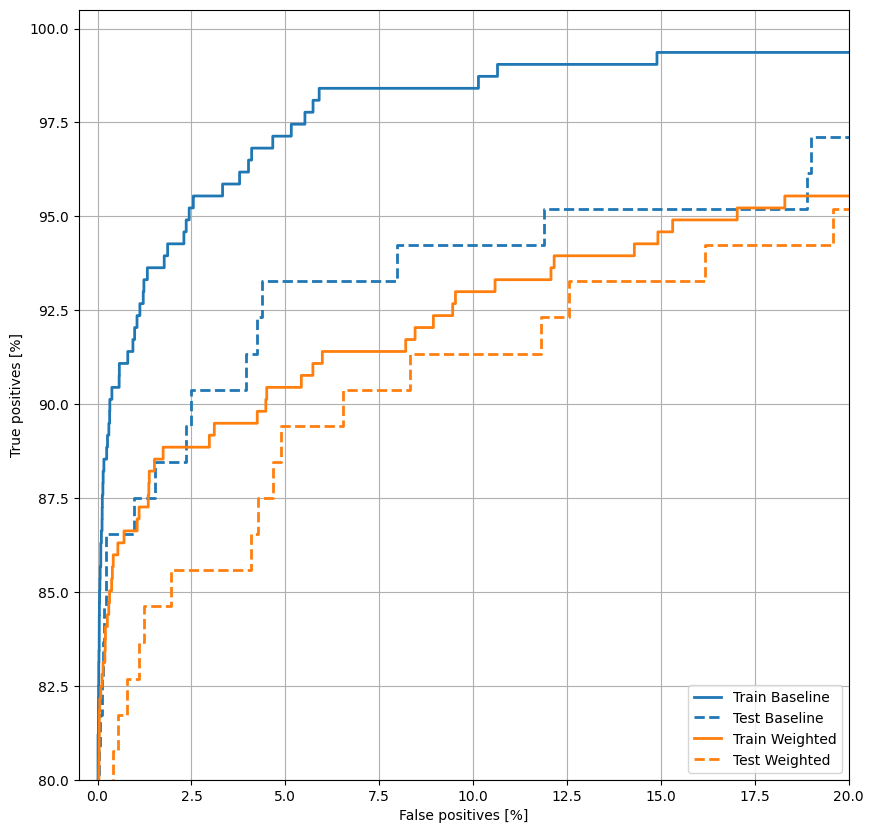

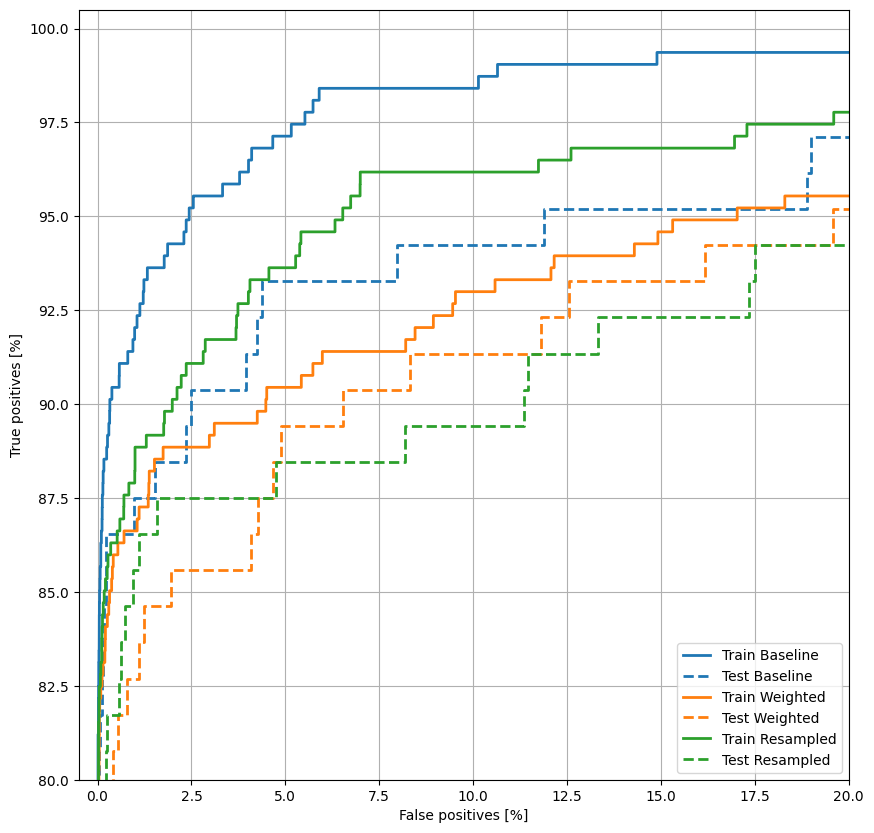

Plot the ROC

Now plot the ROC. This plot is useful because it shows, at a glance, the range of performance the model can reach just by tuning the output threshold.

def plot_roc(name, labels, predictions, **kwargs):
    fp, tp, _ = sklearn.metrics.roc_curve(labels, predictions)

    plt.plot(100*fp, 100*tp, label=name, linewidth=2, **kwargs)
    plt.xlabel('False positives [%]')
    plt.ylabel('True positives [%]')
    plt.xlim([-0.5,20])
    plt.ylim([80,100.5])
    plt.grid(True)
    ax = plt.gca()
    ax.set_aspect('equal')

plot_roc("Train Baseline", train_labels, train_predictions_baseline, color=colors[0])
plot_roc("Test Baseline", test_labels, test_predictions_baseline, color=colors[0], linestyle='--')
plt.legend(loc='lower right');

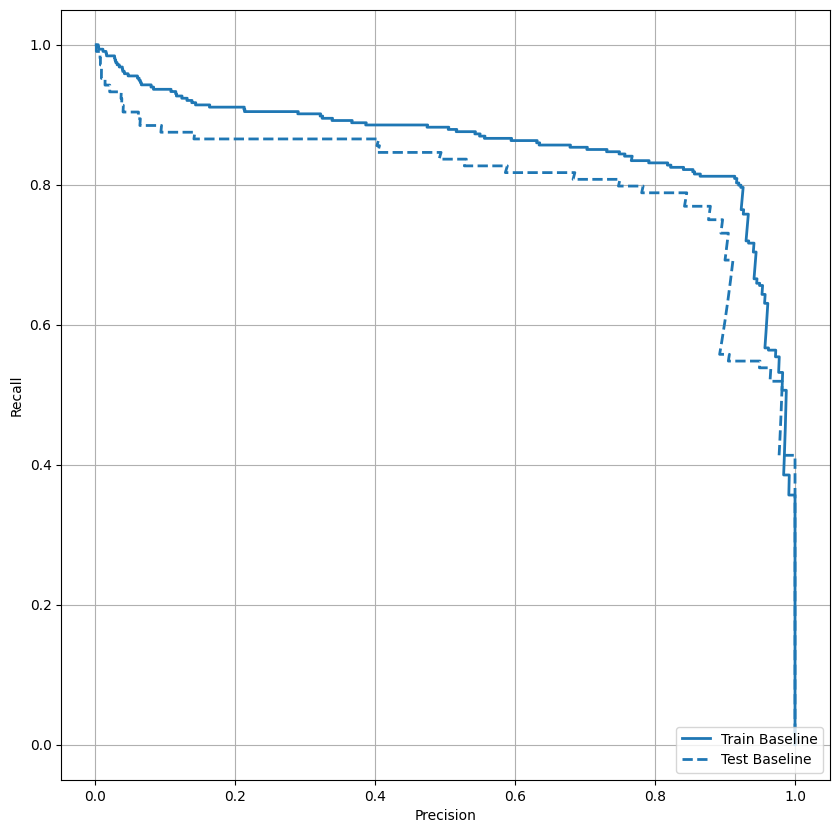

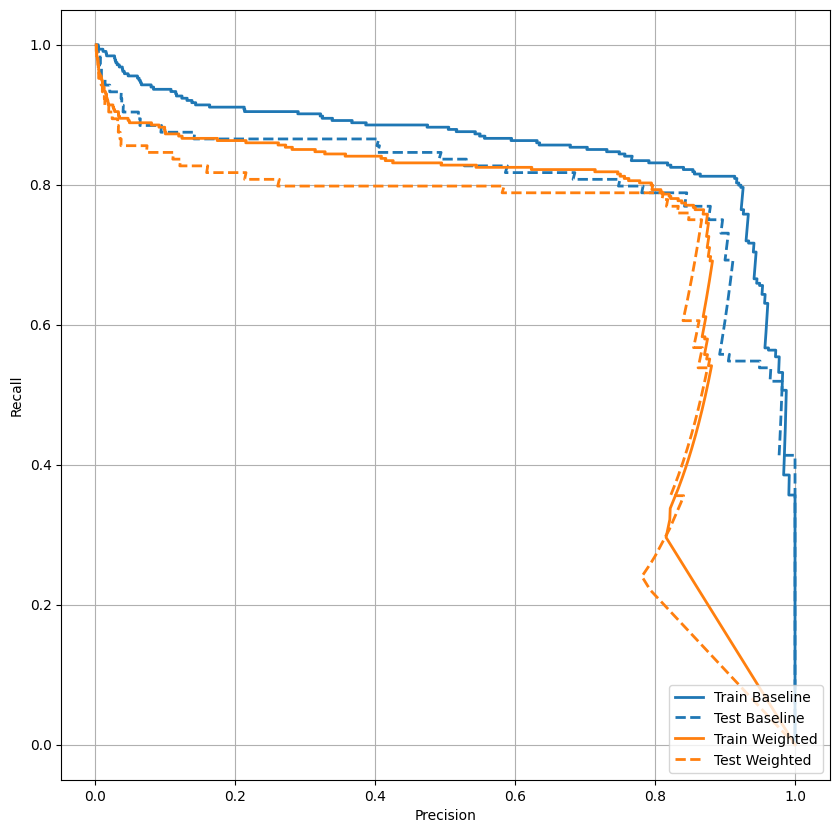

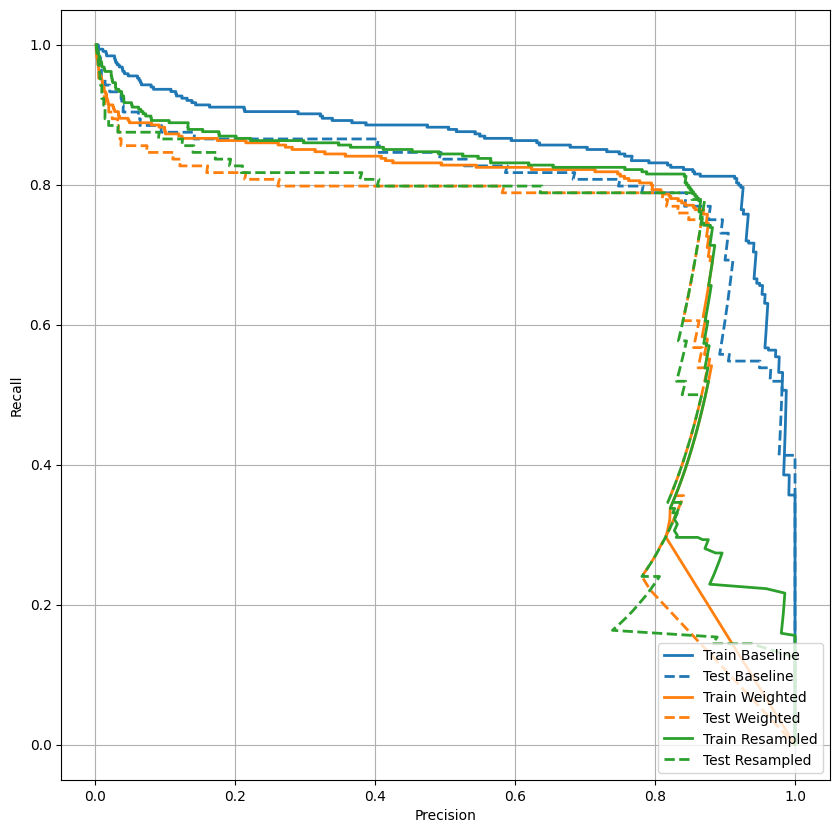
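To make the threshold trade-off concrete, here is a minimal sketch that computes the false positive rate and true positive rate at two thresholds. The labels and scores are made up for illustration, and the helper `rates_at_threshold` is hypothetical (it is not part of the tutorial or of scikit-learn). It uses the same `score > threshold` rule as `plot_cm`.

```python
def rates_at_threshold(labels, scores, threshold):
    """Return (false_positive_rate, true_positive_rate) when score > threshold means positive."""
    tp = sum(1 for y, s in zip(labels, scores) if y == 1 and s > threshold)
    fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s > threshold)
    pos = sum(labels)
    neg = len(labels) - pos
    return fp / neg, tp / pos

# Made-up labels and model scores, purely for illustration.
toy_labels = [0, 0, 0, 0, 0, 0, 1, 1, 1, 1]
toy_scores = [0.05, 0.10, 0.20, 0.35, 0.40, 0.70, 0.30, 0.45, 0.80, 0.90]

for threshold in (0.5, 0.25):
    fpr, tpr = rates_at_threshold(toy_labels, toy_scores, threshold)
    print(f"threshold={threshold:.2f}  FPR={fpr:.2f}  TPR={tpr:.2f}")
```

Lowering the threshold moves the operating point up and to the right along the ROC curve: more fraud is caught, and more legitimate transactions are flagged.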

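Plot the AUPRC

Now plot the AUPRC. This is the area under the interpolated precision-recall curve, obtained by plotting (recall, precision) points for different values of the classification threshold. Depending on how it is calculated, PR AUC may be equivalent to the average precision of the model.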

def plot_prc(name, labels, predictions, **kwargs):
    precision, recall, _ = sklearn.metrics.precision_recall_curve(labels, predictions)

    plt.plot(precision, recall, label=name, linewidth=2, **kwargs)
    plt.xlabel('Precision')
    plt.ylabel('Recall')
    plt.grid(True)
    ax = plt.gca()
    ax.set_aspect('equal')

plot_prc("Train Baseline", train_labels, train_predictions_baseline, color=colors[0])
plot_prc("Test Baseline", test_labels, test_predictions_baseline, color=colors[0], linestyle='--')
plt.legend(loc='lower right');
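One common way to summarize a precision-recall curve as a single number is average precision: the sum of the precision at each point where recall increases, weighted by the size of that increase. The sketch below is a plain-Python illustration on made-up data. The helper `average_precision` is hypothetical and assumes no tied scores; the `prc` metric reported during training may use a different interpolation, so the two need not match exactly.

```python
def average_precision(labels, scores):
    """Step-wise average precision: mean of precision@k over the ranks k that hold a positive."""
    ranked = sorted(zip(scores, labels), reverse=True)  # highest score first
    num_pos = sum(labels)
    hits = 0
    total = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank  # precision at this rank; recall rises by 1/num_pos here
    return total / num_pos

# Made-up labels and model scores, purely for illustration.
toy_labels = [0, 0, 0, 0, 0, 0, 1, 1, 1, 1]
toy_scores = [0.05, 0.10, 0.20, 0.35, 0.40, 0.70, 0.30, 0.45, 0.80, 0.90]

ap = average_precision(toy_labels, toy_scores)
print(f"average precision: {ap:.4f}")
```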

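The precision looks relatively high, but the recall and the area under the ROC curve (AUC) are not as high as you might like. Classifiers often face difficulties when trying to maximize both precision and recall, especially when working with imbalanced datasets. It is important to consider the costs of the different types of errors in the context of the problem you care about. In this example, a false negative (a fraudulent transaction is missed) may have a financial cost, while a false positive (a transaction is incorrectly flagged as fraudulent) may decrease user satisfaction.

Class weights

Calculate class weights

The goal is to identify fraudulent transactions, but there are not many positive samples to work with, so you want the classifier to weight the few available examples heavily. You can do this by passing Keras a weight for each class through a parameter. This causes the model to "pay more attention" to examples from the under-represented class.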

# Scaling by total/2 helps keep the loss to a similar magnitude.

# The sum of the weights of all examples stays the same.

weight_for_0 = (1 / neg) * (total / 2.0)

weight_for_1 = (1 / pos) * (total / 2.0)

class_weight = {0: weight_for_0, 1: weight_for_1}

print('Weight for class 0: {:.2f}'.format(weight_for_0))

print('Weight for class 1: {:.2f}'.format(weight_for_1))

Weight for class 0: 0.50 Weight for class 1: 289.44
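The printed weights can be reproduced by hand from the dataset counts given in the introduction (284,807 transactions, 492 of them fraudulent). The sketch below also checks the claim in the code comment that the weights, summed over all examples, stay equal to the number of examples. It assumes `pos`, `neg`, and `total` were computed over the full dataset, which is consistent with the printed values.

```python
total = 284_807      # all transactions, from the introduction
pos = 492            # fraudulent transactions
neg = total - pos    # legitimate transactions

weight_for_0 = (1 / neg) * (total / 2.0)
weight_for_1 = (1 / pos) * (total / 2.0)

# Each class contributes total/2, so the weighted example count equals `total`.
weighted_total = neg * weight_for_0 + pos * weight_for_1

print(f"Weight for class 0: {weight_for_0:.2f}")
print(f"Weight for class 1: {weight_for_1:.2f}")
print(f"Weighted example count: {weighted_total:.1f}")
```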

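Train a model with class weights

Now try re-training and evaluating the model with class weights to see how they affect the predictions.

Note: Using `class_weight` changes the range of the loss. This may affect the stability of training, depending on the optimizer. Optimizers whose step size depends on the magnitude of the gradient, such as tf.keras.optimizers.SGD, may fail. The optimizer used here, tf.keras.optimizers.Adam, is unaffected by the scaling change. Also, because of the weighting, the total losses of the two models are not comparable.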
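To see why the loss range changes, consider a single uncertain prediction. The sketch below assumes that each example's binary cross-entropy is multiplied by the weight of its class; it uses the rounded weights printed above and a hypothetical helper `binary_cross_entropy`.

```python
import math

def binary_cross_entropy(label, prob):
    """Cross-entropy of one example with true label 0 or 1 and predicted probability `prob`."""
    return -(label * math.log(prob) + (1 - label) * math.log(1 - prob))

class_weight = {0: 0.50, 1: 289.44}  # rounded values from the printed output

# A prediction of 0.5 costs the same for either label before weighting,
# but a missed positive costs several hundred times more after weighting.
for label in (0, 1):
    loss = binary_cross_entropy(label, 0.5)
    print(f"label={label}  loss={loss:.4f}  weighted loss={class_weight[label] * loss:.4f}")
```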

weighted_model = make_model()
weighted_model.load_weights(initial_weights)

weighted_history = weighted_model.fit(
    train_features,
    train_labels,
    batch_size=BATCH_SIZE,
    epochs=EPOCHS,
    callbacks=[early_stopping],
    validation_data=(val_features, val_labels),
    # The class weights go here
    class_weight=class_weight)

Epoch 1/100 90/90 [==============================] - 3s 11ms/step - loss: 2.0359 - tp: 150.0000 - fp: 155.0000 - tn: 238665.0000 - fn: 268.0000 - accuracy: 0.9982 - precision: 0.4918 - recall: 0.3589 - auc: 0.8151 - prc: 0.3412 - val_loss: 0.0062 - val_tp: 50.0000 - val_fp: 11.0000 - val_tn: 45484.0000 - val_fn: 24.0000 - val_accuracy: 0.9992 - val_precision: 0.8197 - val_recall: 0.6757 - val_auc: 0.9237 - val_prc: 0.6484 Epoch 2/100 90/90 [==============================] - 1s 6ms/step - loss: 1.0229 - tp: 179.0000 - fp: 244.0000 - tn: 181718.0000 - fn: 135.0000 - accuracy: 0.9979 - precision: 0.4232 - recall: 0.5701 - auc: 0.8656 - prc: 0.4522 - val_loss: 0.0076 - val_tp: 61.0000 - val_fp: 15.0000 - val_tn: 45480.0000 - val_fn: 13.0000 - val_accuracy: 0.9994 - val_precision: 0.8026 - val_recall: 0.8243 - val_auc: 0.9462 - val_prc: 0.6712 Epoch 3/100 90/90 [==============================] - 1s 6ms/step - loss: 0.8179 - tp: 189.0000 - fp: 452.0000 - tn: 181510.0000 - fn: 125.0000 - accuracy: 0.9968 - precision: 0.2949 - recall: 0.6019 - auc: 0.8968 - prc: 0.4678 - val_loss: 0.0103 - val_tp: 62.0000 - val_fp: 25.0000 - val_tn: 45470.0000 - val_fn: 12.0000 - val_accuracy: 0.9992 - val_precision: 0.7126 - val_recall: 0.8378 - val_auc: 0.9649 - val_prc: 0.7120 Epoch 4/100 90/90 [==============================] - 1s 6ms/step - loss: 0.5910 - tp: 231.0000 - fp: 707.0000 - tn: 181255.0000 - fn: 83.0000 - accuracy: 0.9957 - precision: 0.2463 - recall: 0.7357 - auc: 0.9136 - prc: 0.5209 - val_loss: 0.0137 - val_tp: 63.0000 - val_fp: 46.0000 - val_tn: 45449.0000 - val_fn: 11.0000 - val_accuracy: 0.9987 - val_precision: 0.5780 - val_recall: 0.8514 - val_auc: 0.9720 - val_prc: 0.7136 Epoch 5/100 90/90 [==============================] - 1s 6ms/step - loss: 0.4221 - tp: 246.0000 - fp: 1329.0000 - tn: 180633.0000 - fn: 68.0000 - accuracy: 0.9923 - precision: 0.1562 - recall: 0.7834 - auc: 0.9458 - prc: 0.4880 - val_loss: 0.0196 - val_tp: 63.0000 - val_fp: 98.0000 - val_tn: 
45397.0000 - val_fn: 11.0000 - val_accuracy: 0.9976 - val_precision: 0.3913 - val_recall: 0.8514 - val_auc: 0.9780 - val_prc: 0.7362 Epoch 6/100 90/90 [==============================] - 1s 6ms/step - loss: 0.3619 - tp: 254.0000 - fp: 2135.0000 - tn: 179827.0000 - fn: 60.0000 - accuracy: 0.9880 - precision: 0.1063 - recall: 0.8089 - auc: 0.9538 - prc: 0.4209 - val_loss: 0.0279 - val_tp: 66.0000 - val_fp: 198.0000 - val_tn: 45297.0000 - val_fn: 8.0000 - val_accuracy: 0.9955 - val_precision: 0.2500 - val_recall: 0.8919 - val_auc: 0.9854 - val_prc: 0.6866 Epoch 7/100 90/90 [==============================] - 1s 6ms/step - loss: 0.3156 - tp: 261.0000 - fp: 3182.0000 - tn: 178780.0000 - fn: 53.0000 - accuracy: 0.9823 - precision: 0.0758 - recall: 0.8312 - auc: 0.9560 - prc: 0.3469 - val_loss: 0.0379 - val_tp: 66.0000 - val_fp: 328.0000 - val_tn: 45167.0000 - val_fn: 8.0000 - val_accuracy: 0.9926 - val_precision: 0.1675 - val_recall: 0.8919 - val_auc: 0.9900 - val_prc: 0.6526 Epoch 8/100 90/90 [==============================] - 1s 6ms/step - loss: 0.3293 - tp: 262.0000 - fp: 4228.0000 - tn: 177734.0000 - fn: 52.0000 - accuracy: 0.9765 - precision: 0.0584 - recall: 0.8344 - auc: 0.9488 - prc: 0.2940 - val_loss: 0.0480 - val_tp: 67.0000 - val_fp: 502.0000 - val_tn: 44993.0000 - val_fn: 7.0000 - val_accuracy: 0.9888 - val_precision: 0.1178 - val_recall: 0.9054 - val_auc: 0.9919 - val_prc: 0.6263 Epoch 9/100 90/90 [==============================] - 1s 6ms/step - loss: 0.2882 - tp: 272.0000 - fp: 4740.0000 - tn: 177222.0000 - fn: 42.0000 - accuracy: 0.9738 - precision: 0.0543 - recall: 0.8662 - auc: 0.9553 - prc: 0.2709 - val_loss: 0.0531 - val_tp: 67.0000 - val_fp: 558.0000 - val_tn: 44937.0000 - val_fn: 7.0000 - val_accuracy: 0.9876 - val_precision: 0.1072 - val_recall: 0.9054 - val_auc: 0.9927 - val_prc: 0.6019 Epoch 10/100 90/90 [==============================] - 1s 6ms/step - loss: 0.2583 - tp: 273.0000 - fp: 5368.0000 - tn: 176594.0000 - fn: 41.0000 - accuracy: 0.9703 - 
precision: 0.0484 - recall: 0.8694 - auc: 0.9612 - prc: 0.2619 - val_loss: 0.0619 - val_tp: 67.0000 - val_fp: 632.0000 - val_tn: 44863.0000 - val_fn: 7.0000 - val_accuracy: 0.9860 - val_precision: 0.0959 - val_recall: 0.9054 - val_auc: 0.9932 - val_prc: 0.5846 Epoch 11/100 90/90 [==============================] - 1s 6ms/step - loss: 0.2378 - tp: 277.0000 - fp: 5948.0000 - tn: 176014.0000 - fn: 37.0000 - accuracy: 0.9672 - precision: 0.0445 - recall: 0.8822 - auc: 0.9657 - prc: 0.2346 - val_loss: 0.0676 - val_tp: 67.0000 - val_fp: 692.0000 - val_tn: 44803.0000 - val_fn: 7.0000 - val_accuracy: 0.9847 - val_precision: 0.0883 - val_recall: 0.9054 - val_auc: 0.9930 - val_prc: 0.5360 Epoch 12/100 90/90 [==============================] - 1s 6ms/step - loss: 0.2927 - tp: 271.0000 - fp: 6239.0000 - tn: 175723.0000 - fn: 43.0000 - accuracy: 0.9655 - precision: 0.0416 - recall: 0.8631 - auc: 0.9521 - prc: 0.2106 - val_loss: 0.0726 - val_tp: 67.0000 - val_fp: 745.0000 - val_tn: 44750.0000 - val_fn: 7.0000 - val_accuracy: 0.9835 - val_precision: 0.0825 - val_recall: 0.9054 - val_auc: 0.9934 - val_prc: 0.5083 Epoch 13/100 90/90 [==============================] - 1s 6ms/step - loss: 0.2615 - tp: 276.0000 - fp: 6768.0000 - tn: 175194.0000 - fn: 38.0000 - accuracy: 0.9627 - precision: 0.0392 - recall: 0.8790 - auc: 0.9598 - prc: 0.2089 - val_loss: 0.0761 - val_tp: 67.0000 - val_fp: 781.0000 - val_tn: 44714.0000 - val_fn: 7.0000 - val_accuracy: 0.9827 - val_precision: 0.0790 - val_recall: 0.9054 - val_auc: 0.9934 - val_prc: 0.5079 Epoch 14/100 90/90 [==============================] - 1s 6ms/step - loss: 0.2580 - tp: 281.0000 - fp: 6655.0000 - tn: 175307.0000 - fn: 33.0000 - accuracy: 0.9633 - precision: 0.0405 - recall: 0.8949 - auc: 0.9594 - prc: 0.2182 - val_loss: 0.0754 - val_tp: 67.0000 - val_fp: 778.0000 - val_tn: 44717.0000 - val_fn: 7.0000 - val_accuracy: 0.9828 - val_precision: 0.0793 - val_recall: 0.9054 - val_auc: 0.9934 - val_prc: 0.5080 Epoch 15/100 85/90 
[===========================>..] - ETA: 0s - loss: 0.3046 - tp: 265.0000 - fp: 6316.0000 - tn: 167463.0000 - fn: 36.0000 - accuracy: 0.9635 - precision: 0.0403 - recall: 0.8804 - auc: 0.9450 - prc: 0.1987Restoring model weights from the end of the best epoch: 5. 90/90 [==============================] - 1s 6ms/step - loss: 0.3009 - tp: 276.0000 - fp: 6610.0000 - tn: 175352.0000 - fn: 38.0000 - accuracy: 0.9635 - precision: 0.0401 - recall: 0.8790 - auc: 0.9465 - prc: 0.1966 - val_loss: 0.0765 - val_tp: 67.0000 - val_fp: 783.0000 - val_tn: 44712.0000 - val_fn: 7.0000 - val_accuracy: 0.9827 - val_precision: 0.0788 - val_recall: 0.9054 - val_auc: 0.9933 - val_prc: 0.5040 Epoch 15: early stopping
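The log above ends with "Restoring model weights from the end of the best epoch: 5" and "Epoch 15: early stopping". The `early_stopping` callback is defined outside this section, so the following is only a sketch of patience-based early stopping under the assumption that it monitors `val_prc` (higher is better) with a patience of 10 epochs. The function `early_stop` is hypothetical. The `val_prc` values are copied from the log.

```python
def early_stop(values, patience):
    """Return (stop_epoch, best_epoch) for a metric that should increase; epochs are 1-based."""
    best = float("-inf")
    best_epoch = 0
    wait = 0
    for epoch, value in enumerate(values, start=1):
        if value > best:
            best, best_epoch, wait = value, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                return epoch, best_epoch
    return None, best_epoch  # training ran out of epochs before patience was exhausted

# val_prc for epochs 1..15 of the class-weighted run, copied from the log.
val_prc = [0.6484, 0.6712, 0.7120, 0.7136, 0.7362, 0.6866, 0.6526, 0.6263,
           0.6019, 0.5846, 0.5360, 0.5083, 0.5079, 0.5080, 0.5040]

stop_epoch, best_epoch = early_stop(val_prc, patience=10)
print(f"stopped at epoch {stop_epoch}, best epoch {best_epoch}")
```

Under these assumptions the sketch stops at epoch 15 and keeps epoch 5, which agrees with the log.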

Check training history

plot_metrics(weighted_history)

Evaluate metrics

train_predictions_weighted = weighted_model.predict(train_features, batch_size=BATCH_SIZE)

test_predictions_weighted = weighted_model.predict(test_features, batch_size=BATCH_SIZE)

90/90 [==============================] - 0s 1ms/step 28/28 [==============================] - 0s 1ms/step

weighted_results = weighted_model.evaluate(test_features, test_labels,
                                           batch_size=BATCH_SIZE, verbose=0)
for name, value in zip(weighted_model.metrics_names, weighted_results):
    print(name, ': ', value)
print()

plot_cm(test_labels, test_predictions_weighted)

loss : 0.019669152796268463
tp : 83.0
fp : 129.0
tn : 56729.0
fn : 21.0
accuracy : 0.9973666667938232
precision : 0.39150944352149963
recall : 0.7980769276618958
auc : 0.9632759094238281
prc : 0.6987218856811523

Legitimate Transactions Detected (True Negatives): 56729
Legitimate Transactions Incorrectly Detected (False Positives): 129
Fraudulent Transactions Missed (False Negatives): 21
Fraudulent Transactions Detected (True Positives): 83
Total Fraudulent Transactions: 104

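Here you can see that with class weights the accuracy and precision are lower because there are more false positives, but conversely the recall and AUC are higher because the model also found more true positives. Despite its lower accuracy, this model has higher recall and identifies more fraudulent transactions. Of course, both types of error have a cost: you would not want to bother users by flagging too many legitimate transactions as fraudulent either. Carefully consider the trade-offs between these types of errors for your application.

Plot the ROC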

plot_roc("Train Baseline", train_labels, train_predictions_baseline, color=colors[0])

plot_roc("Test Baseline", test_labels, test_predictions_baseline, color=colors[0], linestyle='--')

plot_roc("Train Weighted", train_labels, train_predictions_weighted, color=colors[1])

plot_roc("Test Weighted", test_labels, test_predictions_weighted, color=colors[1], linestyle='--')

plt.legend(loc='lower right');

Plot the AUPRC

plot_prc("Train Baseline", train_labels, train_predictions_baseline, color=colors[0])

plot_prc("Test Baseline", test_labels, test_predictions_baseline, color=colors[0], linestyle='--')

plot_prc("Train Weighted", train_labels, train_predictions_weighted, color=colors[1])

plot_prc("Test Weighted", test_labels, test_predictions_weighted, color=colors[1], linestyle='--')

plt.legend(loc='lower right');

Oversampling

Oversample the minority class

A related approach is to resample the dataset by oversampling the minority class.

pos_features = train_features[bool_train_labels]

neg_features = train_features[~bool_train_labels]

pos_labels = train_labels[bool_train_labels]

neg_labels = train_labels[~bool_train_labels]

Using NumPy

You can balance the dataset manually by choosing the right number of random indices from the positive examples:

ids = np.arange(len(pos_features))

choices = np.random.choice(ids, len(neg_features))

res_pos_features = pos_features[choices]

res_pos_labels = pos_labels[choices]

res_pos_features.shape

(181962, 29)

resampled_features = np.concatenate([res_pos_features, neg_features], axis=0)

resampled_labels = np.concatenate([res_pos_labels, neg_labels], axis=0)

order = np.arange(len(resampled_labels))

np.random.shuffle(order)

resampled_features = resampled_features[order]

resampled_labels = resampled_labels[order]

resampled_features.shape

(363924, 29)
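The same resampling steps can be tried on a small synthetic dataset to confirm that the result is balanced and that oversampling only repeats existing positive rows. This is a self-contained sketch: the array sizes, the seed, and the `toy_*` names are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the sketch is repeatable

# Hypothetical toy data: 1,000 negatives and 10 positives with 3 features each.
toy_neg_features = rng.normal(size=(1000, 3))
toy_pos_features = rng.normal(loc=2.0, size=(10, 3))

# Draw positive indices with replacement, one per negative example.
choices = rng.choice(len(toy_pos_features), size=len(toy_neg_features))
toy_res_pos_features = toy_pos_features[choices]

toy_features = np.concatenate([toy_res_pos_features, toy_neg_features], axis=0)
toy_labels = np.concatenate([np.ones(len(toy_res_pos_features)),
                             np.zeros(len(toy_neg_features))])

# Shuffle features and labels with the same permutation so they stay aligned.
order = rng.permutation(len(toy_labels))
toy_features, toy_labels = toy_features[order], toy_labels[order]

num_distinct_pos = len(np.unique(toy_res_pos_features, axis=0))
print(toy_features.shape, toy_labels.mean(), num_distinct_pos)
```

The labels are now half positive, but there are still at most 10 distinct positive rows, so the model sees the same few fraud examples many times.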

Using tf.data

If you are using tf.data, the easiest way to produce balanced examples is to start with a positive and a negative dataset and merge them. See the tf.data guide for more examples.

BUFFER_SIZE = 100000

def make_ds(features, labels):
    ds = tf.data.Dataset.from_tensor_slices((features, labels))#.cache()
    ds = ds.shuffle(BUFFER_SIZE).repeat()
    return ds

pos_ds = make_ds(pos_features, pos_labels)

neg_ds = make_ds(neg_features, neg_labels)

Each dataset provides (feature, label) pairs:

for features, label in pos_ds.take(1):
    print("Features:\n", features.numpy())
    print()
    print("Label: ", label.numpy())

Features: [-0.55467083 -0.97922813 0.59080477 0.59643053 -0.89905801 0.48952454 1.15946322 0.01864729 -0.12317742 -0.13615181 1.15007822 -0.79930697 -2.14706985 0.60117816 1.53918159 0.0180766 -0.13999689 0.98266206 0.58410867 1.57832521 0.83951491 1.15214517 1.86547002 -0.04804956 -0.57735273 1.44586154 -0.82327406 -0.51314852 1.63526419] Label: 1

Merge the two using tf.data.Dataset.sample_from_datasets:

resampled_ds = tf.data.Dataset.sample_from_datasets([pos_ds, neg_ds], weights=[0.5, 0.5])

resampled_ds = resampled_ds.batch(BATCH_SIZE).prefetch(2)

for features, label in resampled_ds.take(1):
    print(label.numpy().mean())

0.5068359375

To use this dataset, you need the number of steps per epoch.

The definition of "epoch" is less clear in this case. Say it is the number of batches required to see each negative example once:

resampled_steps_per_epoch = np.ceil(2.0*neg/BATCH_SIZE)

resampled_steps_per_epoch

278.0
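The printed value of 278 can be checked by hand. The sketch below assumes `BATCH_SIZE = 2048` (defined outside this section; the value is consistent with the 90 steps per epoch shown in the earlier training logs) and takes `neg` as the number of legitimate transactions in the full dataset. It also shows the step count obtained from the 181,962 negatives in the training split, whose size appears in the resampled array shape above.

```python
import math

BATCH_SIZE = 2048            # assumption: defined earlier in the tutorial
total, pos = 284_807, 492    # dataset counts from the introduction
neg = total - pos

# Half of each balanced batch is negative, so 2 * neg examples are needed
# to pass over every negative example once.
resampled_steps_per_epoch = math.ceil(2.0 * neg / BATCH_SIZE)

# Variant using only the negatives in the training split.
train_neg = 181_962
train_only_steps = math.ceil(2.0 * train_neg / BATCH_SIZE)

print(resampled_steps_per_epoch, train_only_steps)
```

`math.ceil` returns an integer, whereas `np.ceil` returns a float, which is why the tutorial output reads 278.0.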

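Train on the oversampled data

Now try training the model with the resampled dataset instead of using class weights, to see how the two methods compare.

Note: Because the data was balanced by replicating the positive examples, the total dataset size is larger, and each epoch runs for more training steps.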

resampled_model = make_model()
resampled_model.load_weights(initial_weights)

# Reset the bias to zero, since this dataset is balanced.
output_layer = resampled_model.layers[-1]
output_layer.bias.assign([0])

val_ds = tf.data.Dataset.from_tensor_slices((val_features, val_labels)).cache()
val_ds = val_ds.batch(BATCH_SIZE).prefetch(2)

resampled_history = resampled_model.fit(
    resampled_ds,
    epochs=EPOCHS,
    steps_per_epoch=resampled_steps_per_epoch,
    callbacks=[early_stopping],
    validation_data=val_ds)

Epoch 1/100 278/278 [==============================] - 9s 25ms/step - loss: 0.4348 - tp: 235044.0000 - fp: 60383.0000 - tn: 280909.0000 - fn: 49970.0000 - accuracy: 0.8238 - precision: 0.7956 - recall: 0.8247 - auc: 0.9020 - prc: 0.9208 - val_loss: 0.2105 - val_tp: 68.0000 - val_fp: 944.0000 - val_tn: 44551.0000 - val_fn: 6.0000 - val_accuracy: 0.9792 - val_precision: 0.0672 - val_recall: 0.9189 - val_auc: 0.9713 - val_prc: 0.7421 Epoch 2/100 278/278 [==============================] - 7s 24ms/step - loss: 0.2044 - tp: 255378.0000 - fp: 14590.0000 - tn: 270323.0000 - fn: 29053.0000 - accuracy: 0.9233 - precision: 0.9460 - recall: 0.8979 - auc: 0.9713 - prc: 0.9775 - val_loss: 0.1154 - val_tp: 70.0000 - val_fp: 911.0000 - val_tn: 44584.0000 - val_fn: 4.0000 - val_accuracy: 0.9799 - val_precision: 0.0714 - val_recall: 0.9459 - val_auc: 0.9737 - val_prc: 0.7321 Epoch 3/100 278/278 [==============================] - 7s 24ms/step - loss: 0.1569 - tp: 259317.0000 - fp: 10136.0000 - tn: 274474.0000 - fn: 25417.0000 - accuracy: 0.9376 - precision: 0.9624 - recall: 0.9107 - auc: 0.9842 - prc: 0.9865 - val_loss: 0.0844 - val_tp: 70.0000 - val_fp: 794.0000 - val_tn: 44701.0000 - val_fn: 4.0000 - val_accuracy: 0.9825 - val_precision: 0.0810 - val_recall: 0.9459 - val_auc: 0.9722 - val_prc: 0.7070 Epoch 4/100 278/278 [==============================] - 7s 24ms/step - loss: 0.1306 - tp: 262548.0000 - fp: 8590.0000 - tn: 276185.0000 - fn: 22021.0000 - accuracy: 0.9462 - precision: 0.9683 - recall: 0.9226 - auc: 0.9899 - prc: 0.9907 - val_loss: 0.0699 - val_tp: 69.0000 - val_fp: 738.0000 - val_tn: 44757.0000 - val_fn: 5.0000 - val_accuracy: 0.9837 - val_precision: 0.0855 - val_recall: 0.9324 - val_auc: 0.9745 - val_prc: 0.7112 Epoch 5/100 278/278 [==============================] - 7s 24ms/step - loss: 0.1170 - tp: 263962.0000 - fp: 7829.0000 - tn: 276956.0000 - fn: 20597.0000 - accuracy: 0.9501 - precision: 0.9712 - recall: 0.9276 - auc: 0.9923 - prc: 0.9926 - val_loss: 0.0618 - 
val_tp: 69.0000 - val_fp: 713.0000 - val_tn: 44782.0000 - val_fn: 5.0000 - val_accuracy: 0.9842 - val_precision: 0.0882 - val_recall: 0.9324 - val_auc: 0.9763 - val_prc: 0.7139 Epoch 6/100 278/278 [==============================] - 7s 24ms/step - loss: 0.1074 - tp: 266098.0000 - fp: 7432.0000 - tn: 276960.0000 - fn: 18854.0000 - accuracy: 0.9538 - precision: 0.9728 - recall: 0.9338 - auc: 0.9937 - prc: 0.9938 - val_loss: 0.0570 - val_tp: 69.0000 - val_fp: 693.0000 - val_tn: 44802.0000 - val_fn: 5.0000 - val_accuracy: 0.9847 - val_precision: 0.0906 - val_recall: 0.9324 - val_auc: 0.9777 - val_prc: 0.7176 Epoch 7/100 278/278 [==============================] - 7s 24ms/step - loss: 0.1001 - tp: 267557.0000 - fp: 7203.0000 - tn: 277084.0000 - fn: 17500.0000 - accuracy: 0.9566 - precision: 0.9738 - recall: 0.9386 - auc: 0.9946 - prc: 0.9946 - val_loss: 0.0521 - val_tp: 69.0000 - val_fp: 652.0000 - val_tn: 44843.0000 - val_fn: 5.0000 - val_accuracy: 0.9856 - val_precision: 0.0957 - val_recall: 0.9324 - val_auc: 0.9790 - val_prc: 0.7097 Epoch 8/100 278/278 [==============================] - 7s 24ms/step - loss: 0.0947 - tp: 268874.0000 - fp: 7028.0000 - tn: 276906.0000 - fn: 16536.0000 - accuracy: 0.9586 - precision: 0.9745 - recall: 0.9421 - auc: 0.9952 - prc: 0.9950 - val_loss: 0.0488 - val_tp: 69.0000 - val_fp: 638.0000 - val_tn: 44857.0000 - val_fn: 5.0000 - val_accuracy: 0.9859 - val_precision: 0.0976 - val_recall: 0.9324 - val_auc: 0.9799 - val_prc: 0.7108 Epoch 9/100 278/278 [==============================] - 7s 25ms/step - loss: 0.0902 - tp: 269135.0000 - fp: 7035.0000 - tn: 277727.0000 - fn: 15447.0000 - accuracy: 0.9605 - precision: 0.9745 - recall: 0.9457 - auc: 0.9956 - prc: 0.9954 - val_loss: 0.0455 - val_tp: 69.0000 - val_fp: 610.0000 - val_tn: 44885.0000 - val_fn: 5.0000 - val_accuracy: 0.9865 - val_precision: 0.1016 - val_recall: 0.9324 - val_auc: 0.9807 - val_prc: 0.7111 Epoch 10/100 278/278 [==============================] - 7s 24ms/step - loss: 0.0865 - 
tp: 270473.0000 - fp: 7086.0000 - tn: 277235.0000 - fn: 14550.0000 - accuracy: 0.9620 - precision: 0.9745 - recall: 0.9490 - auc: 0.9959 - prc: 0.9957 - val_loss: 0.0433 - val_tp: 69.0000 - val_fp: 615.0000 - val_tn: 44880.0000 - val_fn: 5.0000 - val_accuracy: 0.9864 - val_precision: 0.1009 - val_recall: 0.9324 - val_auc: 0.9812 - val_prc: 0.7028 Epoch 11/100 276/278 [============================>.] - ETA: 0s - loss: 0.0824 - tp: 269530.0000 - fp: 6996.0000 - tn: 275642.0000 - fn: 13080.0000 - accuracy: 0.9645 - precision: 0.9747 - recall: 0.9537 - auc: 0.9963 - prc: 0.9961Restoring model weights from the end of the best epoch: 1. 278/278 [==============================] - 7s 24ms/step - loss: 0.0823 - tp: 271452.0000 - fp: 7062.0000 - tn: 277672.0000 - fn: 13158.0000 - accuracy: 0.9645 - precision: 0.9746 - recall: 0.9538 - auc: 0.9963 - prc: 0.9961 - val_loss: 0.0412 - val_tp: 69.0000 - val_fp: 607.0000 - val_tn: 44888.0000 - val_fn: 5.0000 - val_accuracy: 0.9866 - val_precision: 0.1021 - val_recall: 0.9324 - val_auc: 0.9816 - val_prc: 0.7035 Epoch 11: early stopping

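If the training process considered the whole dataset on each gradient update, this oversampling would be essentially identical to class weighting.

But when the model is trained batch-wise, as it is here, the oversampled data provides a smoother gradient signal: instead of each positive example appearing in one batch with a large weight, it appears in many different batches, each time with a small weight.

This smoother gradient signal makes the model easier to train.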
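A rough way to see the difference in smoothness is to simulate how much the total positive-class weight fluctuates from batch to batch under each scheme. This is only a proxy for the gradient signal, not a measurement of actual gradients. The sketch assumes `BATCH_SIZE = 2048`, random batches, and the class weight of 289.44 printed earlier; `relative_spread` is a hypothetical helper.

```python
import numpy as np

rng = np.random.default_rng(0)

BATCH_SIZE = 2048                 # assumption: defined earlier in the tutorial
POS_FRACTION = 492 / 284_807      # share of fraudulent transactions in the dataset
POS_WEIGHT = 289.44               # printed class weight for class 1
NUM_BATCHES = 10_000

# Class weighting: only a handful of positives per batch, each with a large weight.
weighted_signal = rng.binomial(BATCH_SIZE, POS_FRACTION, size=NUM_BATCHES) * POS_WEIGHT

# Oversampling: about half of every batch is positive, each with weight 1.
oversampled_signal = rng.binomial(BATCH_SIZE, 0.5, size=NUM_BATCHES).astype(float)

def relative_spread(x):
    """Standard deviation divided by mean (coefficient of variation)."""
    return x.std() / x.mean()

print(f"class weights: {relative_spread(weighted_signal):.3f}")
print(f"oversampling:  {relative_spread(oversampled_signal):.3f}")
```

In this simulation the positive-class contribution varies far more between batches with class weights than with oversampling, which matches the intuition described above.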

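Check training history

Note that the distributions of the metrics will be different here, because the training data has a completely different distribution from the validation and test data.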

plot_metrics(resampled_history)

Re-train

Because training is easier on the balanced data, the training procedure above may overfit quickly.

So break up the epochs to give tf.keras.callbacks.EarlyStopping finer control over when to stop training.

resampled_model = make_model()
resampled_model.load_weights(initial_weights)

# Reset the bias to zero, since this dataset is balanced.
output_layer = resampled_model.layers[-1]
output_layer.bias.assign([0])

resampled_history = resampled_model.fit(
    resampled_ds,
    # These are not real epochs
    steps_per_epoch=20,
    epochs=10*EPOCHS,
    callbacks=[early_stopping],
    validation_data=(val_ds))

Epoch 1/1000
20/20 [==============================] - 3s 56ms/step - loss: 1.1473 - tp: 11103.0000 - fp: 9113.0000 - tn: 56891.0000 - fn: 9422.0000 - accuracy: 0.7858 - precision: 0.5492 - recall: 0.5410 - auc: 0.8163 - prc: 0.6707 - val_loss: 0.6167 - val_tp: 63.0000 - val_fp: 15152.0000 - val_tn: 30343.0000 - val_fn: 11.0000 - val_accuracy: 0.6673 - val_precision: 0.0041 - val_recall: 0.8514 - val_auc: 0.8796 - val_prc: 0.4022
Epoch 2/1000
20/20 [==============================] - 1s 28ms/step - loss: 0.6997 - tp: 14659.0000 - fp: 8326.0000 - tn: 12207.0000 - fn: 5768.0000 - accuracy: 0.6559 - precision: 0.6378 - recall: 0.7176 - auc: 0.7461 - prc: 0.8279 - val_loss: 0.5798 - val_tp: 66.0000 - val_fp: 12901.0000 - val_tn: 32594.0000 - val_fn: 8.0000 - val_accuracy: 0.7167 - val_precision: 0.0051 - val_recall: 0.8919 - val_auc: 0.9219 - val_prc: 0.6033
Epoch 3/1000
20/20 [==============================] - 1s 28ms/step - loss: 0.5494 - tp: 16095.0000 - fp: 7381.0000 - tn: 13146.0000 - fn: 4338.0000 - accuracy: 0.7139 - precision: 0.6856 - recall: 0.7877 - auc: 0.8236 - prc: 0.8814 - val_loss: 0.5260 - val_tp: 68.0000 - val_fp: 9533.0000 - val_tn: 35962.0000 - val_fn: 6.0000 - val_accuracy: 0.7907 - val_precision: 0.0071 - val_recall: 0.9189 - val_auc: 0.9387 - val_prc: 0.6616
Epoch 4/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.4807 - tp: 16677.0000 - fp: 6348.0000 - tn: 14147.0000 - fn: 3788.0000 - accuracy: 0.7525 - precision: 0.7243 - recall: 0.8149 - auc: 0.8587 - prc: 0.9043 - val_loss: 0.4725 - val_tp: 67.0000 - val_fp: 6386.0000 - val_tn: 39109.0000 - val_fn: 7.0000 - val_accuracy: 0.8597 - val_precision: 0.0104 - val_recall: 0.9054 - val_auc: 0.9500 - val_prc: 0.6841
Epoch 5/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.4335 - tp: 17000.0000 - fp: 5508.0000 - tn: 14962.0000 - fn: 3490.0000 - accuracy: 0.7803 - precision: 0.7553 - recall: 0.8297 - auc: 0.8805 - prc: 0.9186 - val_loss: 0.4249 - val_tp: 67.0000 - val_fp: 4010.0000 - val_tn: 41485.0000 - val_fn: 7.0000 - val_accuracy: 0.9118 - val_precision: 0.0164 - val_recall: 0.9054 - val_auc: 0.9557 - val_prc: 0.7010
Epoch 6/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.3995 - tp: 17406.0000 - fp: 4611.0000 - tn: 15574.0000 - fn: 3369.0000 - accuracy: 0.8052 - precision: 0.7906 - recall: 0.8378 - auc: 0.8946 - prc: 0.9295 - val_loss: 0.3864 - val_tp: 69.0000 - val_fp: 2720.0000 - val_tn: 42775.0000 - val_fn: 5.0000 - val_accuracy: 0.9402 - val_precision: 0.0247 - val_recall: 0.9324 - val_auc: 0.9581 - val_prc: 0.6994
Epoch 7/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.3680 - tp: 17413.0000 - fp: 3956.0000 - tn: 16601.0000 - fn: 2990.0000 - accuracy: 0.8304 - precision: 0.8149 - recall: 0.8535 - auc: 0.9117 - prc: 0.9381 - val_loss: 0.3531 - val_tp: 69.0000 - val_fp: 1974.0000 - val_tn: 43521.0000 - val_fn: 5.0000 - val_accuracy: 0.9566 - val_precision: 0.0338 - val_recall: 0.9324 - val_auc: 0.9591 - val_prc: 0.7071
Epoch 8/1000
20/20 [==============================] - 1s 30ms/step - loss: 0.3402 - tp: 17711.0000 - fp: 3290.0000 - tn: 17159.0000 - fn: 2800.0000 - accuracy: 0.8513 - precision: 0.8433 - recall: 0.8635 - auc: 0.9238 - prc: 0.9465 - val_loss: 0.3237 - val_tp: 69.0000 - val_fp: 1614.0000 - val_tn: 43881.0000 - val_fn: 5.0000 - val_accuracy: 0.9645 - val_precision: 0.0410 - val_recall: 0.9324 - val_auc: 0.9599 - val_prc: 0.7126
Epoch 9/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.3214 - tp: 17827.0000 - fp: 2886.0000 - tn: 17607.0000 - fn: 2640.0000 - accuracy: 0.8651 - precision: 0.8607 - recall: 0.8710 - auc: 0.9322 - prc: 0.9520 - val_loss: 0.2980 - val_tp: 69.0000 - val_fp: 1344.0000 - val_tn: 44151.0000 - val_fn: 5.0000 - val_accuracy: 0.9704 - val_precision: 0.0488 - val_recall: 0.9324 - val_auc: 0.9608 - val_prc: 0.7262
Epoch 10/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.3009 - tp: 18039.0000 - fp: 2543.0000 - tn: 17866.0000 - fn: 2512.0000 - accuracy: 0.8766 - precision: 0.8764 - recall: 0.8778 - auc: 0.9395 - prc: 0.9576 - val_loss: 0.2754 - val_tp: 69.0000 - val_fp: 1179.0000 - val_tn: 44316.0000 - val_fn: 5.0000 - val_accuracy: 0.9740 - val_precision: 0.0553 - val_recall: 0.9324 - val_auc: 0.9628 - val_prc: 0.7284
Epoch 11/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.2860 - tp: 18124.0000 - fp: 2217.0000 - tn: 18200.0000 - fn: 2419.0000 - accuracy: 0.8868 - precision: 0.8910 - recall: 0.8822 - auc: 0.9455 - prc: 0.9613 - val_loss: 0.2564 - val_tp: 69.0000 - val_fp: 1075.0000 - val_tn: 44420.0000 - val_fn: 5.0000 - val_accuracy: 0.9763 - val_precision: 0.0603 - val_recall: 0.9324 - val_auc: 0.9656 - val_prc: 0.7330
Epoch 12/1000
20/20 [==============================] - 1s 30ms/step - loss: 0.2730 - tp: 18217.0000 - fp: 2016.0000 - tn: 18415.0000 - fn: 2312.0000 - accuracy: 0.8943 - precision: 0.9004 - recall: 0.8874 - auc: 0.9504 - prc: 0.9644 - val_loss: 0.2392 - val_tp: 69.0000 - val_fp: 1017.0000 - val_tn: 44478.0000 - val_fn: 5.0000 - val_accuracy: 0.9776 - val_precision: 0.0635 - val_recall: 0.9324 - val_auc: 0.9680 - val_prc: 0.7343
Epoch 13/1000
20/20 [==============================] - 1s 31ms/step - loss: 0.2616 - tp: 18205.0000 - fp: 1788.0000 - tn: 18682.0000 - fn: 2285.0000 - accuracy: 0.9006 - precision: 0.9106 - recall: 0.8885 - auc: 0.9542 - prc: 0.9668 - val_loss: 0.2235 - val_tp: 69.0000 - val_fp: 963.0000 - val_tn: 44532.0000 - val_fn: 5.0000 - val_accuracy: 0.9788 - val_precision: 0.0669 - val_recall: 0.9324 - val_auc: 0.9710 - val_prc: 0.7393
Epoch 14/1000
20/20 [==============================] - 1s 33ms/step - loss: 0.2495 - tp: 18265.0000 - fp: 1585.0000 - tn: 18898.0000 - fn: 2212.0000 - accuracy: 0.9073 - precision: 0.9202 - recall: 0.8920 - auc: 0.9573 - prc: 0.9690 - val_loss: 0.2101 - val_tp: 68.0000 - val_fp: 952.0000 - val_tn: 44543.0000 - val_fn: 6.0000 - val_accuracy: 0.9790 - val_precision: 0.0667 - val_recall: 0.9189 - val_auc: 0.9729 - val_prc: 0.7423
Epoch 15/1000
20/20 [==============================] - 1s 31ms/step - loss: 0.2436 - tp: 18314.0000 - fp: 1515.0000 - tn: 18927.0000 - fn: 2204.0000 - accuracy: 0.9092 - precision: 0.9236 - recall: 0.8926 - auc: 0.9593 - prc: 0.9702 - val_loss: 0.1978 - val_tp: 68.0000 - val_fp: 936.0000 - val_tn: 44559.0000 - val_fn: 6.0000 - val_accuracy: 0.9793 - val_precision: 0.0677 - val_recall: 0.9189 - val_auc: 0.9748 - val_prc: 0.7431
Epoch 16/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.2339 - tp: 18312.0000 - fp: 1438.0000 - tn: 19063.0000 - fn: 2147.0000 - accuracy: 0.9125 - precision: 0.9272 - recall: 0.8951 - auc: 0.9626 - prc: 0.9720 - val_loss: 0.1869 - val_tp: 68.0000 - val_fp: 928.0000 - val_tn: 44567.0000 - val_fn: 6.0000 - val_accuracy: 0.9795 - val_precision: 0.0683 - val_recall: 0.9189 - val_auc: 0.9756 - val_prc: 0.7338
Epoch 17/1000
20/20 [==============================] - 1s 30ms/step - loss: 0.2266 - tp: 18101.0000 - fp: 1272.0000 - tn: 19393.0000 - fn: 2194.0000 - accuracy: 0.9154 - precision: 0.9343 - recall: 0.8919 - auc: 0.9644 - prc: 0.9728 - val_loss: 0.1770 - val_tp: 68.0000 - val_fp: 906.0000 - val_tn: 44589.0000 - val_fn: 6.0000 - val_accuracy: 0.9800 - val_precision: 0.0698 - val_recall: 0.9189 - val_auc: 0.9761 - val_prc: 0.7346
Epoch 18/1000
20/20 [==============================] - 1s 30ms/step - loss: 0.2223 - tp: 18367.0000 - fp: 1230.0000 - tn: 19225.0000 - fn: 2138.0000 - accuracy: 0.9178 - precision: 0.9372 - recall: 0.8957 - auc: 0.9655 - prc: 0.9740 - val_loss: 0.1683 - val_tp: 68.0000 - val_fp: 915.0000 - val_tn: 44580.0000 - val_fn: 6.0000 - val_accuracy: 0.9798 - val_precision: 0.0692 - val_recall: 0.9189 - val_auc: 0.9756 - val_prc: 0.7358
Epoch 19/1000
20/20 [==============================] - 1s 32ms/step - loss: 0.2154 - tp: 18395.0000 - fp: 1122.0000 - tn: 19340.0000 - fn: 2103.0000 - accuracy: 0.9213 - precision: 0.9425 - recall: 0.8974 - auc: 0.9678 - prc: 0.9755 - val_loss: 0.1604 - val_tp: 68.0000 - val_fp: 916.0000 - val_tn: 44579.0000 - val_fn: 6.0000 - val_accuracy: 0.9798 - val_precision: 0.0691 - val_recall: 0.9189 - val_auc: 0.9756 - val_prc: 0.7276
Epoch 20/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.2068 - tp: 18310.0000 - fp: 1045.0000 - tn: 19525.0000 - fn: 2080.0000 - accuracy: 0.9237 - precision: 0.9460 - recall: 0.8980 - auc: 0.9703 - prc: 0.9769 - val_loss: 0.1530 - val_tp: 68.0000 - val_fp: 923.0000 - val_tn: 44572.0000 - val_fn: 6.0000 - val_accuracy: 0.9796 - val_precision: 0.0686 - val_recall: 0.9189 - val_auc: 0.9748 - val_prc: 0.7285
Epoch 21/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.2037 - tp: 18369.0000 - fp: 1007.0000 - tn: 19508.0000 - fn: 2076.0000 - accuracy: 0.9247 - precision: 0.9480 - recall: 0.8985 - auc: 0.9714 - prc: 0.9777 - val_loss: 0.1463 - val_tp: 68.0000 - val_fp: 922.0000 - val_tn: 44573.0000 - val_fn: 6.0000 - val_accuracy: 0.9796 - val_precision: 0.0687 - val_recall: 0.9189 - val_auc: 0.9749 - val_prc: 0.7290
Epoch 22/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.1998 - tp: 18566.0000 - fp: 1022.0000 - tn: 19262.0000 - fn: 2110.0000 - accuracy: 0.9235 - precision: 0.9478 - recall: 0.8979 - auc: 0.9722 - prc: 0.9785 - val_loss: 0.1406 - val_tp: 69.0000 - val_fp: 918.0000 - val_tn: 44577.0000 - val_fn: 5.0000 - val_accuracy: 0.9797 - val_precision: 0.0699 - val_recall: 0.9324 - val_auc: 0.9752 - val_prc: 0.7296
Epoch 23/1000
20/20 [==============================] - 1s 28ms/step - loss: 0.1945 - tp: 18381.0000 - fp: 987.0000 - tn: 19539.0000 - fn: 2053.0000 - accuracy: 0.9258 - precision: 0.9490 - recall: 0.8995 - auc: 0.9745 - prc: 0.9796 - val_loss: 0.1350 - val_tp: 69.0000 - val_fp: 914.0000 - val_tn: 44581.0000 - val_fn: 5.0000 - val_accuracy: 0.9798 - val_precision: 0.0702 - val_recall: 0.9324 - val_auc: 0.9750 - val_prc: 0.7299
Epoch 24/1000
20/20 [==============================] - 1s 29ms/step - loss: 0.1892 - tp: 18437.0000 - fp: 930.0000 - tn: 19564.0000 - fn: 2029.0000 - accuracy: 0.9278 - precision: 0.9520 - recall: 0.9009 - auc: 0.9757 - prc: 0.9804 - val_loss: 0.1302 - val_tp: 69.0000 - val_fp: 925.0000 - val_tn: 44570.0000 - val_fn: 5.0000 - val_accuracy: 0.9796 - val_precision: 0.0694 - val_recall: 0.9324 - val_auc: 0.9746 - val_prc: 0.7302
Epoch 25/1000
18/20 [==========================>...] - ETA: 0s - loss: 0.1876 - tp: 16760.0000 - fp: 807.0000 - tn: 17477.0000 - fn: 1820.0000 - accuracy: 0.9287 - precision: 0.9541 - recall: 0.9020 - auc: 0.9762 - prc: 0.9811
Restoring model weights from the end of the best epoch: 15.
20/20 [==============================] - 1s 32ms/step - loss: 0.1884 - tp: 18564.0000 - fp: 888.0000 - tn: 19489.0000 - fn: 2019.0000 - accuracy: 0.9290 - precision: 0.9543 - recall: 0.9019 - auc: 0.9761 - prc: 0.9809 - val_loss: 0.1259 - val_tp: 69.0000 - val_fp: 927.0000 - val_tn: 44568.0000 - val_fn: 5.0000 - val_accuracy: 0.9795 - val_precision: 0.0693 - val_recall: 0.9324 - val_auc: 0.9741 - val_prc: 0.7312
Epoch 25: early stopping
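The log ends with "Restoring model weights from the end of the best epoch: 15" followed by "Epoch 25: early stopping". The sketch below replays the `val_prc` values from the log through a simplified patience rule to show why training would stop at epoch 25 and roll back to epoch 15. It assumes the callback monitors `val_prc` in "max" mode with a patience of 10; those settings are not shown in this section, and the helper `simulate_early_stopping` is a hypothetical illustration, not the Keras implementation.

```python
# val_prc for epochs 1..25, copied from the training log above.
val_prc = [
    0.4022, 0.6033, 0.6616, 0.6841, 0.7010,
    0.6994, 0.7071, 0.7126, 0.7262, 0.7284,
    0.7330, 0.7343, 0.7393, 0.7423, 0.7431,
    0.7338, 0.7346, 0.7358, 0.7276, 0.7285,
    0.7290, 0.7296, 0.7299, 0.7302, 0.7312,
]


def simulate_early_stopping(values, patience):
    """Replay a metric to maximize and return (best_epoch, stop_epoch), 1-indexed.

    stop_epoch is None if the patience budget is never exhausted.
    """
    best_value = float("-inf")
    best_epoch = 0
    wait = 0  # consecutive epochs without a strict improvement
    for epoch, value in enumerate(values, start=1):
        if value > best_value:
            best_value, best_epoch, wait = value, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                return best_epoch, epoch
    return best_epoch, None


# patience=10 is an assumption; it is consistent with the log above.
best_epoch, stop_epoch = simulate_early_stopping(val_prc, patience=10)
print("best epoch:", best_epoch, "stopped at epoch:", stop_epoch)
```

Under these assumptions the best `val_prc` (0.7431) occurs at epoch 15, and ten non-improving epochs later training stops at epoch 25, matching the log.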

Re-check training history

plot_metrics(resampled_history)

Evaluate metrics

train_predictions_resampled = resampled_model.predict(train_features, batch_size=BATCH_SIZE)

test_predictions_resampled = resampled_model.predict(test_features, batch_size=BATCH_SIZE)

90/90 [==============================] - 0s 1ms/step
28/28 [==============================] - 0s 1ms/step

resampled_results = resampled_model.evaluate(test_features, test_labels,
                                             batch_size=BATCH_SIZE, verbose=0)

for name, value in zip(resampled_model.metrics_names, resampled_results):
  print(name, ': ', value)

print()

plot_cm(test_labels, test_predictions_resampled)

loss : 0.1987897902727127
tp : 91.0
fp : 1216.0
tn : 55642.0
fn : 13.0
accuracy : 0.9784241914749146
precision : 0.06962509453296661
recall : 0.875
auc : 0.9669501185417175
prc : 0.7082945108413696

Legitimate Transactions Detected (True Negatives): 55642
Legitimate Transactions Incorrectly Detected (False Positives): 1216
Fraudulent Transactions Missed (False Negatives): 13
Fraudulent Transactions Detected (True Positives): 91
Total Fraudulent Transactions: 104
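The precision, recall, and accuracy reported for the resampled model follow directly from the four confusion-matrix counts. As a quick sanity check, the sketch below recomputes them from the `tp`, `fp`, `tn`, and `fn` values printed in the test-set output; the variable names are chosen for illustration only.

```python
# Confusion-matrix counts from the resampled model's test-set evaluation.
tp, fp, tn, fn = 91, 1216, 55642, 13

# Precision: of all transactions flagged as fraud, the share that really were fraud.
precision = tp / (tp + fp)

# Recall: of all fraudulent transactions, the share the model caught.
recall = tp / (tp + fn)

# Accuracy: share of all transactions classified correctly.
accuracy = (tp + tn) / (tp + fp + tn + fn)

# False positive rate: share of legitimate transactions wrongly flagged.
false_positive_rate = fp / (fp + tn)

print(f"precision: {precision:.4f}")
print(f"recall:    {recall:.4f}")
print(f"accuracy:  {accuracy:.4f}")
print(f"fpr:       {false_positive_rate:.4f}")
```

The high accuracy comes almost entirely from the 55,642 true negatives, while precision stays near 7% because the 1,216 false positives far outnumber the 91 detected frauds. This is why accuracy alone is a poor summary on data this imbalanced.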

Plot the ROC

plot_roc("Train Baseline", train_labels, train_predictions_baseline, color=colors[0])

plot_roc("Test Baseline", test_labels, test_predictions_baseline, color=colors[0], linestyle='--')

plot_roc("Train Weighted", train_labels, train_predictions_weighted, color=colors[1])

plot_roc("Test Weighted", test_labels, test_predictions_weighted, color=colors[1], linestyle='--')

plot_roc("Train Resampled", train_labels, train_predictions_resampled, color=colors[2])

plot_roc("Test Resampled", test_labels, test_predictions_resampled, color=colors[2], linestyle='--')

plt.legend(loc='lower right');

Plot the AUPRC

plot_prc("Train Baseline", train_labels, train_predictions_baseline, color=colors[0])

plot_prc("Test Baseline", test_labels, test_predictions_baseline, color=colors[0], linestyle='--')

plot_prc("Train Weighted", train_labels, train_predictions_weighted, color=colors[1])

plot_prc("Test Weighted", test_labels, test_predictions_weighted, color=colors[1], linestyle='--')

plot_prc("Train Resampled", train_labels, train_predictions_resampled, color=colors[2])

plot_prc("Test Resampled", test_labels, test_predictions_resampled, color=colors[2], linestyle='--')

plt.legend(loc='lower right');

Applying this tutorial to your problem

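Imbalanced data classification is an inherently difficult task because there are so few minority-class samples to learn from. Always start with the data: collect as many samples as you can, and think carefully about which features may be relevant so that the model can get the most out of your minority class. At some point the model may struggle to improve and to yield the results you want, so it is important to keep in mind the context of your problem and the trade-offs between different types of errors.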
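One way to make the trade-off between error types concrete is to assign a cost to each kind of mistake and pick the decision threshold that minimizes the total. The sketch below is a minimal illustration under stated assumptions: the labels, scores, candidate thresholds, and cost values are all made up, and the helpers `expected_cost` and `best_threshold` are hypothetical names, not part of Keras or this tutorial.

```python
def expected_cost(labels, scores, threshold, cost_fp, cost_fn):
    """Total cost of errors when scores >= threshold are predicted positive."""
    fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s >= threshold)
    fn = sum(1 for y, s in zip(labels, scores) if y == 1 and s < threshold)
    return fp * cost_fp + fn * cost_fn


def best_threshold(labels, scores, thresholds, cost_fp, cost_fn):
    """Return the candidate threshold with the lowest total error cost."""
    return min(
        thresholds,
        key=lambda t: expected_cost(labels, scores, t, cost_fp, cost_fn),
    )


# Toy data (made up): 1 = fraud, 0 = legitimate; scores are model probabilities.
labels = [0, 0, 0, 0, 0, 0, 1, 1]
scores = [0.05, 0.10, 0.20, 0.35, 0.60, 0.70, 0.40, 0.90]
thresholds = [0.1, 0.3, 0.5, 0.7, 0.9]

# Hypothetical costs: a missed fraud is 50x as costly as a false alarm.
costly_misses = best_threshold(labels, scores, thresholds, cost_fp=1, cost_fn=50)

# Hypothetical costs: both error types cost the same.
equal_costs = best_threshold(labels, scores, thresholds, cost_fp=1, cost_fn=1)

print("threshold when misses are expensive:", costly_misses)
print("threshold when costs are equal:", equal_costs)
```

When a missed fraud is expensive, the preferred threshold drops so that more transactions are flagged. When the two errors cost the same, a higher threshold that raises fewer false alarms wins. On real data the same idea would be applied to held-out validation predictions rather than a handful of toy scores.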