Visualizza su TensorFlow.org Visualizza su TensorFlow.org |  Esegui in Google Colab Esegui in Google Colab |  Visualizza l'origine su GitHub Visualizza l'origine su GitHub |  Scarica quaderno Scarica quaderno |

Panoramica

L'API tf.distribute.Strategy fornisce un'astrazione per la distribuzione della formazione su più unità di elaborazione. Ti consente di svolgere formazione distribuita utilizzando modelli e codice di formazione esistenti con modifiche minime.

Questo tutorial mostra come usare tf.distribute.MirroredStrategy per eseguire la replica in-graph con training sincrono su molte GPU su una macchina . La strategia essenzialmente copia tutte le variabili del modello su ciascun processore. Quindi, utilizza tutto-riduci per combinare i gradienti di tutti i processori e applica il valore combinato a tutte le copie del modello.

Utilizzerai le API tf.keras per costruire il modello e Model.fit per addestrarlo. (Per informazioni sulla formazione distribuita con un ciclo di formazione personalizzato e MirroredStrategy , consulta questo tutorial .)

MirroredStrategy addestra il tuo modello su più GPU su una singola macchina. Per l'addestramento sincrono su molte GPU su più lavoratori , utilizzare tf.distribute.MultiWorkerMirroredStrategy con Keras Model.fit o un ciclo di addestramento personalizzato . Per altre opzioni, fare riferimento alla Guida alla formazione distribuita .

Per conoscere varie altre strategie, c'è la guida Distributed training with TensorFlow .

Impostare

import tensorflow_datasets as tfds

import tensorflow as tf

import os

# Load the TensorBoard notebook extension.

%load_ext tensorboard

print(tf.__version__)

2.8.0-rc1

Scarica il dataset

Carica il set di dati MNIST dai set di dati TensorFlow . Questo restituisce un set di dati nel formato tf.data .

L'impostazione dell'argomento with_info su True include i metadati per l'intero set di dati, che viene salvato qui in info . Tra le altre cose, questo oggetto di metadati include il numero di esempi di treni e test.

datasets, info = tfds.load(name='mnist', with_info=True, as_supervised=True)

mnist_train, mnist_test = datasets['train'], datasets['test']

Definire la strategia di distribuzione

Crea un oggetto MirroredStrategy . Questo gestirà la distribuzione e fornirà un gestore del contesto ( MirroredStrategy.scope ) per creare il tuo modello all'interno.

strategy = tf.distribute.MirroredStrategy()

INFO:tensorflow:Using MirroredStrategy with devices ('/job:localhost/replica:0/task:0/device:GPU:0',)

INFO:tensorflow:Using MirroredStrategy with devices ('/job:localhost/replica:0/task:0/device:GPU:0',)

print('Number of devices: {}'.format(strategy.num_replicas_in_sync))

Number of devices: 1

Configura la pipeline di input

Quando si esegue il training di un modello con più GPU, è possibile utilizzare la potenza di elaborazione aggiuntiva in modo efficace aumentando le dimensioni del batch. In generale, usa la dimensione batch più grande che si adatta alla memoria della GPU e ottimizza la velocità di apprendimento di conseguenza.

# You can also do info.splits.total_num_examples to get the total

# number of examples in the dataset.

num_train_examples = info.splits['train'].num_examples

num_test_examples = info.splits['test'].num_examples

BUFFER_SIZE = 10000

BATCH_SIZE_PER_REPLICA = 64

BATCH_SIZE = BATCH_SIZE_PER_REPLICA * strategy.num_replicas_in_sync

Definire una funzione che normalizza i valori dei pixel dell'immagine dall'intervallo [0, 255] all'intervallo [0, 1] ( ridimensionamento delle funzioni ):

def scale(image, label):

image = tf.cast(image, tf.float32)

image /= 255

return image, label

Applicare questa funzione di scale ai dati di addestramento e test, quindi utilizzare le API tf.data.Dataset per mescolare i dati di addestramento ( Dataset.shuffle ) e raggrupparli ( Dataset.batch ). Si noti che si mantiene anche una cache in memoria dei dati di addestramento per migliorare le prestazioni ( Dataset.cache ).

train_dataset = mnist_train.map(scale).cache().shuffle(BUFFER_SIZE).batch(BATCH_SIZE)

eval_dataset = mnist_test.map(scale).batch(BATCH_SIZE)

Crea il modello

Crea e compila il modello Keras nel contesto di Strategy.scope :

with strategy.scope():

model = tf.keras.Sequential([

tf.keras.layers.Conv2D(32, 3, activation='relu', input_shape=(28, 28, 1)),

tf.keras.layers.MaxPooling2D(),

tf.keras.layers.Flatten(),

tf.keras.layers.Dense(64, activation='relu'),

tf.keras.layers.Dense(10)

])

model.compile(loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),

optimizer=tf.keras.optimizers.Adam(),

metrics=['accuracy'])

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

Definisci le richiamate

Definisci i seguenti tf.keras.callbacks :

-

tf.keras.callbacks.TensorBoard: scrive un log per TensorBoard, che permette di visualizzare i grafici. -

tf.keras.callbacks.ModelCheckpoint: salva il modello a una certa frequenza, ad esempio dopo ogni epoca. -

tf.keras.callbacks.LearningRateScheduler: programma la velocità di apprendimento in modo che cambi dopo, ad esempio, ogni epoca/batch.

A scopo illustrativo, aggiungi una richiamata personalizzata denominata PrintLR per visualizzare la velocità di apprendimento nel blocco appunti.

# Define the checkpoint directory to store the checkpoints.

checkpoint_dir = './training_checkpoints'

# Define the name of the checkpoint files.

checkpoint_prefix = os.path.join(checkpoint_dir, "ckpt_{epoch}")

# Define a function for decaying the learning rate.

# You can define any decay function you need.

def decay(epoch):

if epoch < 3:

return 1e-3

elif epoch >= 3 and epoch < 7:

return 1e-4

else:

return 1e-5

# Define a callback for printing the learning rate at the end of each epoch.

class PrintLR(tf.keras.callbacks.Callback):

def on_epoch_end(self, epoch, logs=None):

print('\nLearning rate for epoch {} is {}'.format(epoch + 1,

model.optimizer.lr.numpy()))

# Put all the callbacks together.

callbacks = [

tf.keras.callbacks.TensorBoard(log_dir='./logs'),

tf.keras.callbacks.ModelCheckpoint(filepath=checkpoint_prefix,

save_weights_only=True),

tf.keras.callbacks.LearningRateScheduler(decay),

PrintLR()

]

Allenati e valuta

Ora, addestra il modello nel solito modo chiamando Model.fit sul modello e passando il set di dati creato all'inizio del tutorial. Questo passaggio è lo stesso indipendentemente dal fatto che tu stia distribuendo o meno la formazione.

EPOCHS = 12

model.fit(train_dataset, epochs=EPOCHS, callbacks=callbacks)

2022-01-26 05:38:28.865380: W tensorflow/core/grappler/optimizers/data/auto_shard.cc:547] The `assert_cardinality` transformation is currently not handled by the auto-shard rewrite and will be removed.

Epoch 1/12

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

933/938 [============================>.] - ETA: 0s - loss: 0.2029 - accuracy: 0.9399

Learning rate for epoch 1 is 0.0010000000474974513

938/938 [==============================] - 10s 4ms/step - loss: 0.2022 - accuracy: 0.9401 - lr: 0.0010

Epoch 2/12

930/938 [============================>.] - ETA: 0s - loss: 0.0654 - accuracy: 0.9813

Learning rate for epoch 2 is 0.0010000000474974513

938/938 [==============================] - 3s 3ms/step - loss: 0.0652 - accuracy: 0.9813 - lr: 0.0010

Epoch 3/12

931/938 [============================>.] - ETA: 0s - loss: 0.0453 - accuracy: 0.9864

Learning rate for epoch 3 is 0.0010000000474974513

938/938 [==============================] - 3s 3ms/step - loss: 0.0453 - accuracy: 0.9864 - lr: 0.0010

Epoch 4/12

923/938 [============================>.] - ETA: 0s - loss: 0.0246 - accuracy: 0.9933

Learning rate for epoch 4 is 9.999999747378752e-05

938/938 [==============================] - 3s 3ms/step - loss: 0.0244 - accuracy: 0.9934 - lr: 1.0000e-04

Epoch 5/12

929/938 [============================>.] - ETA: 0s - loss: 0.0211 - accuracy: 0.9944

Learning rate for epoch 5 is 9.999999747378752e-05

938/938 [==============================] - 3s 3ms/step - loss: 0.0212 - accuracy: 0.9944 - lr: 1.0000e-04

Epoch 6/12

930/938 [============================>.] - ETA: 0s - loss: 0.0192 - accuracy: 0.9950

Learning rate for epoch 6 is 9.999999747378752e-05

938/938 [==============================] - 3s 3ms/step - loss: 0.0194 - accuracy: 0.9950 - lr: 1.0000e-04

Epoch 7/12

927/938 [============================>.] - ETA: 0s - loss: 0.0179 - accuracy: 0.9953

Learning rate for epoch 7 is 9.999999747378752e-05

938/938 [==============================] - 3s 3ms/step - loss: 0.0179 - accuracy: 0.9953 - lr: 1.0000e-04

Epoch 8/12

938/938 [==============================] - ETA: 0s - loss: 0.0153 - accuracy: 0.9966

Learning rate for epoch 8 is 9.999999747378752e-06

938/938 [==============================] - 3s 3ms/step - loss: 0.0153 - accuracy: 0.9966 - lr: 1.0000e-05

Epoch 9/12

927/938 [============================>.] - ETA: 0s - loss: 0.0151 - accuracy: 0.9966

Learning rate for epoch 9 is 9.999999747378752e-06

938/938 [==============================] - 3s 3ms/step - loss: 0.0150 - accuracy: 0.9966 - lr: 1.0000e-05

Epoch 10/12

935/938 [============================>.] - ETA: 0s - loss: 0.0148 - accuracy: 0.9966

Learning rate for epoch 10 is 9.999999747378752e-06

938/938 [==============================] - 3s 3ms/step - loss: 0.0148 - accuracy: 0.9966 - lr: 1.0000e-05

Epoch 11/12

937/938 [============================>.] - ETA: 0s - loss: 0.0146 - accuracy: 0.9967

Learning rate for epoch 11 is 9.999999747378752e-06

938/938 [==============================] - 3s 3ms/step - loss: 0.0146 - accuracy: 0.9967 - lr: 1.0000e-05

Epoch 12/12

926/938 [============================>.] - ETA: 0s - loss: 0.0145 - accuracy: 0.9967

Learning rate for epoch 12 is 9.999999747378752e-06

938/938 [==============================] - 3s 3ms/step - loss: 0.0144 - accuracy: 0.9967 - lr: 1.0000e-05

<keras.callbacks.History at 0x7fad70067c10>

Verifica i checkpoint salvati:

# Check the checkpoint directory.ls {checkpoint_dir}

checkpoint ckpt_4.data-00000-of-00001 ckpt_1.data-00000-of-00001 ckpt_4.index ckpt_1.index ckpt_5.data-00000-of-00001 ckpt_10.data-00000-of-00001 ckpt_5.index ckpt_10.index ckpt_6.data-00000-of-00001 ckpt_11.data-00000-of-00001 ckpt_6.index ckpt_11.index ckpt_7.data-00000-of-00001 ckpt_12.data-00000-of-00001 ckpt_7.index ckpt_12.index ckpt_8.data-00000-of-00001 ckpt_2.data-00000-of-00001 ckpt_8.index ckpt_2.index ckpt_9.data-00000-of-00001 ckpt_3.data-00000-of-00001 ckpt_9.index ckpt_3.index

Per verificare le prestazioni del modello, caricare l'ultimo checkpoint e chiamare Model.evaluate sui dati del test:

model.load_weights(tf.train.latest_checkpoint(checkpoint_dir))

eval_loss, eval_acc = model.evaluate(eval_dataset)

print('Eval loss: {}, Eval accuracy: {}'.format(eval_loss, eval_acc))

2022-01-26 05:39:15.260539: W tensorflow/core/grappler/optimizers/data/auto_shard.cc:547] The `assert_cardinality` transformation is currently not handled by the auto-shard rewrite and will be removed. 157/157 [==============================] - 2s 4ms/step - loss: 0.0373 - accuracy: 0.9879 Eval loss: 0.03732967749238014, Eval accuracy: 0.9879000186920166

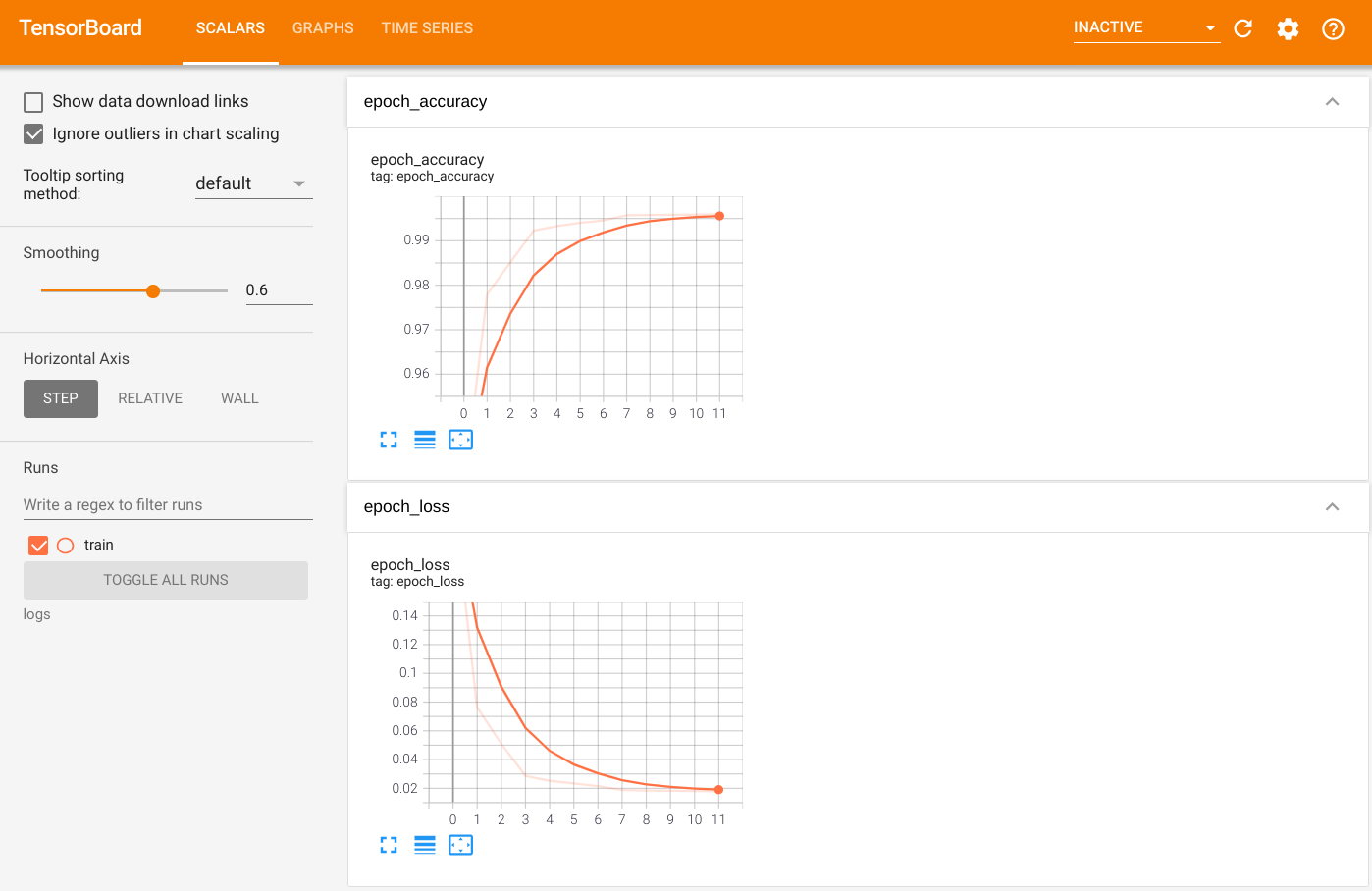

Per visualizzare l'output, avviare TensorBoard e visualizzare i log:

%tensorboard --logdir=logs

ls -sh ./logs

total 4.0K 4.0K train

Esporta in modello salvato

Esportare il grafico e le variabili nel formato SavedModel indipendente dalla piattaforma utilizzando Model.save . Dopo aver salvato il modello, puoi caricarlo con o senza Strategy.scope .

path = 'saved_model/'

model.save(path, save_format='tf')

2022-01-26 05:39:18.012847: W tensorflow/python/util/util.cc:368] Sets are not currently considered sequences, but this may change in the future, so consider avoiding using them. INFO:tensorflow:Assets written to: saved_model/assets INFO:tensorflow:Assets written to: saved_model/assets

Ora carica il modello senza Strategy.scope :

unreplicated_model = tf.keras.models.load_model(path)

unreplicated_model.compile(

loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),

optimizer=tf.keras.optimizers.Adam(),

metrics=['accuracy'])

eval_loss, eval_acc = unreplicated_model.evaluate(eval_dataset)

print('Eval loss: {}, Eval Accuracy: {}'.format(eval_loss, eval_acc))

157/157 [==============================] - 1s 2ms/step - loss: 0.0373 - accuracy: 0.9879 Eval loss: 0.03732967749238014, Eval Accuracy: 0.9879000186920166

Carica il modello con Strategy.scope :

with strategy.scope():

replicated_model = tf.keras.models.load_model(path)

replicated_model.compile(loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),

optimizer=tf.keras.optimizers.Adam(),

metrics=['accuracy'])

eval_loss, eval_acc = replicated_model.evaluate(eval_dataset)

print ('Eval loss: {}, Eval Accuracy: {}'.format(eval_loss, eval_acc))

2022-01-26 05:39:19.489971: W tensorflow/core/grappler/optimizers/data/auto_shard.cc:547] The `assert_cardinality` transformation is currently not handled by the auto-shard rewrite and will be removed. 157/157 [==============================] - 3s 3ms/step - loss: 0.0373 - accuracy: 0.9879 Eval loss: 0.03732967749238014, Eval Accuracy: 0.9879000186920166

Risorse addizionali

Altri esempi che utilizzano diverse strategie di distribuzione con l'API Keras Model.fit :

- Il tutorial Risolvi attività GLUE utilizzando BERT su TPU utilizza

tf.distribute.MirroredStrategyper la formazione su GPU etf.distribute.TPUStrategy— su TPU. - L'esercitazione Salva e carica un modello utilizzando una strategia di distribuzione mostra come utilizzare le API SavedModel con

tf.distribute.Strategy. - I modelli ufficiali di TensorFlow possono essere configurati per eseguire più strategie di distribuzione.

Per saperne di più sulle strategie di distribuzione di TensorFlow:

- L'esercitazione Formazione personalizzata con tf.distribute.Strategy mostra come usare

tf.distribute.MirroredStrategyper la formazione per singolo lavoratore con un ciclo di formazione personalizzato. - L'esercitazione MultiWorker con Keras mostra come utilizzare

MultiWorkerMirroredStrategyconModel.fit. - L' esercitazione Ciclo di formazione personalizzato con Keras e MultiWorkerMirroredStrategy mostra come usare

MultiWorkerMirroredStrategycon Keras e un ciclo di formazione personalizzato. - La guida Formazione distribuita in TensorFlow fornisce una panoramica delle strategie di distribuzione disponibili.

- La guida Prestazioni migliori con tf.function fornisce informazioni su altre strategie e strumenti, come TensorFlow Profiler che puoi utilizzare per ottimizzare le prestazioni dei tuoi modelli TensorFlow.