- Description:

A re-labeled version of the CIFAR-10 test set with soft labels from real human annotators. For every (image, label) pair in the original CIFAR-10 test set, it provides several additional labels given by human annotators, as well as the averaged soft label. The training set is identical to that of the original dataset.
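To make the relationship between the individual human labels and the averaged soft label concrete, here is a minimal sketch. It assumes the soft label is the fraction of annotator votes each class received; the function name `soft_label_from_votes` and the sample votes are hypothetical and are not part of the dataset's code.

```python
from collections import Counter

NUM_CLASSES = 10  # CIFAR-10 has ten classes


def soft_label_from_votes(human_labels, num_classes=NUM_CLASSES):
    """Return the fraction of annotator votes received by each class.

    Assumption: the soft label is the normalized histogram of votes.
    """
    if not human_labels:
        raise ValueError("at least one human label is required")
    if any(not 0 <= label < num_classes for label in human_labels):
        raise ValueError("labels must lie in [0, num_classes)")
    counts = Counter(human_labels)
    total = len(human_labels)
    return [counts.get(c, 0) / total for c in range(num_classes)]


# Hypothetical votes from four annotators for a single image.
votes = [3, 3, 5, 3]
print(soft_label_from_votes(votes))
# [0.0, 0.0, 0.0, 0.75, 0.0, 0.25, 0.0, 0.0, 0.0, 0.0]
```

Under this assumption, an image on which all annotators agree yields a one-hot vector, while disagreement spreads probability mass across classes.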

Homepage: https://github.com/jcpeterson/cifar-10h

Source code: tfds.image_classification.cifar10_h.Cifar10H

Versions:

1.0.0 (default): Initial release.

Download size: 172.92 MiB

Dataset size: 144.85 MiB

Auto-cached (documentation): Yes

Splits:

| Split | Examples |
|---|---|
| 'test' | 10,000 |
| 'train' | 50,000 |
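A minimal sketch of loading one of the splits listed above. The dataset name `"cifar10_h"` is an assumption inferred from the source-code path, and `load_cifar10_h` and `SPLIT_SIZES` are hypothetical helpers. The TFDS import is done lazily so that the split check works even where `tensorflow-datasets` is not installed.

```python
# Split sizes as listed in the splits table.
SPLIT_SIZES = {"train": 50_000, "test": 10_000}


def load_cifar10_h(split="test"):
    """Load one split through TFDS (dataset name 'cifar10_h' is assumed)."""
    if split not in SPLIT_SIZES:
        raise ValueError(
            f"unknown split {split!r}; expected one of {sorted(SPLIT_SIZES)}"
        )
    # Imported lazily; requires the tensorflow-datasets package.
    import tensorflow_datasets as tfds

    return tfds.load("cifar10_h", split=split)


# Usage (triggers the download on first call):
# ds = load_cifar10_h("test")
# for example in ds.take(1):
#     print(example["soft_label"])
```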

- Feature structure:

FeaturesDict({

'annotator_ids': Sequence(Scalar(shape=(), dtype=int32)),

'human_labels': Sequence(ClassLabel(shape=(), dtype=int64, num_classes=10)),

'id': Text(shape=(), dtype=string),

'image': Image(shape=(32, 32, 3), dtype=uint8),

'label': ClassLabel(shape=(), dtype=int64, num_classes=10),

'reaction_times': Sequence(Scalar(shape=(), dtype=float32)),

'soft_label': Tensor(shape=(10,), dtype=float32),

'trial_indices': Sequence(Scalar(shape=(), dtype=int32)),

})
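The feature structure above contains several variable-length sequences per image. The sketch below shows one way to summarize them, assuming that `annotator_ids`, `human_labels`, and `reaction_times` are aligned index by index (entry `i` of each belongs to the same annotation); the source does not state this explicitly. The helper `summarize_annotations` and all values in the sample dict are made up for illustration, and the unit of `reaction_times` is not specified here.

```python
def summarize_annotations(example):
    """Summarize the per-annotation fields of one example given as a plain dict."""
    labels = list(example["human_labels"])
    times = list(example["reaction_times"])
    ids = list(example["annotator_ids"])
    # Assumption: the sequences are parallel, one entry per annotation.
    if not (len(labels) == len(times) == len(ids)):
        raise ValueError("per-annotation sequences must have equal length")
    n = len(labels)
    agree = sum(1 for label in labels if label == example["label"])
    return {
        "num_annotations": n,
        "agreement": agree / n if n else 0.0,
        "mean_reaction_time": sum(times) / n if n else 0.0,
    }


# Hypothetical example mirroring the feature names; values are invented.
example = {
    "id": "test_00000",
    "label": 3,
    "annotator_ids": [11, 42, 7, 19],
    "human_labels": [3, 3, 5, 3],
    "reaction_times": [1200.0, 800.0, 1500.0, 500.0],
    "trial_indices": [4, 17, 2, 60],
}
print(summarize_annotations(example))
# {'num_annotations': 4, 'agreement': 0.75, 'mean_reaction_time': 1000.0}
```

With a real TFDS example, the tensors would first need converting to Python or NumPy values, for instance via `tfds.as_numpy`.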

- Feature documentation:

| Feature | Class | Shape | Dtype | Description |
|---|---|---|---|---|
| | FeaturesDict | | | |
| annotator_ids | Sequence(Scalar) | (None,) | int32 | |
| human_labels | Sequence(ClassLabel) | (None,) | int64 | |
| id | Text | | string | |
| image | Image | (32, 32, 3) | uint8 | |
| label | ClassLabel | | int64 | |
| reaction_times | Sequence(Scalar) | (None,) | float32 | |
| soft_label | Tensor | (10,) | float32 | |
| trial_indices | Sequence(Scalar) | (None,) | int32 | |

Supervised keys (See

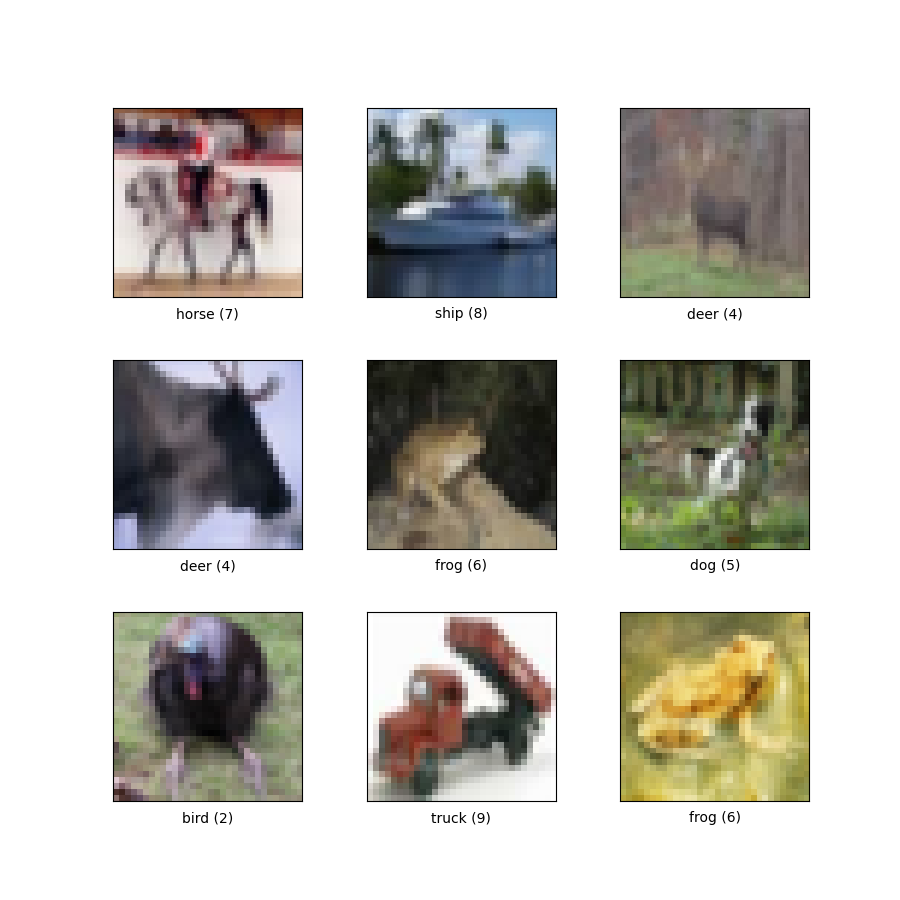

as_superviseddoc):NoneFigure (tfds.show_examples):

- Examples (tfds.as_dataframe):

- Citation:

@inproceedings{wei2022learning,

title={Human uncertainty makes classification more robust},

author={Joshua C. Peterson and Ruairidh M. Battleday and Thomas L. Griffiths and Olga Russakovsky},

booktitle={IEEE International Conference on Computer Vision and Pattern Recognition (CVPR)},

year={2019}

}