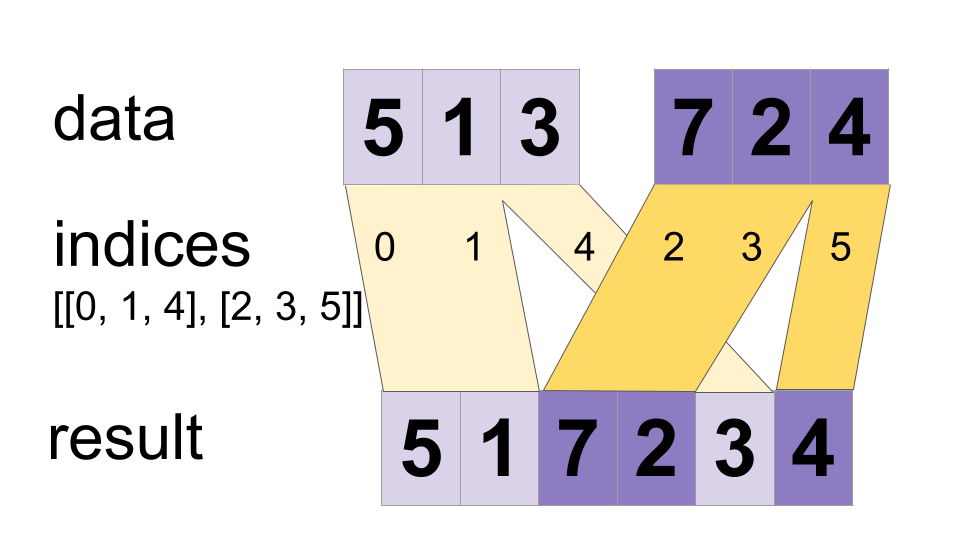

Interleave the values from the data tensors into a single tensor.

```python
tf.dynamic_stitch(
    indices: Annotated[List[Any], _atypes.Int32],
    data: Annotated[List[Any], TV_DynamicStitch_T],
    name=None
) -> Annotated[Any, TV_DynamicStitch_T]
```

Builds a merged tensor such that

```python
merged[indices[m][i, ..., j], ...] = data[m][i, ..., j, ...]
```

For example, if each `indices[m]` is a scalar or a vector, we have:

```python
# Scalar indices:
merged[indices[m], ...] = data[m][...]

# Vector indices:
merged[indices[m][i], ...] = data[m][i, ...]
```

Each `data[i].shape` must start with the corresponding `indices[i].shape`, and the rest of `data[i].shape` must be the same for every `i`. That is, we must have `data[i].shape = indices[i].shape + constant`. In terms of this `constant`, the output shape is

```python
merged.shape = [max(indices) + 1] + constant
```

where `max(indices)` is the largest value found in any of the `indices` tensors.
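The shape rule can be checked mechanically. The following is a minimal NumPy sketch, not TensorFlow's implementation; `stitch_output_shape` is a hypothetical helper name. It verifies that each `data[i]` shape starts with the matching `indices[i]` shape, that the trailing `constant` part agrees across all `i`, and then computes `[max(indices) + 1] + constant`.

```python
import numpy as np

def stitch_output_shape(indices, data):
    """Hypothetical helper: output shape of a stitch under the rule
    data[i].shape == indices[i].shape + constant."""
    constant = None
    for idx, d in zip(indices, data):
        idx_shape, d_shape = np.shape(idx), np.shape(d)
        # data[i].shape must start with indices[i].shape ...
        if d_shape[:len(idx_shape)] != idx_shape:
            raise ValueError(f"{d_shape} does not start with {idx_shape}")
        # ... and whatever follows (the "constant") must match for every i.
        tail = d_shape[len(idx_shape):]
        if constant is None:
            constant = tail
        elif tail != constant:
            raise ValueError(f"trailing shape {tail} differs from {constant}")
    # The first dimension is one more than the largest index anywhere.
    max_index = max((int(np.max(i)) for i in indices if np.size(i)), default=-1)
    return (max_index + 1,) + constant

# One scalar index and one vector index; the trailing constant is (2,).
print(stitch_output_shape([0, [2, 1]], [[1., 2.], [[5., 6.], [3., 4.]]]))  # (3, 2)
```

A scalar index contributes an empty prefix `()`, so its whole `data[i]` becomes one row of the output.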

Values are merged in order, so if an index appears in both `indices[m][i]` and `indices[n][j]` with `(m,i) < (n,j)` in lexicographic order, the slice `data[n][j]` (the later one) will appear in the merged result. If you do not need this guarantee, `ParallelDynamicStitch` might perform better on some devices.
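To make the ordering guarantee concrete, here is a sequential NumPy reference sketch of the merge rule. It is an illustration under stated assumptions, not TensorFlow's kernel: `reference_dynamic_stitch` is a hypothetical name, and output rows that no index refers to are zero-filled here only as an assumption of this sketch (the text above does not specify them). Visiting `(m, i)` in lexicographic order and overwriting on each write means the last occurrence of an index wins.

```python
import numpy as np

def reference_dynamic_stitch(indices, data):
    """Sequential NumPy sketch of dynamic stitch semantics (not TF's kernel)."""
    indices = [np.asarray(i, dtype=np.int64) for i in indices]
    data = [np.asarray(d) for d in data]
    # Trailing "constant" shape shared by every data[m] after its index dims.
    constant = data[0].shape[indices[0].ndim:]
    size = max((int(i.max()) + 1 for i in indices if i.size), default=0)
    # Assumption of this sketch: rows that no index refers to stay zero.
    merged = np.zeros((size,) + constant, dtype=data[0].dtype)
    # Row-major flattening visits (m, i, ..., j) in lexicographic order; a later
    # write to the same row overwrites an earlier one, so the last one wins.
    for idx, d in zip(indices, data):
        flat_idx = idx.reshape(-1)
        flat_data = d.reshape((-1,) + constant)
        for k, row in enumerate(flat_idx):
            merged[row] = flat_data[k]
    return merged

# Index 1 appears in indices[0][1] and again in indices[1][0]; the later slice wins.
print(reference_dynamic_stitch([[0, 1], [1]], [[10., 20.], [99.]]))  # [10. 99.]
```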

For example:

```python
indices[0] = 6
indices[1] = [4, 1]
indices[2] = [[5, 2], [0, 3]]
data[0] = [61, 62]
data[1] = [[41, 42], [11, 12]]
data[2] = [[[51, 52], [21, 22]], [[1, 2], [31, 32]]]
merged = [[1, 2], [11, 12], [21, 22], [31, 32], [41, 42],
          [51, 52], [61, 62]]
```
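The worked example can be reproduced in plain NumPy by flattening every `indices[m]` together with the matching leading dimensions of `data[m]` and scattering the rows in one step. This is a sketch of the idea, not how TensorFlow computes it, and the vectorized scatter is only valid here because every index is distinct: NumPy does not guarantee which value wins when a fancy-index assignment repeats an index, so it cannot reproduce the "later slice wins" rule in general.

```python
import numpy as np

indices = [6, [4, 1], [[5, 2], [0, 3]]]
data = [[61, 62], [[41, 42], [11, 12]], [[[51, 52], [21, 22]], [[1, 2], [31, 32]]]]

# Trailing shape shared by all slices (here (2,)).
constant = np.asarray(data[0]).shape[np.ndim(indices[0]):]
# Flatten every indices[m] and the matching leading dims of data[m].
flat_indices = np.concatenate([np.reshape(i, -1) for i in indices])
flat_data = np.concatenate([np.reshape(d, (-1,) + constant) for d in data])

merged = np.zeros((flat_indices.max() + 1,) + constant, dtype=flat_data.dtype)
# One vectorized scatter; safe only because the indices are all distinct.
merged[flat_indices] = flat_data
print(merged.tolist())
# [[1, 2], [11, 12], [21, 22], [31, 32], [41, 42], [51, 52], [61, 62]]
```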

This method can be used to merge partitions created by `dynamic_partition`, as illustrated in the following example:

```python
import tensorflow as tf

# Apply a function (here, incrementing x_i) only to the elements for which a
# certain condition holds (x_i != -1 in this example).
x = tf.constant([0.1, -1., 5.2, 4.3, -1., 7.4])
condition_mask = tf.not_equal(x, tf.constant(-1.))
# Partition 0 holds the x_i == -1 values, partition 1 the others.
partitioned_data = tf.dynamic_partition(
    x, tf.cast(condition_mask, tf.int32), 2)
partitioned_data[1] = partitioned_data[1] + 1.0
# Partition the positions 0..n-1 the same way to remember where each value came from.
condition_indices = tf.dynamic_partition(
    tf.range(tf.shape(x)[0]), tf.cast(condition_mask, tf.int32), 2)
# Stitch the partitions back into their original positions.
x = tf.dynamic_stitch(condition_indices, partitioned_data)
# Here x = [1.1, -1., 6.2, 5.3, -1., 8.4]; the -1. values remain
# unchanged.
```
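The same partition-then-stitch pattern can be mirrored in NumPy to see why it works: partitioning the positions `0..n-1` exactly like the values means every position appears once across the partitions, so stitching sends each value back to where it came from. This is a sketch under assumptions: `np_dynamic_partition` and `np_stitch_1d` are hypothetical helpers, and the partition helper assumes that element `k` goes to output `partitions[k]` with the original order preserved.

```python
import numpy as np

def np_dynamic_partition(x, partitions, num_partitions):
    # Hypothetical helper: element k goes to output partitions[k]; order is kept.
    return [x[partitions == p] for p in range(num_partitions)]

def np_stitch_1d(indices, data):
    # Hypothetical helper: sequential stitch for 1-D indices; later writes win.
    size = max(int(i.max()) for i in indices if i.size) + 1
    merged = np.zeros(size, dtype=data[0].dtype)
    for idx, d in zip(indices, data):
        for k, row in enumerate(idx):
            merged[row] = d[k]
    return merged

# Same computation as the TensorFlow example, in NumPy.
x = np.array([0.1, -1., 5.2, 4.3, -1., 7.4])
mask = (x != -1.).astype(np.int32)
parts = np_dynamic_partition(x, mask, 2)
parts[1] = parts[1] + 1.0
positions = np_dynamic_partition(np.arange(x.size), mask, 2)
result = np_stitch_1d(positions, parts)  # close to [1.1, -1., 6.2, 5.3, -1., 8.4]

# Round-trip property: stitching the partitioned positions with the
# partitioned values restores the original array for any partition vector.
rng = np.random.default_rng(0)
y = rng.normal(size=10)
p = rng.integers(0, 3, size=10)
roundtrip = np_stitch_1d(np_dynamic_partition(np.arange(10), p, 3),
                         np_dynamic_partition(y, p, 3))
assert np.array_equal(roundtrip, y)
```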

| Args | |
|---|---|
| `indices` | A list of at least 1 `Tensor` objects with type `int32`. |
| `data` | A list of `Tensor` objects, all of the same type, with the same length as `indices`. |
| `name` | A name for the operation (optional). |

| Returns |
|---|
| A `Tensor`. Has the same type as `data`. |